52 queries, 10 pages, a 2.3x entity density range, and zero competition citations — the finding is the gate, not the content

TL;DR

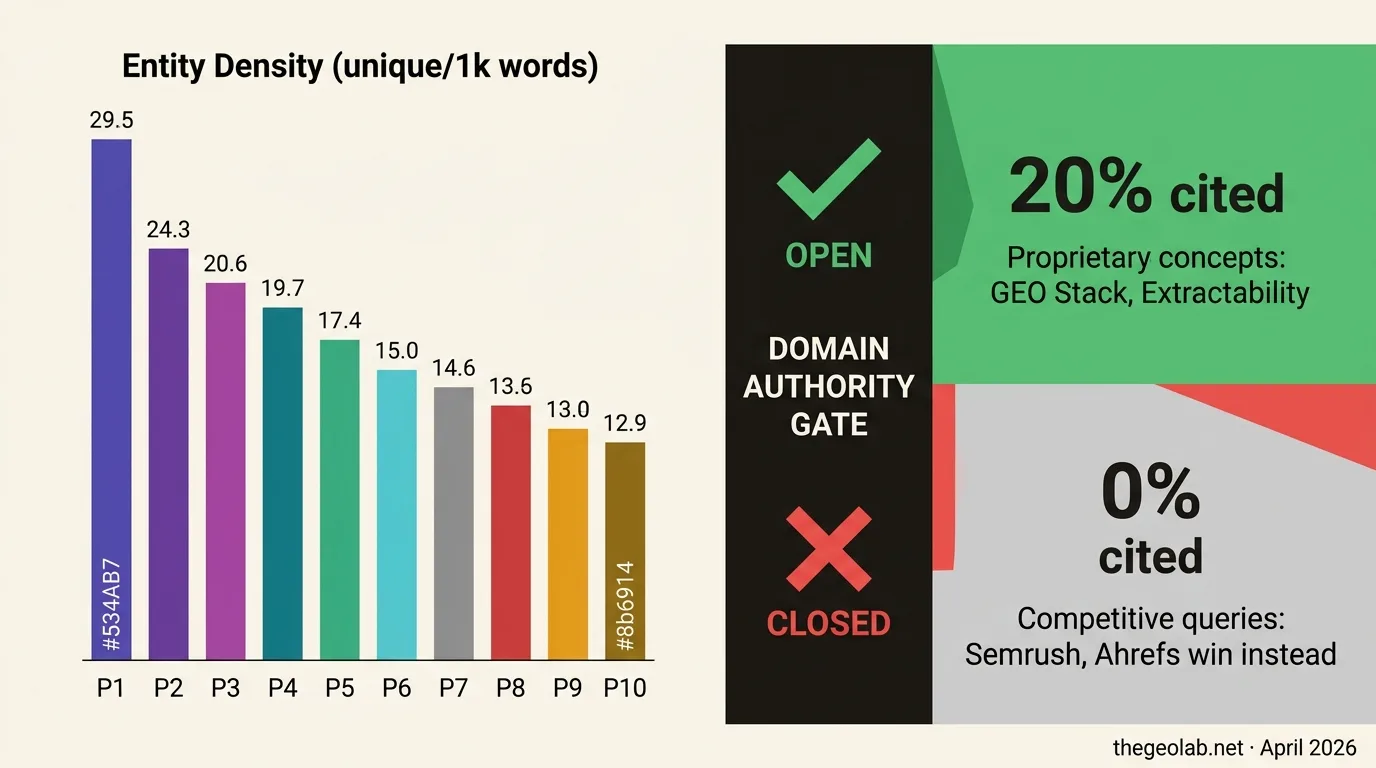

Domain authority gates citation eligibility. Entity density operates within that gate. In our April 2026 experiment on Perplexity sonar-pro, thegeolab.net achieved a 20% citation rate on proprietary concept queries and 0% on all 22 competition queries, across 10 pages spanning 12.9 to 29.5 unique entities per 1,000 words. The null result is not a failure of entity density as a signal. It is evidence that domain authority functions as a binary gate: below it, page-level content signals do not reach the citation decision layer. The refined hypothesis, that entity density differentiates within an authority tier, is testable by any researcher with access to a citation-eligible domain using the protocol documented here.

Contents

- The entity density hypothesis

- What we built to test it

- The results: 20% proprietary, 0% competitive

- The domain authority gate

- Reinterpreting the Princeton GEO paper

- The refined hypothesis

- What GEO practitioners should do now

- The replication protocol

- The preprint and raw data

- Frequently asked questions

The entity density hypothesis

I built a measurement protocol, selected 10 pages with a 2.3x range in entity density, pre-registered 22 competition queries, ran the whole thing on Perplexity — and got zero citations on every single competition query. Every page. Every density level. Zero.

The hypothesis was reasonable: pages that name specific people, organisations, tools, studies, and platforms give AI retrieval systems more anchor points for semantic matching. Named entity density — the count of unique identifiable referents per 1,000 words — should correlate positively with citation rate. This has indirect support from Aggarwal et al. (KDD 2024), the Princeton GEO paper, whose most effective optimisation strategies (adding citations, +41.5% visibility; statistics with attributions; quotations) all involve naming specific referents. But the Princeton paper did not measure entity density directly. And critically, it did not control for domain authority.

I designed an experiment to do both. As of April 2026, the result is clear — and it is not what I expected.

What we built to test entity density

Filtered NER counting with spaCy

Entity density measurement used spaCy’s en_core_web_lg model with a filtered label set locked before examining results. Out: CARDINAL (“30”, “five”), ORDINAL (“first”), TIME, NORP, and LANGUAGE, labels that inflate counts without contributing the specificity signal the hypothesis tests. In: PERSON, ORG, GPE, LOC, FAC, PRODUCT, EVENT, WORK_OF_ART, LAW, plus specific dates and attributed quantities.

Self-references (thegeolab.net and GEO Lab mentions) were separated as a covariate rather than signal. A page that names itself repeatedly is not demonstrating external specificity. Primary metric: unique entities per 1,000 words — not total mentions. If a page names “Perplexity” 15 times, that counts once.
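The counting rule above can be sketched in a few lines of Python. This is a minimal illustration, not the code used in the experiment: the function names, the lower-cased deduplication key, the self-reference list, and the word-count definition are all assumptions. It covers only the unconditionally included labels; deciding which DATE and quantity entities count as "specific" or "attributed" is left to manual review.

```python
# Labels kept unconditionally, as listed in the text. DATE and quantity
# labels need a human judgement about specificity, so they are omitted here.
KEPT_LABELS = {
    "PERSON", "ORG", "GPE", "LOC", "FAC",
    "PRODUCT", "EVENT", "WORK_OF_ART", "LAW",
}

# Hypothetical self-reference strings; adapt to the domain under test.
SELF_REFERENCES = {"thegeolab.net", "geo lab", "the geo lab"}


def unique_entity_density(entities, word_count, self_references=SELF_REFERENCES):
    """Return (unique entities per 1,000 words, number of self-mentions).

    `entities` is an iterable of (text, label) pairs, for example taken
    from spaCy's `doc.ents`.
    """
    if word_count <= 0:
        raise ValueError("word_count must be positive")
    unique = set()
    self_mentions = 0
    for text, label in entities:
        if label not in KEPT_LABELS:
            continue  # CARDINAL, ORDINAL, TIME, NORP, LANGUAGE, etc.
        key = " ".join(text.lower().split())  # assumed normalisation
        if key in self_references:
            self_mentions += 1  # logged as a covariate, not as signal
            continue
        unique.add(key)  # repeated mentions collapse to one entry
    return 1000 * len(unique) / word_count, self_mentions


def extract_entities(text, model="en_core_web_lg"):
    """Run spaCy NER and return ((text, label) pairs, word count).

    Requires spaCy and the named model to be installed. Counting every
    non-punctuation, non-space token as a word is an assumption.
    """
    import spacy

    nlp = spacy.load(model)
    doc = nlp(text)
    words = sum(1 for tok in doc if not tok.is_punct and not tok.is_space)
    return [(ent.text, ent.label_) for ent in doc.ents], words


# Hand-written example: "Perplexity" named 15 times still counts once.
example = [("Perplexity", "ORG")] * 15 + [
    ("Semrush", "ORG"), ("30", "CARDINAL"), ("GEO Lab", "ORG"),
]
density, self_mentions = unique_entity_density(example, word_count=1000)
```

Keeping the density function separate from the spaCy call means the filter can be locked and checked on hand-labelled examples before any real page is scored.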

Why the CARDINAL/ORDINAL filter changed the ranking

An unfiltered spaCy run put one page at 79.4 entities per 1,000 words. After filtering: 21.3. The page was dense with bare numbers (“30 queries”, “×100”, “10 pages”), which spaCy correctly labels as CARDINAL but which name nothing specific. The filter changed the page ranking substantially: the page that appeared to have the lowest density in the LLM’s initial estimates moved to 5th out of 10 on filtered unique density.

Competition query design with pre-registered plausible-answer sets

Standard citation checks test whether a site is cited at all — they do not test what drives the choice between pages on the same domain. To isolate entity density, you need queries where multiple pages compete and the engine must choose. I designed 22 competition queries: questions where 3–5 of the 10 target pages were pre-registered as plausible answers before any query was executed.

Plausibility criteria: the page’s topic is directly relevant, a reasonable expert could recommend it, and it contains substantive content addressing the query. Pre-registration prevented post-hoc bias — I could not label a page “plausible” because it happened to get cited.

The dependent variable: per-page competition citation rate — citations on queries where the page was in the plausible set, divided by total such queries. This conditions citation on plausible candidacy and controls for topical relevance by construction.
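As a rough sketch of how that dependent variable can be computed, the function below takes the pre-registered plausible sets and the pages actually cited per query. The function name, data shapes, and the two example queries are hypothetical; only the ratio itself comes from the definition above.

```python
def competition_citation_rates(plausible_sets, cited_pages):
    """Per-page competition citation rate.

    plausible_sets: dict mapping query -> set of pages pre-registered
                    as plausible answers for that query.
    cited_pages:    dict mapping query -> set of target pages the engine
                    actually cited (missing query means no citations).
    Citations on queries outside a page's plausible set are ignored,
    because the rate is conditioned on plausible candidacy.
    """
    appearances = {}
    citations = {}
    for query, pages in plausible_sets.items():
        cited = cited_pages.get(query, set())
        for page in pages:
            appearances[page] = appearances.get(page, 0) + 1
            if page in cited:
                citations[page] = citations.get(page, 0) + 1
    return {page: citations.get(page, 0) / n for page, n in appearances.items()}


# Hypothetical two-query example using page slugs from this experiment.
plausible = {
    "query-1": {"geo-vs-traditional-seo", "ai-search-optimization", "geo-strategy-seo-foundation"},
    "query-2": {"geo-vs-traditional-seo", "ai-search-optimization", "platform-specific-geo"},
}
cited = {"query-1": {"geo-vs-traditional-seo"}}
rates = competition_citation_rates(plausible, cited)
```

In this made-up example, geo-vs-traditional-seo is plausible for two queries and cited on one, so its rate is 0.5; every other page scores 0.0.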

The results: 20% proprietary, 0% competitive

Phase 1: citation-check protocol (30 queries)

thegeolab.net was cited in 6 of 30 queries: a 20% citation rate, all Level 2 (prominent with link), all first position. That is roughly a 2.7-fold improvement on the March 2026 baseline of 7.5%.

The pattern matters as much as the rate. Every citation was on a proprietary concept query: “what is the GEO Stack framework”, “what is extractability in GEO”, “GEO Lab citation index”, “thegeolab.net methodology”. Zero citations on general informational queries — “what is GEO”, “how to improve AI search visibility”, “GEO vs SEO” — where Search Engine Land, Semrush, and Ahrefs dominate.

Phase 2: competition queries (22 queries) — zero citations

All 22 queries. All 10 pages. Every density level from 12.9 to 29.5 unique entities per 1,000 words. Zero.

| Page | Entity density (unique per 1,000 words) | Plausible-set appearances | Citations | Competition rate |
|---|---|---|---|---|
| platform-specific-geo | 29.5 | 5 | 0 | 0% |
| generative-engine-optimisation-guide | 25.7 | 14 | 0 | 0% |
| geo-stack-five-layer-framework | 23.4 | 14 | 0 | 0% |
| geo-strategy-seo-foundation | 22.8 | 9 | 0 | 0% |
| how-to-measure-ai-citation-rate | 21.3 | 7 | 0 | 0% |
| citation-decay-half-life-test | 19.0 | 5 | 0 | 0% |
| geo-vs-traditional-seo | 16.0 | 7 | 0 | 0% |
| citation-rate-entity-signals-gap | 15.4 | 8 | 0 | 0% |
| ai-search-optimization | 14.2 | 10 | 0 | 0% |
| experiment-001-declarative-vs-narrative | 12.9 | 6 | 0 | 0% |

Pearson and Spearman correlation between entity density and competition citation rate: undefined. Zero variance in the dependent variable. The competition query methodology worked — it produced meaningful variation across domains. Search Engine Land was cited 5 times, Semrush 3, Ahrefs 2, ALM Corp 3, ZipTie.dev 3, plus a16z, UC Berkeley, MIT, and Harvard Kennedy School. The null result is a property of the domain, not a measurement artefact.
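To see why the coefficient is undefined rather than zero, here is a small dependency-free sketch of Spearman's ρ with an explicit zero-variance guard, applied to the densities in the table above. The helper names are illustrative, and returning `None` for the undefined case is a convention chosen for this sketch, not something a statistics library mandates.

```python
from math import sqrt


def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # sorted positions i..j share one rank
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rho, or None when either variable has zero variance."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    sxx = sum((a - mx) ** 2 for a in rx)
    syy = sum((b - my) ** 2 for b in ry)
    if sxx == 0 or syy == 0:
        return None  # denominator is zero: the correlation is undefined
    sxy = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    return sxy / sqrt(sxx * syy)


# Filtered unique densities from the results table, highest to lowest.
density = [29.5, 25.7, 23.4, 22.8, 21.3, 19.0, 16.0, 15.4, 14.2, 12.9]
competition_rate = [0.0] * 10  # every page scored 0%

rho = spearman_rho(density, competition_rate)  # None: no variance in the DV
```

With all ten rates tied at zero, every page receives the same rank, the rank variance is zero, and the ratio has a zero denominator. That is a different statement from ρ = 0, which would require variation in both variables.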

| Query type | thegeolab.net cited | Rate | Interpretation |
|---|---|---|---|
| Proprietary concept queries (Phase 1) | 6 / 30 | 20% | Cited as primary authority when the site owns the concept |
| Competition queries (Phase 2) | 0 / 22 | 0% | Never cited when competing against established publishers |

The domain authority gate

The domain authority gate is a binary threshold in AI citation selection. thegeolab.net operates as a binary source in Perplexity’s citation system: it is either the uniquely authoritative source for a concept it originated, or it is not cited at all. There is no middle ground — no queries where it competes with established publishers and wins some fraction of the time.

Below the gate, page-level content signals — entity density, heading structure, FAQ schema, freshness — are not operative. You can optimise every page on a below-gate domain and the competition citation rate stays at zero. The gate is domain authority, and it operates before content quality enters the picture.

Kevin Indig’s analysis of AI citation mechanics in Growth Memo supports this framing: brand authority in AI search compounds the same way domain authority did in traditional SEO. Early movers accumulate citation share, and the barrier is not content quality but recognition. The entity density experiment is consistent with this at the page level: on this domain, content quality signals did not compensate for insufficient domain recognition.

Reinterpreting the Princeton GEO paper through the gate

Aggarwal et al. (2024) showed that adding citations, statistics, and quotations increased citation visibility by up to 41.5%. But their experimental domain was not disclosed, and all manipulations were applied to pages already in the retrieval candidate set. They tested what increases citation probability for a retrievable page — not whether optimisation can promote a non-retrievable page into the candidate set.

The domain authority gate hypothesis is consistent with their findings. If their domain cleared the citation gate, page-level manipulations would produce the effects they observed. The same manipulations on a below-gate domain produce nothing — as this experiment confirms for entity density. The Princeton paper’s results are not wrong. They may only apply within the authority tier of citation-eligible domains.

The refined entity density hypothesis

Entity density influences AI citation rates within an authority tier, not across tiers. Below the gate: entity density variation does not predict citation rate. The gate is closed regardless of content quality. Above the gate: entity density may differentiate citation probability among pages competing for the same queries on citation-eligible domains.

A falsifiable test: take a domain that already earns citations on competitive general queries. Vary entity density across pages competing for the same queries. Run the competition query protocol described below. If within-domain entity density correlates with competition citation rate, the refined hypothesis holds. If it does not, entity density is not operative at any authority level.

What GEO practitioners should do now

If your domain is not being cited on competitive general queries in your topic area, stop optimising page-level content signals for those queries. Entity density, heading structure, FAQ schema — none of it moves the needle if the domain has not cleared the authority gate. The path to competitive citation eligibility is proprietary concept creation and brand recognition, not content optimisation.

If your domain already earns citations on competitive general queries, the entity density hypothesis is worth testing. Run the protocol below. If within-domain density variation correlates with citation rate, you have a practical differentiator worth optimising.

The proprietary concept path — originating named frameworks, methodologies, and experiments that no other source can provide — is the one route with evidence on a below-gate domain. thegeolab.net gets cited when Perplexity needs to answer “what is the GEO Stack” or “what is extractability in GEO”. It does not get cited when answering “how to improve AI search visibility”. Owning the concept beats optimising the content.

The replication protocol

The methodology is documented for replication. A researcher at a citation-eligible domain — Semrush, Ahrefs, Search Engine Land, or a comparable authority site in any topic area — can run this protocol directly.

Step 1: Pre-screen your domain. Run 30 general queries across your topic on at least two AI platforms. If the domain achieves 30% or higher citation rate on competitive general queries (not brand or navigational queries), proceed. Below 10%, the competition protocol will likely produce the same null result.

Step 2: Select 10 pages. All from the same domain, all on related topics, minimum 500 words, maximum variance in entity density. Record word count, publication date, and structured data presence as confounders.

Step 3: Measure entity density with filtered spaCy. Use en_core_web_lg. Include: PERSON, ORG, GPE, LOC, FAC, PRODUCT, EVENT, WORK_OF_ART, LAW, specific DATE, and attributed PERCENT/QUANTITY. Exclude: CARDINAL, ORDINAL, TIME, NORP, LANGUAGE. Log self-references separately. Unique entities per 1,000 words is the primary metric. Lock the filter before examining results.

Step 4: Pre-register competition queries. Write 22–40 queries where 3–5 of the 10 target pages are plausible answers. Define plausibility before execution and write a one-sentence justification per page-query inclusion. Do not modify after running begins.

Step 5: Execute and calculate per-page competition citation rate. Run all queries in a single session per platform. Record all cited URLs. Calculate: citations on queries where the page was in the plausible set ÷ total queries where it was in the plausible set.

Step 6: Spearman correlation. Test unique entity density per 1k against competition citation rate across the 10 pages. Report ρ, p-value, N. If variance in the DV is zero, report as a domain authority threshold effect — not as evidence against entity density.
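The page-selection and pre-registration constraints in Steps 2 and 4 lend themselves to a mechanical check before any query is run. The sketch below is one possible way to do that; the `Inclusion` record, the function name, and the dictionary shapes are assumptions made for illustration, while the numeric limits come from the steps above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Inclusion:
    """One pre-registered page-query pairing with its justification."""
    page: str
    justification: str


def validate_preregistration(pages, queries):
    """Return a list of problems; an empty list means the design passes.

    pages:   dict mapping page slug -> word count.
    queries: dict mapping query text -> list of Inclusion records.
    """
    problems = []
    if len(pages) != 10:
        problems.append(f"expected 10 pages, got {len(pages)}")
    for page, words in pages.items():
        if words < 500:
            problems.append(f"{page}: {words} words is below the 500 minimum")
    if not 22 <= len(queries) <= 40:
        problems.append(f"expected 22-40 queries, got {len(queries)}")
    for query, inclusions in queries.items():
        if not 3 <= len(inclusions) <= 5:
            problems.append(f"{query!r}: {len(inclusions)} plausible pages, need 3-5")
        for inc in inclusions:
            if inc.page not in pages:
                problems.append(f"{query!r}: unknown page {inc.page}")
            if not inc.justification.strip():
                problems.append(f"{query!r}: missing justification for {inc.page}")
    return problems


# Hypothetical design: 10 pages of 600 words, 22 queries with 3 pages each.
pages = {f"page-{i}": 600 for i in range(10)}
queries = {
    f"query-{i}": [Inclusion(f"page-{j}", "Directly addresses the query.") for j in range(3)]
    for i in range(22)
}
problems = validate_preregistration(pages, queries)
```

Running a check like this, then saving the query file unchanged before execution, gives a simple record that the plausible-answer sets were fixed in advance.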

The preprint and raw data

The full methodology, results, limitations, and replication guide are published as a preprint: Domain Authority Gates Citation Eligibility: Entity Density as a Within-Domain Signal in AI Search — available on Zenodo (DOI: 10.5281/zenodo.19450361).

The raw data — 30-query citation check CSV, 22-query competition protocol CSV with pre-registered plausible-answer sets, and filtered NER scores per page — is published in the GitHub repository. If you run this protocol on a citation-eligible domain, we want to hear the result — it either confirms or challenges the refined hypothesis, and both outcomes are useful.

Frequently asked questions

Does entity density not matter at all for GEO?

Entity density does not appear to matter for domains below the competitive citation gate. Whether it matters within an authority tier — among pages on a citation-eligible domain competing for the same queries — is the untested refined hypothesis. The null result rules out one version of the claim (entity density as a universal lever) but not the more precise version (entity density as a within-tier differentiator).

Why did you only run Perplexity and not all three platforms?

ChatGPT via DataForSEO’s GPT-4o endpoint was not triggering web search: responses came from training data with no citations. After seeing 0/22 on Perplexity, additional platform runs were halted, on the reasoning that the domain authority gate operates at the domain level, not the platform level. We expect the same null result on other platforms for the same domain, but that is untested here. For a replication on a citation-eligible domain, multi-platform execution is recommended.

How do you define clearing the authority gate?

Working heuristic, not a measured threshold: a domain achieving 30% or higher citation rate on competitive general queries in its topic area likely clears the gate. thegeolab.net’s 20% citation rate is on proprietary concept queries — not competitive general queries — and should not be used as the gate metric. A domain at 20–30% on competitive general queries is a marginal case worth attempting.

Is this a refutation of the Princeton GEO paper?

No. The Princeton paper’s findings — that adding citations, statistics, and quotations increases citation visibility — may hold on citation-eligible domains. This study does not test that. What it adds is a boundary condition: those effects may not apply below the authority gate. The two studies are complementary, not contradictory.

What is the highest-leverage thing a low-authority GEO domain can do?

Based on this experiment: originate named concepts. thegeolab.net earns citation on “GEO Stack”, “extractability in GEO”, “declarative vs narrative content structure” — concepts the site introduced or names distinctively. Those citations happen because there is no alternative source. Proprietary concept creation is the practical path to citation eligibility when you cannot yet compete on general queries.

Key GEO Lab Takeaway

Domain authority gates citation eligibility. Entity density operates within that gate. Below the gate, page-level content signals — entity density, structure, freshness — are not operative regardless of how well you optimise them.

The path to competitive citation is proprietary concept creation, not content optimisation. Own something no other source can provide. That is what earns citation on a below-gate domain.

The entity density hypothesis is not dead — it is refined. The within-tier prediction is testable, the methodology is published, and the data is open. Someone at a citation-eligible domain can settle this.
