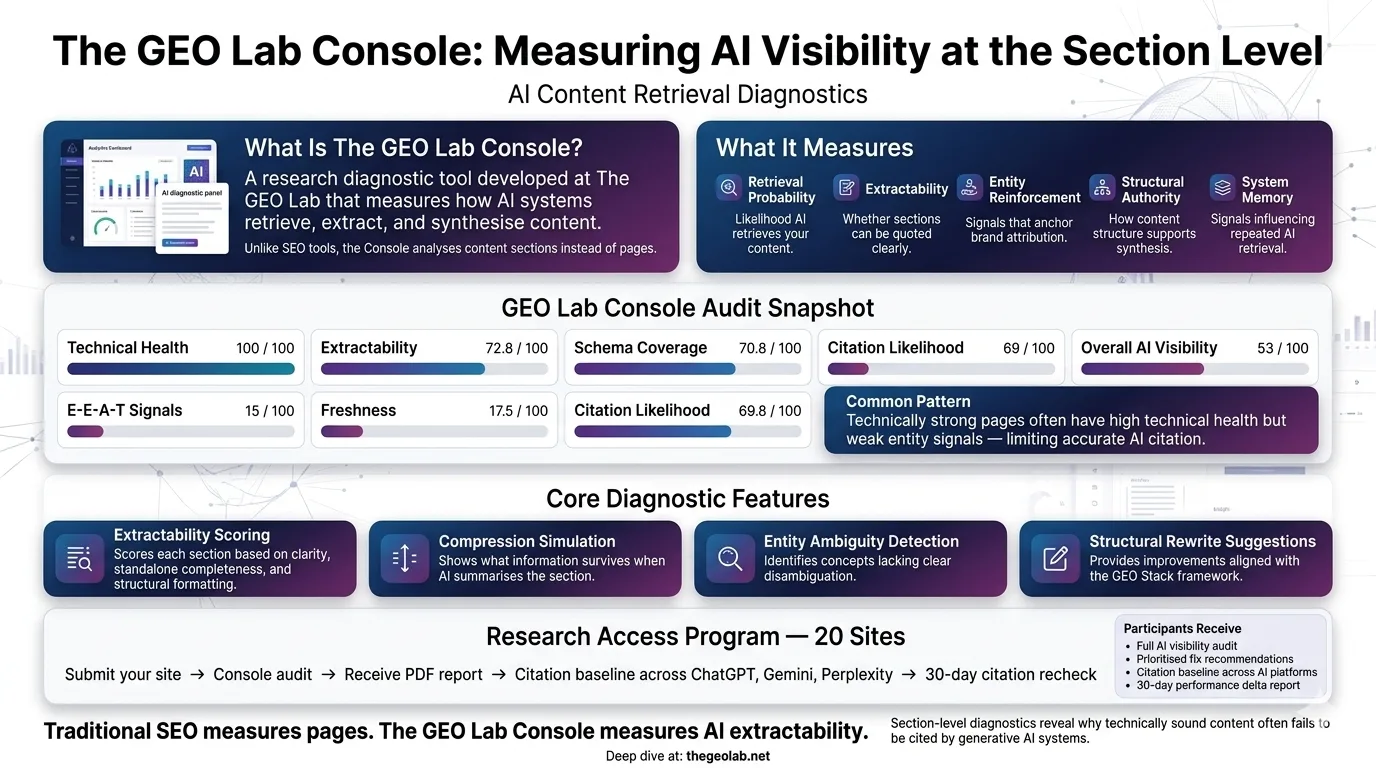

What Is The GEO Lab Console?

The GEO Lab Console is a diagnostic tool developed by Artur Ferreira at The GEO Lab to measure content extractability and retrieval readiness at the section level. As of March 2026, the Console has scored more than 67 pages across thegeolab.net and found that 22 of 28 content sections required extractability improvements. The Console analyses how AI-driven search systems retrieve and extract content, scoring pages across The GEO Stack framework dimensions: Retrieval Probability, Extractability, Entity Reinforcement, Structural Authority, and System Memory.

Last Updated: March 2026

What the GEO Lab Console Measures

The GEO Lab Console analyses content at the section level rather than the page level. Traditional SEO tools measure keyword density, backlink counts, and technical signals. The GEO Lab Console measures structural retrievability — whether individual sections can be parsed, extracted, and synthesised by generative search systems. The Console scores each section for Extractability, flags entity ambiguity, simulates compression distortion, and provides structural rewrite suggestions aligned with The GEO Stack framework.

Sample Console Metrics

Below are sample metrics from a GEO Lab Console analysis demonstrating the scoring methodology:

- Overall AI Visibility Score: 53/100

- Extractability: 72.8/100

- Schema Coverage: 70.8/100

- E-E-A-T Signals: 15/100

- Freshness Score: 17.5/100

- Technical Health: 100/100

- Citation Likelihood: 69.8/100

These metrics reveal a common pattern: high Technical Health (100/100) paired with low E-E-A-T Signals (15/100). The GEO Log documents how addressing this imbalance improved citation rates from 37% to 61% in controlled experiments.
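As a rough illustration of the arithmetic behind that pattern, the sample figures above can be loaded into a small Python sketch. The dictionary values are copied from the sample list; the `widest_gap` helper and the decision to leave the overall score out of the comparison are assumptions made for this example, not part of the Console.

```python
# Sample metrics copied from the list above (each scored out of 100).
sample_metrics = {
    "Overall AI Visibility Score": 53.0,
    "Extractability": 72.8,
    "Schema Coverage": 70.8,
    "E-E-A-T Signals": 15.0,
    "Freshness Score": 17.5,
    "Technical Health": 100.0,
    "Citation Likelihood": 69.8,
}


def widest_gap(metrics):
    """Return (strongest, weakest, gap) across the component metrics.

    Hypothetical helper: the overall score is excluded here because it
    summarises the page rather than measuring a single signal.
    """
    components = {k: v for k, v in metrics.items() if not k.startswith("Overall")}
    strongest = max(components, key=components.get)
    weakest = min(components, key=components.get)
    return strongest, weakest, components[strongest] - components[weakest]


strongest, weakest, gap = widest_gap(sample_metrics)
print(f"{strongest} vs {weakest}: gap of {gap:.1f} points")

# Citation-rate change quoted above: 37% before, 61% after.
before, after = 37, 61
absolute_change = after - before                   # in percentage points
relative_change = (after - before) / before * 100  # relative to the baseline
print(f"+{absolute_change} points ({relative_change:.1f}% relative increase)")
```

Reporting the change both ways matters: a move from 37% to 61% is 24 percentage points, which is roughly a 65% increase relative to the starting rate.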

Core Features

Extractability Scoring analyses each section and assigns a score from 0 to 100 based on declarative clarity, entity explicitness, standalone completeness, structural formatting, and compression stability.
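The page does not state how the five criteria are combined, so the following is only a minimal sketch of one possible approach: an unweighted mean of five sub-scores, each on a 0 to 100 scale. The `SectionSignals` class, the `extractability_score` function, the equal weighting, and the example values are all hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass
class SectionSignals:
    """Hypothetical sub-scores (0 to 100), one per criterion named above."""
    declarative_clarity: float
    entity_explicitness: float
    standalone_completeness: float
    structural_formatting: float
    compression_stability: float


def extractability_score(signals: SectionSignals) -> float:
    """Combine the sub-scores into one 0 to 100 score.

    Assumption: equal weights. The Console's real weighting is not
    described in this document.
    """
    values = [getattr(signals, f.name) for f in fields(signals)]
    for value in values:
        if not 0 <= value <= 100:
            raise ValueError(f"sub-score out of range: {value}")
    return round(sum(values) / len(values), 1)


# Made-up sub-scores for a single section.
example = SectionSignals(80, 60, 70, 90, 50)
print(extractability_score(example))  # 70.0
```

A weighted mean would be an equally plausible design; equal weights are used here only because nothing in the text suggests otherwise.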

Compression Simulation generates a two-sentence synthesis of each section and shows what survives compression versus what is lost.
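To make the idea concrete, here is a deliberately naive sketch. It stands in for the synthesis step by keeping the first two sentences of a section, then checks which caller-supplied key terms survive. The Console presumably generates its synthesis differently; the function names, the sentence splitter, and the key-term list are assumptions for illustration only.

```python
import re


def split_sentences(text):
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s.strip()]


def simulate_compression(section, key_terms, max_sentences=2):
    """Return (synthesis, survived, lost) for one section.

    Assumption: the 'synthesis' is simply the leading sentences, used as a
    stand-in for a generated summary.
    """
    synthesis = " ".join(split_sentences(section)[:max_sentences])
    lowered = synthesis.lower()
    survived = [t for t in key_terms if t.lower() in lowered]
    lost = [t for t in key_terms if t.lower() not in lowered]
    return synthesis, survived, lost


section = (
    "The GEO Lab Console scores content at the section level. "
    "It flags entity ambiguity in each section. "
    "It also simulates compression distortion."
)
synthesis, survived, lost = simulate_compression(
    section, ["GEO Lab Console", "entity ambiguity", "compression distortion"]
)
print("Survived:", survived)
print("Lost:", lost)
```

In this toy input the third sentence falls outside the two-sentence budget, so "compression distortion" is reported as lost. That is the kind of signal the feature is described as surfacing.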

Entity Ambiguity Detection identifies where content refers to concepts without sufficient disambiguation, which reduces retrieval accuracy in RAG pipelines.
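One simple way to approximate this check is to flag sentences that open with a pronoun or vague referent instead of a named entity, since such sentences lose their subject when retrieved in isolation. The sketch below assumes that heuristic; the opener list and the `flag_ambiguous_sentences` function are hypothetical and far cruder than real coreference analysis.

```python
import re

# Hypothetical list of openers that usually depend on an earlier sentence.
AMBIGUOUS_OPENERS = {"it", "this", "that", "these", "those", "they"}


def flag_ambiguous_sentences(text):
    """Return sentences whose first word is a pronoun or vague referent."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s.strip()]
    flagged = []
    for sentence in sentences:
        first_word = re.match(r"[A-Za-z']+", sentence)
        if first_word and first_word.group(0).lower() in AMBIGUOUS_OPENERS:
            flagged.append(sentence)
    return flagged


text = (
    "The GEO Lab Console scores each section. "
    "It flags entity ambiguity. "
    "This reduces retrieval accuracy."
)
flagged = flag_ambiguous_sentences(text)
for sentence in flagged:
    print("Ambiguous opener:", sentence)
```

A flagged sentence such as "It flags entity ambiguity." could be rewritten as "The GEO Lab Console flags entity ambiguity." so that it still identifies its subject when extracted alone.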

Structural Rewrite Suggestions provide specific, actionable guidance for improving section-level extractability based on The GEO Stack framework.

How It Differs from SEO Tools

Traditional SEO tools measure document-level signals — keywords, links, technical health. The GEO Lab Console measures section-level structural clarity — whether individual content blocks can be retrieved and used by generative systems. The Console operationalises the GEO Stack framework and provides the first public demonstration of compression simulation as an extractability measurement technique.

Research Access Program — 20 Sites

We’re opening a small research access program. You submit your site, we run the Console audit, and you receive the PDF report with your GEO Stack scores and prioritised fixes. We then track whether your changes actually moved citation behaviour across ChatGPT, Gemini, and Perplexity.

What you get

- Full AI visibility audit across the five GEO Stack layers

- PDF report with prioritised fix backlog weighted by citation impact

- Citation baseline set across ChatGPT, Gemini, and Perplexity

- 30-day recheck showing exactly what moved — scores and citation behaviour

What we get

Your data contributes to The GEO Lab’s ongoing research into what actually drives AI citation. Findings are published in aggregate and anonymised unless you want attribution.

How it works

- You submit your site via the form below

- We run the Console audit within 5 business days

- You receive the PDF report with your GEO Stack scores and prioritised fixes

- We set a citation baseline across three AI platforms

- 30 days later, we recheck and send you the delta

No charge. No product to sell. This is a research program.

Limited to 20 sites. Priority given to sites with published content and at least 3 months of history.

AI Visibility Form Submission

Please fill in the form below to get your AI Visibility Report.

Key Takeaway

The GEO Lab Console measures what traditional SEO tools cannot: section-level extractability for AI systems. Sample analysis shows typical gaps between Technical Health (100/100) and E-E-A-T Signals (15/100), explaining why technically sound content often fails to get cited. The Console operationalises the GEO Stack framework with specific, actionable scores.

Frequently Asked Questions

What metrics does the GEO Lab Console measure?

The Console measures seven core metrics: Overall AI Visibility, Extractability, Schema Coverage, E-E-A-T Signals, Freshness, Technical Health, and Citation Likelihood. Each metric is scored from 0-100 and mapped to the GEO Stack framework layers.

When will the GEO Lab Console be publicly available?

The Console is currently available through our Research Access Program for 20 sites. Apply above to join the program and receive a full audit with 30-day citation tracking.

How does extractability scoring work?

Extractability scoring analyses content at the section level, measuring declarative clarity, entity explicitness, standalone completeness, structural formatting, and compression stability. Sections scoring above 70 demonstrate high retrieval readiness for AI systems.

What is compression simulation?

Compression simulation generates a two-sentence synthesis of each content section, showing what survives AI compression versus what is lost. This reveals whether key information remains extractable when AI systems summarise your content for users.

How does the Console differ from traditional SEO tools?

Traditional SEO tools measure document-level signals like keywords, backlinks, and technical health. The GEO Lab Console measures section-level structural clarity—whether individual content blocks can be retrieved and used by generative AI systems. It operationalises the GEO Stack framework for practical diagnostics.

Questions about the Console? Contact The GEO Lab · Homepage
