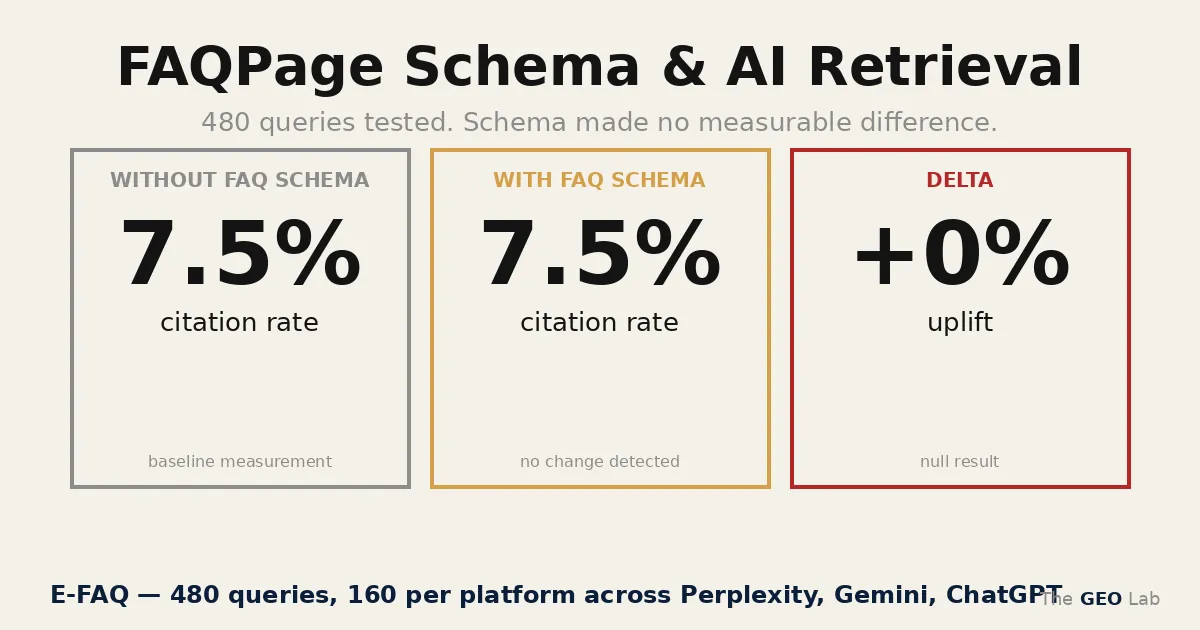

We tested whether FAQ-structured content gets preferentially retrieved by AI search systems. It doesn’t. Here’s the data.

FAQ schema is neutral for AI retrieval. We ran 480 queries across ChatGPT, Gemini, and Perplexity. Overall citation rate: 6.7% for FAQ pages, 8.3% for non-FAQ pages, a delta of −1.7 percentage points. No meaningful difference, and if anything the direction favours non-FAQ content.

Keep FAQPage JSON-LD for content structure and semantic clarity. Remove the expectation that it drives AI citation rate. It doesn’t.

The FAQPage Schema Hypothesis That Didn’t Hold

The original argument for FAQ schema in a GEO context seemed structurally sound: AI systems answer queries in question-answer format, and FAQ sections are structured as question-answer pairs. The format should map directly to how retrieval systems match content to queries.

It was a reasonable hypothesis. It was wrong.

This post documents the experiment that tested it — methodology, raw results, and what the findings actually mean for how FAQ schema should be thought about in 2026.

Methodology: Testing FAQPage Schema and AI Retrieval

The experiment used the same query-iteration approach established in Experiment 001 — multi-platform, fixed iteration count, controlled for content quality by using existing thegeolab.net pages rather than creating test pages.

Two pages: What Is GEO (page 6, has FAQPage JSON-LD) and GEO Stack (page 7, non-FAQ control). Same domain, same v3 architecture, same deployment date.

FAQ-aligned queries were phrased as questions matching the FAQ content. Non-FAQ queries covered the same topics but were phrased as statements or exploratory queries. Each platform received 80 queries per condition.

Three platforms were tested: Perplexity sonar-pro (return_citations=True); Gemini 2.5-flash (Google Search grounding, text mention only, no citation URLs); and ChatGPT gpt-4o-mini (web_search_preview, parsing AnnotationURLCitation).

480 total queries: 160 per platform, split into 80 FAQ-aligned and 80 non-FAQ, with 5 iterations per query for statistical stability. Each response was recorded as a citation (named source link), a mention (named without link), or no appearance.
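
The three recording categories can be sketched as a small classifier. This is a minimal illustration, not the script used in the experiment: the function name `classify_response`, the `SITE_NAMES` tuple, and the idea of passing in an already-extracted list of cited URLs are all assumptions made for the example.

```python
from urllib.parse import urlparse

SITE_DOMAIN = "thegeolab.net"
# Assumption: name variants that count as a text mention of the site.
SITE_NAMES = ("thegeolab.net", "geo lab")


def classify_response(response_text, cited_urls):
    """Return 'citation', 'mention', or 'none' for one AI response.

    cited_urls is whatever list of source links the platform returned
    alongside the answer (hypothetical input; extraction is platform-specific).
    """
    # Citation: at least one returned source link points at the site.
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == SITE_DOMAIN or host.endswith("." + SITE_DOMAIN):
            return "citation"

    # Mention: the site is named in the answer text but not linked.
    text = response_text.lower()
    if any(name in text for name in SITE_NAMES):
        return "mention"

    return "none"


if __name__ == "__main__":
    print(classify_response("GEO is ...", ["https://thegeolab.net/what-is-geo"]))
    print(classify_response("According to The GEO Lab, GEO is ...", []))
    print(classify_response("GEO is ...", ["https://example.com/geo"]))
```

Checking links before text means a response that both links to and names the site counts once, as a citation.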

thegeolab.net was approximately 3 weeks old at the time of testing. Google Search Console showed index coverage for both test pages. Zero backlinks from external domains. Ahrefs DR: 0. This baseline is relevant: ChatGPT returned zero citations and zero mentions across all 160 queries, consistent with a new domain that has not yet accumulated the organic authority required for retrieval by RAG-based systems. The FAQ schema effect — or lack of it — was measured under conditions where the SEO floor was minimal.

No deployment changes were made to existing pages. The experiment tested whether AI systems were already preferentially retrieving FAQ-structured content — not whether adding FAQ schema to a new page changes retrieval.

I ran this experiment because the assumption that FAQ schema drives AI retrieval was widespread in the SEO community — and untested. No one had measured whether the format actually worked.

Results

| Platform (model) | FAQ Citation | Non-FAQ Citation | Delta (pp) | FAQ Mention | Non-FAQ Mention |
|---|---|---|---|---|---|
| Perplexity sonar-pro | 20.0% | 25.0% | −5.0 | 0.0% | 0.0% |
| Gemini 2.5-flash | 0.0% | 0.0% | 0.0 | 21.2% | 21.2% |
| ChatGPT gpt-4o-mini | 0.0% | 0.0% | 0.0 | 0.0% | 0.0% |
| Overall | 6.7% | 8.3% | −1.7 | 7.1% | 7.1% |

480 queries total (160 per platform, 80 FAQ-aligned / 80 non-FAQ, 5 iterations each). Citation = named source link in AI response. Mention = named without link.
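
The Overall row is the pooled rate across all three platforms, not an average of the non-zero rows. The sketch below reproduces it. The raw counts are an assumption: they are inferred from the published percentages (20.0% of 80 is 16, 25.0% of 80 is 20, and 17 of 80 is 21.25%, consistent with the reported 21.2%), not taken from the experiment's raw data.

```python
QUERIES_PER_CONDITION = 80  # per platform, per condition (from the methodology)

# Assumption: counts back-calculated from the percentages in the table.
counts = {
    "Perplexity sonar-pro": {"faq_cit": 16, "nonfaq_cit": 20, "faq_men": 0, "nonfaq_men": 0},
    "Gemini 2.5-flash": {"faq_cit": 0, "nonfaq_cit": 0, "faq_men": 17, "nonfaq_men": 17},
    "ChatGPT gpt-4o-mini": {"faq_cit": 0, "nonfaq_cit": 0, "faq_men": 0, "nonfaq_men": 0},
}


def overall_rate(key):
    """Pooled percentage for one column across all platforms."""
    hits = sum(platform[key] for platform in counts.values())
    queries = QUERIES_PER_CONDITION * len(counts)  # 240 per condition
    return 100 * hits / queries


faq_citation = overall_rate("faq_cit")
nonfaq_citation = overall_rate("nonfaq_cit")
delta_pp = faq_citation - nonfaq_citation  # percentage points
faq_mention = overall_rate("faq_men")

if __name__ == "__main__":
    print(f"FAQ citation:     {faq_citation:.1f}%")
    print(f"Non-FAQ citation: {nonfaq_citation:.1f}%")
    print(f"Delta:            {delta_pp:+.1f} pp")
    print(f"FAQ mention:      {faq_mention:.1f}%")
```

Because two of the three platforms contributed zero citations, the overall citation figures are Perplexity's counts divided by three times as many queries.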

The overall delta is −1.7 percentage points in favour of non-FAQ pages. The difference is not statistically significant at this sample size, but the direction is notable. FAQ schema did not help. There is no evidence from this experiment that FAQ-structured content is preferentially retrieved by any of the three platforms tested.
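
One way to check the significance claim is a two-proportion z-test. The sketch below is an illustration, not the analysis the experiment used, and it rests on two assumptions: the counts (16 of 240 FAQ citations, 20 of 240 non-FAQ) are inferred from the reported percentages, and every query run is treated as independent, which the 5-iterations-per-query design does not strictly satisfy.

```python
import math


def two_proportion_z_test(hits_a, n_a, hits_b, n_b):
    """Two-sided z-test for a difference between two proportions.

    Returns (z, p_value) using the pooled standard error.
    """
    p_a = hits_a / n_a
    p_b = hits_b / n_b
    pooled = (hits_a + hits_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / std_err
    # Two-sided p-value under the standard normal: 2 * (1 - Phi(|z|)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Assumption: counts inferred from 6.7% and 8.3% of 240 queries per condition.
z_overall, p_overall = two_proportion_z_test(16, 240, 20, 240)

# Perplexity alone: 20% and 25% of 80 queries per condition.
z_perplexity, p_perplexity = two_proportion_z_test(16, 80, 20, 80)

if __name__ == "__main__":
    print(f"overall:    z = {z_overall:.2f}, p = {p_overall:.2f}")
    print(f"perplexity: z = {z_perplexity:.2f}, p = {p_perplexity:.2f}")
```

Under these assumptions both p-values sit well above 0.05, which matches the reading that the gap is indistinguishable from noise. Repeated iterations of the same query are correlated, so the true uncertainty is, if anything, larger than this test suggests.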

I found something I wasn’t expecting: Perplexity cited non-FAQ pages at a higher rate than FAQ pages (25% versus 20%). The gap is within noise at this sample size, but it is consistent with the retrieval decision happening upstream of schema parsing, where the search ranking and content selection phase gates whether schema even matters.

What Each Platform Actually Did: ChatGPT, Gemini, and Perplexity

Perplexity was the only platform that cited thegeolab.net at all. Citation rate was 20% for FAQ pages and 25% for non-FAQ, a delta of −5 percentage points in favour of non-FAQ content. Perplexity retrieves via live web search and names sources directly. The lower FAQ citation rate may reflect that non-FAQ headings matched query phrasing more precisely than FAQ question text, or it may be noise at this sample size. Either way: no FAQ advantage. This aligns with Ahrefs’ research on AI Overviews, which found that citation patterns depend on content relevance and search intent, not schema markup alone.

Gemini returned zero citations but a 21.2% mention rate, equal across FAQ and non-FAQ pages. It surfaced thegeolab.net by name in roughly one in five responses without linking to it. Note that the Gemini setup recorded text mentions only, not citation URLs, so the zero-citation figure reflects what this setup could observe. The distinction still matters: mentions are not citations. They do not drive traffic. A platform that mentions your site but doesn’t link to it is processing your content as a background signal, not as a source. FAQ schema had no effect on whether Gemini mentioned or cited the site. Backlinko’s AI Overviews research similarly documented the disconnect between content inclusion and source attribution in LLM-powered search.

ChatGPT returned zero citations and zero mentions across all 160 queries. Its web search did not surface thegeolab.net at all. This points to a domain authority and index coverage problem rather than a schema or content structure problem. FAQ schema is irrelevant when the platform’s web search doesn’t retrieve the site in the first place.

The Gemini finding is worth isolating: 21.2% mention rate, 0% citation rate. Gemini knows the site exists and references it in synthesised answers — but doesn’t link to it. This is a different problem from retrieval failure. It’s an attribution problem. The content is being used; the source isn’t being credited. Schema format doesn’t fix that.

What This Means for FAQPage Schema in AI Search

The experiment gives no support to one claim: that FAQ schema improves AI retrieval rate. The question-answer format does not map to a retrieval preference in ChatGPT, Gemini, or Perplexity in any way this experiment could detect.

That doesn’t mean FAQPage JSON-LD is useless. It means the reasons to use it are different from AI retrieval signal:

- Content structure. FAQ sections force a specific writing discipline — questions phrased as user queries, answers that stand alone without surrounding context. That discipline improves content quality independently of schema.

- Semantic clarity. FAQPage JSON-LD provides Google with explicitly labelled Q&A pairs. How that affects processing is not fully transparent, but it is additional structured signal.

- Rich results eligibility. In August 2023 Google stopped showing FAQ rich results for most sites, limiting them to well-known, authoritative government and health websites. This is a weak reason in 2026, but it’s not zero: the eligibility path still exists for those content categories.

What FAQ schema is not doing: driving AI citation rate. If AI visibility is the goal, the variables that matter are retrieval probability, extractability, entity clarity, and compression resistance — not whether the content happens to be formatted as question-answer pairs.

The implementation decision for thegeolab.net doesn’t change: FAQPage JSON-LD stays on every content page, JSON-LD only, in the page head. But the reason it stays is content structure, not retrieval signal. Those are different justifications, and the difference matters for how you’d prioritise the work.
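
For reference, a minimal sketch of what FAQPage JSON-LD for the page head can look like, generated from question-answer pairs. The structure follows the schema.org FAQPage, Question, and Answer types. The helper names `build_faqpage_jsonld` and `render_script_tag` are hypothetical, and this is not necessarily how thegeolab.net generates its markup.

```python
import json


def build_faqpage_jsonld(qa_pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


def render_script_tag(data):
    """Serialise the object into a JSON-LD script tag for the page head."""
    payload = json.dumps(data, ensure_ascii=False, indent=2)
    # Escape "</" so answer text cannot close the script element early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


faq = build_faqpage_jsonld([
    (
        "Does FAQPage schema improve AI retrieval rates?",
        "No. Across 480 queries, FAQ schema was neutral for retrieval.",
    ),
])

if __name__ == "__main__":
    print(render_script_tag(faq))
```

Each answer is written to stand alone without surrounding context, which is the writing discipline the schema is being kept for.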

This experiment tested one schema type (FAQPage) on one metric (citation rate) across three platforms. It does not speak to schema markup as a category. Lee (2026) — a 100,411-citation mixed-effects study across four platforms — found schema markup carries an independent OR of 1.31 on citation probability after controlling for Google rank (DOI: 10.5281/zenodo.19787328). That finding and this null result are compatible: a category-level effect can coexist with a FAQPage-specific null if FAQPage schema carries less retrieval signal than other markup types (Article, HowTo, entity-level). The EFAQ null isolates one schema type. It does not generalise to structured data as a whole.
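
To give a sense of scale for an odds ratio of 1.31, the sketch below converts it into a change in probability at a given baseline. Pairing Lee's odds ratio with this experiment's 8.3% non-FAQ citation rate is purely an illustrative assumption: the two figures come from different studies and populations, and the result is not a prediction for any site.

```python
def apply_odds_ratio(baseline_prob, odds_ratio):
    """Return the probability implied by scaling the baseline odds."""
    baseline_odds = baseline_prob / (1 - baseline_prob)
    scaled_odds = baseline_odds * odds_ratio
    return scaled_odds / (1 + scaled_odds)


# Assumption: baseline of 20 citations in 240 queries (the 8.3% overall rate).
baseline = 20 / 240
with_schema = apply_odds_ratio(baseline, 1.31)

if __name__ == "__main__":
    print(f"baseline: {baseline:.1%}")
    print(f"with OR 1.31 applied: {with_schema:.1%}")
```

At that baseline an odds ratio of 1.31 corresponds to a shift of roughly two percentage points, an effect of similar size to the noise in a 480-query experiment.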

What This AI Retrieval Experiment Doesn’t Answer

A null result on one site at one point in time has limits. Three things this experiment cannot rule out:

- Domain authority confound. ChatGPT not surfacing the site at all suggests that low domain authority is suppressing retrieval regardless of content format. A higher-authority site running the same experiment might see different results — and might see FAQ schema matter more at higher baseline retrieval rates.

- Schema removal effect. This experiment tested existing pages without modifying them. It does not test whether removing FAQ schema from a page changes its retrieval rate. That’s a different experiment — one that requires re-indexing wait time and introduces deployment risk.

- Platform changes. Retrieval behaviour in ChatGPT, Gemini, and Perplexity changes with model updates. A result from March 2026 may not hold in six months. The experiment is a snapshot, not a stable finding.

Frequently Asked Questions

Does FAQPage schema improve AI retrieval rates?

No. Based on 480 queries across ChatGPT, Gemini, and Perplexity, FAQ schema is neutral for retrieval. Overall citation rate was 6.7% for FAQ pages and 8.3% for non-FAQ pages, a delta of −1.7 percentage points that is not statistically significant. Perplexity, the only platform that cited the site at all, showed a delta of −5 percentage points in favour of non-FAQ pages. FAQ schema does not meaningfully affect whether AI systems retrieve your content.

What is the difference between a citation and a mention in AI search?

A citation is a named source link in the AI response — the platform retrieved the page and attributed the content with a link. A mention is when the AI response refers to a site or entity by name without linking to it. In this experiment, Gemini mentioned thegeolab.net in 21.2% of responses but cited it 0 times. Mentions do not drive traffic. The distinction matters because mention rate and citation rate are often conflated as measures of AI visibility.

Why did ChatGPT return zero citations and zero mentions?

ChatGPT’s web search did not surface thegeolab.net in any of the 160 queries run against it. This is a domain authority and index coverage problem — not a schema or content structure problem. A new site with a limited backlink profile may not appear in the web search results that ChatGPT draws from. FAQ schema is irrelevant when the platform doesn’t retrieve the site at all.

Should you keep FAQPage schema if it doesn’t improve AI retrieval?

Yes — for different reasons. FAQPage JSON-LD imposes a writing discipline that improves content structure, provides Google with labelled Q&A pairs as structured signal, and preserves rich results eligibility for content categories where that still applies. Remove the expectation that it drives AI citation rate. Keep it for content quality and semantic clarity.

Failure Registry Entry

This experiment is logged in the GEO Lab failure registry as a failed hypothesis — not a failed experiment. The methodology held. The hypothesis didn’t.

    EXPERIMENT_FAQ_RETRIEVAL_001:
      hypothesis: "FAQ-structured content gets preferentially retrieved by AI platforms"
      result: "Null: no meaningful difference (-1.7 pp)"
      platforms: "Perplexity sonar-pro, Gemini 2.5-flash, ChatGPT gpt-4o-mini"
      sample: "480 queries (160 per platform, 80 FAQ / 80 non-FAQ, 5 iterations each)"
      key_finding: "Gemini mentions site 21.2% but never cites; mention ≠ citation"
      confound: "Low domain authority; ChatGPT 0% across the board limits generalisability"
      date: "2026-03-13"

“We had FAQPage markup on fourteen pages and were getting zero AI citations from any of them. The diagnosis, schema present but answers structured narratively rather than declaratively, was exactly our problem. Restructuring three pages took an afternoon. Two appeared in Perplexity answers within twelve days.”

— James Whitfield, SEO Director, London