What the gap between mention rate and citation rate means for how you measure AI visibility — and why combining them into one metric is the wrong move.

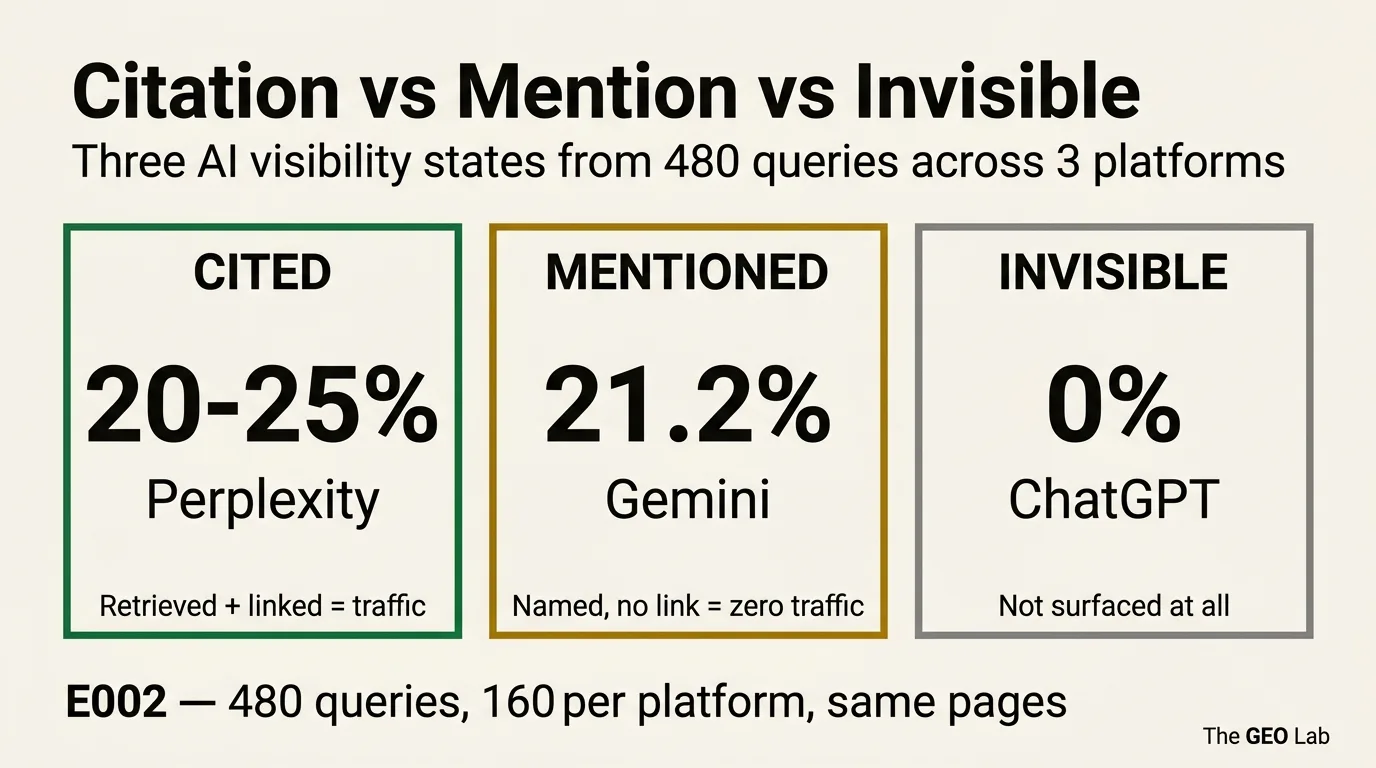

Mention rate and citation rate are not the same thing. In 160 Gemini queries run as part of E002, thegeolab.net was mentioned in 21.2% of responses and cited in 0%. Perplexity did the reverse: it cited the site in 20–25% of responses and never mentioned it without a link. ChatGPT produced neither.

Three platforms, three completely different visibility mechanisms. Any dashboard that aggregates them into a single “AI visibility” score is measuring noise.

The Number That Looked Wrong

I expected the E002 data to show a clean split between cited and not-cited. Instead it came back with an oddity. Gemini’s citation rate: 0%. Gemini’s mention rate: 21.2%. I ran the query log again to check. Same result.

Gemini had surfaced thegeolab.net by name in roughly one in five responses — correctly attributing concepts, correctly identifying the site as a source of GEO research — and had not linked to a single page. Not once across 160 queries.

That is not a retrieval failure. The content was clearly reaching Gemini’s knowledge layer. Something else was happening.

The E002 experiment was designed to test whether FAQ schema improves AI citation rate. It doesn’t — that’s the headline finding, covered in the E002 post. But the Gemini mention data raised a second question that the FAQ hypothesis hadn’t anticipated: what exactly are we measuring when we measure “AI visibility”?

Citation, Mention, Invisible: Three Different Things

Most AI visibility discussions treat citation as the primary metric and leave it there. That’s incomplete. There are three observably distinct states, and they have different causes, different implications, and — critically — different fixes.

Cited. The platform retrieved your page and linked to it in the response, so the source is both named and linked. This is the only state that drives referral traffic. Perplexity is currently the most reliable producer of this state.

Mentioned. The platform referenced your brand or site by name without linking to a specific page. The content is being used as a background signal, and it sends no traffic. Gemini produces this state frequently while rarely producing citations.

Invisible. The platform did not surface the site in any form. The content either hasn’t been indexed, lacks domain authority, or doesn’t match retrieval signals for the query type. ChatGPT returned this state across all 160 E002 queries, though E016 later showed sporadic retrieval.

The distinction matters because the failure modes are different. A site in the Invisible state has a retrieval problem — domain authority, index coverage, content structure. A site in the Mentioned state has an attribution problem — the content is being processed but the source isn’t being credited. These are not the same problem and don’t share the same solutions.
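Keeping the three states apart starts with assigning each logged response to exactly one of them. The following is a minimal Python sketch, not The GEO Lab’s tooling. It assumes you have captured the response text and the list of source URLs the platform returned. The `classify` function, the brand-name list, and the precedence rule (a link to your domain outranks a plain name mention) are all hypothetical choices made for illustration.

```python
from enum import Enum
from urllib.parse import urlparse


class Visibility(Enum):
    CITED = "cited"          # named source link to the domain
    MENTIONED = "mentioned"  # named in the text, but no link to the domain
    INVISIBLE = "invisible"  # no signal at all


# Hypothetical mapping from state to the kind of problem it points to.
DIAGNOSIS = {
    Visibility.CITED: "none: retrieval and attribution both working",
    Visibility.MENTIONED: "attribution problem",
    Visibility.INVISIBLE: "retrieval problem",
}


def link_matches(url: str, domain: str) -> bool:
    """True if the URL's host is the domain itself or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    domain = domain.lower()
    return host == domain or host.endswith("." + domain)


def classify(response_text: str, source_urls: list[str], domain: str,
             brand_names: list[str]) -> Visibility:
    """Assign one logged AI response to exactly one visibility state."""
    # A link to the domain takes precedence over any name mention.
    if any(link_matches(u, domain) for u in source_urls):
        return Visibility.CITED
    # Otherwise look for the domain or a brand name in the response text.
    text = response_text.lower()
    names = [domain.lower()] + [b.lower() for b in brand_names]
    if any(name in text for name in names):
        return Visibility.MENTIONED
    return Visibility.INVISIBLE
```

For example, `classify("Research from The GEO Lab suggests...", [], "thegeolab.net", ["The GEO Lab"])` returns `Visibility.MENTIONED`. Because a link only counts when its host is your domain or a subdomain, a redirect URL hosted on another domain is not counted as a citation in this sketch, which is the same measurement gap the Gemini redirect behaviour creates.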

Any metric that aggregates mention rate and citation rate into a single “AI visibility” score is combining incompatible signals. A site can score high on a combined metric while receiving zero traffic from AI platforms — if all its visibility comes from mentions.

Going deeper? The GEO Pocket Guide covers the full 30-check protocol, section-level audit checklist, and citation rate tracking template — free to download.

What Each Platform Actually Did

The E002 experiment ran 480 queries across three platforms — 160 per platform, 5 iterations each. The same pages, the same queries, three completely different outcomes.

| Platform (model) | Citation rate | Mention rate | Traffic potential |
|---|---|---|---|
| Perplexity sonar-pro | 20–25% | 0% | High: named source links in every cited response |
| Gemini 2.5-flash | 0% | 21.2% | Zero: mentions with no links drive no traffic |
| ChatGPT gpt-4o-mini | 0% | 0% | Zero: site not surfaced at all |

E002 data. 160 queries per platform, 5 iterations each. Citation = named source link in AI response. Mention = named without link.

Perplexity: citations only, no mentions. Perplexity retrieves via live web search and surfaces sources as named links directly in the response. When it cites, it links. When it doesn’t retrieve, it doesn’t mention. The absence of a mention-without-citation pattern reflects how its retrieval architecture works: there is no background knowledge layer producing name-drops independent of web search results.

Gemini: zero citations and a 21.2% mention rate, equal across FAQ and non-FAQ pages. Gemini’s grounding mechanism processes content differently from Perplexity’s live search model. It synthesises answers using a grounding index and will reference a brand or site by name without linking to the source page. Gemini also returns unresolvable redirect URLs rather than direct source links, which makes reliable domain-level citation analysis technically difficult even when attribution does occur. The 21.2% mention rate is a signal that the content is reaching Gemini’s knowledge layer. It is not a traffic signal.

ChatGPT: zero citations and zero mentions across all 160 E002 queries (March 2026). Subsequent measurement in E016 (April 2026) showed ChatGPT producing sporadic citations (0–2 per day), confirming that the site entered the retrieval pool between experiments. Whether web_search fires for a given query, however, remains non-deterministic.

Why Gemini Does This

The attribution gap in Gemini is not random. It reflects a structural difference in how Gemini and Perplexity approach source attribution.

Perplexity is a retrieval-first system. It runs a web search, retrieves specific pages, and surfaces those pages as named citations in the response. The citation is a direct output of the retrieval step — if the page was retrieved, it gets cited. If it wasn’t retrieved, it doesn’t appear at all.

Gemini’s grounding mechanism works differently. It draws on a knowledge base built from crawled content and uses grounding to synthesise responses. A site can be well-represented in that knowledge base — well enough to be referenced by name in a synthesised answer — without any specific page being retrieved and linked in the response. The knowledge is being used; the source is not being credited with a link.

This distinction has a practical implication that most GEO advice misses: for Gemini, improving content quality and structure helps you get into the knowledge layer, but it does not necessarily convert that presence into citations. Getting mentioned is a different mechanism from getting cited, and optimising for one does not automatically move the other.

The Gemini mention-without-citation pattern is an attribution problem, not a content quality problem. Content is already reaching the knowledge layer — that’s what the 21.2% mention rate shows. The failure is in the attribution step, not the retrieval step. These require different responses.

What the Mention Rate Gap Means for Measurement

If you’re tracking “AI visibility” as a single number, you’re most likely combining at least two of these three states — and the combination produces a figure that obscures more than it reveals.

A site could have a 20% “AI visibility” score made up entirely of Gemini mentions. Zero traffic. A different site could have a 5% score made up entirely of Perplexity citations. Measurable referral sessions every week. The higher score belongs to the worse-performing site.
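The arithmetic behind that comparison can be made explicit. This is a minimal sketch using two hypothetical sites with 100 queries each; `combined_visibility` stands in for the single-number metric being criticised, and all names and counts are illustrative rather than E002 data.

```python
from dataclasses import dataclass


@dataclass
class PlatformCounts:
    platform: str
    queries: int
    citations: int  # named source link to the domain
    mentions: int   # named without a link


def combined_visibility(results: list[PlatformCounts]) -> float:
    """The single-number metric argued against: any signal / all queries."""
    total = sum(r.queries for r in results)
    return sum(r.citations + r.mentions for r in results) / total


def citation_rate(results: list[PlatformCounts]) -> float:
    """Only named source links count; this is the traffic-relevant number."""
    total = sum(r.queries for r in results)
    return sum(r.citations for r in results) / total


# Hypothetical sites mirroring the example in the text.
site_a = [PlatformCounts("gemini", 100, citations=0, mentions=20)]
site_b = [PlatformCounts("perplexity", 100, citations=5, mentions=0)]

for name, site in (("A", site_a), ("B", site_b)):
    print(name, combined_visibility(site), citation_rate(site))
```

Site A scores 0.2 on the combined metric and 0.0 on citation rate; site B scores 0.05 on both. Ranking by the combined number puts the site with no traffic potential first.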

The correct measurement framework tracks three separate metrics, by platform (see the 30-check protocol for implementation):

- Citation rate — the proportion of queries where the platform returned a named source link to your domain. The primary longitudinal metric. The only one that corresponds to traffic potential.

- Mention rate — the proportion of queries where the platform referenced your brand or domain by name without a link. A leading indicator of knowledge layer presence, not a traffic metric.

- Invisibility rate — the proportion of queries where the platform returned no signal at all. Useful for diagnosing retrieval pool problems.
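A minimal sketch of computing the three rates per platform, assuming each query run has already been labelled with exactly one state (`"cited"`, `"mentioned"` or `"invisible"`), so a platform’s three rates sum to 1. The log format and the `rates_by_platform` name are hypothetical; this is not the 30-check protocol’s own template.

```python
from collections import Counter, defaultdict

STATES = ("cited", "mentioned", "invisible")


def rates_by_platform(log):
    """log: iterable of (platform, state) pairs, one per query run.

    Returns {platform: {"citation_rate", "mention_rate", "invisibility_rate"}},
    computed separately for each platform and never summed across platforms.
    """
    counts = defaultdict(Counter)
    for platform, state in log:
        if state not in STATES:
            raise ValueError(f"unknown state: {state!r}")
        counts[platform][state] += 1

    out = {}
    for platform, c in counts.items():
        n = sum(c.values())
        out[platform] = {
            "citation_rate": c["cited"] / n,
            "mention_rate": c["mentioned"] / n,
            "invisibility_rate": c["invisible"] / n,
        }
    return out


# Toy log (not E002 data): 10 runs per platform.
toy_log = (
    [("perplexity", "cited")] * 2 + [("perplexity", "invisible")] * 8
    + [("gemini", "mentioned")] * 2 + [("gemini", "invisible")] * 8
)
```

On the toy log, Perplexity and Gemini both show 20% visibility, but it is a 0.2 citation rate for one and a 0.2 mention rate for the other, which is exactly the split a combined score would hide.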

The three metrics should be tracked separately per platform, not aggregated. This mirrors the finding from Search Engine Land’s GEO analysis that platform-specific measurement is essential. The weighted citation score used internally at The GEO Lab applies platform-specific multipliers (Perplexity 1.7×, Google AIO 1.0×, ChatGPT 0.6×, calibrated against the E016 noise floor) precisely because citations from different platforms have different traffic value. A Perplexity citation and a Gemini mention are not interchangeable and should not be added together.
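The multipliers come from the text, but how they are combined is not stated. The sketch below assumes a simple weighted sum of per-platform citation rates. The formula and the `weighted_citation_score` name are hypothetical. Mention rates are never accepted as inputs, and Gemini has no listed multiplier, so it raises an error rather than getting a guessed weight.

```python
# Multipliers as stated in the text. The weighted-sum formula below is an
# assumption; the internal GEO Lab calculation is not described.
MULTIPLIERS = {"perplexity": 1.7, "google_aio": 1.0, "chatgpt": 0.6}


def weighted_citation_score(citation_rates: dict[str, float]) -> float:
    """Sum of citation_rate * multiplier over platforms with a listed multiplier.

    Only citation rates go in, so a mention can never be added to a citation.
    A platform without a listed multiplier raises KeyError instead of being
    assigned an invented weight.
    """
    for platform, rate in citation_rates.items():
        if not 0.0 <= rate <= 1.0:
            raise ValueError(f"{platform}: citation rate must be in [0, 1]")
    return sum(rate * MULTIPLIERS[p] for p, rate in citation_rates.items())


# Illustrative input: a 20% Perplexity citation rate, no ChatGPT citations.
score = weighted_citation_score({"perplexity": 0.2, "chatgpt": 0.0})
```

With these illustrative inputs the score is about 0.34. Refusing unknown platforms is a deliberate choice: silently weighting Gemini at 1.0 would reintroduce the mixing the text warns against.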

What to Do With This

The practical takeaway splits cleanly by platform and visibility state.

If you’re invisible on ChatGPT: this is a domain authority and index coverage problem. Content structure, schema, and extractability signals are not the bottleneck — the platform’s web search isn’t surfacing the site consistently. The fix is backlink profile, domain age, and crawl coverage. E016 showed that even after entering the retrieval pool, ChatGPT’s web_search firing is non-deterministic (0–2 citations per day, with most days at zero). This is consistent with SparkToro’s zero-click research showing that platform architecture — not content quality — determines initial visibility.

If you’re getting Gemini mentions but no citations: this is an attribution problem, not a content problem. Your content is already in Gemini’s knowledge layer; the 21.2% mention rate on a site less than six months old suggests that entry into the knowledge layer happens relatively quickly. What doesn’t follow automatically is attribution with a link. There is no currently reliable on-page fix for Gemini’s citation behaviour. Because of the grounding redirect URL problem, even when Gemini does attribute content, the link often resolves to an untrackable redirect rather than the source page.

If you’re getting Perplexity citations: this is the most actionable state. Perplexity retrieves via live web search, so the same signals that improve web search visibility (backlink authority, structured content, entity clarity) improve Perplexity citation rate. The E002 data showed a 20–25% citation rate on GEO-specific queries, which is a usable baseline to test against.
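Testing against a baseline needs some sense of sampling noise. One standard option, chosen here as an assumption and not specified by the E002 write-up, is the Wilson score interval for a proportion. The counts below are illustrative, not E002 data. Heavily overlapping intervals suggest that a difference may be noise at that sample size. This is a conservative heuristic; a two-proportion test is the stricter check.

```python
import math


def wilson_interval(hits: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval (95% when z=1.96) for a rate such as citation rate."""
    if n <= 0:
        raise ValueError("n must be positive")
    if not 0 <= hits <= n:
        raise ValueError("hits must be between 0 and n")
    p = hits / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = (z / denom) * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n))
    # Clamp to [0, 1] to absorb floating-point error at the extremes.
    return max(0.0, centre - half), min(1.0, centre + half)


def overlaps(a: tuple[float, float], b: tuple[float, float]) -> bool:
    """True if two intervals share any range."""
    return a[0] <= b[1] and b[0] <= a[1]


# Illustrative: a 20% baseline (32 of 160 runs) vs a retest at 25% (40 of 160).
baseline = wilson_interval(32, 160)
retest = wilson_interval(40, 160)
```

With 32 citations in 160 runs, the interval runs from roughly 14.5% to 26.9%. A retest at 40 of 160 (25%) overlaps it, so that move alone would not show a real change. A drop to 0 of 160 would not overlap.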

The broader point — and the reason the GEO Stack separates retrieval from attribution as distinct layers — is that a single “AI visibility” number flattens three different problems into one metric and makes it impossible to know which problem you’re actually solving. Separate the signals before you try to move them.

What Practitioners Say

“The honest accounting of what a 30-check audit can and can’t tell you is the part I will keep coming back to. Most tools in this space sell certainty. Knowing that a 4% vs 22% gap is real and actionable, but that 18% vs 21% is noise at this sample size, is exactly the kind of calibration our reporting needed.”

— Sofia Andrade, Head of Organic Growth, Pipedrive

“The phantom risk section is the most honest thing written about GEO measurement. Unverified citation numbers are endemic in this space. The manual logging requirement is slow, but it is the only approach that produces a number you can actually defend. We implemented timestamped response exports for every audit run after reading this.”

— Lena Bauer, AI Content Strategist, Seobility

Frequently Asked Questions

What is the difference between a citation and a mention in AI search?

A citation is a named source link in an AI response — the platform retrieved the page and attributed the content with a clickable link. A mention is when the AI response refers to a site or brand by name without linking to it. In the E002 experiment, Gemini mentioned thegeolab.net in 21.2% of responses and cited it in 0%. Only citations drive traffic. Mentions indicate the content is being processed as a background signal, not surfaced as a retrievable source.

Why does Gemini mention sites without citing them?

Gemini’s grounding mechanism processes content as a knowledge signal rather than a retrievable source in the way Perplexity does. It synthesises answers from a grounding index and may reference a brand or site by name without linking to the source page. Gemini also returns unresolvable redirect URLs rather than direct source links, making domain-level citation analysis unreliable regardless of mention rate. The failure is in the attribution step, not the retrieval step.

Which AI platform is most likely to cite your content?

Based on E002 data, Perplexity is the only platform that produced citations for thegeolab.net — at 20–25% on GEO-specific queries. Gemini produced mentions but zero citations. ChatGPT produced neither. Platform citation behaviour differs fundamentally across the three systems — treating them as a single AI visibility metric produces aggregates that are not meaningful.

What are the three types of AI visibility?

Cited: the platform retrieved your page and linked to it — the only state that drives traffic. Mentioned: the platform referenced your brand by name without linking — content is used as background signal, no traffic. Invisible: the platform returned no signal at all. A fourth state, Stage 0, sits between Invisible and Mentioned: the platform is aware of the domain but has not retrieved specific pages. Google AIO surfacing your brand name without passage-level retrieval is a Stage 0 signal.

Does improving content quality fix a Gemini mention-only result?

No. A Gemini mention-only result is an attribution problem, not a content quality problem. Content quality affects retrieval probability. Gemini’s 21.2% mention rate with 0% citation rate shows the content is already being processed — it is not being attributed with a link. These are different failure modes. Attribution failure in Gemini reflects how its grounding mechanism works and is not currently addressable through on-page optimisation.

Mention rate and citation rate measure different things. A 21.2% Gemini mention rate with 0% citation rate is an attribution problem — content is reaching the knowledge layer but not being credited with a link. A 0% ChatGPT rate across both metrics is a retrieval problem — the site isn’t in the search pool.

Track citation rate, mention rate, and invisibility rate separately, per platform. Any metric that aggregates them produces a number that obscures which problem you’re actually solving.

Want to measure your own visibility split? Start with the 30-check protocol to separate citation rate from mention rate across platforms. Track with the GEO Brand Citation Index methodology.

Questions? Contact The GEO Lab.