10 queries. 3 platforms. 5 consecutive days. 150 checks. Zero content changes. This is what the baseline actually looks like.

E016 ran the GEO Lab 30-check protocol for five consecutive days (13–17 April 2026) with a publishing freeze — no content changes, no schema updates, no new pages. The result establishes the noise floor every other experiment depends on.

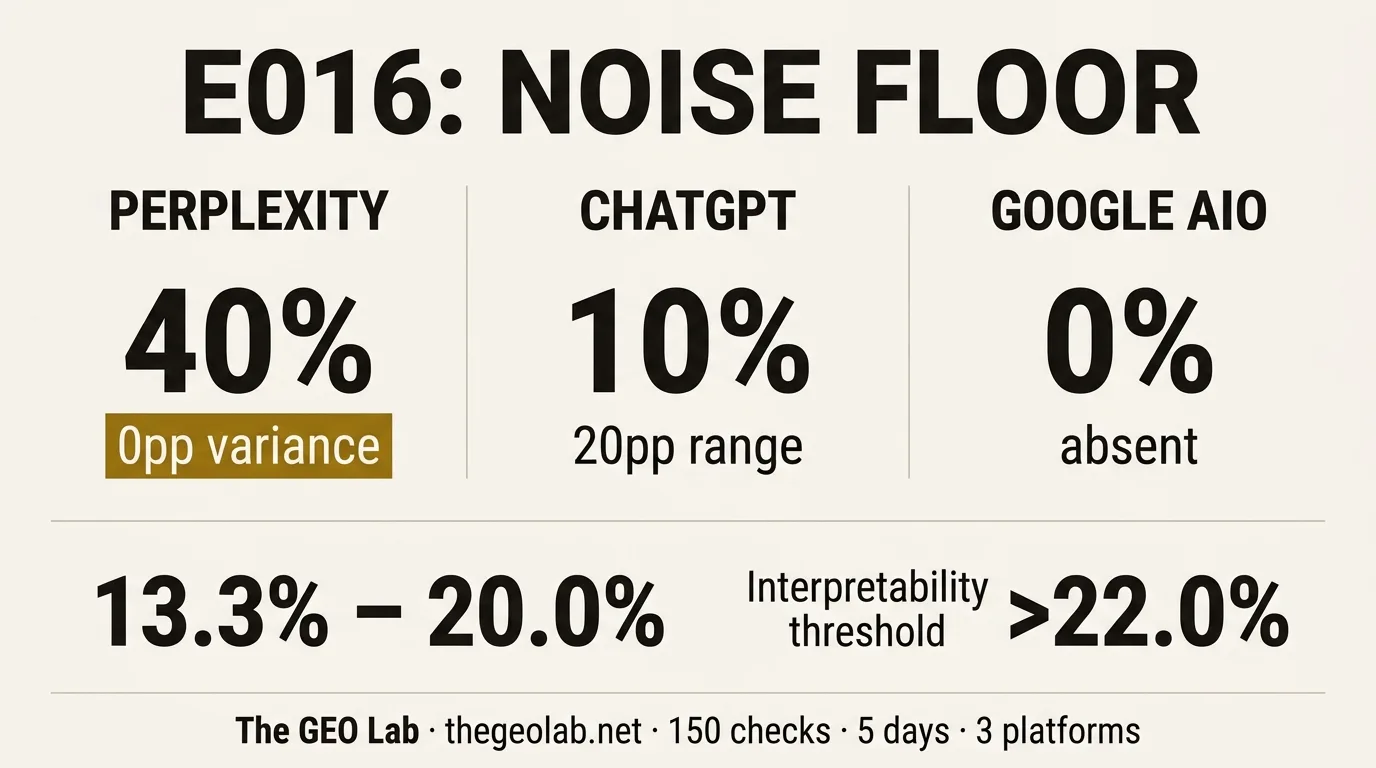

Combined citation rate: 13.3%–20.0% (6.7pp range). Perplexity: stable at 40%, zero variance. ChatGPT: noisy at 0%–20%, 20pp range. Google AIO: no citations across all 50 checks. Tier 1 proprietary queries on Perplexity: 80% citation rate. Tier 2 category queries: 0%. Any future experiment result must exceed 22.0% combined to be interpreted as signal rather than noise.

Why This Experiment Exists

Here is an embarrassing thing about running GEO experiments without a noise floor: you cannot tell whether your results mean anything.

I ran Experiment 001 — declarative vs narrative structure, 24pp citation rate gap — and it looked like a meaningful result. The FAQ retrieval experiment found a −1.7% delta and called it null. The E014 Month 1 baseline measured a combined citation rate of 6.7% across Perplexity, ChatGPT, and Google AIO.

The problem: none of those results had a variability reference. A +4pp delta from an intervention looks like signal — unless the platform naturally swings ±6pp on the same query set on consecutive days. Then it’s noise. Without knowing the natural variability range, every result floats in interpretive ambiguity.

E016 was designed to resolve that ambiguity. The hypothesis was simple: the GEO Lab’s citation rate has a stable noise floor that can be characterised within five consecutive daily measurement cycles. The method was even simpler — run the same queries, same platforms, same protocol, for five days, and change nothing on the site while doing it.

Why E016 was the highest priority experiment in the backlog: every other experiment in the queue — E003, E006, E002 remeasure, and all 17 query-set-ready experiments — produces uninterpretable data without a noise floor reference. This experiment had to run before any of them.

Methodology

The design is the simplest possible: no intervention, no changes, no new content. Just the 30-check protocol running on repeat.

10 fixed queries — the same set used in E014. 5 Tier 1 proprietary queries (GEO Stack, LLM readability, Extractability, Retrieval Probability, System Memory). 5 Tier 2 category queries (what is GEO, GEO vs SEO, does GEO work, how to measure AI citation rate, how AI search engines select content).

Three platforms: Perplexity Sonar Pro (return_citations=True), ChatGPT (gpt-4o-search-preview, with AnnotationURLCitation parsing), and Google AI Overviews (DataForSEO SERP API, checking the references on each ai_overview item).

5 consecutive days: 13–17 April 2026. Automated via cron at 10:00 UTC daily. No content published, no pages updated, no schema changes during the window.

Each check is scored C, M, or X. C = citation (named source link in response). M = mention (named without link). X = no appearance. The AIO diagnostic also logs whether an AI Overview was present or absent per query, distinguishing “not cited” from “no AIO triggered”.
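The scoring rule above can be sketched in a few lines. This is a minimal illustration, not the protocol's actual code: the `classify` function, the `BRAND_NAMES` variants, and the shape of the inputs (a response string plus a list of cited URLs) are assumptions made for the example.

```python
from urllib.parse import urlparse

SITE_DOMAIN = "thegeolab.net"                 # domain named in the article
BRAND_NAMES = ("the geo lab", "thegeolab")    # hypothetical name variants

def classify(response_text: str, cited_urls: list[str]) -> str:
    """Score one check as 'C' (citation), 'M' (mention), or 'X' (absent)."""
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        # Match the bare domain and any subdomain such as www.
        if host == SITE_DOMAIN or host.endswith("." + SITE_DOMAIN):
            return "C"
    text = response_text.lower()
    if any(name in text for name in BRAND_NAMES):
        return "M"  # named in the answer, but no source link
    return "X"

print(classify("GEO Stack is ...", ["https://thegeolab.net/geo-stack?utm_source=openai"]))
```

Checking the hostname rather than searching the raw URL string avoids false positives from tracking parameters or redirect URLs that merely contain the domain.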

Total checks: 150 (10 queries × 3 platforms × 5 days). The publishing freeze was the critical control — any content change during the window would have introduced a freshness signal that could contaminate the baseline.
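As a rough sketch of how the check grid adds up, the snippet below enumerates every query, platform, and day combination. The query strings are the short labels used in this article rather than the exact prompts, and the platform keys are placeholder names.

```python
from datetime import date, timedelta
from itertools import product

# Short labels from the article; the exact query wording is not reproduced here.
TIER_1 = ["GEO Stack", "LLM readability", "Extractability",
          "Retrieval Probability", "System Memory"]
TIER_2 = ["what is GEO", "GEO vs SEO", "does GEO work",
          "how to measure AI citation rate",
          "how AI search engines select content"]
QUERIES = TIER_1 + TIER_2
PLATFORMS = ["perplexity", "chatgpt", "google_aio"]  # placeholder keys

START = date(2026, 4, 13)
DAYS = [START + timedelta(days=i) for i in range(5)]  # 13-17 April 2026

# One record per (day, platform, query) combination.
checks = [
    {"day": d, "platform": p, "query": q, "tier": 1 if q in TIER_1 else 2}
    for d, p, q in product(DAYS, PLATFORMS, QUERIES)
]

checks_per_day = len(PLATFORMS) * len(QUERIES)
print(checks_per_day, len(checks))  # 30 checks per day, 150 in total
```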


Results

| Platform | Day 1 | Day 2 | Day 3 | Day 4 | Day 5 | Avg | Range |
|---|---|---|---|---|---|---|---|
| Perplexity sonar-pro | 40% | 40% | 40% | 40% | 40% | 40% | 0pp |
| ChatGPT gpt-4o-search | 0% | 20% | 10% | 10% | 10% | 10% | 20pp |
| Google AIO DataForSEO | 0% | 0% | 0% | 0% | 0% | 0% | 0pp |
| Combined | 13.3% | 20.0% | 16.7% | 16.7% | 16.7% | 16.7% | 6.7pp |

150 checks total (10 queries × 3 platforms × 5 days). Citation = named source link in AI response. Zero mentions (M) recorded on any platform on any day.
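The table's percentages can be reproduced from the daily citation counts reported in this article (Perplexity 4 per day; ChatGPT 0, 2, 1, 1, 1; Google AIO 0). The sketch below is one way to do that arithmetic; the variable and function names are illustrative.

```python
QUERIES_PER_PLATFORM = 10

# Citations per day (Day 1..Day 5), as reported in the article.
citations = {
    "perplexity": [4, 4, 4, 4, 4],
    "chatgpt":    [0, 2, 1, 1, 1],
    "google_aio": [0, 0, 0, 0, 0],
}

def rates(counts, denominator):
    """Convert daily citation counts to percentages."""
    return [100 * c / denominator for c in counts]

def summarize(daily_rates):
    """Return (average, range) in percent / percentage points, 1 decimal."""
    avg = sum(daily_rates) / len(daily_rates)
    return round(avg, 1), round(max(daily_rates) - min(daily_rates), 1)

platform_summary = {
    p: summarize(rates(c, QUERIES_PER_PLATFORM)) for p, c in citations.items()
}

# Combined rate pools all 30 daily checks (10 queries x 3 platforms).
combined_counts = [sum(day) for day in zip(*citations.values())]
combined = rates(combined_counts, QUERIES_PER_PLATFORM * len(citations))
combined_summary = summarize(combined)

print(platform_summary)
print([round(r, 1) for r in combined], combined_summary)
```

The combined rate is computed over the pooled 30 checks per day, which is why a single extra ChatGPT citation moves it by about 3.3pp.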

The most striking result is not the combined rate. It is the zero variance on Perplexity — 40% every single day, the same four pages cited every time, for five consecutive days. That kind of stability in an AI retrieval system is not what most people would expect, and it changes how Perplexity results should be interpreted going forward.

The second most striking result is the zero mentions across 150 checks. Not a single M result on any platform on any day. The outcome distribution is binary: citation or nothing. There is no “mentioned without link” middle state on this query set and this site.

What Each Platform Did

Perplexity: 40% citation rate, 0pp variance across 5 days. The same four pages were cited every day without exception: Retrieval Probability, GEO Stack, Extractability, and System Memory. All four are Tier 1 proprietary concept pages. Zero Tier 2 category queries produced citations on any day. Perplexity is the primary signal platform for all subsequent experiments: given zero baseline variance, any movement above 40% is immediate signal.

ChatGPT: average 10% citation rate, but with a 20pp variability range (0%–20%). Day 1 returned zero citations. Day 2 spiked to two citations (GEO Stack and Extractability). Days 3–5 settled at one citation per day. The utm_source=openai URL parameters confirm live web retrieval on positive days. ChatGPT is the noisy platform: a 20pp variability range means any ChatGPT-only delta under 20pp is uninterpretable as signal. It remains a secondary signal platform only.

Google AIO: zero citations across all 50 checks. AI Overviews were present on most SERPs, so this is not a case of AIO being absent from the results page. The diagnostic confirmed AIO items were returning results; thegeolab.net was simply not cited as a source in any of them. This is a domain authority and category competition problem. Established sources dominate both Tier 1 and Tier 2 queries in Google AIO at thegeolab.net’s current authority level. This requires a separate investigation; it is not a content structure problem that GEO Stack layer optimisation will resolve.

The zero mentions finding: across 150 checks on 3 platforms over 5 days, not a single M result appeared. The citation-or-nothing distribution means the “mentioned without link” state — which appeared at 21.2% on Gemini in the FAQ experiment — is not a characteristic of this query set and these platforms. On the E016 query set, the site is either cited with a link or entirely absent. There is no middle ground to optimise for.

The Tier 1 vs Tier 2 Split

The most important finding in E016 is not the noise floor range. It is the absolute split between Tier 1 proprietary queries and Tier 2 category queries on Perplexity.

Tier 1 proprietary queries: 4 citations per day, every day. Same 4 pages: Retrieval Probability, GEO Stack, Extractability, System Memory. Zero variance. These are queries for concepts The GEO Lab coined or named, so no competing source can claim the same entity association.

Tier 2 category queries: zero citations on every one of the 5 days. Queries like “what is GEO”, “GEO vs traditional SEO”, and “does GEO actually work” are generic category questions where established higher-authority sources dominate retrieval. Format optimisation does not change this.

This is not a gradient. It is an absolute separation. The E014 Month 1 baseline suggested this split; E016 confirms it across five days of consistent measurement. Tier 1 at 80%, Tier 2 at 0% — every day, without exception.
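For readers who want to check the tier figures, here is a small sketch of the Perplexity split. The four cited pages are those named above; treating "LLM readability" as the one uncited Tier 1 query is inferred from the 4-of-5 result rather than stated directly, and the data structure is purely illustrative.

```python
# Perplexity outcome per query (identical on all five days per the article).
# "LLM readability" as the uncited Tier 1 query is an inference from 4/5.
perplexity_outcomes = {
    "GEO Stack":                            (1, "C"),
    "LLM readability":                      (1, "X"),
    "Extractability":                       (1, "C"),
    "Retrieval Probability":                (1, "C"),
    "System Memory":                        (1, "C"),
    "what is GEO":                          (2, "X"),
    "GEO vs SEO":                           (2, "X"),
    "does GEO work":                        (2, "X"),
    "how to measure AI citation rate":      (2, "X"),
    "how AI search engines select content": (2, "X"),
}

def tier_citation_rate(outcomes, tier):
    """Percentage of queries in a tier scored 'C'."""
    scores = [score for t, score in outcomes.values() if t == tier]
    return 100 * scores.count("C") / len(scores)

tier_1_rate = tier_citation_rate(perplexity_outcomes, 1)
tier_2_rate = tier_citation_rate(perplexity_outcomes, 2)
print(tier_1_rate, tier_2_rate)  # 80.0 0.0
```

Averaging the two tiers gives the 40% Perplexity platform rate, which shows how a single platform number hides the split.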

The practical implication is significant. Most GEO frameworks advise optimising content structure — answer-first headings, declarative openings, context-independent sections. Those interventions improve passage-level extractability. But extractability is not the binding constraint on Tier 2 citation for a domain at thegeolab.net’s current authority level. Domain authority is the binding constraint. Perplexity retrieves thegeolab.net on Tier 1 queries because no other source owns those entity associations. On Tier 2 queries, it retrieves established sources regardless of how well-structured thegeolab.net’s content is.

What this means for the experiment queue: E003 (heading format) and E006 (context management) will test structural variables. Those experiments are most likely to show signal on Perplexity Tier 1 queries — where the baseline is stable and any change is interpretable. Expecting those experiments to move Tier 2 citation rate would be expecting the wrong variable to respond.

The Noise Floor: What It Means for Everything That Follows

The per-platform interpretability thresholds are different from the combined threshold, and the difference matters for experiment design.

Perplexity is the decisive measurement platform going forward. Zero variance across five days means any movement — even a single additional citation — is interpretable signal. An experiment that increases Perplexity citation rate from 40% to 50% is a real finding. An experiment that produces no Perplexity movement but a 15pp ChatGPT swing is noise.

ChatGPT’s 20pp variability range is the most practically limiting finding in E016. It means ChatGPT cannot be used as a primary measurement platform at this sample size. Ten queries is not enough to distinguish a genuine 15pp improvement from a platform fluctuation of the same magnitude. ChatGPT results from individual experiments should be reported but not interpreted in isolation until the variability range is better characterised at larger sample sizes.

Google AIO at zero is a separate research thread entirely. The absence is structural — it requires a crawl parity audit and a domain authority investigation, not a content optimisation response. It cannot be addressed by the same interventions that affect Perplexity and ChatGPT citation rate.
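The per-platform reasoning above can be expressed as a simple comparison against each platform's observed five-day range. This is a sketch under one assumption: a delta counts as signal only when it is strictly larger than the observed range, which is a conservative reading of the thresholds described here. Google AIO is left out because it is treated as a separate research thread.

```python
# Observed day-to-day range per platform during the freeze, in pp.
OBSERVED_RANGE_PP = {"perplexity": 0.0, "chatgpt": 20.0}

def is_signal(platform: str, baseline_pct: float, measured_pct: float) -> bool:
    """True if the change exceeds the platform's observed natural range.

    Assumption: strict inequality, so a delta equal to the range is
    still treated as noise.
    """
    delta = abs(measured_pct - baseline_pct)
    return delta > OBSERVED_RANGE_PP[platform]

print(is_signal("perplexity", 40.0, 50.0))  # True: any movement counts
print(is_signal("chatgpt", 10.0, 25.0))     # False: 15pp is inside the 20pp range
```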

The six-month System Memory hypothesis (that consistent publishing on a focused topic cluster accumulates citation rate over time) now has its interpretability reference. Month 2, Month 3, and Month 6 baselines from E014 will be evaluated against the E016 noise floor. A Month 6 combined rate above 22.0% would be interpretable as System Memory accumulation. A rate at or below 22.0% would not be.

What Comes Next

E016 completes the prerequisites. The experiment queue is now unblocked.

E003 runs next — heading format as the variable, question-style H2s versus declarative H2s, Perplexity as the primary measurement platform. The noise floor makes the design clean: if declarative headings produce a Perplexity citation rate above 40%, that is signal. If they do not, the null result is credible rather than ambiguous.

E006 follows — context management and passage-level clarity. This is the experiment most directly connected to the LLM readability framework: does removing unresolved pronouns and “as discussed above” references from section openings produce measurable citation rate improvement on the same query set?

The Google AIO absence opens a separate investigation that sits outside the main experiment queue. The question is whether the absence is a domain authority threshold problem or a content-type problem. That investigation starts with a crawl parity audit: confirm GoogleBot and AI crawlers are both accessing the same pages, confirm AIO is processing the correct versions, and identify whether any specific pages are closer to AIO citation eligibility than others.

EXPERIMENT_E016:

experiment: "30-check protocol reliability / noise floor"

hypothesis: "Citation rate has a stable noise floor characterisable in 5 days"

result: "Confirmed — Perplexity 40% ±0pp, ChatGPT 10% ±10pp, AIO 0%"

noise_floor_combined: "13.3%–20.0% (6.7pp range)"

interpretability_threshold: "22.0% combined"

tier_1_perplexity: "80% (4/5 queries cited every day)"

tier_2_perplexity: "0% (0/5 queries cited on any day)"

mentions: "0 across 150 checks — citation or nothing"

platforms: "Perplexity sonar-pro, ChatGPT gpt-4o-search-preview, Google AIO DataForSEO"

sample: "150 checks (10 queries × 3 platforms × 5 days)"

publishing_freeze: "13–17 April 2026 — no content changes during window"

next_experiment: "E003 — heading format (question vs declarative H2s)"

date: "2026-04-18"

What Practitioners Say

“The Perplexity zero-variance finding changes how I think about platform-level measurement. Most practitioners average across platforms and report a single number. E016 shows that doing this conflates a structurally stable signal source with a noisy one — the combined average masks the diagnostic value of each platform independently. The noise floor framing is the right approach for anyone running controlled GEO experiments.”

“The Tier 1 / Tier 2 absolute split is the finding I’ll be referencing most. The SEO instinct is to optimise format when citation rate is low. E016 demonstrates that on category queries at this domain authority level, format is not the binding variable — domain authority is. That reframes the content priority entirely. You need to build authority before format optimisation produces returns.”

Frequently Asked Questions

What is a noise floor in GEO measurement?

The noise floor is the citation rate a site receives from AI platforms without deliberate GEO intervention — accidental citations from query-passage coincidences and natural platform variability. Establishing the noise floor requires a fixed query set run across platforms on five consecutive days with no content changes. Any future experiment result within the noise floor range is uninterpretable as signal from the intervention.

Why did Perplexity produce the same result every day?

Perplexity cites thegeolab.net on 4 of 10 queries every day because the 4 cited pages (Retrieval Probability, GEO Stack, Extractability, System Memory) are the highest-authority sources for those specific proprietary concepts in Perplexity’s index. No other domain has produced competing content for those exact queries. The stability reflects retrieval determinism on uncontested entity-specific queries. The 5 Tier 2 category queries produce zero citations because higher-authority established sources dominate those topics.

Why is Google AIO absent when AI Overviews are present on the SERPs?

Google AIO being present on a SERP means Google is synthesising an AI Overview for that query. It does not mean thegeolab.net is cited within it. The AIO diagnostic confirmed AI Overviews were triggered on most queries — thegeolab.net simply was not selected as a source. This is a domain authority and category competition problem. Google AIO citation requires higher domain authority than Perplexity citation for the same query set.

What is the interpretability threshold and how was 22.0% determined?

The interpretability threshold is the minimum combined citation rate a future experiment must produce to be considered signal rather than noise. It is calculated as the E016 noise floor ceiling (20.0%) plus 2 percentage points. The 2pp buffer accounts for the possibility that the true noise ceiling is slightly above the observed maximum. Any experiment result at or below 22.0% combined falls within the range that natural platform variability alone could produce.
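The threshold arithmetic described above is short enough to sketch directly. The daily combined rates are the values from the results table; the function name is illustrative.

```python
# Daily combined citation rates from the five-day freeze, in percent.
daily_combined = [13.3, 20.0, 16.7, 16.7, 16.7]
BUFFER_PP = 2.0  # buffer above the observed ceiling, per the article

noise_floor = (min(daily_combined), max(daily_combined))
threshold = noise_floor[1] + BUFFER_PP

def exceeds_noise_floor(combined_rate_pct: float) -> bool:
    """True only if a combined rate is strictly above the threshold."""
    return combined_rate_pct > threshold

print(noise_floor, threshold)        # (13.3, 20.0) 22.0
print(exceeds_noise_floor(22.0))     # False: at the threshold is still noise
print(exceeds_noise_floor(23.3))     # True
```

With 30 checks per day, each extra citation adds about 3.3pp to the combined rate, so the first achievable value above the threshold is 7 of 30 (23.3%).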

Does the Tier 1 / Tier 2 split mean category content is pointless to publish?

No. Category content builds domain authority over time — it attracts backlinks, signals topical depth to Google, and creates the entity associations that eventually support Tier 2 citation as the domain grows. The E016 finding is that category content does not produce AI citation at thegeolab.net’s current authority level, not that it never will. The priority implication is to not expect format optimisation alone to move Tier 2 citation rate at this stage.

The noise floor for thegeolab.net is 13.3%–20.0% combined citation rate. Perplexity is stable at 40% with zero variance — the primary measurement platform for all subsequent experiments. ChatGPT is noisy at 0%–20% — a secondary signal only. Google AIO is absent — a domain authority problem, not a content structure problem.

The Tier 1 / Tier 2 split is absolute: 80% citation rate on proprietary concept queries, 0% on category queries, every day across five days. Format optimisation is not the binding constraint on Tier 2 citation at this domain authority level.

Future experiments must exceed 22.0% combined to produce interpretable results. E003 — heading format — runs next.

The full methodology, five-day dataset, and annotation re-run for E016 are available as a preprint on Zenodo:

Ferreira, A. (2026). Noise Floor Measurement for AI Citation Experiments: A Five-Day Protocol and Diagnostic Framework. Zenodo. https://doi.org/10.5281/zenodo.19869156

Package includes: paper.md (5,376 words), e016_spreadsheet_export.csv (5-day citation log), e016_annotation_rerun_20260428.json (full annotations), e016_aio_check_20260428.txt. Licensed CC BY 4.0.