The measurement step that makes every phase of a GEO content strategy interpretable — and the experiment at The GEO Lab that made it unavoidable.

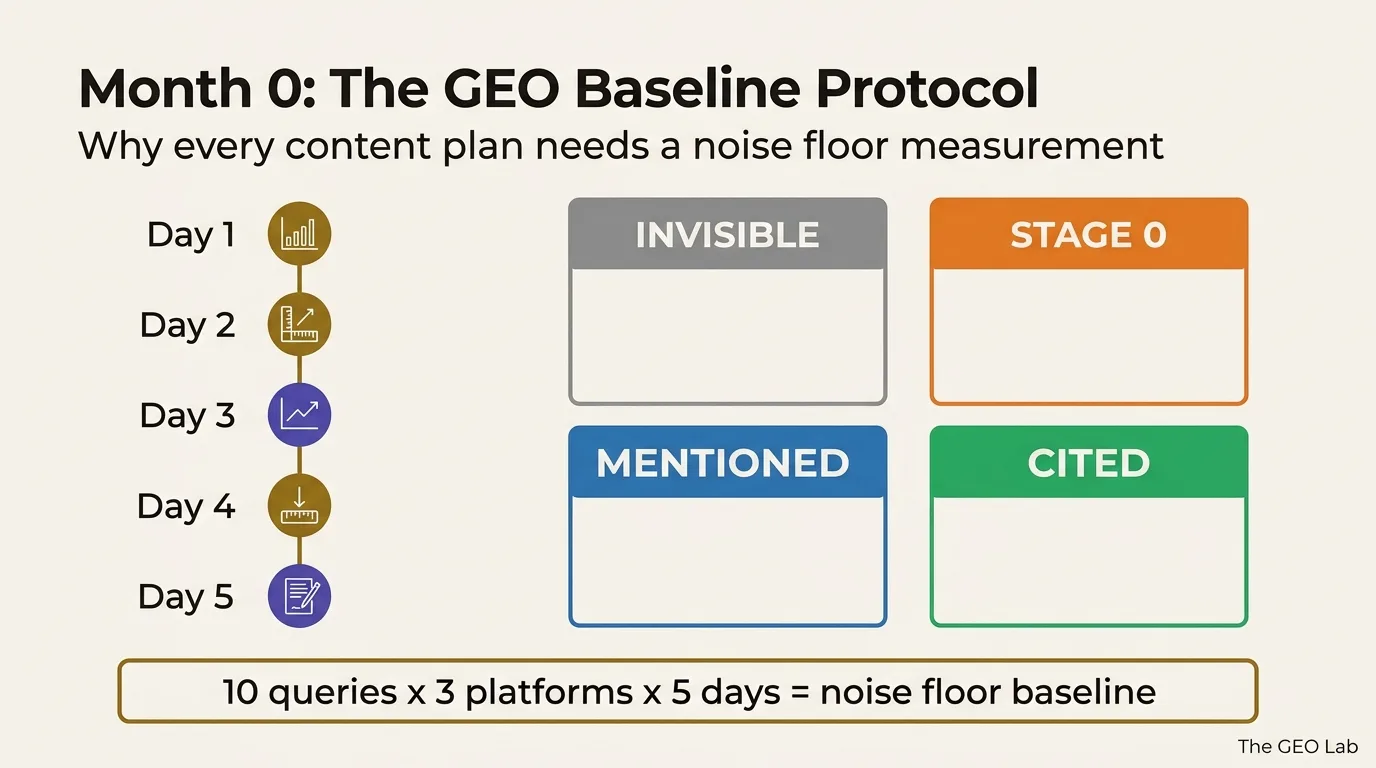

Every 12-month GEO content plan assumes you know your current citation rate. Almost nobody does. Without a pre-intervention baseline, you cannot distinguish citation rate improvement from natural platform variability — the noise floor. Month 0 is five consecutive days of measurement on a fixed query set, across three platforms, before any content changes are deployed. It identifies which of four visibility states your site is in per platform, and whether your Tier 1 or Tier 2 citation gap is the actual constraint. The plan is only interpretable if you know where you started.

The Assumption Every Content Plan Makes

There is a 12-month GEO content architecture template doing the rounds — the kind of phased framework that Search Engine Land and others have described as the next evolution of content strategy. The phased logic is sound: Foundation phase first, Authority phase second, Expansion and Dominance to follow. The content types are sensible. The schema recommendations are defensible.

There is one problem buried in the structure. The citation velocity curve — the projected growth from 12 citations per month to 340 — assumes a known starting point. It assumes you have already measured where you are before the plan begins.

I had published content, applied schema, structured pages for AI retrieval, and had no clear idea whether any of it was working. Not because I wasn’t paying attention. Because I hadn’t established a measurement baseline before making the changes. By the time I started measuring, I had no pre-intervention reference point. I couldn’t tell whether the citation rate I was observing was the result of the content decisions I’d made, or what any site at this stage of development would have received regardless.

That is the noise floor problem. And it is why E016 — the noise floor measurement experiment — is the highest-priority experiment currently running at The GEO Lab. Every other experiment result is uninterpretable without it.

What the Noise Floor Actually Is

The noise floor is the citation rate a site receives from AI platforms without deliberate GEO optimisation. Every site with indexed content receives some AI citations by accident: a query happens to match a passage, a platform retrieves the content because nothing more authoritative exists, or the system is simply in a sampling state that favours newer sources that day.

That accidental citation rate is the noise floor. Any GEO intervention that does not move your citation rate measurably above the noise floor has produced no detectable effect. You may have made the right structural changes. The right entity decisions. The right schema choices. But if the movement is within the natural variability of the platform, you cannot tell whether the change did anything.

The implication for every content plan: if you start publishing Phase 1 content without knowing your noise floor, and your citation rate goes from 8% to 12%, you do not know whether that is a 4-point improvement from your GEO work or a 4-point fluctuation from platform variability. The two are indistinguishable without a baseline.

Establishing the noise floor requires the same fixed query set run across the same platforms on five consecutive days — before any content changes are deployed. A single day’s measurement is not sufficient. AI citation behaviour is variable enough at this scale that one pass can misrepresent the stable underlying rate by several percentage points in either direction. This mirrors the measurement instability that SparkToro’s zero-click research documented for traditional search — single-day snapshots do not represent stable behaviour.


The Four AI Visibility States

Month 0 measurement does not just establish a citation rate. It identifies which of four visibility states your site is in on each platform. The states are distinct. Each requires a different response. Running a content plan optimised for the wrong state wastes the entire phase.

Invisible. The platform’s web search does not surface your domain at all. Schema, content architecture, and retrieval optimisation are irrelevant until this is resolved. The fix is domain authority and index coverage, not content format. This is precisely what happened with ChatGPT in The GEO Lab’s FAQ retrieval experiment: zero citations and zero mentions across 160 queries, with the cause identified as index coverage rather than content quality.

Stage 0. The platform references your brand in synthesised answers without retrieving specific passages or linking to specific pages. Entity recognition is working. Passage-level retrieval is not. The fix is Extractability signals: section-level structure, declarative openings, context-independent chunks. Stage 0 is meaningfully different from Invisible: the brand is known to the model, but content is not being cited as a source.

Mentioned. The platform names your site or brand in a response without a citation link. The content is being used as a background signal but not attributed. In The GEO Lab’s FAQ experiment, Gemini produced a 21.2% mention rate with 0% citations: the content is processed, but the source is not credited. This is an attribution problem, not a retrieval failure, and the fixes differ accordingly.

Cited. The platform retrieves a specific passage, attributes it to your domain, and links to the source page. This is the outcome a 12-month GEO content plan is optimising for, and the only state with a direct traffic mechanism. Citation rate, measured via the 30-check protocol, is the primary GEO outcome variable at The GEO Lab.

A site starting a 12-month Foundation phase while in the Invisible state on ChatGPT will not achieve citation rate improvement on that platform regardless of content quality or schema implementation — because the platform’s web search is not surfacing the domain. Month 0 identifies this on day one.
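
The state check can be sketched in a few lines of Python. This is a minimal illustration, not The GEO Lab's tooling: the function name and the grouped "Stage 0 / Mentioned" label are assumptions. A record that only distinguishes citation, mention, and no appearance cannot separate Stage 0 from Mentioned, so the sketch groups them and leaves that distinction to a manual read of the responses.

```python
from collections import Counter


def classify_platform(outcomes):
    """Classify one platform from a flat list of per-query outcomes.

    Each outcome is one of "citation", "mention", or "none".
    The function name and return labels are illustrative assumptions.
    """
    tally = Counter(outcomes)
    if tally["citation"] > 0:
        return "Cited"
    if tally["mention"] > 0:
        # Named without a link. Telling Stage 0 from Mentioned
        # requires reading the responses themselves.
        return "Stage 0 / Mentioned"
    return "Invisible"


# Hypothetical tallies for three unnamed platforms, not measured data.
platform_a = ["citation"] * 5 + ["none"] * 45
platform_b = ["mention"] * 10 + ["none"] * 40
platform_c = ["none"] * 50

for name, outcomes in [("a", platform_a), ("b", platform_b), ("c", platform_c)]:
    print(name, classify_platform(outcomes))
```

Treating any citation as enough to count as Cited is a simplifying choice; a stricter threshold may be more appropriate for a real baseline.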

Experiment E016: Measuring the Noise Floor at The GEO Lab

Hypothesis: The GEO Lab’s citation rate across the 30-check protocol (10 queries × 3 platforms) has a stable noise floor that can be distinguished from deliberate GEO intervention effects within five consecutive daily measurement cycles.

Method: 10 fixed queries run across Perplexity Sonar Pro, ChatGPT (gpt-4o-mini-search-preview), and Google AI Overviews on five consecutive days. No content changes deployed during the measurement window. Results recorded per-platform and as a combined citation rate.

Why this experiment is first: E016 is the prerequisite for every other experiment at The GEO Lab. Without a noise floor measurement, a citation rate delta of +4pp cannot be attributed to a specific intervention — it may fall within normal platform variability. The E014 Month 1 baseline (+4pp combined from baseline) is the data point that made this explicit: the improvement is real, but its magnitude relative to variability is unknown until E016 completes.

Status: Complete. Five consecutive measurement days ran 13–17 April 2026. Perplexity noise floor: zero variance (4/10 cited on all 5 days, same 4 Tier 1 queries). ChatGPT noise floor: 0–2 citation range. Combined citation rate: 13.3%–20.0%. Full results published in E016: Noise Floor Measurement Results.

The practical consequence of running E016 before anything else: every experiment in the GEO Lab backlog — E003, E006, E014 April baseline, E002 entity density — is now interpretable against the established noise floor. The variability reference exists: Perplexity showed zero citation variance across five days, and the only recorded movement (ChatGPT Day 2) was attributable to web search non-determinism rather than platform-level noise.

This is not a theoretical concern. The E014 Month 1 combined citation rate showed a +4pp improvement from the initial baseline. Whether that improvement exceeds the noise floor, that is, whether it represents a real signal from deliberate GEO intervention rather than natural platform fluctuation, is precisely what E016 answered. The noise floor range of 13.3% to 20.0% combined means a future intervention must produce a combined citation rate above 20.0% to be considered signal.
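
The arithmetic behind that range can be checked directly. With 30 checks per day, 13.3% corresponds to 4 citations and 20.0% to 6, which is consistent with Perplexity's constant 4 plus ChatGPT's 0 to 2. The sketch below is illustrative only: the function names are assumptions, and the strict "above the maximum" test is one reading of the rule stated above.

```python
CHECKS_PER_DAY = 30  # 10 queries x 3 platforms, per the 30-check protocol


def combined_rate(citations, checks=CHECKS_PER_DAY):
    """Combined citation rate as a percentage of all checks run that day."""
    return 100.0 * citations / checks


def is_signal(rate, floor_max):
    """True only when a rate is strictly above the top of the noise floor."""
    return rate > floor_max


floor_min = combined_rate(4)  # 4 of 30 checks cited
floor_max = combined_rate(6)  # 6 of 30 checks cited
print(f"noise floor: {floor_min:.1f}% to {floor_max:.1f}%")

# A day with 6 citations sits on the edge of the range, so it is not signal.
print(is_signal(combined_rate(6), floor_max))
# A day with 7 citations is the first result that clears the range.
print(is_signal(combined_rate(7), floor_max))
```

At this sample size one extra citation moves the combined rate by about 3.3 points, so the smallest result that clears the floor is 7 citations out of 30.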

The Month 0 Protocol

Month 0 is a measurement phase, not a content phase. Nothing is published during Month 0. The protocol is designed to produce a baseline citation rate with enough consecutive data points to estimate the noise floor.

- Fix the query set. Select 10 queries that cover your core entity terms and your primary category terms. These queries must remain identical across every measurement day and every subsequent monthly measurement cycle. Changing queries between cycles invalidates the baseline.

- Run the 30-check protocol on Day 1. 10 queries × 3 platforms (Perplexity Sonar Pro, ChatGPT web search, Google AI Overviews). For each query, record one of three outcomes: citation (named source link), mention (named without link), or no appearance. Then calculate platform-specific citation rates and a combined rate.

- Repeat identically on Days 2–5. Same queries, same platforms, same recording format. No content changes, no schema updates, no new pages published during this window. The five-day window captures day-to-day variability before any signal from GEO interventions enters the data.

- Identify your state per platform. From the five-day results: which platforms are producing citations, which are producing mentions only, and which are producing nothing. A platform that produces zero citations and zero mentions across all five days is in the Invisible state — document this before proceeding.

- Calculate your noise floor range. The range of citation rates across the five days (min to max) is your noise floor estimate. Any future citation rate that falls within this range is uninterpretable. Only a rate above the range maximum warrants confidence that the intervention had a measurable effect.

- Record the baseline. The five-day average per platform, combined rate, and state classification per platform become the Month 0 baseline. All subsequent monthly measurements are compared against this baseline — not against each other.
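
The calculation steps in the protocol above can be sketched as follows. This is a minimal example under stated assumptions: the data layout (one dict per day, mapping platform name to a list of outcomes), the helper names, and the sample counts are all hypothetical and are not The GEO Lab's recording format or results.

```python
from statistics import mean


def make_day(cited_per_platform, queries=10):
    """Build one day's record from hypothetical citation counts per platform."""
    return {
        name: ["citation"] * n + ["none"] * (queries - n)
        for name, n in cited_per_platform.items()
    }


def daily_rate(day):
    """Combined citation rate (%) across every check run on one day."""
    outcomes = [o for platform in day.values() for o in platform]
    return 100.0 * outcomes.count("citation") / len(outcomes)


def month0_baseline(days):
    """Noise floor range (min, max) and five-day average from daily rates."""
    rates = [daily_rate(d) for d in days]
    return {
        "daily": rates,
        "noise_floor": (min(rates), max(rates)),
        "baseline": mean(rates),
    }


# Hypothetical five-day window: citations per platform out of 10 queries.
days = [
    make_day({"platform_a": 3, "platform_b": 0, "platform_c": 0}),
    make_day({"platform_a": 3, "platform_b": 1, "platform_c": 0}),
    make_day({"platform_a": 3, "platform_b": 0, "platform_c": 0}),
    make_day({"platform_a": 2, "platform_b": 0, "platform_c": 0}),
    make_day({"platform_a": 3, "platform_b": 0, "platform_c": 0}),
]

result = month0_baseline(days)
low, high = result["noise_floor"]
print(f"noise floor: {low:.1f}% to {high:.1f}%")
print(f"baseline: {result['baseline']:.1f}%")
```

Keeping the raw per-query outcomes, rather than only the daily totals, means the same records can later be split by platform or by query tier without re-running the checks.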

The full query design process — how to construct Tier 1 and Tier 2 query sets, how to record results across platforms, and how to interpret the monthly comparison — is documented in How to Measure AI Citation Rate.

Tier 1 vs Tier 2: The Query Split That Matters

Not all queries in the 10-query baseline set are equivalent. The distinction between Tier 1 and Tier 2 queries produces a diagnostic that a single combined citation rate cannot provide.

| Query type | What it targets | What it tells you |
|---|---|---|
| Tier 1 | Proprietary terms: framework names, methodology labels, brand-specific concepts associated exclusively with your site | Whether entity recognition is working. A site that is cited only on Tier 1 queries has entity clarity but no category authority: AI systems know who you are but don’t reach for you on general category questions. |
| Tier 2 | Category terms: the generic problem your content addresses, where you compete with established sources | Whether your content has category authority. A site that is cited only on Tier 2 queries has category presence without entity clarity: AI systems reach for the content but don’t attribute it to a recognised brand entity. |

The GEO Lab’s E014 Month 1 baseline measurement found exactly one citation across 30 calls, on a proprietary framework query — a Tier 1 citation with zero Tier 2 citations. That result is a specific diagnostic: entity recognition is established for the GEO Stack framework, but category authority for generalised GEO queries is not yet contributing to citation rate. The content plan priority that follows from that finding is different from what a combined citation rate alone would suggest.

The Month 0 Tier split produces the decision rule for which phase of a 12-month plan is your actual starting point. If Tier 1 citations are present but Tier 2 are absent, entity work is done and the priority is category authority content. If both are absent, entity declaration work is the correct Month 1 priority. If both are present, the question is which phase’s content types are underperforming relative to query intent coverage.
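
Written as code, the decision rule looks like the sketch below. The function name and return strings are illustrative assumptions. The rule as stated covers three cases; the fourth (Tier 2 citations without Tier 1) appears in the table above but has no stated priority, so the sketch flags it rather than inventing one.

```python
def starting_priority(tier1_cited, tier2_cited):
    """Map the Month 0 Tier split to a starting priority.

    Arguments are booleans: whether any Tier 1 / Tier 2 query was cited.
    """
    if tier1_cited and not tier2_cited:
        return "category authority content"
    if not tier1_cited and not tier2_cited:
        return "entity declaration work"
    if tier1_cited and tier2_cited:
        return "review content types against query intent coverage"
    # Tier 2 only: category presence without entity clarity.
    # The stated rule gives no priority for this case.
    return "not covered by the stated rule"


# The pattern described for E014 Month 1: Tier 1 cited, Tier 2 not.
print(starting_priority(tier1_cited=True, tier2_cited=False))
```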

What Practitioners Say

“The noise floor framing resolves an argument I’ve had with clients for eighteen months. They see a citation rate increase and attribute it to their content investment. Without a pre-intervention baseline and variability estimate, that attribution is unjustified. Having a structured Month 0 protocol gives teams the reference point they need before any phase of a GEO plan is evaluated.”

“The four visibility states — Invisible, Stage 0, Mentioned, Cited — are the clearest diagnostic framework I’ve seen for explaining to a client why their GEO content plan isn’t working. Knowing which state you’re in on each platform before writing a single piece of Foundation phase content changes which work gets prioritised entirely.”

Frequently Asked Questions

What is Month 0 in a GEO content plan?

Month 0 is the measurement phase that precedes any deliberate GEO content intervention. It establishes your baseline citation rate across Perplexity, ChatGPT, and Google AI Overviews using a fixed 10-query set run across five consecutive days. Without this baseline, you cannot distinguish citation rate improvement caused by content changes from natural platform variability — the noise floor.

What is the noise floor in GEO measurement?

The noise floor is the citation rate your site receives without deliberate GEO optimisation: accidental citations from query-passage coincidences, platform variability, and existing content quality. Any GEO intervention that does not move your citation rate measurably above the noise floor has produced no detectable effect. Five consecutive days of measurement on identical query sets establish the variability range that defines the floor.

What are the four AI visibility states?

Invisible: the platform does not surface your domain — schema and content structure are irrelevant. Stage 0: the platform references your brand without retrieving specific passages or links. Mentioned: the platform names your site without attribution links — content is used as background signal. Cited: the platform retrieves a specific passage, attributes it, and links to the source. Each state requires a different intervention. Running Foundation phase content while Invisible on a platform produces no citation improvement on that platform.

How many queries do you need for a GEO baseline?

The GEO Lab 30-check protocol uses 10 fixed queries × 3 platforms. For a Month 0 baseline, this set must run on five consecutive days before any content changes are deployed. A single day’s measurement is insufficient — AI citation behaviour is variable enough at this sample size that one pass can misrepresent the stable underlying rate.

What is the difference between Tier 1 and Tier 2 citation queries?

Tier 1 queries target proprietary terms: framework names, methodology labels, and brand-specific concepts associated exclusively with your site. Tier 2 queries target category terms: the generic problem your content addresses, where established sources compete. A site that is cited only on Tier 1 queries has entity recognition but no category authority. Month 0 identifies which gap exists before you decide which phase of a GEO plan is your actual starting point.

Is this different from a standard SEO audit?

Yes. A standard SEO audit checks page-level technical health, backlink profile, and keyword rankings. Month 0 GEO measurement checks section-level citation rate across AI retrieval platforms — a different measurement unit, different platforms, and a different outcome variable. Standard audit tooling is not designed to measure AI citation rate and produces no data relevant to establishing a GEO noise floor.

A 12-month GEO content plan is only interpretable if you know where you started. Month 0 is five consecutive days of fixed-query measurement before any content is published to plan specifications.

It tells you three things no content plan template provides: your noise floor (the variability range within which delta is uninterpretable), your visibility state per platform (which determines which phase is your actual starting point), and your Tier 1 vs Tier 2 citation gap (which determines which content type is the binding constraint).

Experiment E016 at The GEO Lab established this baseline in April 2026. The noise floor — 13.3% to 20.0% combined citation rate with zero content changes — is now the reference against which all future GEO interventions are measured.
