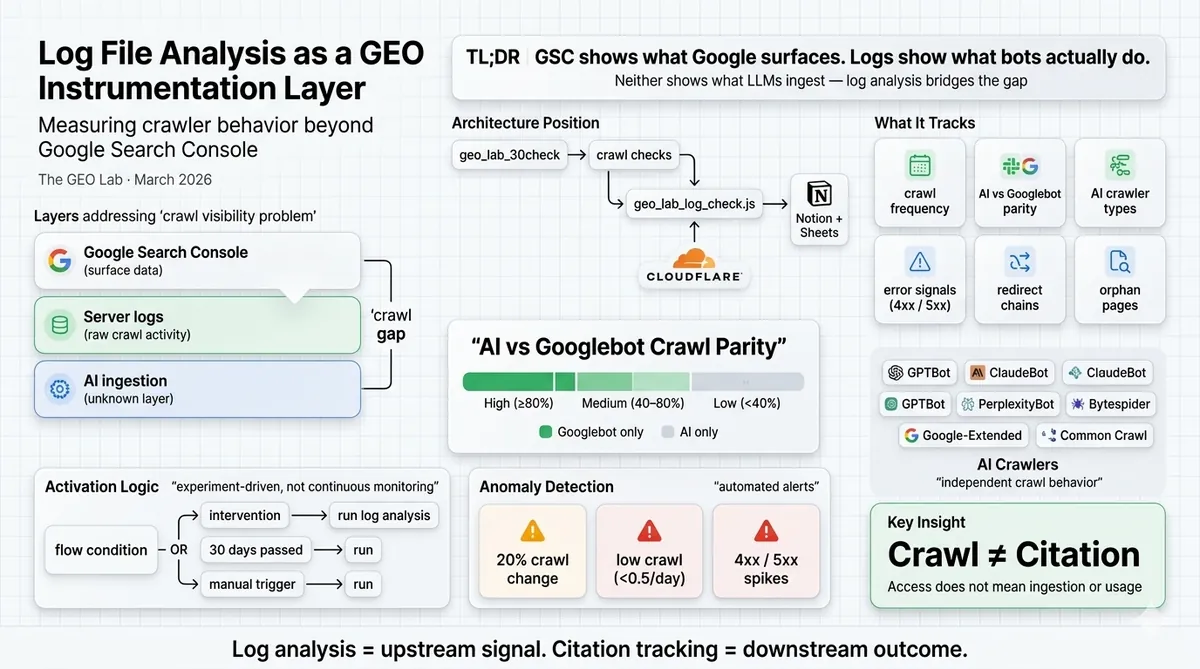

GSC tells you what Google surfaced. Server logs tell you what Googlebot actually did. Neither tells you what an LLM ingested. Log file analysis bridges that gap by measuring Googlebot and AI crawler behaviour independently — crawl frequency, parity between bot types, orphan pages, and error signals. The GEO Lab implements this as a conditional component of the 30-check protocol, activated around interventions rather than running continuously.

The gap this solves

Google Search Console shows you what Google chose to surface. Server logs show you what Googlebot actually did. Neither shows you what an LLM ingested.

That three-way split is the crawl visibility problem at the centre of GEO instrumentation. Most SEO practitioners treat GSC as ground truth. It isn’t. It’s a sampled, delayed, Google-interpreted view of crawler activity. Log files are the raw signal underneath it.

For GEO, the problem compounds. AI crawlers such as GPTBot, ClaudeBot, PerplexityBot, and Bytespider operate on completely different cadences from Googlebot. They have different content preferences, different frequency patterns, and no equivalent of GSC to surface what they did or didn’t access. You cannot manage what you cannot see.

Log analysis is the verification layer for GEO Stack Layer 1: it confirms whether AI crawlers are reaching pages the way the crawlability architecture predicts, and surfaces mismatches before they appear as citation drops.

This post documents how log file analysis is implemented in the GEO Lab stack, what it measures, and why AI vs Googlebot parity is the metric that matters most for GEO experiments.

Architecture position

The GEO Lab automation stack runs a 30-check protocol across all active experiments. Log file analysis is one component of that protocol, implemented as geo_lab_log_check.js.

Log anomalies feed into specific branches of the AI citation rate measurement protocol — a gap between crawler hit frequency and citation outcomes triggers the crawlability diagnostic branch before any content hypothesis is tested.

The log check sits inside the crawl checks layer, runs conditionally (not on every check), and writes results to both the Notion experiment database and the GEO Lab Google Sheets tracker.

geo_lab_30check.js
  └── runCitationChecks()
  └── runRankingChecks()
  └── runCrawlChecks()
        └── geo_lab_log_check.js  ← conditional
  └── writeToNotion()
  └── writeToSheets()

What the script tracks

Googlebot crawl frequency

Hits per day to the experiment URL, averaged across the log window (default 30 days). This is compared against a stored baseline — the first time the script runs for an experiment, it sets the current frequency as baseline. Subsequent runs measure delta.

This creates the before/after structure that matches GEO Lab’s experimental design philosophy: every intervention should have a documented baseline before it’s applied.
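A minimal sketch of the baseline-and-delta logic described above. The function names and the shape of a log entry (`bot`, `url`) are assumptions for illustration; the internals of geo_lab_log_check.js are not shown in this post.

```javascript
// Hypothetical helpers; entry shape { bot, url } is an assumption.

// Average Googlebot hits per day to one URL across the log window.
function crawlFrequency(entries, url, windowDays = 30) {
  const hits = entries.filter(
    (e) => e.bot === "Googlebot" && e.url === url
  ).length;
  return hits / windowDays;
}

// First run: store the current frequency as the baseline.
// Later runs: report the percentage change against the stored baseline.
function compareToBaseline(current, storedBaseline) {
  if (storedBaseline == null) {
    return { baseline: current, deltaPct: null, isFirstRun: true };
  }
  // A zero baseline has no meaningful percentage change.
  const deltaPct =
    storedBaseline === 0
      ? null
      : ((current - storedBaseline) / storedBaseline) * 100;
  return { baseline: storedBaseline, deltaPct, isFirstRun: false };
}
```

Keeping the baseline write separate from the delta calculation means a re-run never silently overwrites the pre-intervention number.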

AI vs Googlebot parity

This is the GEO-specific metric. It compares AI crawler hits (across all tracked bots) against Googlebot hits and assigns a parity category:

| Category | Condition |
|---|---|
| High Parity | AI hits ≥80% of Googlebot hits |
| Medium Parity | AI hits ≥40% and <80% of Googlebot hits |
| Low Parity | AI hits <40% of Googlebot hits |
| Googlebot Only | No AI crawlers detected |
| AI Only | AI crawlers present, Googlebot absent |
| Neither Crawling | No bots detected at all |
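The categories can be expressed as a small classification function. This is a sketch, not the script's actual code: the function name is hypothetical, and it assumes that a ratio of exactly 80% counts as High Parity and exactly 40% as Medium Parity.

```javascript
// Hypothetical classifier for the parity categories.
// Assumption: the 80% and 40% boundaries belong to the higher category.
function classifyParity(aiHits, googlebotHits) {
  if (aiHits === 0 && googlebotHits === 0) return "Neither Crawling";
  if (googlebotHits === 0) return "AI Only";
  if (aiHits === 0) return "Googlebot Only";

  const ratio = aiHits / googlebotHits;
  if (ratio >= 0.8) return "High Parity";
  if (ratio >= 0.4) return "Medium Parity";
  return "Low Parity";
}
```

The absence checks come first so that the ratio is only computed when both crawler types are present, which also avoids dividing by zero.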

The pattern emerging from early experiments: pages that rank well get crawled by Googlebot at a predictable cadence. Pages that get cited by LLMs often show a different crawl pattern — sometimes lower frequency, sometimes by completely different bot signatures, sometimes with strong parity despite weak organic rankings.

The implication: Googlebot crawl frequency is not a reliable proxy for AI crawler access. These need to be measured independently.

AI crawlers tracked

The script classifies the following bot signatures:

- GPTBot (OpenAI)

- ClaudeBot (Anthropic)

- PerplexityBot

- YouBot (You.com)

- anthropic-ai

- cohere-ai

- Google-Extended (Google AI training)

- CCBot (Common Crawl — training data source)

- Bytespider (ByteDance)

- PetalBot (Huawei)

- Applebot-Extended

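This list is maintained in the script config and updated as new crawlers are identified. Two entries need care when read from logs: Google-Extended and Applebot-Extended are robots.txt control tokens rather than separate HTTP user-agent strings, so they are unlikely to appear as distinct hits in access logs.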
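One simple way to classify hits against this list is case-insensitive substring matching on the User-Agent header. The sketch below assumes that approach; the function name and return shape are hypothetical, and matching on the header alone does not verify that a request really came from the named crawler (user agents can be spoofed).

```javascript
// Signatures taken from the list in this post.
const AI_BOT_SIGNATURES = [
  "GPTBot", "ClaudeBot", "PerplexityBot", "YouBot", "anthropic-ai",
  "cohere-ai", "Google-Extended", "CCBot", "Bytespider", "PetalBot",
  "Applebot-Extended",
];

// Hypothetical classifier: returns which bucket a User-Agent string falls into.
function classifyUserAgent(userAgent) {
  const ua = (userAgent || "").toLowerCase();
  const aiBot = AI_BOT_SIGNATURES.find((sig) => ua.includes(sig.toLowerCase()));
  if (aiBot) return { type: "ai", bot: aiBot };
  if (ua.includes("googlebot")) return { type: "googlebot", bot: "Googlebot" };
  return { type: "other", bot: null };
}
```

AI signatures are checked before Googlebot so that a Google AI token is never counted as an ordinary Googlebot hit.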

Error and redirect signals

- 4xx count: Client errors served to bots on the experiment URL cluster

- 5xx count: Server errors — spikes here are invisible in GSC

- Redirect chains: Consecutive redirects within a 5-second window, grouped into chains and counted by hop count

Redirect chains are particularly relevant for GEO: LLM crawlers may have lower tolerance for redirect hops than Googlebot, and may abandon crawl paths that Googlebot would still follow.
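The chain grouping can be sketched as a single pass over time-ordered entries. This is an illustration under stated assumptions: entries are already filtered to one crawler or client, each has a numeric `timestamp` in milliseconds and a `status`, and only 301, 302, 303, 307, and 308 count as redirects. The function name is hypothetical.

```javascript
const REDIRECT_STATUSES = new Set([301, 302, 303, 307, 308]);

// Groups consecutive redirect responses that fall within windowMs of each
// other into chains, then counts chains by hop count.
function groupRedirectChains(entries, windowMs = 5000) {
  const sorted = [...entries].sort((a, b) => a.timestamp - b.timestamp);
  const chains = [];
  let current = [];

  for (const entry of sorted) {
    const isRedirect = REDIRECT_STATUSES.has(entry.status);
    const prev = current[current.length - 1];
    if (isRedirect && prev && entry.timestamp - prev.timestamp <= windowMs) {
      current.push(entry); // continues the open chain
      continue;
    }
    // Chain broken: keep it only if it had at least two hops.
    if (current.length > 1) chains.push(current);
    current = isRedirect ? [entry] : [];
  }
  if (current.length > 1) chains.push(current);

  const byHopCount = {};
  for (const chain of chains) {
    byHopCount[chain.length] = (byHopCount[chain.length] || 0) + 1;
  }
  return { chainCount: chains.length, byHopCount };
}
```

A single isolated redirect is not counted as a chain here; whether the real script does the same is not stated in this post.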

Orphan page detection

Pages that bots accessed with no HTTP referer and very low frequency (≤2 hits in the window). These are candidates for pages that have no internal links pointing to them — reachable by direct URL or old sitemap entries, but not discoverable via normal crawl paths.

This is a validation signal for the internal linking automation layer. If the automation is working, orphan page count should trend toward zero across successive runs.
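A minimal sketch of the orphan heuristic, assuming the input has already been filtered to bot hits and that each entry carries a `url` and an optional `referer` (with `-` meaning none, as in common log formats). The function name is hypothetical.

```javascript
// Returns URLs that bots reached with no referer on any hit and at most
// maxHits hits in the window: candidates for pages with no internal links.
function findOrphanCandidates(botEntries, maxHits = 2) {
  const stats = new Map();
  for (const entry of botEntries) {
    const s = stats.get(entry.url) || { hits: 0, hasReferer: false };
    s.hits += 1;
    if (entry.referer && entry.referer !== "-") s.hasReferer = true;
    stats.set(entry.url, s);
  }
  return [...stats]
    .filter(([, s]) => !s.hasReferer && s.hits <= maxHits)
    .map(([url]) => url)
    .sort();
}
```

These are candidates only: many crawlers send no referer at all, so the low-frequency condition carries most of the signal.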

Activation conditions

The script runs when any of the following are true:

- An intervention of one of these types has been applied in the last 30 days: internal_linking_change, migration, new_content_launch, robots_txt_change, noindex_change, redirect_update, schema_markup_change

- 30 or more days have passed since the last log check

- The --force flag is passed via CLI

This conditional structure keeps log analysis experiment-driven. It’s not a monitoring dashboard; it’s a measurement tool that activates around interventions.
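A minimal sketch of the activation check, assuming three triggers: a recent intervention of a listed type, 30 or more days since the last log check, or the --force flag. The function name, the input shape, and the choice to treat an experiment with no previous log check as due are all assumptions.

```javascript
const INTERVENTION_TYPES = new Set([
  "internal_linking_change", "migration", "new_content_launch",
  "robots_txt_change", "noindex_change", "redirect_update",
  "schema_markup_change",
]);
const DAY_MS = 24 * 60 * 60 * 1000;

// Hypothetical gate: decides whether the log check should run for an experiment.
function shouldRunLogCheck({
  interventions = [],
  lastLogCheck = null,
  force = false,
  now = new Date(),
}) {
  if (force) return true;
  const cutoff = now.getTime() - 30 * DAY_MS;

  const recentIntervention = interventions.some(
    (i) =>
      INTERVENTION_TYPES.has(i.type) &&
      new Date(i.appliedAt).getTime() >= cutoff
  );
  if (recentIntervention) return true;

  // Assumption: an experiment that has never been checked is due.
  if (lastLogCheck === null) return true;
  return new Date(lastLogCheck).getTime() <= cutoff;
}
```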

Anomaly detection

An anomaly is flagged if any of the following conditions are met:

- Crawl frequency has changed by more than 20% from the stored baseline

- Crawl frequency has dropped below 0.5 hits/day (under-crawl threshold)

- More than 10 bot-facing 4xx errors in the window

- More than 5 bot-facing 5xx errors in the window

Sustained drops in crawler frequency without corresponding ranking changes are one of the clearest early signals of degrading GEO visibility at the crawlability layer: they appear in logs weeks before any citation metric reflects the change.
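The four thresholds translate directly into a check that also collects the reason text. This is a sketch with hypothetical names; it assumes the baseline comparison is skipped when no positive baseline exists yet.

```javascript
// Hypothetical anomaly check using the thresholds listed in this section.
function detectAnomalies({ crawlFreq, baseline, count4xx, count5xx }) {
  const reasons = [];

  // Assumption: skip the baseline comparison when no positive baseline is stored.
  if (baseline > 0) {
    const changePct = Math.abs((crawlFreq - baseline) / baseline) * 100;
    if (changePct > 20) {
      reasons.push(`Crawl frequency changed ${changePct.toFixed(1)}% from baseline`);
    }
  }
  if (crawlFreq < 0.5) reasons.push("Crawl frequency below 0.5 hits/day");
  if (count4xx > 10) reasons.push(`${count4xx} bot-facing 4xx errors in window`);
  if (count5xx > 5) reasons.push(`${count5xx} bot-facing 5xx errors in window`);

  return { anomaly: reasons.length > 0, reasons };
}
```

Returning every matching reason, not just the first, gives the alert enough context to distinguish an under-crawled page from an erroring one.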

When an anomaly is flagged, the script writes to both:

- Notion: Log Anomaly Flag = true with reason text

- GEO Lab Sheets tracker: Alerts tab with full metric context

Notion schema additions

Log analysis results write to Section 9 of the GEO Lab Notion database, which extends the existing 34-field schema with 11 new fields:

| Field | Type |
|---|---|
| Log Last Run Date | Date |
| Crawl Freq Target Page | Number |
| Crawl Freq Baseline | Number |
| Orphan Page Count | Number |
| Redirect Chain Count | Number |
| 4xx Count | Number |
| 5xx Count | Number |
| AI Crawler Hit Count | Number |
| AI vs Googlebot Parity | Select |
| Log Anomaly Flag | Checkbox |
| Log Source | Select |
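As an illustration, the 11 fields could be mapped to a `properties` object in the shape the public Notion API uses for date, number, select, and checkbox values. The function name and the fields of the `result` object are hypothetical, and the actual write logic in the script is not shown in this post.

```javascript
// Hypothetical mapper from a log-check result to Notion page properties.
function buildNotionProperties(result) {
  const number = (value) => ({ number: value });
  const select = (name) => ({ select: { name } });
  return {
    "Log Last Run Date": { date: { start: result.runDate } }, // ISO 8601 date
    "Crawl Freq Target Page": number(result.crawlFreq),
    "Crawl Freq Baseline": number(result.baseline),
    "Orphan Page Count": number(result.orphanCount),
    "Redirect Chain Count": number(result.redirectChainCount),
    "4xx Count": number(result.count4xx),
    "5xx Count": number(result.count5xx),
    "AI Crawler Hit Count": number(result.aiHits),
    "AI vs Googlebot Parity": select(result.parity),
    "Log Anomaly Flag": { checkbox: result.anomaly },
    "Log Source": select(result.logSource),
  };
}
```

The property names must match the database column names exactly, so keeping them in one mapper makes schema changes a single-file edit.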

What this doesn’t tell you

Log files show access — not comprehension. A bot hitting a URL confirms it was crawled. It does not confirm the content was parsed correctly, indexed, or used for training or citation.

Converting confirmed crawl events into citation likelihood estimates requires the retrieval probability framework — the model that separates access frequency from extraction likelihood and explains why high crawl volume does not guarantee citation.

Confirming actual use requires the citation tracking layer (also in the 30-check protocol): querying AI systems directly with probe questions and recording whether they cite the experiment URL.

Log analysis and citation tracking are complementary. Log analysis is the upstream signal — it tells you whether the bots can reach the content. Citation tracking is the downstream outcome — it tells you whether reaching it resulted in use.

Both are required for a properly instrumented GEO experiment.

Running the script

node geo_lab_log_check.js

node geo_lab_log_check.js --experiment E001

node geo_lab_log_check.js --force

node geo_lab_log_check.js --dry-run --verbose

Required environment variables: CLOUDFLARE_ZONE_ID, CLOUDFLARE_API_TOKEN, NOTION_DATABASE_ID. Full setup in the README.
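A small pre-flight check can fail fast when one of these variables is missing. This is a sketch only; the function name is hypothetical and the README may describe a different setup flow.

```javascript
const REQUIRED_ENV_VARS = [
  "CLOUDFLARE_ZONE_ID",
  "CLOUDFLARE_API_TOKEN",
  "NOTION_DATABASE_ID",
];

// Returns the names of required variables that are unset or blank.
function findMissingEnvVars(env) {
  return REQUIRED_ENV_VARS.filter(
    (name) => !env[name] || String(env[name]).trim() === ""
  );
}

// Example usage at the top of a script:
//   const missing = findMissingEnvVars(process.env);
//   if (missing.length > 0) {
//     console.error(`Missing environment variables: ${missing.join(", ")}`);
//     process.exit(1);
//   }
```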

Related

- GEO Lab 30-Check Protocol: geo_lab_30check.js

- Cloudflare Markdown for Agents: AI crawler access layer

- Citation Rate Tracking: downstream outcome measurement

- Internal Linking Automation: intervention layer validated by orphan page count

Frequently Asked Questions

What is log file analysis in the context of GEO?

Log file analysis for GEO examines server access logs to measure how Googlebot and AI crawlers (GPTBot, ClaudeBot, PerplexityBot) access your content. Unlike GSC which shows what Google chose to surface, logs show what crawlers actually did — and critically, whether AI crawlers are accessing the same pages as Googlebot or different ones entirely.

What is AI vs Googlebot crawl parity?

Crawl parity measures whether AI crawlers and Googlebot access the same pages at similar frequencies. Categories range from High Parity (AI hits at 80%+ of Googlebot) to AI Only (AI crawlers present but Googlebot absent). Early GEO Lab experiments show these crawlers often make independent access decisions.

Which AI crawlers does the GEO Lab track?

The GEO Lab tracks 11 AI crawler signatures: GPTBot (OpenAI), ClaudeBot (Anthropic), PerplexityBot, YouBot (You.com), anthropic-ai, cohere-ai, Google-Extended (Google AI training), CCBot (Common Crawl), Bytespider (ByteDance), PetalBot (Huawei), and Applebot-Extended (Apple AI).

When does the log analysis script run?

The script runs conditionally — not on every check. It activates when an intervention has been applied in the last 30 days, or when 30+ days have passed since the last log check. This keeps measurement experiment-driven rather than generating noise on every run.

How does log file analysis complement citation tracking?

Log analysis is the upstream signal — it confirms whether bots can reach the content. Citation tracking is the downstream outcome — it confirms whether reaching the content resulted in citation. Both are required for a properly instrumented GEO experiment.

- GSC is not ground truth for GEO. It shows what Google surfaced, not what crawlers did. Logs show actual access patterns — including AI crawlers GSC doesn’t cover.

- AI vs Googlebot parity is the metric that matters. These crawlers make independent decisions. Measuring both reveals whether your content is visible to AI systems or only to Google.

- Log analysis should be experiment-driven. Run it around interventions (linking changes, new content, migrations) with documented baselines — not as a continuous monitoring dashboard.

- Logs show access, not comprehension. A crawled page isn’t necessarily a cited page. Pair log analysis with citation tracking for the full picture.

- Orphan detection validates internal linking. If automation is working, orphan page count should trend toward zero across successive runs.