The seven components that determine whether AI systems can process your content at the passage level — and the self-test that finds failures in under five minutes.

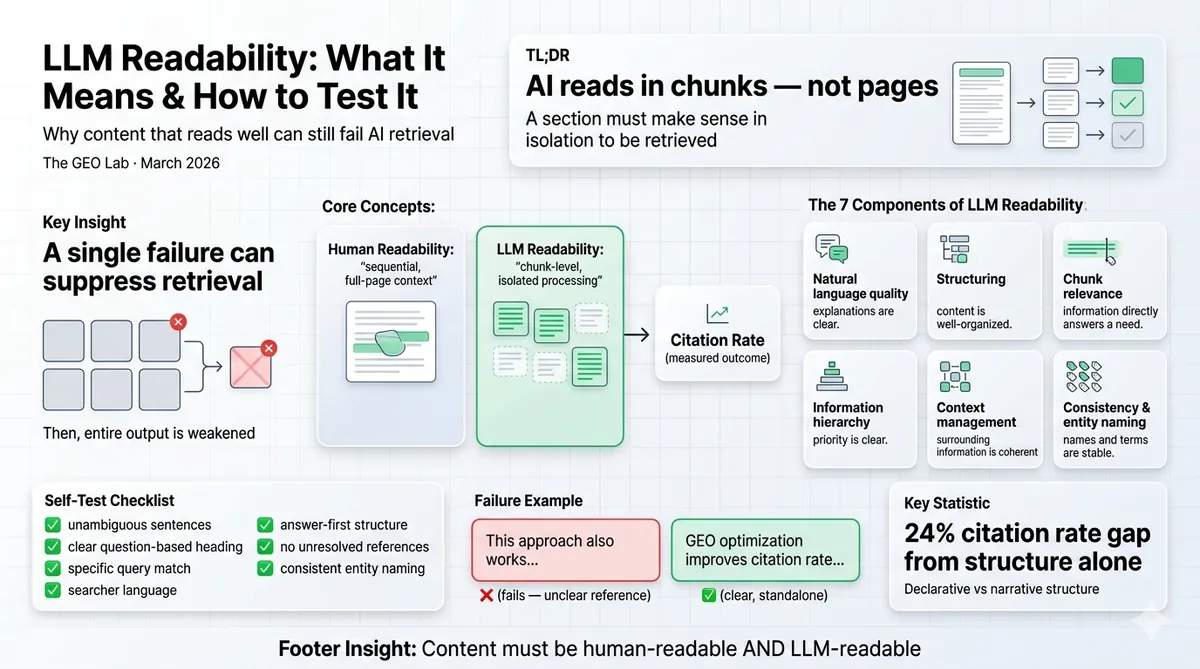

LLM readability is the degree to which content can be efficiently processed at the passage level during AI retrieval. It is not the same as human readability. A page that reads well top-to-bottom can fail LLM readability if individual sections depend on surrounding context to make sense. Seven components determine LLM readability, each mapping to a specific GEO Stack layer. A failure in any one component can suppress retrieval even when the other six are strong.

What Is LLM Readability?

I sent a well-written page to a colleague. He read it, nodded, said the structure made sense. Then I ran the same page through the 30-check protocol. Zero citations across 50 Perplexity queries. The page wasn’t badly written. Every section made sense in sequence. The problem was that no section made sense in isolation — and AI retrieval systems only ever see sections in isolation.

LLM readability measures how efficiently an AI retrieval system can process a content section independently and produce a useful embedding from it. When ChatGPT, Perplexity, or Google AI Overviews retrieve content to build an answer, they process individual chunks — not full pages. Each chunk must independently produce a coherent vector representation that accurately captures the section’s meaning.
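One rough way to review a page as a retrieval system might see it is to split it at each H2 and read every section on its own. The sketch below assumes the page is Markdown with `## ` headings. The function name `split_h2_sections` is hypothetical, and real retrieval systems chunk in ways that are not public (often by token count rather than by heading). Treat it as a review aid, not a model of any particular engine.

```python
def split_h2_sections(markdown: str) -> list[tuple[str, str]]:
    """Split a Markdown page into (heading, body) pairs at each '## ' heading.

    Text before the first H2 is ignored; deeper headings ('### ') stay in the body.
    """
    sections: list[tuple[str, str]] = []
    heading = None
    body_lines: list[str] = []
    for line in markdown.splitlines():
        if line.startswith("## "):
            # Close the previous section before starting a new one.
            if heading is not None:
                sections.append((heading, "\n".join(body_lines).strip()))
            heading = line[3:].strip()
            body_lines = []
        elif heading is not None:
            body_lines.append(line)
    if heading is not None:
        sections.append((heading, "\n".join(body_lines).strip()))
    return sections


page = """Intro paragraph.
## What is LLM readability?
LLM readability is the degree to which content can be processed at the passage level.
## Why it matters
This approach also works for product pages.
"""
for heading, body in split_h2_sections(page):
    print(f"[{heading}] {body}")
```

Read on its own, the second section already shows the problem this page describes: “This approach” has nothing to resolve to.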

The gap between human readability and LLM readability is not subtle. It was the single largest predictor of citation failure I found in Experiment 001. Pages with strong human readability (clear argument, good flow, correct grammar) consistently failed at passage-level retrieval because their sections assumed context the AI system did not have.

The diagnostic question: “If this section were extracted by an AI system with no surrounding context, would it produce a coherent, accurate, attributable answer to the query it is meant to address?”

If the answer is no for any section, that section has an LLM readability failure.

The Seven Components of LLM Readability

Each component maps to a specific GEO Stack layer. A failure in any single component can prevent retrieval even if the other six are strong — which is why this is a diagnostic checklist, not a scoring rubric.

- Natural language quality (Layer 2, Extractability): Grammar, clarity, and sentence-level coherence. Ambiguous phrasing produces ambiguous embeddings. A sentence that could mean two things produces a vector that aligns weakly with either.

- Structuring (Layers 1–2): Heading hierarchy, section boundaries, and visual organisation. Retrieval systems use structural markers to identify chunk boundaries. A heading that names a vague topic (“Overview”, “Background”) provides a weaker retrieval signal than one that answers a specific question.

- Chunk relevance (Layer 1, Retrieval Probability): Does each chunk address a specific, searchable question? A section that addresses no clear query has no retrieval target. A section titled “Additional Considerations” that mixes three related topics retrieves weakly for all three.

- User intent match (Layer 1): Does the section’s opening sentence use vocabulary a searcher would type? Intent misalignment suppresses retrieval even when the content technically answers the question. The section needs to sound like an answer, not a textbook explanation.

- Information hierarchy (Layer 2): Is the most important claim stated first? Answer-first structure retrieves at higher rates than context-first structure. This is the core finding from Experiment 001, where this single variable produced a 24 percentage point gap in citation rate.

- Context management (Layer 2): Can the section be understood without reading adjacent sections? Unresolved references (“it”, “this”, “the system”), “as mentioned above” phrasing, and implicit context create embedding failures. An LLM processing a chunk in isolation cannot resolve what “it” refers to, and a chunk with unresolvable references produces a degraded embedding.

- Consistency and specificity (Layer 3, Entity Reinforcement): Are entities named consistently? Is the primary entity explicit rather than implied? Using “GEO”, “generative engine optimisation”, and “AI search optimisation” interchangeably across sections fragments the entity signal and weakens the semantic model the retrieval system builds of your content.
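Two of these components, structuring and consistency, can be partially checked by script. The sketch below assumes a page already split into `{heading: body}` sections. `VAGUE_HEADINGS`, `is_vague_heading` and `entity_variants_used` are hypothetical names, the heading list contains only the examples named on this page, and neither check can judge whether a heading actually answers a question. It is a first-pass filter, not a substitute for the self-test.

```python
import re

# Hypothetical list: the generic topic headings named on this page.
VAGUE_HEADINGS = {"overview", "background", "additional considerations"}


def is_vague_heading(heading: str) -> bool:
    """Structuring: flag headings that only name a generic topic."""
    return heading.strip().rstrip(":").lower() in VAGUE_HEADINGS


def entity_variants_used(sections: dict[str, str], variants: list[str]) -> dict[str, list[str]]:
    """Consistency: map each entity variant to the sections whose body uses it."""
    usage: dict[str, list[str]] = {}
    for heading, body in sections.items():
        for variant in variants:
            # Whole-word, case-insensitive match, so "GEO" does not match inside "geography".
            pattern = r"\b" + re.escape(variant) + r"\b"
            if re.search(pattern, body, flags=re.IGNORECASE):
                usage.setdefault(variant, []).append(heading)
    return usage


sections = {
    "What is GEO?": "GEO is the practice of ...",
    "How does generative engine optimisation work?": "Generative engine optimisation targets ...",
    "Overview": "AI search optimisation tools ...",
}
variants = ["GEO", "generative engine optimisation", "AI search optimisation"]

print("Vague headings:", [h for h in sections if is_vague_heading(h)])
usage = entity_variants_used(sections, variants)
if len(usage) > 1:
    print("Entity signal split across variants:", usage)
```

In this example the check reports `Overview` as a vague heading and finds all three variants in use across three sections, which is the fragmentation the consistency component warns about. Choosing one canonical term and using it in every section clears the second warning.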

The LLM Readability Self-Test

Run this on every H2 section before publishing. Start with context management: it is the quickest failure to test for, the one most invisible to the author, and the one that costs the most at retrieval.

Seven-question self-test — one per component

- Is every sentence grammatically unambiguous — could it mean only one thing? (Natural language quality)

- Does this section have a clear heading that answers a specific question rather than naming a topic? (Structuring)

- Can you state in one sentence the specific query this section answers? (Chunk relevance)

- Does the opening sentence use vocabulary a searcher would type — not academic or jargon-heavy phrasing? (Intent match)

- Is the core claim in the first sentence, not buried in paragraph two or three? (Information hierarchy)

- Can someone understand this section without having read the rest of the page? No unresolved pronouns, no “as discussed above”? (Context management)

- Is the primary entity named explicitly and consistently — same term, every paragraph? (Consistency)
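When the self-test is run across many sections, it can help to record the answers as data. The sketch below is a hypothetical encoding: `Check`, `SELF_TEST` and `failed_components` are illustrative names, not part of any tool, and the questions are shortened from the list above. It treats the test as a checklist rather than a score: a section passes only when every answer is yes, and an unanswered question counts as a failure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Check:
    component: str
    layer: str
    question: str


# One check per component, in the order of the self-test above.
SELF_TEST = [
    Check("Natural language quality", "Layer 2", "Is every sentence grammatically unambiguous?"),
    Check("Structuring", "Layers 1–2", "Does the heading answer a specific question rather than name a topic?"),
    Check("Chunk relevance", "Layer 1", "Can you state in one sentence the query this section answers?"),
    Check("User intent match", "Layer 1", "Does the opening sentence use vocabulary a searcher would type?"),
    Check("Information hierarchy", "Layer 2", "Is the core claim in the first sentence?"),
    Check("Context management", "Layer 2", "Can the section be understood without the rest of the page?"),
    Check("Consistency and specificity", "Layer 3", "Is the primary entity named explicitly and consistently?"),
]


def failed_components(answers: dict[str, bool]) -> list[Check]:
    """Return every check answered 'no'; a missing answer counts as a failure."""
    return [check for check in SELF_TEST if not answers.get(check.component, False)]


answers = {check.component: True for check in SELF_TEST}
answers["Context management"] = False  # e.g. the section opens with "This approach ..."
for check in failed_components(answers):
    print(f"{check.component} ({check.layer}): {check.question}")
```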

If any answer is no, the section has an LLM readability failure at that component. If more than one answer is no, fix the weakest component first: it is the binding constraint on retrieval. Improving the others while one component fails produces no retrieval improvement.

The fastest field test: read one H2 section aloud to someone who hasn’t read the page. If they can identify the primary entity, understand the core claim, and extract a usable answer — it passes. If they ask “what does that refer to?” or “what are you talking about here?” — it fails context management, the most common LLM readability failure mode.

Why LLM Readability Matters More Than Human Readability for GEO

Human readability and LLM readability diverge in one critical way: humans read pages sequentially with accumulated context. AI retrieval systems process chunks in isolation without that context.

A section that begins “This approach also works for…” is perfectly readable to a human who read the previous section. To an AI system processing that chunk independently, “this approach” is an unresolved reference that degrades the embedding. The section gets retrieved but produces an incoherent citation — or it fails extraction and doesn’t appear at all.
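The unresolved-reference problem can be partially caught by pattern matching. The sketch below is a heuristic under stated assumptions: `context_flags` and `first_sentence` are hypothetical helpers, the patterns are illustrative rather than exhaustive, and the check will both miss cases and raise false positives (a section opening “This checklist covers…” may be fine if the heading already names the checklist). It only narrows down which sections to reread.

```python
import re

# Illustrative patterns only; extend them for your own content.
OPENING_REFERENCE = re.compile(r"^(this|that|these|those|it|they)\b", re.IGNORECASE)
BACKWARD_PHRASE = re.compile(
    r"\bas (?:mentioned|discussed|noted) (?:above|earlier|previously)\b"
    r"|\b(?:the )?(?:previous|preceding) section\b",
    re.IGNORECASE,
)


def first_sentence(text: str) -> str:
    """Return text up to and including the first '.', '!' or '?'."""
    match = re.search(r"[.!?]", text)
    return text[: match.end()] if match else text


def context_flags(body: str) -> list[str]:
    """List likely unresolved references in one section body."""
    flags = []
    opening = first_sentence(body.strip())
    # A pronoun or demonstrative at the very start usually points at an earlier section.
    if OPENING_REFERENCE.search(opening):
        flags.append(f"opens with an unresolved reference: {opening!r}")
    # Backward-pointing phrases are flagged anywhere in the section.
    for match in BACKWARD_PHRASE.finditer(body):
        flags.append(f"backward reference: {match.group(0)!r}")
    return flags


print(context_flags("This approach also works for product pages."))
print(context_flags("LLM readability measures how well a chunk stands alone."))
```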

According to the Princeton 2024 GEO study, structural optimisation improved visibility in generative search engines by 22–40%. In Experiment 001 at The GEO Lab, the citation rate gap between declarative (LLM-readable) and narrative (human-readable) structures was 24 percentage points (61% vs 37%) on Perplexity, testing identical content with structure as the only variable.
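The 24-point figure is an absolute gap between two citation rates (61% vs 37%, as reported for Experiment 001). Expressed as a relative increase over the narrative baseline, the same result is about 65%, so a percentage-point gap and a percentage improvement are not directly comparable without knowing the baseline. A minimal check, with hypothetical helper names:

```python
def percentage_point_gap(treatment_pct: float, control_pct: float) -> float:
    """Absolute difference between two rates, in percentage points."""
    return treatment_pct - control_pct


def relative_lift_pct(treatment_pct: float, control_pct: float) -> float:
    """Increase over the control rate, as a percentage of that rate."""
    return (treatment_pct - control_pct) / control_pct * 100


declarative, narrative = 61.0, 37.0  # Experiment 001 citation rates, in %
print(percentage_point_gap(declarative, narrative))         # 24.0
print(round(relative_lift_pct(declarative, narrative), 1))  # 64.9
```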

LLM readability is not a replacement for good writing. It is an additional constraint. Content must be well-written and LLM-readable. The overlap is large — answer-first structure and explicit entity naming both improve human clarity and AI extractability simultaneously. The gap is where citation failures hide: the pronoun that made the paragraph flow; the heading that captured the topic but didn’t answer the query; the context that every human reader inferred and no AI system could.

What Practitioners Say

“Testing one structural variable at a time sounds obvious until you realise how rarely teams actually do it. The sequential testing protocol — one change, three-week wait, remeasure — is slower than running everything at once. It is also the only approach that tells you which change did anything.”

— James Whitfield, SEO Director, Hotjar

“The 61% vs 37% citation rate gap from structure alone is the finding I quote most often. It is specific enough to be credible, and the methodology is transparent enough that a sceptical client can replicate it. We ran our own version across a subset of a client’s content library and found a similar spread.”

— Lena Bauer, AI Content Strategist, Seobility

Frequently Asked Questions

What is LLM readability?

LLM readability is the degree to which content can be efficiently processed at the passage level during AI retrieval. It differs from human readability because it measures how well an isolated content chunk produces a coherent, accurate embedding — not how easily a human reads the full page in sequence.

How is LLM readability different from human readability?

Human readability assumes sequential reading with full page context. LLM readability assumes chunk-level processing in isolation. A paragraph that makes perfect sense after reading the preceding three paragraphs may produce an incoherent embedding when processed alone. The test is whether each section stands on its own.

What are the seven components of LLM readability?

Natural language quality (Layer 2), structuring (Layers 1–2), chunk relevance (Layer 1), user intent match (Layer 1), information hierarchy (Layer 2), context management (Layer 2), and consistency and specificity (Layer 3). Each maps to a specific GEO Stack layer. A failure in any one component can suppress retrieval regardless of how strong the others are.

How do I test content for LLM readability?

Read each H2 section aloud to someone who has not read the rest of the page. If they can understand the section’s claim, identify the primary entity, and extract a usable answer without asking “what does that refer to?”, the section passes. If they need context from other sections, it fails at context management. The seven-question self-test adds one check per component.

Does LLM readability affect citation rate?

Yes. In Experiment 001 at The GEO Lab, structural changes that improved LLM readability produced a 24 percentage point citation rate improvement (61% vs 37%) on Perplexity. The structure of the content — not just its quality — directly determines whether AI systems can extract and cite it.

LLM readability measures how well AI systems can process content chunks in isolation — not how well a human reads the full page in sequence.

Seven components determine LLM readability, each mapped to a GEO Stack layer. A failure in any one can suppress retrieval. The most common failure is context management: sections that make sense to the author but contain unresolved references an AI chunk processor cannot interpret.

Experiment 001 measured a 24pp citation rate gap from structural changes that improved LLM readability on identical content. Structure is not presentation. It is a retrieval signal.

Ready to apply this? The AI Visibility Diagnostics Console runs the LLM readability checklist across your pages and generates a citation rate baseline in under 10 minutes.
