A versioned schema, a contamination architecture, and a learning layer — the three things Karpathy’s 1.7-million-view post left out

Andrej Karpathy described an LLM that compiles raw sources into a structured wiki. The pattern works, but it degrades across sessions without a versioned schema, and without a learning layer it produces a reference library rather than knowledge you retain. The LLM Knowledge Compiler supplies both, plus a contamination architecture: AGENTS.md v1.1.0 (a 14-section schema standard), Steph Ango’s two-vault contamination model with an insights/ directory the agent never touches, and a spaced repetition system that turns compiled knowledge into mastered knowledge. The full schema is open source at github.com/arturseo-geo/llm-knowledge-base.

What Karpathy actually built

Andrej Karpathy’s April 2026 post on LLM knowledge bases reached 1.7 million views in 48 hours. Dozens of articles summarised it. None of them finished it. Karpathy described an LLM that compiles raw sources into a structured markdown wiki — but left out two things that make the system actually work: a versioned schema so agent behaviour stays consistent across sessions, and a learning layer so compiled knowledge becomes mastered knowledge. This post adds both, documents what Steph Ango (@kepano, co-creator of Obsidian) contributed to the contamination problem, and explains the AGENTS.md v1.1.0 schema standard that formalises the full pattern.

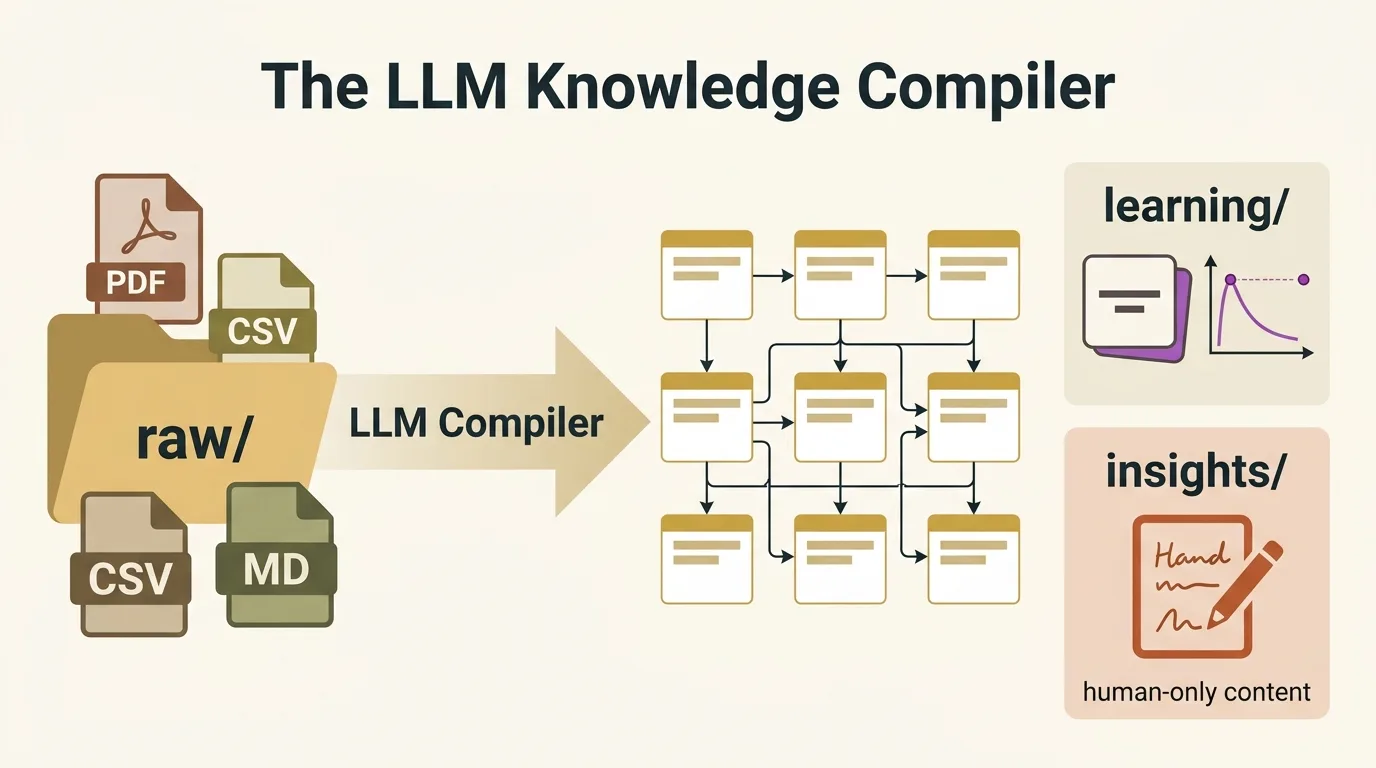

The Karpathy workflow has four operations. Raw source material — research papers, web articles, GitHub repos, datasets, images — lands in a raw/ directory. An LLM reads those files and compiles a structured wiki: concept articles, source summaries, backlinks, and three index files that let the agent navigate without loading the full corpus. The agent then runs linting passes to find contradictions, gaps, and stale claims. Finally, query outputs get filed back into the wiki, so every exploration compounds.

At approximately 100 articles and 400,000 words, Karpathy’s reported scale, the index-first navigation approach beats vector RAG on the dimensions that matter at that size: zero infrastructure, full auditability, and answers that trace directly to source files. VentureBeat called it “a blueprint for the next phase of the second brain — one that is self-healing, auditable, and entirely human-readable.” That framing is accurate. It is also incomplete.

The gap Karpathy left open

Karpathy described a system for organising and querying knowledge. He did not describe a system for mastering it. The distinction matters. The 2013 review by John Dunlosky et al. in Psychological Science in the Public Interest evaluated ten common learning techniques and rated only two as high utility: practice testing (retrieval practice) and distributed practice (spaced repetition). Re-reading and highlighting were rated low utility. A compiled wiki without a retrieval practice layer is a well-organised library you visit but never internalise.

| Component | Karpathy workflow | LLM Knowledge Compiler |
|---|---|---|
| Schema | Implicit, conversational | AGENTS.md v1.1.0: 14 sections, versioned |
| Agent contract | None; agent drifts across sessions | Session startup checklist, quality rules, naming conventions |
| Contamination control | Not addressed | Two-vault model + insights/ directory (human-only) |
| Learning layer | Not present | Flashcards, FSRS spaced repetition, gap tracker |
| Confidence tracking | Mentioned informally | 4-level system: high, medium, low, speculative |
| Freshness | Not addressed | Per-concept freshness tiers: 30d / 90d / 365d |
| Finetune path | Mentioned in passing | Concrete: concept corpus + flashcard JSONL + query/report pairs |

Karpathy also left the workflow underdocumented. The pattern is described conversationally — no versioned schema, no file naming conventions, no quality rules, no agent startup protocol. Every implementation starts from scratch. The agent has no contract to read. This is why most people who try the workflow find it degrades across sessions: the agent drifts, confidence levels become inconsistent, and the index files fall out of sync with the actual article set.

The contamination problem — and Steph Ango’s contribution

Steph Ango, co-creator of Obsidian, made an observation that sharpens the problem considerably. He drew an analogy to low-background steel: because steelmaking draws in atmospheric air, fallout from atmospheric nuclear testing, which began in 1945, left trace radioactive contamination in steel produced afterward. Sensitive radiation detectors have long relied on steel smelted before 1945, often salvaged from pre-war shipwrecks. The contamination was invisible, pervasive, and impossible to filter out of the general supply. LLM-generated content is the new contamination problem.

More specifically, Ango identified something the standard contamination framing misses: in Obsidian, the risk goes beyond content quality. Obsidian’s search, graph view, backlinks, quick switcher, and Bases all operate at the vault level. Once agent-compiled articles mix with your personal notes, your Obsidian experience reflects a mixture of your thinking and the LLM’s synthesis. The graph maps connections the agent made, not connections you made. Search surfaces articles the agent wrote, not notes you wrote. Over time, Obsidian stops being a representation of your knowledge and becomes a representation of your LLM’s output.

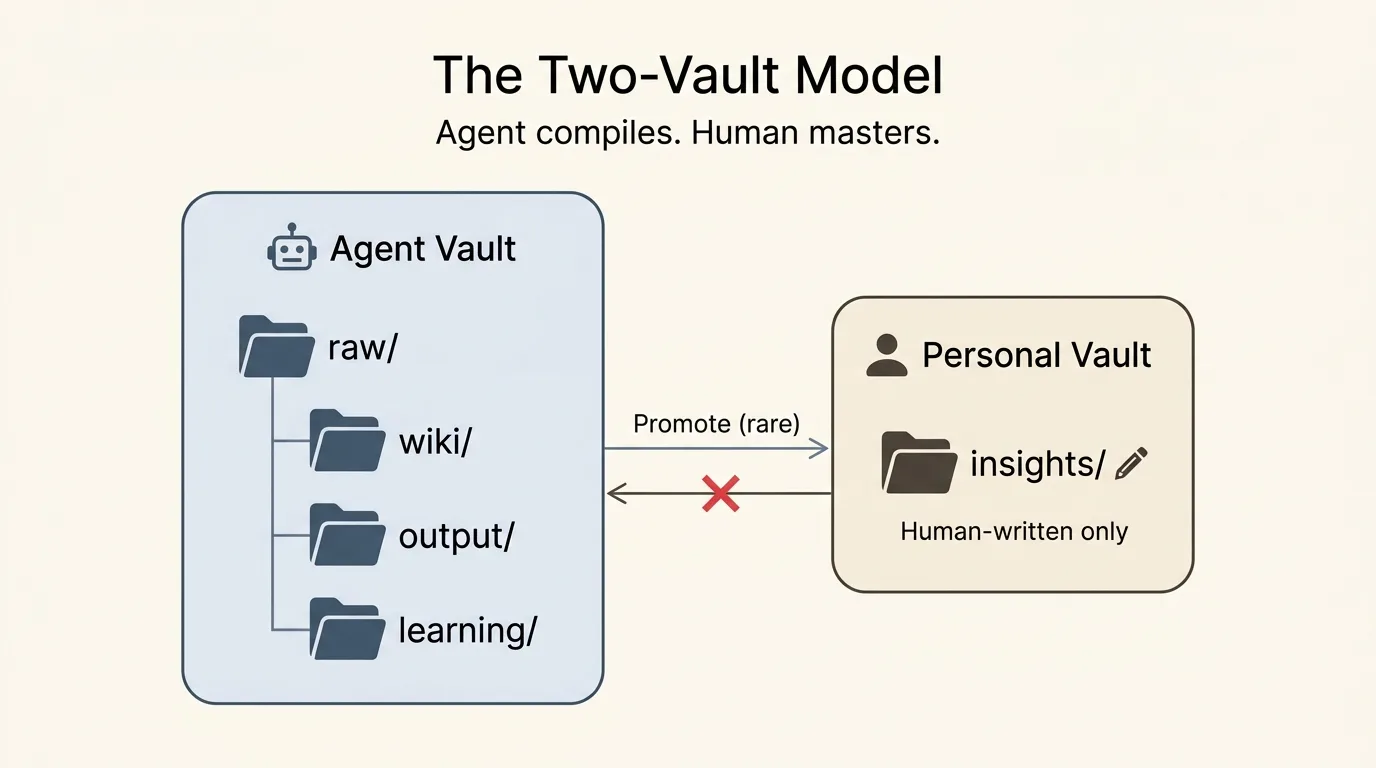

His solution: keep two completely separate Obsidian vaults. One for the agent — the full compiled wiki, all the linting and query outputs, the flashcard system. One for you — personal notes only, human-written, high signal-to-noise. And his sharpest observation: a summary of a PDF is noise. An insight you formed from reading it is signal. These belong in different directories with different authorship rules.

The LLM Knowledge Compiler: a formalised extension

The LLM Knowledge Compiler extends Karpathy’s workflow in three directions: a versioned schema that makes the agent’s behaviour consistent across sessions and LLMs, a structured learning layer that converts the compiled wiki into a mastery system, and a contamination architecture informed by Ango’s two-vault model. All three are codified in AGENTS.md v1.1.0 — a single file you drop into any directory.

The AGENTS.md schema

AGENTS.md is the contract between the human and the LLM. It defines the directory layout, file naming conventions, frontmatter schema for every article type, step-by-step compilation and linting workflows, quality rules (no hallucinated citations, confidence levels, orphan prevention), and a session startup checklist the agent runs before every operation.
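
The session startup checklist is the part most ad-hoc setups skip, and its file checks need no model at all. Below is a minimal sketch of those checks. It assumes the index files sit directly under wiki/ and that learning/_review.md lists one concept per line in a hypothetical `- slug | due: YYYY-MM-DD` format; the repo’s actual templates may lay these files out differently, and the function and field names are illustrative only.

```python
import re
from dataclasses import dataclass, field
from datetime import date
from pathlib import Path

# Hypothetical review-queue line format: "- concept-slug | due: 2026-05-01"
DUE_LINE = re.compile(r"^-\s*(?P<slug>[\w-]+)\s*\|\s*due:\s*(?P<due>\d{4}-\d{2}-\d{2})")
INDEX_FILES = ("_index.md", "_concepts.md", "_graph.md")


@dataclass
class StartupReport:
    schema_found: bool
    missing_indices: list[str] = field(default_factory=list)
    due_today: list[str] = field(default_factory=list)


def session_startup(root: Path, today: date) -> StartupReport:
    """Read schema -> load indices -> check review queue -> report."""
    schema_found = (root / "AGENTS.md").is_file()
    # Assumption: the three index files live directly under wiki/.
    missing = [name for name in INDEX_FILES if not (root / "wiki" / name).is_file()]
    due: list[str] = []
    review = root / "learning" / "_review.md"
    if review.is_file():
        for line in review.read_text(encoding="utf-8").splitlines():
            m = DUE_LINE.match(line.strip())
            # A concept is due if its review date is today or earlier.
            if m and date.fromisoformat(m["due"]) <= today:
                due.append(m["slug"])
    return StartupReport(schema_found, missing, due)
```

The model still does the reading and reasoning; a check like this only makes sure it starts each session from the same known state.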

| Section | What it defines |
|---|---|
| §1 Directory layout | raw/, wiki/, insights/, output/, learning/; ownership rules |
| §2 Agent scope | Agent owns wiki/ + output/ + learning/. Never writes to insights/. |
| §3–4 Naming and article schema | File naming conventions; frontmatter: title, type, confidence, sources, related, tags |
| §5 Index files | _index.md (table), _concepts.md (flat list), _graph.md (adjacency list) |
| §6 Compilation | Step-by-step: summarise → extract concepts → update indices → flashcards |
| §7 Query workflow | Load indices → select articles → synthesise → file output → update wiki |
| §8 Linting | Contradiction scan, orphan detection, confidence audit, gap detection |
| §9 Learning layer | Flashcard generation, FSRS scheduling, gap tracking |
| §10 Output formats | Reports, briefs, slides (Marp), figures |
| §11 Quality rules | No hallucinated citations, confidence levels, orphan prevention |
| §12 Contamination | insights/ boundary, two-vault model, provenance tracking |
| §13 Session startup | Checklist: read schema → load indices → check review queue → report |
| §14 Versioning | Changelog, backwards compatibility rules |
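
Several of these rules are mechanically checkable. Below is a minimal sketch of a frontmatter check for the §3–4 fields and the four confidence levels, assuming the frontmatter has already been parsed into a dict (for example with a YAML library). Requiring every source to be a path under raw/ is an assumption of this sketch: a cheap proxy for the no-hallucinated-citations rule, not the schema’s own definition.

```python
REQUIRED_FIELDS = ("title", "type", "confidence", "sources", "related", "tags")
CONFIDENCE_LEVELS = {"high", "medium", "low", "speculative"}


def validate_frontmatter(meta: dict) -> list[str]:
    """Return a list of schema problems; an empty list means the article passes."""
    problems = [f"missing field: {key}" for key in REQUIRED_FIELDS if key not in meta]
    confidence = meta.get("confidence")
    if confidence is not None and confidence not in CONFIDENCE_LEVELS:
        problems.append(f"unknown confidence level: {confidence!r}")
    if "sources" in meta and not meta["sources"]:
        problems.append("no sources cited")
    # Assumption: a citation counts as grounded only if it points into raw/.
    for src in meta.get("sources") or []:
        if not str(src).startswith("raw/"):
            problems.append(f"source outside raw/: {src}")
    return problems
```

A linting pass can run this over every article and file the results alongside the contradiction and orphan checks.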

The schema introduces three index files that are the core of the retrieval architecture. _index.md is a table with one row per article: title, type, confidence level, last updated date, tags. _concepts.md is a flat list of every named concept with a one-sentence definition. _graph.md is an adjacency list of concept-to-concept relationships. The agent reads all three before deciding which full articles to load for any query. This keeps context windows targeted and token costs proportional to the articles a query actually needs, not to the size of the whole wiki.
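
Because all three index files derive from article frontmatter, one way to keep them in sync with the article set is to regenerate them rather than hand-edit them. Below is a minimal sketch, assuming each article’s metadata includes a `slug` (its file name), an `updated` date and a one-sentence `summary`; those three field names and the `->` notation in _graph.md are hypothetical, not taken from the repo’s templates.

```python
def build_indices(articles: list[dict]) -> dict[str, str]:
    """Render _index.md, _concepts.md and _graph.md from article frontmatter."""
    index_rows = ["| Title | Type | Confidence | Updated | Tags |", "|---|---|---|---|---|"]
    concepts: list[str] = []
    graph: list[str] = []
    for art in sorted(articles, key=lambda a: a["title"].lower()):
        tags = ", ".join(art.get("tags", []))
        # _index.md: one table row per article, whatever its type.
        index_rows.append(
            f"| {art['title']} | {art['type']} | {art['confidence']} | {art['updated']} | {tags} |"
        )
        if art["type"] == "concept":
            # _concepts.md: flat list with a one-sentence definition.
            concepts.append(f"- [[{art['slug']}]]: {art['summary']}")
            # _graph.md: adjacency list of concept-to-concept links.
            related = ", ".join(f"[[{r}]]" for r in art.get("related", []))
            graph.append(f"- [[{art['slug']}]] -> {related}" if related else f"- [[{art['slug']}]]")
    return {
        "_index.md": "\n".join(index_rows) + "\n",
        "_concepts.md": "\n".join(concepts) + "\n",
        "_graph.md": "\n".join(graph) + "\n",
    }
```

Regenerating from frontmatter means an index can only drift if the frontmatter itself is wrong, which the linting pass already checks.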

The insights/ directory — signal separated from noise

Version 1.1.0 adds an insights/ directory that the agent never touches. Human-written notes only. This is where your own thinking goes — observations you formed from engaging with the compiled wiki, connections you made, hypotheses you’re testing. The agent compiles wiki/. You write insights/. The schema enforces this boundary: “Agent never writes to insights/. This directory is reserved exclusively for human-written notes. Agent-generated content — even high-confidence, well-sourced synthesis — does not belong here. The separation is the point.”
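
The schema states this boundary as an instruction to the agent. If the agent writes files through a tool you control, the same rule can also be enforced mechanically. Below is a minimal sketch, assuming the directory names from §1 and §2 and treating every path outside wiki/, output/ and learning/ as off-limits; `check_agent_write` is a hypothetical helper, not part of the repo.

```python
from pathlib import Path

AGENT_OWNED = ("wiki", "output", "learning")  # §2: directories the agent owns


def check_agent_write(vault_root: Path, target: Path) -> Path:
    """Return the resolved target if the agent may write it; raise PermissionError otherwise."""
    root = vault_root.resolve()
    # resolve() collapses "../" segments, so "wiki/../insights/x.md" cannot slip through.
    resolved = (root / target).resolve()
    if resolved.is_relative_to(root / "insights"):
        raise PermissionError(f"insights/ is human-only: {target}")
    if not any(resolved.is_relative_to(root / d) for d in AGENT_OWNED):
        raise PermissionError(f"outside agent-owned directories: {target}")
    return resolved
```

Checking the resolved path rather than the raw string is the important design choice: a prefix test on the string alone would accept relative paths that climb out of wiki/.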

This maps directly to Ango’s framing. The compiled wiki is scaffolding. The insight you form from engaging with it is the thing itself. Keeping them in separate directories with separate authorship rules isn’t just clean architecture — it’s the only way to know, six months from now, which ideas are yours and which are the LLM’s.

The two-vault model as primary setup

The schema now recommends the two-vault Obsidian model as the primary setup, not an optional enhancement. Agent vault: the full schema, raw/, wiki/, output/, learning/. Personal vault: insights/ only, human-written. Obsidian’s search, graph, and backlinks in the personal vault reflect only your thinking. The agent vault’s graph reflects the compiled knowledge base. The two are distinct tools for distinct purposes.

Promotion from agent vault to personal vault is the highest-value action in the workflow — and the rarest. It happens only when a compiled article, flashcard session, or query output has produced something you genuinely understand and want to own. Most compiled articles never get promoted. That’s correct. The wiki compiles. The human masters.

The learning layer: from archive to mastery system

| Component | File | Purpose |
|---|---|---|
| Flashcards | learning/flashcards/*.md | 4 question types per concept: definitional, mechanistic, relational, applied |
| Review queue | learning/_review.md | FSRS spaced repetition scheduling: due dates, intervals, ease factors |
| Gap tracker | learning/gaps.md | Structural gaps (missing concepts) + epistemic gaps (unanswerable questions) |

The learning layer adds three components under a learning/ directory: automatically generated flashcards, a spaced repetition review queue, and a gap tracker that converts weak spots in the wiki into a prioritised research agenda. Karpathy’s original workflow has no equivalent, and tools that do generate flashcards, NotebookLM among them, work from source documents rather than from a compiled, linted wiki.

Automatic flashcard generation

Every time the agent creates or substantially updates a concept article, it generates a corresponding flashcard file in learning/flashcards/. Each file contains four question types: definitional (can you state the concept precisely?), mechanistic (do you understand how it works?), relational (how does it connect to other concepts?), and applied (when would you use it?). The questions are grounded in the wiki article, not in general LLM knowledge — they test your understanding of your specific sources and framing, not a generic version of the concept.
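
The four-type rule is easiest to enforce at the moment the file is written. Below is a minimal sketch that renders one flashcard file and rejects it unless every question type is present. The `Q:`/`A:` layout and the `concept` frontmatter key are hypothetical, not the repo’s template, and the questions themselves would still come from the LLM.

```python
from dataclasses import dataclass

QUESTION_TYPES = ("definitional", "mechanistic", "relational", "applied")


@dataclass(frozen=True)
class Card:
    kind: str       # one of QUESTION_TYPES
    question: str
    answer: str


def render_flashcard_file(concept_slug: str, cards: list[Card]) -> str:
    """Render a learning/flashcards/<slug>.md body, requiring all four question types."""
    kinds = {c.kind for c in cards}
    missing = [k for k in QUESTION_TYPES if k not in kinds]
    if missing:
        raise ValueError(f"{concept_slug}: missing question types {missing}")
    unknown = kinds - set(QUESTION_TYPES)
    if unknown:
        raise ValueError(f"{concept_slug}: unknown question types {sorted(unknown)}")
    # Link back to the wiki article so each card stays grounded in the compiled text.
    lines = ["---", f'concept: "[[{concept_slug}]]"', "---", ""]
    order = {k: i for i, k in enumerate(QUESTION_TYPES)}
    for card in sorted(cards, key=lambda c: order[c.kind]):
        lines += [f"## {card.kind.capitalize()}", f"Q: {card.question}", f"A: {card.answer}", ""]
    return "\n".join(lines)
```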

NotebookLM generates flashcards from source documents. The LLM Knowledge Compiler generates flashcards from the compiled, linted, cross-linked wiki — after the LLM has already synthesised sources, resolved contradictions, and established concept relationships. The flashcards test the synthesised understanding, not the raw material.

Spaced repetition scheduling

The review queue in learning/_review.md tracks due dates for every concept using a simplified scheduler modelled on FSRS (Free Spaced Repetition Scheduler), which Anki has offered as an alternative to its older SM-2-based scheduler since version 23.10. A 2022 comparison study by Jarrett Ye et al. rated it as more accurate than the SM-2 algorithm across all tested retention targets. The simplified update rule itself follows the SM-2 pattern: after each review session, the agent updates intervals based on recalled performance. Correct recall multiplies the interval by the ease factor (default 2.5); failed recall resets the interval to one day and decreases ease. Full FSRS instead tracks each card’s memory stability and difficulty and schedules the next review for when predicted recall falls to a chosen target retention.
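
The update rule described above fits in a few lines. Below is a minimal sketch of that SM-2-style rule, not of full FSRS. The size of the ease penalty (0.2) and the floor (1.3, the minimum ease in SM-2) are assumptions, since the schema only says that ease decreases on failure.

```python
import math
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

DEFAULT_EASE = 2.5   # default ease factor, as stated in the schema
EASE_PENALTY = 0.2   # assumption: the schema only says ease "decreases" on failure
MIN_EASE = 1.3       # assumption: SM-2's floor on the ease factor


@dataclass
class ReviewState:
    interval_days: float = 1.0
    ease: float = DEFAULT_EASE
    due: Optional[date] = None


def update(state: ReviewState, recalled: bool, today: date) -> ReviewState:
    """Apply the simplified rule to one concept after a review."""
    if recalled:
        # Correct recall: stretch the interval by the current ease factor.
        interval, ease = state.interval_days * state.ease, state.ease
    else:
        # Failed recall: back to a one-day interval and a lower ease factor.
        interval, ease = 1.0, max(MIN_EASE, state.ease - EASE_PENALTY)
    # Round partial days up so a review is never scheduled early.
    return ReviewState(interval, ease, today + timedelta(days=math.ceil(interval)))
```

Starting from a one-day interval, three correct recalls give intervals of 2.5, 6.25 and about 15.6 days. A single failure drops the concept back to one day with ease 2.3, so its later intervals grow more slowly than before.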

Concepts you find easy get reviewed less and less often. Concepts you consistently struggle with stay at short intervals until they stick. The agent runs the scheduling update automatically at the end of each session.

Gap tracking as a research agenda

learning/gaps.md accumulates two types of gaps. Structural gaps are concepts mentioned in articles that don’t have their own entry — detected during linting and sorted by how many articles reference the missing concept. Epistemic gaps are questions the wiki can’t answer, detected when a query returns low-confidence results or when a claim rests on a single stale source. The gaps file is a prioritised list of what to ingest next — turning every linting pass into a research agenda, not just a quality check.
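
Structural gap detection needs no LLM at all. Below is a minimal sketch, assuming articles link to each other with Obsidian-style [[wikilinks]] whose target is the linked article’s file name (slug). It counts each referencing article once and ranks missing targets by that count. Epistemic gaps depend on query confidence and are not covered here.

```python
import re
from collections import Counter

# Matches [[target]], [[target|alias]] and [[target#heading]]; captures the target.
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:[#|][^\]]*)?\]\]")


def structural_gaps(articles: dict[str, str]) -> list[tuple[str, int]]:
    """Rank linked-but-missing concepts by how many articles reference them.

    `articles` maps an article slug to its markdown body.
    """
    existing = set(articles)
    counts = Counter()
    for body in articles.values():
        targets = {t.strip() for t in WIKILINK.findall(body)}  # one vote per article
        for target in targets - existing:
            counts[target] += 1
    # Most-referenced gaps first; ties broken alphabetically for stable output.
    return sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
```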

Why not RAG?

The compiled wiki and vector RAG are not competing for the same use case. RAG was designed for corpora too large to fit in a context window, with low-latency retrieval at query time. At millions of documents, heterogeneous content types, and sub-second latency requirements, RAG is the right architecture. At personal research scale (50 to 2,000 well-chosen sources), the compiled wiki outperforms RAG on every practical dimension except raw document capacity and query latency.

| Dimension | Compiled wiki | Vector RAG |
|---|---|---|
| Best scale | 50–2,000 sources | 1,000+ documents |
| Infrastructure | None: markdown files | Vector DB, embeddings pipeline, chunking config |
| Auditability | Full: every claim traces to a source file | Partial: chunk retrieval is opaque |
| Structure preservation | Complete: coherent articles with backlinks | Lost: 512-token chunks destroy context |
| Contradiction handling | Resolved during compilation | Not handled: conflicting chunks coexist |
| Learning integration | Flashcards + spaced repetition built in | Not available |
| Finetune readiness | High: clean, structured, confidence-tagged | Low: unstructured chunks |
| Latency | Context window load (~2s) | Sub-second retrieval |

The core issue with RAG at small scale: chunking destroys structure. A 512-token chunk of a research paper contains no backlinks, no confidence level, no cross-references to related concepts. It is an arbitrary fragment. The compiled wiki preserves the full structure of each concept — the LLM writes coherent articles, not arbitrary windows. When the agent loads a concept article to answer a query, it reads a document that already synthesises all sources on that concept, resolves known contradictions, and links explicitly to related concepts.

The crossover where RAG earns its complexity is approximately 1,000 to 2,000 articles — when the index files become too long for efficient navigation and context costs become significant. Below that threshold, a well-maintained _index.md combined with targeted article loading is simpler, cheaper, more auditable, and produces better answers.

The finetune path

A compiled wiki that has been repeatedly linted is more than a context store. Karpathy mentioned this in passing. The AGENTS.md schema makes the path concrete: concept and topic articles form a clean pretraining corpus; flashcard Q&A pairs in learning/flashcards/ export directly to JSONL for instruction fine-tuning; query/report pairs in output/reports/ are task fine-tuning examples. Filter to confidence: high and confidence: medium only before fine-tuning. Training on speculative content degrades model quality more severely than it degrades a wiki.
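
The confidence filter is the step most easily skipped. Below is a minimal sketch of the flashcard export, assuming each flashcard record carries the confidence level of the concept article it was generated from (a hypothetical field) and emitting a generic prompt/completion JSONL layout. Adapt the record shape to whatever your fine-tuning tool expects.

```python
import json
from typing import Iterable

TRAINABLE = {"high", "medium"}


def flashcards_to_jsonl(cards: Iterable[dict]) -> str:
    """Serialise Q&A pairs whose source article is high or medium confidence."""
    lines = []
    for card in cards:
        if card.get("confidence") not in TRAINABLE:
            continue  # drop low and speculative content before it reaches training
        record = {"prompt": card["question"], "completion": card["answer"]}
        lines.append(json.dumps(record, ensure_ascii=False))
    return "\n".join(lines) + ("\n" if lines else "")
```

Records with no confidence field are dropped too, which errs on the side of keeping untagged content out of the training set.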

Karpathy built a compiler. This schema adds the missing contract (AGENTS.md), the missing discipline (contamination mitigation via the two-vault model and insights/), and the missing outcome (a learning layer that turns compiled knowledge into mastered knowledge).

Getting started

The repo ships AGENTS.md v1.1.0, templates for every file type, a fully populated worked example (AI alignment, 8 articles, flashcards, review queue, gaps file), and four deep-dive docs: why-not-RAG, learning layer design, contamination mitigation, and the finetune path. MIT licensed.

Three entry points depending on where you are. Starting from scratch: clone the repo, copy templates into a new directory, drop source documents into raw/, tell Claude: “Read AGENTS.md, then compile everything in raw/ into the wiki.” The agent reads the schema, creates the index files, writes concept articles, and generates flashcards. From that point, incremental ingest is one instruction: “File this to our wiki: raw/papers/new-paper.pdf”.

Already have an Obsidian vault: copy AGENTS.md into the vault root and tell the agent to audit existing notes against the schema. It will create the index files from existing content, identify orphaned notes, flag low-confidence claims, and propose the graph structure. No need to start over.

Want only the learning layer: copy AGENTS.md and templates/flashcard.md into an existing compiled wiki. Tell the agent to generate flashcards for all concept articles and populate learning/_review.md. The learning layer is additive.

Build your own GEO knowledge base. The LLM Knowledge Compiler schema is the foundation of The GEO Lab’s research methodology. Clone the repo, drop your sources into raw/, and let the agent compile. Every citation check, rank report, and experiment result compounds into a queryable, linted, mastery-ready wiki.

Frequently asked questions

What is an LLM knowledge compiler?

An LLM knowledge compiler is a workflow where a language model reads raw source documents and writes a structured, interlinked wiki — rather than indexing documents for retrieval. The LLM is the primary author and editor of the knowledge base. The human drops in sources and queries. The pattern was described by Andrej Karpathy in April 2026 and formalised in the AGENTS.md schema standard at github.com/arturseo-geo/llm-knowledge-base.

How is this different from Karpathy’s original workflow?

Karpathy’s workflow describes compilation and query operations but leaves the schema implicit and omits the learning layer entirely. The LLM Knowledge Compiler adds a versioned AGENTS.md schema (consistent across sessions and LLMs), a contamination architecture informed by Steph Ango’s two-vault model and insights/ directory, and a full learning layer with automatic flashcard generation, FSRS spaced repetition scheduling, and gap tracking.

When should I use a compiled wiki instead of RAG?

Use a compiled wiki for focused research domains with 50 to 2,000 well-chosen sources where auditability, structure, and mastery matter. Use RAG for corpora exceeding roughly 2,000 documents, heterogeneous content types, or sub-second latency requirements. A compiled wiki optimises for understanding. RAG optimises for retrieval at scale.

What does AGENTS.md contain?

AGENTS.md is a 14-section schema specification: directory layout, agent scope (including the rule that agent never writes to insights/), file naming conventions, article frontmatter schema, index file maintenance rules, compilation workflow, query workflow, linting workflow, learning layer with FSRS scheduling, output formats, quality rules, contamination mitigation, session startup checklist, and schema versioning.

What is the two-vault model?

Two completely separate Obsidian vaults: an agent vault containing the full compiled wiki and all agent-generated content, and a personal vault containing only human-written insights/ notes. Obsidian’s search, graph, and backlinks in the personal vault reflect only your own thinking. The agent never touches the personal vault. Content is promoted from agent to personal vault only when a compiled article produces genuine insight you understand and want to own.

Can the wiki be used to fine-tune a model?

Yes. A linted wiki yields three types of training data: concept articles as a pretraining corpus, flashcard Q&A pairs as instruction fine-tuning data exportable to JSONL, and query/report pairs as task fine-tuning examples. Filter to confidence: high and confidence: medium only. Training on speculative or agent-inferred content degrades model quality.

What LLMs work with this schema?

Any model with a context window of roughly 32K tokens minimum. Claude Sonnet is recommended for compilation and Q&A. Claude Haiku for cost-efficient linting sweeps. GPT-4o and Gemini 1.5 Pro handle the schema correctly. Local models via Ollama work at smaller wiki sizes but degrade on complex multi-concept queries.