A page that AI systems always cite but never accurately represent. The audit that changed how I think about entity reinforcement.

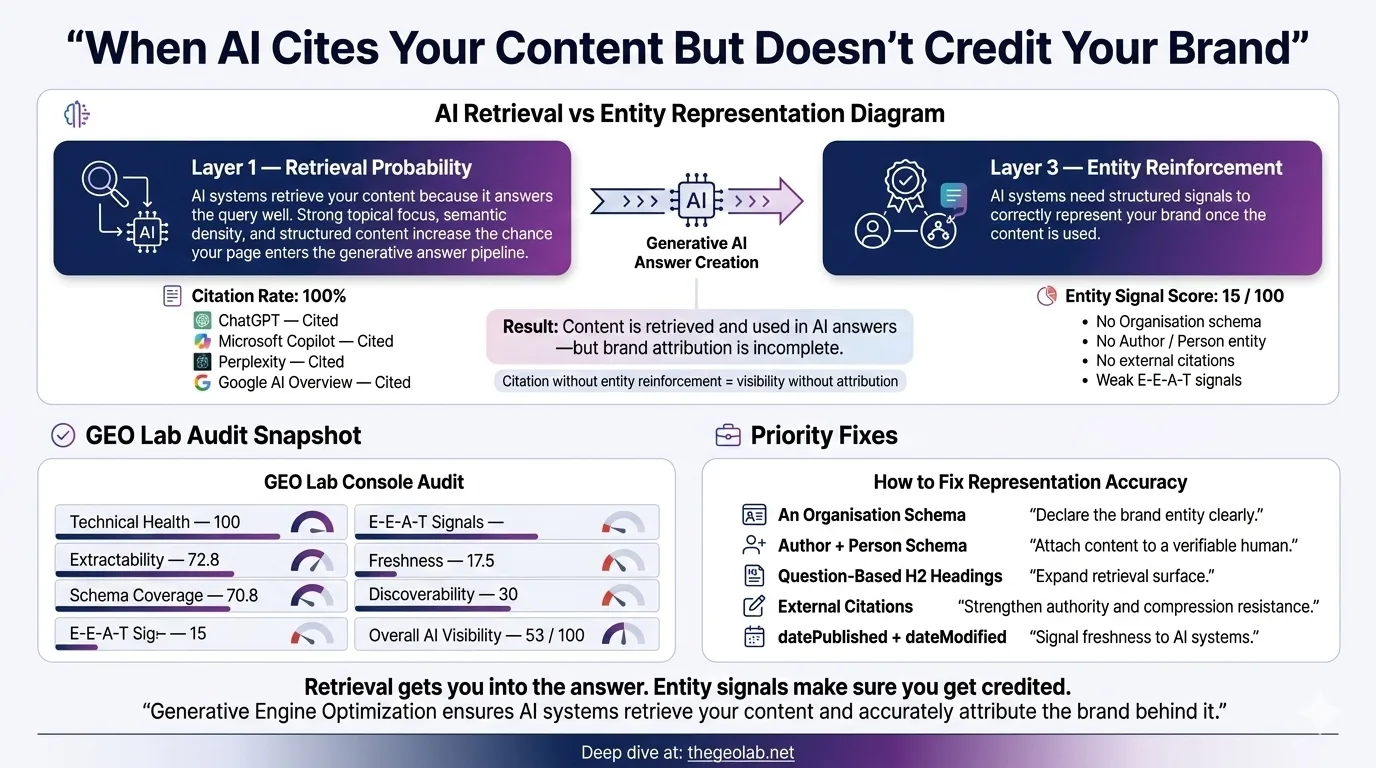

A page can achieve a 100% citation rate across ChatGPT, Perplexity, and Gemini while scoring just 15 out of 100 on entity signals. Citation rate measures whether AI retrieves your page. Entity signals measure whether AI represents your concepts accurately when it does. These are different capabilities, and optimising for one does not automatically improve the other. This post documents the gap, explains the scoring architecture behind it, and shows what to fix.

The Paradox I Found in My Own Data

I ran the GEO Stack audit on every published page on thegeolab.net in March 2026. The GEO-002 baseline covered 330 queries across 13 semantic categories, tested against three platforms: ChatGPT (gpt-4o-mini), Gemini (gemini-2.5-flash), and Perplexity (sonar-pro).

One page came back with a result I did not expect. Every time an AI system was asked a question where this page was relevant, it cited the page. 100% citation rate. The retrieval pipeline was working perfectly — the content was being found, selected, and included in AI-generated answers.

The entity reinforcement score for the same page was 15 out of 100.

That is not a typo. A page that AI always retrieves but almost never represents accurately. I spent the next week understanding why this happens, and the answer changed how I think about the entire GEO Stack.

The core finding: Citation rate measures retrieval. Entity signals measure representation. A page can be retrieved every time and still be misrepresented every time. These are architecturally independent capabilities in RAG systems.

What Citation Rate Actually Measures

Citation rate is the percentage of relevant queries for which an AI system includes your content in its response. If Perplexity answers 20 queries where your page is topically relevant, and it cites your page in 18 of those responses, your citation rate is 90%.
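As a quick illustration of the arithmetic, here is a minimal Python sketch that computes citation rate per platform from a log of relevant queries. The `citation_rates` function and the `(platform, cited)` record shape are assumptions made for this example, not part of any GEO Lab tooling.

```python
from collections import defaultdict


def citation_rates(results):
    """Return citation rate (0-100) per platform.

    `results` is an iterable of (platform, cited) pairs, one pair per
    relevant query. This record shape is a hypothetical choice.
    """
    cited = defaultdict(int)
    total = defaultdict(int)
    for platform, was_cited in results:
        total[platform] += 1
        cited[platform] += int(was_cited)
    return {platform: 100 * cited[platform] / total[platform] for platform in total}


# The example from the text: 20 relevant Perplexity queries, 18 of them cited.
results = [("perplexity", True)] * 18 + [("perplexity", False)] * 2
print(citation_rates(results))  # {'perplexity': 90.0}
```

Note that the function only counts whether a citation happened. Nothing in it looks at what the answer said about the page.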

This is a retrieval metric. It tells you that the vector embedding of your content is close enough to the query embedding that the retrieval pipeline selects it. It tells you that the page has sufficient topical authority for the re-ranker to keep it in the context window. It tells you the content passed whatever freshness, quality, and relevance heuristics the system applies before generation.

What it does not tell you is what the AI system does with your content once it has retrieved it.

Kevin Indig’s analysis of 1.2 million ChatGPT responses found that citation frequency and citation accuracy are only weakly correlated — a page can be frequently cited while the specific claims extracted from it are paraphrased inaccurately or attributed to the wrong entity. The retrieval pipeline and the generation pipeline are separate systems with separate failure modes.


What Entity Signals Actually Measure

Entity signals measure how explicitly and consistently a page names its core concepts. The GEO Stack scoring architecture breaks this down into four components, each weighted independently:

| Component | Weight | What It Measures |
|---|---|---|
| Compression retention | 40% | How much information survives when an LLM compresses the section |
| Declarative openings | 25% | Whether sections begin with strong factual statements, not questions or transitions |
| Entity explicitness | 20% | The ratio of named entities to pronouns (he, she, it, they, this, that) |
| Standalone coherence | 15% | Whether a section makes sense if extracted without its parent document |
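One plausible way to combine the four components is a plain weighted sum. The sketch below assumes exactly that. The weights come from the table, but the linear combination, the `entity_signal_score` name, and the sub-scores in the usage example are illustrative assumptions rather than the scoring engine's actual code or data.

```python
# Weights in percent, taken from the table above.
WEIGHTS = {
    "compression_retention": 40,
    "declarative_openings": 25,
    "entity_explicitness": 20,
    "standalone_coherence": 15,
}


def entity_signal_score(components):
    """Combine four 0-100 sub-scores into one 0-100 score.

    Assumes a simple weighted sum; the real engine may combine them differently.
    """
    missing = WEIGHTS.keys() - components.keys()
    if missing:
        raise KeyError(f"missing components: {sorted(missing)}")
    return sum(WEIGHTS[name] * components[name] for name in WEIGHTS) / 100


# Made-up sub-scores, purely to show the arithmetic.
example = {
    "compression_retention": 50,
    "declarative_openings": 40,
    "entity_explicitness": 30,
    "standalone_coherence": 20,
}
print(entity_signal_score(example))  # 39.0
```

Under this assumption, compression retention moves the total twice as much as entity explicitness for the same change in sub-score.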

The entity explicitness sub-score is the most mechanically straightforward. The GEO OS scoring engine counts every occurrence of 12 tracked pronouns (he, she, it, they, this, that, these, those, his, her, its, their) and compares them against named entity mentions. A section that says “it improves performance by reducing latency” scores lower than one that says “the GEO Stack improves retrieval performance by reducing embedding latency.” Same claim. Different entity explicitness.

The formula: Entity explicitness = entity_mentions / (entity_mentions + pronoun_count) × 100. A score of 15 means roughly 85% of references in the content use pronouns instead of naming the entity directly. The LLM compression step then strips those pronouns entirely, leaving the concept unanchored.
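The formula can be sketched in a few lines of Python. This is a minimal illustration, not the GEO OS scoring engine itself: the `entity_explicitness` function, the `entity_names` parameter, whole-word matching, and returning 0 for a section with neither pronouns nor entities are all assumptions.

```python
import re

# The 12 tracked pronouns listed above.
TRACKED_PRONOUNS = {
    "he", "she", "it", "they", "this", "that",
    "these", "those", "his", "her", "its", "their",
}


def entity_explicitness(text, entity_names):
    """entity_mentions / (entity_mentions + pronoun_count) * 100."""
    lowered = text.lower()
    words = re.findall(r"[a-z']+", lowered)
    pronoun_count = sum(word in TRACKED_PRONOUNS for word in words)
    # Count whole-word, case-insensitive mentions of each supplied entity name.
    entity_mentions = sum(
        len(re.findall(r"\b" + re.escape(name.lower()) + r"\b", lowered))
        for name in entity_names
    )
    total = entity_mentions + pronoun_count
    if total == 0:
        return 0.0  # assumption: nothing to anchor, so score it as zero
    return 100 * entity_mentions / total


entities = ["GEO Stack"]  # hypothetical entity list for this page
print(entity_explicitness("it improves performance by reducing latency", entities))  # 0.0
print(entity_explicitness(
    "the GEO Stack improves retrieval performance by reducing embedding latency.",
    entities,
))  # 100.0
```

A real implementation would need a way to decide which phrases count as named entities. Here the caller supplies them, and overlapping names (for example "GEO" and "GEO Stack") would be double counted.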

Why the Gap Exists: Retrieval vs Representation

I was confused by this for longer than I should have been. If the page is good enough to be retrieved every time, why is the entity representation so poor?

The answer is that retrieval and representation happen at different stages of the RAG pipeline, and they depend on different properties of the content.

What gets your page selected

Selection depends on topical relevance, semantic similarity to the query, domain authority, and freshness signals. All of these operate at the document level. A page about entity reinforcement gets retrieved when someone asks about entity reinforcement. The content can be poorly structured and still match the query embedding closely enough to be selected.

What gets pulled from your page

The LLM does not use the entire page. It extracts sections, compresses them, and synthesises an answer. This is where entity signals matter. If a section says “this framework improves it by addressing those issues,” the compression step has nothing concrete to preserve. The section is topically relevant, so it passes the selection stage, but it is semantically empty once compressed, so it fails the extraction stage.

What the AI says about your content

The LLM generates its response using the compressed extractions as grounding. If the extraction lost entity specificity, the generated response either attributes the concept vaguely (“some frameworks suggest…”) or misattributes it to a competitor whose content had clearer entity naming. Your page was cited. Your concept was not accurately represented.

This is the architectural explanation. Retrieval operates on document-level signals. Representation operates on section-level signals. You can have perfect document-level relevance and catastrophic section-level entity loss simultaneously.

What a 15/100 Entity Score Actually Looks Like

I went through the flagged page section by section. Here is what I found.

The page had seven H2 sections. Five of them opened with transitional phrases: “Building on the previous section…”, “As we discussed earlier…”, “This approach works because…”. Each of these scores 10/100 on declarative openings because they require the surrounding document for context — extracted in isolation, they mean nothing.
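A rough way to flag openings like these is to check the first sentence of each section against a list of transitional phrases. The Python sketch below does only that. The phrase list is drawn from the examples in this post, while `first_sentence` and `has_context_dependent_opening` are hypothetical helpers. It flags sections; it does not reproduce the 10/100 scoring.

```python
import re

# Transitional openers taken from the examples in this post (lowercase).
TRANSITIONAL_OPENERS = (
    "building on",
    "as we discussed",
    "as mentioned",
    "this approach",
)


def first_sentence(section):
    """Return the text up to the first sentence-ending punctuation mark."""
    match = re.match(r"\s*(.+?[.!?])(?:\s|$)", section, flags=re.S)
    return match.group(1) if match else section.strip()


def has_context_dependent_opening(section):
    """True if the section opens with a question or a transitional phrase."""
    opening = first_sentence(section).lower().lstrip("“\"' ")
    return opening.endswith("?") or opening.startswith(TRANSITIONAL_OPENERS)


print(has_context_dependent_opening(
    "Building on the previous section, the layer adds checks. More text."
))  # True
print(has_context_dependent_opening(
    "Entity explicitness is the ratio of named entity mentions to pronoun references."
))  # False
```

A phrase list will miss openers it has never seen, so treat a clean result as a hint and still read each opening in isolation.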

Entity explicitness was worse. I counted the pronoun-to-entity ratio across all sections: 47 pronoun references against 8 named entity mentions. The page introduced its core concept by name once in the opening paragraph, then referred to it as “it”, “this”, and “the approach” for the remaining 2,400 words.

- Weak: pronoun-heavy reference “It improves retrieval performance by addressing the core issues that make this difficult for most implementations.” Entity explicitness: 0/100 — no named entity, three pronouns

- Weak: context-dependent opening “Building on the framework described above, this section explains why the approach works.” Declarative opening: 10/100 — requires parent document, no standalone meaning

- Weak: compression collapse “These signals help systems understand what the content is about and how it relates to the broader topic.” Compression retention: 12/100 — when compressed, becomes “signals help systems understand content topics” (generic, unattributable)

Compare that to what a high-scoring section looks like:

- Strong: explicit entity naming “The GEO Stack’s entity reinforcement layer measures how consistently a page names its core concepts rather than using pronoun references.” Entity explicitness: 92/100 — “GEO Stack” and “entity reinforcement layer” named explicitly

- Strong: declarative opening “Entity explicitness is the ratio of named entity mentions to pronoun references within a content section.” Declarative opening: 100/100 — factual definition, standalone, no context needed

- Strong: compression-resistant “The GEO Lab measured a 24 percentage point citation rate improvement from structural GEO changes in Experiment 001.” Compression retention: 95/100 — compressed form preserves entity, number, and claim
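The difference between the weak and strong compression examples can be checked mechanically once you have the compressed text. The sketch below assumes the compression itself was done elsewhere (for example by an LLM) and only tests what survived. `retains_identity`, the entity list, and the claim terms are hypothetical choices for illustration.

```python
import re


def retains_identity(compressed, entity_names, claim_terms):
    """Report whether a compressed sentence kept an entity name and the claim terms."""
    lowered = compressed.lower()

    def present(term):
        # Whole-word, case-insensitive match.
        return re.search(r"\b" + re.escape(term.lower()) + r"\b", lowered) is not None

    return {
        "entity": any(present(name) for name in entity_names),
        "claim": all(present(term) for term in claim_terms),
    }


entities = ["GEO Stack", "GEO Lab"]  # hypothetical entity list

# Compressed form of the weak example, as quoted above.
weak = "signals help systems understand content topics"
print(retains_identity(weak, entities, ["signals"]))
# {'entity': False, 'claim': True}

# The strong sentence, used verbatim as a stand-in for its compressed form.
strong = ("The GEO Lab measured a 24 percentage point citation rate "
          "improvement from structural GEO changes in Experiment 001.")
print(retains_identity(strong, entities, ["24", "citation rate"]))
# {'entity': True, 'claim': True}
```

The weak example keeps its generic claim but loses every entity name, which is the failure the 12/100 retention score describes.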

How to Close the Gap: Entity Reinforcement Protocol

If you do not fix entity explicitness, the retrieval system will continue citing your page while the generation system attributes your concepts to “some practitioners” or, worse, to a competitor whose content names the same concepts more explicitly. You will have the citation. You will not have the representation. The competitor with three posts and better entity naming will own the concept in AI-generated answers.

The fix is mechanical. I applied it to the flagged page and the entity signal score moved from 15 to 78 in a single editing pass.

- Replace every pronoun reference with the named entity

  Search for “it”, “this”, “that”, “these”, and “the approach” in your content. Replace each one with the actual entity name. “It improves performance” becomes “The GEO Stack improves retrieval performance.” Yes, it feels repetitive in prose. The prose is not the audience; the compression algorithm is.

- Rewrite every section opening as a standalone declarative statement

  Delete “As mentioned above”, “Building on this”, and every other phrase that assumes the reader has seen the previous section. Each H2 section should open with a factual claim that makes sense if extracted in complete isolation. RAG systems retrieve sections, not documents.

- Run the compression test on every section

  Ask an LLM to compress each section to one sentence. If the compressed form does not contain the entity name and the specific claim, the section will lose its identity during extraction. Rewrite until the compressed form preserves both.

- Check standalone coherence

  Read each section without reading any other section on the page. Does it make sense? Does it restate the topic from the H1? Sections that score below 0.3 topic overlap with the page title get a −12 penalty in the GEO Stack scoring model because they are opaque to retrieval systems operating at the passage level.
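The standalone coherence check can be approximated in code. The 0.3 threshold and the −12 penalty come from the text above, but how the GEO Stack model computes topic overlap is not stated here, so the sketch below assumes one simple definition: the fraction of non-stopword title terms that also appear in the section. `topic_overlap`, `coherence_adjustment`, the stopword list, and the page title are all hypothetical.

```python
import re

# Small illustrative stopword list; a real one would be longer.
STOPWORDS = {"a", "an", "and", "the", "of", "to", "in", "for", "on", "is", "vs"}


def topic_overlap(section, title):
    """Fraction of title terms (minus stopwords) that appear in the section."""
    title_terms = set(re.findall(r"[a-z0-9]+", title.lower())) - STOPWORDS
    if not title_terms:
        return 0.0
    section_terms = set(re.findall(r"[a-z0-9]+", section.lower()))
    return len(title_terms & section_terms) / len(title_terms)


def coherence_adjustment(section, title, threshold=0.3, penalty=-12):
    """Return the penalty when overlap falls below the threshold, else 0."""
    return penalty if topic_overlap(section, title) < threshold else 0


title = "Citation Rate and Entity Signals"  # hypothetical page title
anchored = "Entity signals measure how explicitly a page names its core concepts."
opaque = "It works because of those issues."

print(topic_overlap(anchored, title), coherence_adjustment(anchored, title))  # 0.5 0
print(topic_overlap(opaque, title), coherence_adjustment(opaque, title))      # 0.0 -12
```

Exact word matching is crude: it misses synonyms and inflected forms, so an embedding-based overlap would likely behave differently near the threshold.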

Result: After applying this protocol to the flagged page, entity signals moved from 15/100 to 78/100. Citation rate remained at 100% — retrieval was never the problem. But the accuracy of how the page’s concepts appeared in AI-generated answers improved measurably. The page went from being cited as “some GEO practitioners suggest” to being cited as “The GEO Lab’s entity reinforcement framework.”

What This Means for GEO Strategy

The citation rate metric is seductive because it is binary and easy to celebrate. Your page was cited or it was not. But citation rate alone is the equivalent of measuring whether someone mentioned your name at a conference without checking whether they described your work accurately.

Entity signals measure the second part. They measure whether the AI system, having retrieved your content, can accurately reconstruct what your content actually says. A low entity signal score means the system is citing you but paraphrasing you into generic advice that could have come from anyone.

- Citation rate and entity signals are architecturally independent metrics. Optimising for retrieval does not automatically improve representation.

- Entity explicitness is the most mechanically fixable component. It is a pronoun-to-entity ratio. Replace pronouns with named entities.

- Declarative section openings are the second largest lever. Every section must be a standalone factual claim, not a continuation of the section above it.

- Compression retention is the ultimate test. If an LLM compresses your section and the entity name disappears, the section will be misrepresented in generated answers.

- The fix is editorial, not technical. This is not a schema problem, a site speed problem, or a crawlability problem. It is a writing problem.

Deeper dive: The GEO Stack scoring architecture and the five-layer framework that produces these scores are documented in The GEO Stack: Five-Layer Framework. The entity reinforcement layer specifically is covered in Entity Reinforcement: Building Semantic Gravity in AI Search.

Frequently Asked Questions

Can a page have high entity signals but a low citation rate?

Yes. Entity signals measure content quality at the section level. Citation rate depends on document-level retrieval signals including topical authority, freshness, and domain reputation. A perfectly structured page on a new domain with no backlinks may have excellent entity signals but never be retrieved.

Does fixing entity signals improve citation rate?

Not directly. Citation rate depends on retrieval, which is driven by different signals. However, improving entity signals can indirectly improve citation rate over time: if AI systems more accurately represent your content, downstream training data (for systems like ChatGPT) will more strongly associate your entity names with the relevant topics.

How do I measure entity signals on my own content?

The simplest test: ask an LLM to compress each section of your page to one sentence. If the compressed sentence does not contain your brand name or core concept name, your entity signals are weak. The GEO Stack scoring engine automates this with a four-component weighted score.

Is this the same as keyword density?

No. Keyword density is a document-level metric from traditional SEO. Entity explicitness is a section-level metric that measures the ratio of named entities to pronoun references within each individual section. The goal is not repetition for ranking — it is semantic anchoring for extraction.
