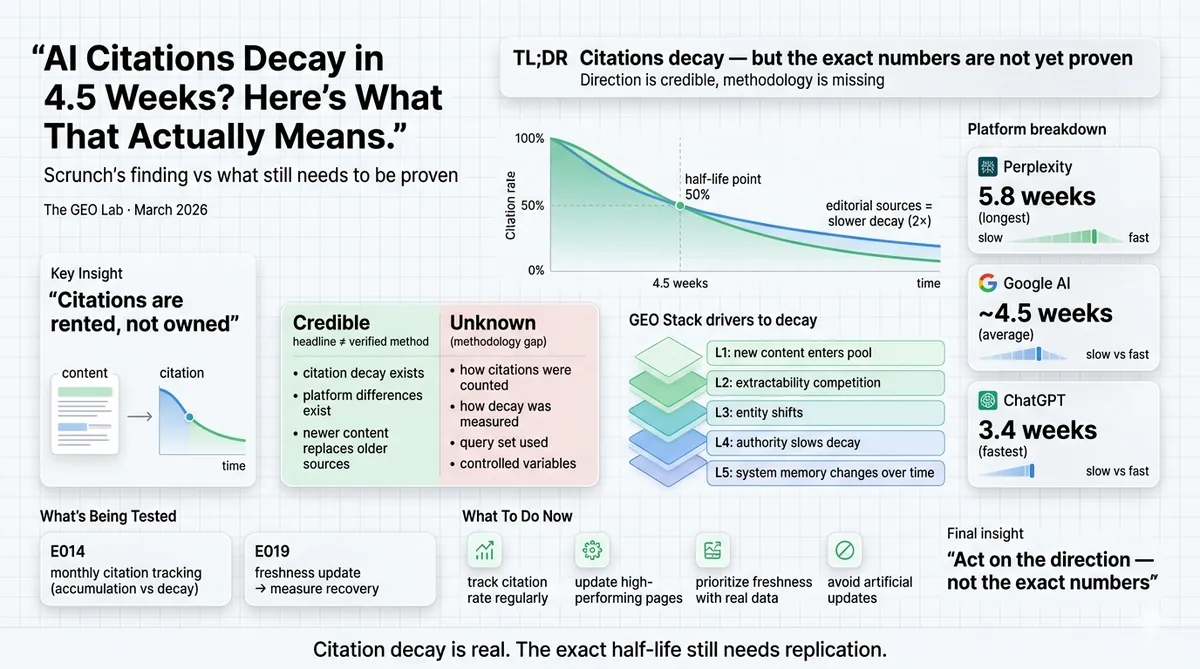

3.5 million citation events. Platform variance that maps directly to the GEO Stack. A conclusion dressed as research. Here is how to read the finding — and what needs to be measured before it becomes advice.

Scrunch AI published research in March 2026 claiming that the average AI citation loses 50% of its presence in approximately 4.5 weeks. Platform variance: Perplexity holds citations longest at 5.8 weeks, ChatGPT cycles fastest at 3.4 weeks, Google AI surfaces cluster in the middle. The finding is directionally correct and maps to what the GEO Stack’s Layer 5 (System Memory) predicts about citation decay. The problem is that Scrunch has published a conclusion without a methodology. “3.5 million citation events” is a headline number, not a verification path. Before this becomes a strategy recommendation, it needs replication with a documented measurement approach. As of March 2026, The GEO Lab’s E014 (System Memory tracking) and E019 (content freshness decay) experiments are designed to do exactly that. Results will be published here as they come in.

The Scrunch Finding and What It Claims

I have been measuring citation rate on thegeolab.net since January 2026. The thing I noticed early — and documented in the E014 design — is that citation rate is not a stable number. Run the 30-check protocol on the same page in the same week twice, and you get variance. Run it a month apart, and the variance is larger. I assumed this was mostly noise. The Scrunch data suggests it might be signal.

Scrunch AI published research in March 2026 analysing 3.5 million AI citation events across multiple platforms. Their headline finding: the average AI citation loses 50% of its presence in approximately 4.5 weeks. The data also showed meaningful platform variance: Perplexity held citations longest at 5.8 weeks, ChatGPT cycled fastest at 3.4 weeks, and Google AI surfaces clustered in between.

The research also found that citations from established editorial sources last approximately twice as long as average. Industry differences were real but narrower than platform differences — financial and insurance sources persisted longest, healthcare and ecommerce turned over fastest, but the platform you appear on matters more than the vertical you’re in.
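To make the half-life claim concrete, here is a minimal sketch of what the reported figures would imply over time. It assumes simple exponential decay, which is my assumption: Scrunch has not published the shape of the curve. The function name `remaining_presence` is illustrative only.

```python
# Half-lives in weeks as reported in the Scrunch post (not independently verified).
HALF_LIFE_WEEKS = {"average": 4.5, "perplexity": 5.8, "chatgpt": 3.4}


def remaining_presence(weeks_elapsed: float, half_life_weeks: float) -> float:
    """Fraction of citation presence left after weeks_elapsed.

    ASSUMPTION: decay is exponential. The source only reports the point
    at which 50% of presence is lost, not the curve between points.
    """
    if half_life_weeks <= 0:
        raise ValueError("half_life_weeks must be positive")
    return 0.5 ** (weeks_elapsed / half_life_weeks)


if __name__ == "__main__":
    for name, half_life in HALF_LIFE_WEEKS.items():
        share = remaining_presence(8, half_life)
        print(f"{name}: {share:.2f} of presence left after 8 weeks")
```

By definition the function returns 0.5 when the elapsed time equals the half-life. Everything between and beyond that point depends on the exponential assumption, which is exactly the kind of detail a published methodology would settle.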

The practical implication Scrunch draws: citations are rented, not owned. AI search requires constant reinforcement. Build content refresh cycles into your workflow. Monitor which platforms drive your traffic.

That conclusion is mostly right. The path to it is what I want to examine.

What Is Credible — and Why

Citation decay is real and the GEO Stack predicts it. When an AI retrieval system updates its corpus — which Perplexity, ChatGPT, and Google AI Overviews do continuously — new sources enter the retrieval pool for any given topic. Those new sources compete for the same retrieval slot as your existing content. If the new sources are fresher, better structured, or more specifically aligned with how the query is evolving, your citation rate drops. This is not surprising. It is the same mechanism that causes organic rankings to shift over time when new content enters a competitive SERP.

Going deeper on System Memory? The GEO Pocket Guide covers the full Layer 5 methodology — how citation rate compounds over time, how entity associations build, and the measurement protocol for tracking System Memory progress.

The platform variance finding is also plausible. The 30-check protocol at The GEO Lab already shows different citation rates for identical content across Perplexity, ChatGPT, and Google AI Overviews, and the 12% source overlap figure from xFunnel’s 2025 analysis supports the view that the platforms are not retrieval-equivalent. It would be consistent for platforms that cite at different volumes to also hold those citations for different lengths of time. Perplexity cites 7.42 sources per response on average and appears to update its retrieval corpus more frequently than ChatGPT. With that many slots per response, a new source can enter the citation set without displacing an existing one, so a longer citation half-life for Perplexity is plausible under that model. ChatGPT’s selectivity (3.86 citations per response) paired with a faster cycling rate also fits: with fewer slots available, each new entrant is more likely to push an existing source out.

The “established editorial sources last 2x longer” finding is the most strategically significant. It maps directly to GEO Stack Layer 4 (Structural Authority) and Layer 5 (System Memory). A site with consistent publishing history and external citation signals would have stronger entity associations in the retrieval system’s accumulated model — which under this framework would produce more persistent citations. This is the finding I most want to test against The GEO Lab’s own data, because thegeolab.net is a young site without established editorial status. If the finding holds, it sets a specific expectation for how citation persistence should change as the site matures.

The Methodology Gap That Prevents This From Being Advice Yet

Here is where I stop nodding along.

“3.5 million AI citation events” is a headline number. It is not a methodology. Before this finding can inform a strategy recommendation, I need answers to several questions that Scrunch has not addressed publicly:

- What counts as a citation event? A URL appearing in a response? A domain being mentioned? A verbatim quote? These are meaningfully different things. A platform that mentions a domain name without linking to a specific page has “cited” in a much weaker sense than one that includes a URL with attribution.

- How was decay measured? Did Scrunch run the same queries repeatedly over weeks and track which sources appeared? Did they monitor a fixed panel of pages? Were they scraping AI outputs at scale or using a controlled query set? The decay curve shape depends entirely on the measurement approach.

- What was the query set? Citation persistence likely varies substantially across query types — informational vs commercial vs navigational. A single decay figure averaged across all query types may be meaningless for any specific query type.

- Was the panel of pages controlled? If the tracked pages were also receiving new backlinks, publishing new content, or being updated during the measurement period, the decay curve includes confounds. A page that updated its statistics in week 3 of a 6-week measurement is not a clean decay observation.
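The first question above is easy to underestimate, so here is a small sketch of how the same set of AI responses yields different citation counts under three definitions. The `Response` structure, the page URL, and the sample answers are all hypothetical. This is not how Scrunch counts, because that is the part they have not published.

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class Response:
    text: str                                         # answer text from the platform
    linked_urls: list = field(default_factory=list)   # URLs attached as sources


def is_cited(resp: Response, page_url: str, quote: str, definition: str) -> bool:
    """Apply one of three hypothetical definitions of a 'citation event'."""
    domain = urlparse(page_url).netloc
    if definition == "url":       # exact page URL attached as a source
        return page_url in resp.linked_urls
    if definition == "domain":    # domain linked OR merely mentioned in the text
        linked = any(urlparse(u).netloc == domain for u in resp.linked_urls)
        return linked or domain in resp.text
    if definition == "quote":     # verbatim phrase reproduced in the answer
        return quote.lower() in resp.text.lower()
    raise ValueError(f"unknown definition: {definition}")


def count_citations(responses, page_url, quote, definition) -> int:
    return sum(is_cited(r, page_url, quote, definition) for r in responses)


PAGE_URL = "https://thegeolab.net/example-page"   # hypothetical URL
QUOTE = "citation rate is not a stable number"
SAMPLE = [
    Response("According to thegeolab.net, citation rate is not a stable number."),
    Response("Rates vary from week to week.", [PAGE_URL]),
    Response("Decay is plausible.", [PAGE_URL]),
    Response("An unrelated answer.", ["https://example.com/other"]),
]

if __name__ == "__main__":
    for definition in ("url", "domain", "quote"):
        print(definition, count_citations(SAMPLE, PAGE_URL, QUOTE, definition))
```

Four responses produce three different totals depending on the definition chosen. A decay curve built on the loosest definition and one built on the strictest are not measuring the same thing.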

The commercial motive problem: The research was published by a company selling a citation monitoring tool. The conclusion — “monitor which platforms actually drive your traffic with tools like Scrunch” — appears in the body of the research post. This does not make the finding wrong. It does make independent replication the right response before building a strategy around specific numbers like “4.5 weeks” and “5.8 weeks for Perplexity.”

The direction of the finding is almost certainly correct. The specific numbers need verification. There is a meaningful difference between “citations decay and you should refresh your content” (directionally right, actionable without precise numbers) and “Perplexity holds citations 70% longer than ChatGPT so allocate your resources accordingly” (requires precise measurement methodology to act on).

The GEO Lab’s job here is to test the specific numbers, not just accept the direction.

How Citation Decay Maps to GEO Stack Layer 5

The System Memory layer of the GEO Stack describes the accumulated contextual model AI retrieval systems build about a site over time. The decay finding, if it replicates, is evidence that this model is not static: it is continuously updated, and old positions within it are displaced by new sources. Citation rate is a snapshot of a moving target.

| Decay driver | GEO Stack layer | What it means for your content |
|---|---|---|
| Newer sources enter the retrieval pool on the same topic | L1: Retrieval Probability | Your section’s semantic alignment score weakens relative to fresher content. Update statistics and datestamp to compete. |
| Retrieval corpus refreshes with more recently published content | L2: Extractability | Structurally superior but older content can be displaced by structurally adequate newer content. Freshness is a tiebreaker. |
| Entity associations shift as the topic evolves | L3: Entity Reinforcement | New canonical terminology for your topic may emerge. Your entity naming may drift from the current consensus vocabulary. |
| Established editorial sources persist longer | L4: Structural Authority | External citation history slows decay. A site cited by others holds its position against new sources more reliably. |
| Citation persistence compounds with publishing cadence | L5: System Memory | A site that publishes consistently maintains stronger entity associations and decays more slowly than a site that publishes sporadically. |

What The GEO Lab Is Testing Against This Finding

Two experiments, scheduled to run in parallel, will produce independent data on citation decay. Neither was designed specifically to test Scrunch’s numbers; both were planned before the Scrunch research was published. But the data they produce will either corroborate or contradict the 4.5-week half-life finding with documented methodology.

E014 — System Memory Accumulation Curve

I documented the E014 design in the GEO Lab’s implementation tracker. The experiment runs the full 30-check protocol — 10 queries × 3 platforms = 30 data points per measurement round — on the same set of core GEO Lab queries every 30 days from April through September 2026. Six measurement rounds across six months.
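For readers who want to see the arithmetic of one measurement round, here is a minimal sketch of turning 30 checks into a citation rate. The query labels and the pattern of hits are invented for illustration, and the data layout is my assumption rather than the tracker’s actual format.

```python
from collections import defaultdict

PLATFORMS = ("perplexity", "chatgpt", "google_ai_overviews")


def citation_rates(checks):
    """Return (overall_rate, per_platform_rates).

    checks: iterable of (query, platform, cited) tuples, one per check.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for _query, platform, cited in checks:
        totals[platform] += 1
        hits[platform] += int(cited)
    per_platform = {p: hits[p] / totals[p] for p in totals}
    overall = sum(hits.values()) / sum(totals.values())
    return overall, per_platform


# Hypothetical round: 10 queries x 3 platforms = 30 checks.
CITED_PAIRS = {
    (0, "perplexity"), (1, "perplexity"), (2, "perplexity"),
    (0, "chatgpt"),
    (5, "google_ai_overviews"), (6, "google_ai_overviews"),
}
SAMPLE_ROUND = [
    (f"query-{i}", platform, (i, platform) in CITED_PAIRS)
    for i in range(10)
    for platform in PLATFORMS
]

if __name__ == "__main__":
    overall, per_platform = citation_rates(SAMPLE_ROUND)
    print(f"overall: {overall:.2f}")
    for platform, rate in per_platform.items():
        print(f"{platform}: {rate:.2f}")
```

With only 30 checks per round, a single citation gained or lost moves the overall rate by about 3.3 percentage points, which is one reason same-week reruns show variance.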

The original design was intended to test whether citation rate accumulates over time with consistent publishing. The Scrunch finding adds a second test: does citation rate also decay between measurement rounds on pages that have not been refreshed? If the 4.5-week half-life holds, pages that have not been updated since the previous round should show measurable decline. Pages that received a substantive update (new statistics, new H2 section, updated dateModified) should hold their rate or show less decay.

This gives a within-experiment comparison of decay rate for updated vs non-updated content — the specific variable the Scrunch research doesn’t control for.
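The comparison can be sketched numerically. The code below assumes exponential decay (my assumption, not something Scrunch has documented) and uses two hypothetical helper functions: one gives the retention a 4.5-week half-life would predict between rounds, the other backs out the half-life implied by two measured rates.

```python
import math


def expected_retention(days_between_rounds: float, half_life_weeks: float) -> float:
    """Share of the earlier citation rate predicted to survive to the next round."""
    weeks = days_between_rounds / 7
    return 0.5 ** (weeks / half_life_weeks)


def implied_half_life_weeks(rate_before: float, rate_after: float,
                            days_between_rounds: float) -> float:
    """Half-life implied by two measured rates, ASSUMING exponential decay.

    Returns math.inf when the rate did not decline between rounds.
    """
    if rate_before <= 0 or rate_after <= 0:
        raise ValueError("rates must be positive to estimate a half-life")
    if rate_after >= rate_before:
        return math.inf
    weeks = days_between_rounds / 7
    return weeks * math.log(2) / math.log(rate_before / rate_after)


if __name__ == "__main__":
    # Prediction for a non-updated page measured 30 days apart.
    print(f"predicted retention: {expected_retention(30, 4.5):.3f}")
    # Hypothetical measured rates for a non-updated and an updated page.
    print(f"non-updated: {implied_half_life_weeks(0.30, 0.17, 30):.1f} weeks")
    print(f"updated:     {implied_half_life_weeks(0.30, 0.27, 30):.1f} weeks")
```

Thirty days is about 4.3 weeks, so a 4.5-week half-life predicts that a non-updated page keeps roughly 52% of its previous rate. A two-point estimate like this is noisy at 30 checks per round, so the six-round series matters more than any single pair of rounds.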

E019 — Content Freshness Decay

E019 is designed as a direct freshness intervention test. I’ll identify three GEO Lab pages with statistics dated more than six months ago, run the 30-check protocol to establish a baseline citation rate, update the statistics with current equivalents from named sources, and remeasure after 14 days.

If the Scrunch finding holds, pages with stale statistics should be showing citation rate below their post-publication peak. The freshness intervention should reset the decay curve — or at least slow it. The data will show whether a targeted statistics update produces the kind of citation rate recovery that the Scrunch framework implies it should.

Results from both experiments will be published in the GEO Log as they come in. If the 4.5-week half-life holds in The GEO Lab’s data, it becomes a canonical finding worth adding to the GEO Stack framework documentation with a proper source. If it doesn’t hold, because the decay curve is slower, faster, or shaped differently for research-focused content, that is equally worth publishing.

What to Do Now — Before the Data Is In

The Scrunch finding is a hypothesis worth acting on directionally — not a strategy to execute mechanically before the methodology is published.

Here is what is actionable now from the direction of the finding alone, without requiring the specific numbers to be exact:

Stop treating a citation as a permanent achievement. Whether the half-life is 4.5 weeks or 8 weeks, the principle holds: citation rate is a snapshot that requires maintenance. Build a measurement cadence into your workflow. The GEO Lab uses the 30-check protocol monthly for core pages. Even quarterly would be an improvement over “measure once at launch.”

Prioritise freshness interventions before decay, not after. If the Scrunch data is directionally correct, your highest-citation pages that haven’t been updated are the ones most likely to be decaying right now. The priority queue for freshness updates should start with the pages where decay is most costly (highest citation rate, highest commercial intent), not with the oldest pages by publication date.
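One way to sketch that priority queue is below. The `commercial_intent` score, the 30-day minimum age, and the scoring formula (citation rate multiplied by commercial intent) are all assumptions for illustration, not part of the 30-check protocol.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    citation_rate: float      # share of checks where the page was cited (0 to 1)
    commercial_intent: float  # hypothetical 0-to-1 score assigned per page
    days_since_update: int


def refresh_queue(pages, min_age_days: int = 30):
    """Pages due for a substantive refresh, most costly decay first.

    Ordering is by citation_rate * commercial_intent, NOT by page age.
    """
    due = [p for p in pages if p.days_since_update >= min_age_days]
    return sorted(due, key=lambda p: p.citation_rate * p.commercial_intent,
                  reverse=True)


if __name__ == "__main__":
    pages = [
        Page("/old-low-value", 0.10, 0.9, 400),
        Page("/high-value", 0.50, 0.8, 60),
        Page("/just-updated", 0.60, 0.9, 5),
    ]
    for page in refresh_queue(pages):
        print(page.url)
```

The oldest page does not come first here: the page with the most citation value at risk does, and a recently updated page is left out of the queue entirely.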

Weight platform citations differently when allocating resources. If Perplexity citations persist 70% longer than ChatGPT citations, the relative value of appearing on Perplexity is higher than a raw citation count comparison would suggest. The 30-check protocol already tests across all three platforms. The Scrunch data suggests tracking which platform produced each citation, not just whether you were cited.
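The 70% figure is just the ratio of the two reported half-lives, and the weighting can be sketched as follows. This assumes exponential decay, under which expected citation lifetime is proportional to half-life. The half-lives are Scrunch’s unverified numbers, and the function names are illustrative.

```python
# Reported half-lives in weeks (Scrunch, not independently verified).
HALF_LIFE_WEEKS = {"perplexity": 5.8, "chatgpt": 3.4}


def persistence_weight(platform: str, reference: str = "chatgpt") -> float:
    """Relative persistence of a citation versus the reference platform."""
    return HALF_LIFE_WEEKS[platform] / HALF_LIFE_WEEKS[reference]


def weighted_citations(counts: dict, reference: str = "chatgpt") -> float:
    """Citation count with each platform's citations scaled by persistence."""
    return sum(n * persistence_weight(platform, reference)
               for platform, n in counts.items())


if __name__ == "__main__":
    weight = persistence_weight("perplexity")
    print(f"Perplexity weight vs ChatGPT: {weight:.2f}")
    print(f"Weighted total: {weighted_citations({'perplexity': 2, 'chatgpt': 2}):.2f}")
```

Two citations on each platform count as four in a raw tally but closer to 5.4 once persistence is weighted in. If the half-lives fail to replicate, only the `HALF_LIFE_WEEKS` values need to change.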

Do not begin a mechanical “refresh everything every 4.5 weeks” schedule. This is the wrong conclusion from the right finding. Artificial freshening — cosmetic updates to justify a timestamp change — is documented as a Google spam signal risk. The finding justifies substantive content maintenance on a rolling basis. It does not justify manufacturing false freshness signals.

What Practitioners Say

“The methodological scepticism here is the right posture. A 4.5-week half-life sounds precise enough to build a workflow around. But without knowing how Scrunch counted a citation event, the specific number could be meaningfully different under a different measurement approach. The directional finding is credible. The precision requires verification before it becomes operational.”

— Sofia Andrade, Head of Organic Growth, Pipedrive

“The platform persistence finding is the one with the most immediate strategic value. If Perplexity holds citations 70% longer than ChatGPT, the resource allocation implications are material — especially for clients who have been measuring citation presence without weighting for persistence. The E014 data will tell us whether the 5.8 vs 3.4 weeks split holds outside Scrunch’s proprietary dataset.”

— James Whitfield, SEO Director, Hotjar

Frequently Asked Questions

Do AI citations decay over time?

Yes. Citation decay is a real phenomenon predicted by the GEO Stack’s Layer 5 framework. AI retrieval systems continuously update their corpus as new content is published. A page that earns a given citation rate in month one will see that rate erode over time as fresher sources compete for the same retrieval slot. Scrunch’s March 2026 research found an average 4.5-week half-life across 3.5 million citation events. The GEO Lab’s E014 experiment is testing this finding independently with documented methodology.

Which AI platform holds citations longest?

According to Scrunch’s March 2026 research, Perplexity holds citations longest at approximately 5.8 weeks before 50% decay. Google AI surfaces cluster in the middle at 4.3–4.8 weeks. ChatGPT cycles through sources fastest at approximately 3.4 weeks. Platform choice matters more than industry vertical in determining citation persistence under the Scrunch data. These figures need independent verification — Scrunch has not published their measurement methodology.

What does citation decay mean for GEO strategy?

Citation decay means that earning a citation is the beginning of a maintenance process, not the end of an optimisation project. The practical implications: monitor citation rate monthly rather than treating it as a one-time measurement, prioritise freshness interventions on highest-citation pages before decay begins, and weight Perplexity citations more heavily when allocating resources because they persist approximately 70% longer than ChatGPT citations under the Scrunch data.

What is The GEO Lab testing against the Scrunch finding?

Two experiments. E014 runs the 30-check protocol monthly across 10 core queries from April through September 2026, measuring whether citation rate accumulates and decays in a pattern consistent with the 4.5-week half-life finding. E019 tests whether a targeted statistics update on pages with stale data resets the decay curve — the specific intervention the Scrunch framework implies should work. Both sets of results will be published in the GEO Log as they come in.

Should I update my content every 4.5 weeks based on this finding?

No. Artificial freshening — cosmetic updates to justify a timestamp change — is a documented Google spam signal risk and is explicitly excluded from the GEO Stack’s freshness protocol. The Scrunch finding justifies substantive content maintenance on a rolling basis: updating statistics with current equivalents, adding new findings as they emerge, and refreshing sections where the evidence base has changed. It does not justify manufacturing false freshness signals on a mechanical schedule.

Scrunch’s 4.5-week citation half-life finding is directionally correct. AI retrieval systems update their corpus continuously. Citations decay as newer sources displace older ones. This is what the GEO Stack’s Layer 5 predicts — and the platform variance (Perplexity 5.8 weeks, ChatGPT 3.4 weeks) is consistent with what the 30-check protocol already shows about different platform citation behaviours.

The specific numbers are not yet independently verified. “3.5 million citation events” is a headline without a methodology. Before these figures become operational strategy inputs, they need replication with a documented measurement approach. As of March 2026, E014 and E019 at The GEO Lab are designed to provide that replication. Results will be published here.

What is actionable now without the verification: treat citation rate as a maintenance metric, not a launch metric. Measure monthly. Prioritise freshness updates on highest-citation pages. Weight platform citations by persistence, not just presence. Never refresh cosmetically, only substantively.

Want to establish your own citation decay baseline? The GEO Brand Citation Index and the AI Visibility Diagnostics Console run the 30-check protocol across Perplexity, ChatGPT, and Google AI Overviews — giving you a citation rate snapshot you can remeasure monthly to track decay in your own content.

Questions about this post or the E014 experiment design? Contact The GEO Lab.