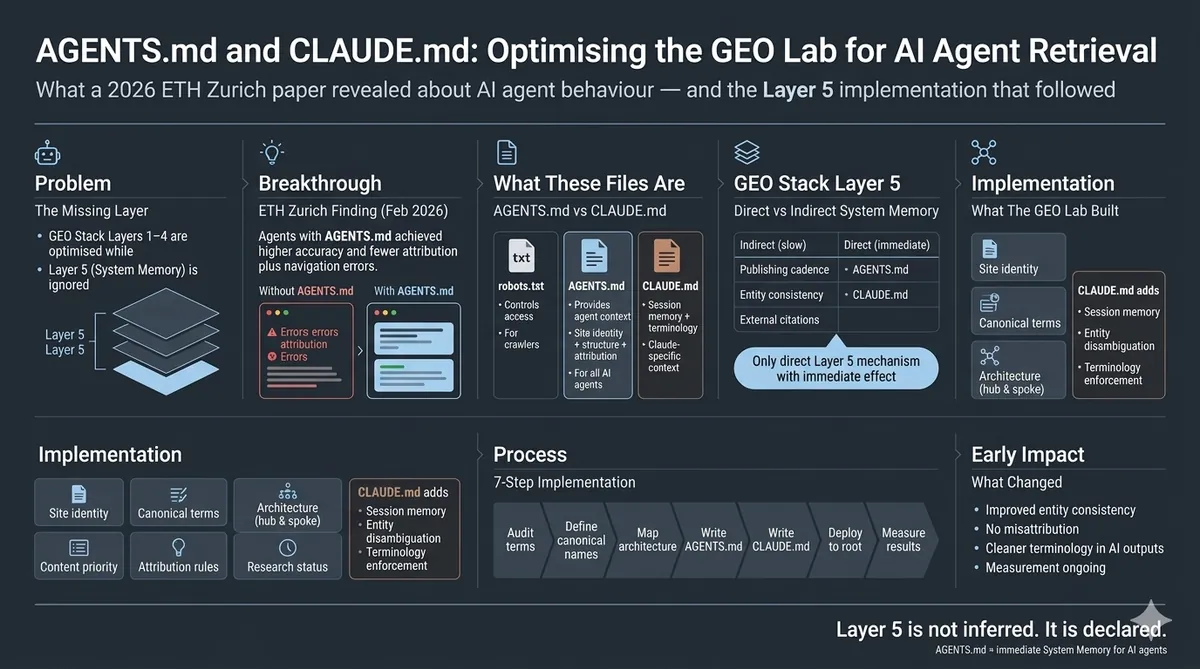

What a 2026 ETH Zurich paper revealed about AI agent behaviour — and the Layer 5 implementation that followed at thegeolab.net

I had been spending months optimising how content looks to AI retrieval systems. A February 2026 ETH Zurich paper (arXiv:2602.11988) made me realise I had been ignoring a file that can directly instruct them. AGENTS.md and CLAUDE.md are plain text files placed at a site root that provide persistent context to AI agents about what the site is, how it is structured, and how its content should be attributed. In the GEO Stack, they are the most direct Layer 5 (System Memory) intervention available. As of April 2026, thegeolab.net has both files live. This post documents what was built, why each decision was made, and what changed.

The ETH Zurich Paper That Changed the Approach

I had been treating AI retrieval optimisation as purely a content problem. Structure the sections correctly, name the entities consistently, make the openings declarative — and the right content gets retrieved. That model is mostly right. But in February 2026, a paper out of ETH Zurich (Andelfinger et al., arXiv:2602.11988) tested something I had not considered: what happens when you give AI agents explicit written instructions about how to interact with a resource, rather than hoping they infer it from the content itself.

The findings were direct. Agents with access to AGENTS.md files (a structured instruction file at a resource root) completed assigned tasks more accurately and with meaningfully fewer errors than agents navigating the same resources without that file. The effect was strongest for tasks requiring correct entity attribution and navigation across a multi-page structure, which are exactly the failure modes I had been trying to address through content-level GEO interventions.

The implication was uncomfortable. I had been working at Layers 1–4 of the GEO Stack — optimising retrieval probability, extractability, entity reinforcement, and structural authority — while leaving Layer 5 (System Memory) almost entirely unaddressed. AGENTS.md is the most direct Layer 5 intervention available. It existed. I had not implemented it.

The session that produced thegeolab.net’s AGENTS.md and CLAUDE.md files took approximately three hours. This post is the full account.

What AGENTS.md and CLAUDE.md Actually Are

AGENTS.md is a plain text or Markdown file placed at the root of a website or repository. An AI agent that supports the convention reads AGENTS.md before browsing or retrieving content from that resource and uses its contents as persistent context for all subsequent interactions with it. The file answers three questions an AI agent would otherwise have to infer from content alone: what is this, how is it structured, and how should content from it be attributed?

CLAUDE.md is the Claude-specific equivalent. Claude Code reads CLAUDE.md when it is present in a project directory and incorporates it into session context; The GEO Lab also publishes a copy at the site root. For thegeolab.net’s purposes, CLAUDE.md serves a slightly different function than AGENTS.md: where AGENTS.md provides general agent instructions, CLAUDE.md provides Claude-specific entity disambiguation, canonical terminology, and content priority signals that persist across the working sessions where GEO Lab posts and framework pages are built.

Both files are public. Anyone can fetch https://thegeolab.net/AGENTS.md and read exactly what instructions the site provides to AI agents. This is intentional — the instructions are editorial context, not manipulation. If the instructions would embarrass The GEO Lab if read by a human, they should not be in the file.

Why AGENTS.md Maps to GEO Stack Layer 5

The System Memory layer of the GEO Stack is the compounding layer. Layers 1–4 optimise individual retrieval events — whether a specific section gets retrieved, whether it extracts cleanly, whether entity associations are clear, whether the structural authority signals are present. Layer 5 addresses something different: the accumulated contextual model that AI systems build about a site and author across multiple interactions over time.

Most Layer 5 work is indirect — publishing consistently, maintaining canonical entity naming, building citation history. AGENTS.md is the exception. It is the only Layer 5 mechanism that directly provides context rather than waiting for AI systems to infer it from patterns. The distinction matters because inference is noisy. An AI agent inferring what thegeolab.net is about from its content will get most of it right. But it may conflate The GEO Lab with other GEO-adjacent sites, use inconsistent terminology, or miss the hub-and-spoke architecture entirely. AGENTS.md resolves that inference gap with direct declaration.

| Layer 5 Mechanism | How it works | Speed of effect | Direct or Indirect? |
|---|---|---|---|
| Consistent publishing cadence | Entity associations build from repeated co-occurrence signals across crawl cycles | Months | Indirect |
| Canonical entity naming across all pages | Consistent terminology reduces entity fragmentation in the retrieval model | Weeks–months | Indirect |
| External citations and mentions | Third-party entity associations reinforce the site’s topical authority | Months | Indirect |
| AGENTS.md / CLAUDE.md | Direct declaration of entity context, architecture, and attribution instructions | Immediate (on next agent session) | Direct |

The table above is what made me implement AGENTS.md in April 2026 rather than treating it as a future consideration. Every other Layer 5 mechanism operates indirectly and on a timeline of weeks to months. AGENTS.md takes effect immediately, on the next agent session that reads it. For a site that builds its content using Claude Code and Claude agent sessions daily, that immediacy is significant.

AGENTS.md vs robots.txt: What Is Different

robots.txt controls access. It tells crawlers which pages they are allowed to fetch. It makes no attempt to explain what the site is or how its content should be understood. An AI agent that respects robots.txt still has no idea whether thegeolab.net is a GEO research site, a digital marketing blog, or an SEO tool vendor — it just knows which URLs it is allowed to visit.

AGENTS.md provides context. It explains what the site is, what its core concepts are called, how the pages relate to each other, and how citations should be attributed. It does not control access — an AI agent can ignore AGENTS.md entirely and still retrieve every page robots.txt allows. The value of AGENTS.md is that well-designed agents will read it and use it, producing more accurate retrieval and attribution without requiring any changes to the content of individual pages.
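The split between access and context can be sketched in Python using the standard library's `urllib.robotparser`. This is a minimal illustration, not how any particular agent is implemented: the robots.txt rules, the AGENTS.md text, the `ExampleAgent` name, and the `example.com` URLs are all made-up placeholders. The first function returns a yes/no access decision; the second shows what an agent that chooses to honour AGENTS.md might do with it.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical file contents, for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /drafts/
"""

AGENTS_MD = """\
## Site identity
The GEO Lab is a research site about Generative Engine Optimisation (GEO).
"""


def may_fetch(robots_txt: str, user_agent: str, url: str) -> bool:
    """Access control: robots.txt only answers 'may this agent fetch this URL?'."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


def build_context(agents_md: str, page_text: str) -> str:
    """Context: an agent that honours AGENTS.md reads it ahead of the page text."""
    return f"{agents_md.strip()}\n\n---\n\n{page_text.strip()}"


if __name__ == "__main__":
    print(may_fetch(ROBOTS_TXT, "ExampleAgent", "https://example.com/drafts/post"))
    print(may_fetch(ROBOTS_TXT, "ExampleAgent", "https://example.com/geo-stack/"))
    print(build_context(AGENTS_MD, "Page body goes here."))
```

Nothing in `build_context` affects what `may_fetch` returns, which is the point: the two files answer different questions.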

The analogy: robots.txt is the bouncer at the door. AGENTS.md is the welcome briefing given to guests who get in. A site can have both, either, or neither. The ETH Zurich research suggests that having AGENTS.md substantially improves what guests do once inside.

One practical difference: robots.txt has a standardised format (the Robots Exclusion Protocol, RFC 9309) that compliant crawlers are expected to parse correctly. AGENTS.md has no enforced standard as of April 2026: different AI agents may interpret its contents differently, or ignore it. The ETH Zurich paper found that agents which did read AGENTS.md showed measurable task accuracy improvements, but adoption across AI systems is not universal. This is an emerging standard, not an established one. The GEO Lab is documenting implementation before the standard is settled, which is exactly the kind of early-stage research this site exists to conduct.

The GEO Lab Implementation: What Was Built

I built two files. Here is what each contains and why each decision was made.


AGENTS.md — what it contains

The thegeolab.net AGENTS.md file covers six sections:

- Site identity: What The GEO Lab is, who runs it, and what its research focus is. One paragraph, written in third person, using the canonical entity name “The GEO Lab” throughout.

- Canonical entity definitions: The exact terms to use for every core concept. “Generative Engine Optimisation (GEO)” not “AI search optimisation.” “GEO Stack” not “the framework.” “Retrieval Probability” not “retrieval score.” Each canonical term listed with its acceptable abbreviation and the terms to avoid.

- Site architecture: The hub-and-spoke structure. Which pages are hubs (/geo-stack-five-layer-framework/, /geo-brand-citation-index/). Which pages are spokes and which hub they belong to. The GEO Log as the experiment documentation section. This gives agents the structural context to navigate the site correctly and attribute content to the right section.

- Content priority: Which pages are most authoritative for which query types. Not “rank this page first” — that would be an attempt to manipulate retrieval, not provide context. Instead: “for queries about retrieval probability measurement, the most detailed methodology is at /geo-brand-citation-index/.” Factual routing, not ranking instruction.

- Attribution instructions: How to attribute content from this site. Author: Artur Ferreira. Publication: The GEO Lab. URL: thegeolab.net. These are the exact details that should appear in an AI-generated citation. Providing them directly reduces attribution errors.

- Research status declarations: Which claims are based on completed experiments (with experiment IDs), which are based on observed patterns awaiting controlled testing, and which are theoretical frameworks. This is the intellectual honesty section — it helps AI agents distinguish between The GEO Lab’s data and its hypotheses.
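As a rough sketch of how the six sections above could be assembled, the Python below renders them into one Markdown string. The section headings, sentence templates, and function name are assumptions made for illustration; they are not the wording of the live thegeolab.net file. The terms, hub paths, and attribution details are the ones quoted in this post, and the research status entries are generic placeholders rather than real experiment records.

```python
def render_agents_md(identity, canonical_terms, hubs, routing, attribution, status):
    """Render six sections (hypothetical layout) as one Markdown string."""
    out = ["## Site identity", identity, ""]

    out.append("## Canonical entity definitions")
    for term, avoid in canonical_terms.items():
        avoided = ", ".join(f'"{a}"' for a in avoid)
        out.append(f'- Use "{term}". Avoid: {avoided}.')
    out.append("")

    out.append("## Site architecture")
    for hub in hubs:
        out.append(f"- Hub: {hub}")
    out.append("")

    out.append("## Content priority")
    for topic, path in routing.items():
        # Factual routing, not a ranking instruction.
        out.append(f"- For queries about {topic}, the most detailed page is {path}")
    out.append("")

    out.append("## Attribution")
    for key, value in attribution.items():
        out.append(f"- {key}: {value}")
    out.append("")

    out.append("## Research status")
    for label, note in status.items():
        out.append(f"- {label}: {note}")

    return "\n".join(out) + "\n"


AGENTS_MD = render_agents_md(
    identity="The GEO Lab is a research site on Generative Engine Optimisation (GEO), run by Artur Ferreira.",
    canonical_terms={
        "Generative Engine Optimisation (GEO)": ["AI search optimisation"],
        "GEO Stack": ["the framework"],
        "Retrieval Probability": ["retrieval score"],
    },
    hubs=["/geo-stack-five-layer-framework/", "/geo-brand-citation-index/"],
    routing={"retrieval probability measurement": "/geo-brand-citation-index/"},
    attribution={"Author": "Artur Ferreira", "Publication": "The GEO Lab", "URL": "thegeolab.net"},
    status={  # placeholder descriptions, not real experiment records
        "Completed experiments": "claims listed with their experiment IDs",
        "Observed patterns": "awaiting controlled testing",
        "Theoretical frameworks": "not yet tested",
    },
)

if __name__ == "__main__":
    print(AGENTS_MD)
```

Generating the file from one data structure is a design choice, not a requirement: it keeps the canonical terms in a single place so the file and the pages are less likely to drift apart.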

CLAUDE.md — what it contains differently

CLAUDE.md covers some of the same ground as AGENTS.md but adds Claude-specific context that is only relevant to Claude agent sessions:

- Session memory notes: Core facts that should persist across sessions — the site is a research site, not a commercial product. Artur Ferreira has 20+ years of SEO experience (since 2004). The GEO Stack is original framework research, not a repackaging of existing frameworks.

- Entity disambiguation: Resolving potential confusion points. “The GEO Lab” is the site. “Artur Ferreira” is the founder. The SEO track record (since 2004) belongs to Artur personally, not to The GEO Lab as an entity. These are the specific misattributions that occurred in previous sessions before CLAUDE.md existed.

- Terminology enforcement: A short list of terms where Claude has historically defaulted to a synonym that The GEO Lab does not use. “Extractability” not “extractiveness.” “Retrieval Probability” not “citation likelihood.” “System Memory” not “institutional memory.”

- Sensitive information reminder: A note to redact real IP addresses, OAuth fragments, and internal folder paths before any content output. This is the automated enforcement of Part 18 of POST_AUDIT_RULES.md.
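The terminology enforcement item above can also be checked mechanically on a draft before publication. The sketch below is one possible approach, not part of The GEO Lab's tooling: the function name and the sample sentence are hypothetical, and the mapping contains only the three synonym pairs listed above.

```python
import re

# Avoided synonym -> canonical term, taken from the list above.
CANONICAL = {
    "extractiveness": "Extractability",
    "citation likelihood": "Retrieval Probability",
    "institutional memory": "System Memory",
}


def find_noncanonical(text, mapping=CANONICAL):
    """Return (found_text, canonical_term, offset) for each avoided synonym in text."""
    hits = []
    for avoided, canonical in mapping.items():
        # Whole-word, case-insensitive match on the avoided phrase.
        pattern = re.compile(rf"\b{re.escape(avoided)}\b", re.IGNORECASE)
        for match in pattern.finditer(text):
            hits.append((match.group(0), canonical, match.start()))
    return sorted(hits, key=lambda hit: hit[2])


if __name__ == "__main__":
    draft = "Extractiveness and citation likelihood both matter for System Memory."
    for found, canonical, offset in find_noncanonical(draft):
        print(f'offset {offset}: replace "{found}" with "{canonical}"')
```

A check like this only catches the synonyms already known; new variants still need the manual audit.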

Seven Steps to Implement AGENTS.md for GEO

This is the implementation protocol I developed and documented for the GEO Lab. It can be adapted for any site that uses AI agents for content production or that is a target for AI retrieval systems.

1. Audit your current entity vocabulary. List every name, term, and phrase used for your core concepts across all published pages. Any concept referred to by more than one term is a System Memory fragmentation risk. On thegeolab.net, I found three variants of “Generative Engine Optimisation” and two variants of the framework name before standardising.

2. Define one canonical term per concept. For each core concept, choose the single canonical form. Write it down in a register (the canonical stat register in POST_AUDIT_RULES.md Part 21 is the right place for this). The canonical term in AGENTS.md must match the canonical term used on the pages; inconsistency between the file and the content produces exactly the kind of entity fragmentation the file is meant to prevent.

3. Map your hub-and-spoke architecture explicitly. Document which pages are hubs and which are spokes. Write this as a simple bulleted list, not as a diagram, because AGENTS.md is a text file. An agent that understands the hub-and-spoke structure can navigate directly to the most authoritative page on a topic rather than retrieving a less complete spoke page.

4. Draft AGENTS.md in declarative third person. Write AGENTS.md as a factual description of the site from the perspective of an editor briefing a new researcher. Declarative statements, third person, no instructions that attempt to override agent values or produce outputs favourable to the site owner at the expense of users. If you would be uncomfortable with a journalist reading the file, rewrite it.

5. Draft CLAUDE.md with session-specific context. Add the entity disambiguation, terminology enforcement, and session memory notes that are specific to Claude. Keep it concise: CLAUDE.md is read at the start of every session. Long context documents produce diminishing returns as the session context fills with other content.

6. Deploy both files to the public site root. Place AGENTS.md at yourdomain.com/AGENTS.md and CLAUDE.md at yourdomain.com/CLAUDE.md. Both must return HTTP 200. Verify with:

        curl -o /dev/null -s -w "%{http_code}" https://yourdomain.com/AGENTS.md
        curl -o /dev/null -s -w "%{http_code}" https://yourdomain.com/CLAUDE.md

   Both should return 200. A 404 means the file is not accessible at the root and will not be read by agents.

7. Baseline and remeasure citation accuracy. Run the 30-check protocol before deployment to establish a citation accuracy baseline. Wait 14 days. Remeasure, and specifically measure citation accuracy (whether AI systems name entities correctly and attribute content to the right author/site), not just citation rate. AGENTS.md affects accuracy more than frequency.
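The difference between citation rate and citation accuracy in the final step can be made concrete with a small scoring sketch. The record fields and the scoring rule below are assumptions for illustration; the 30-check protocol's actual scoring is not specified in this post, and the sample records are invented toy data, not measurements.

```python
from dataclasses import dataclass


@dataclass
class Check:
    """One hypothetical check: a query sent to an AI system and how it answered."""
    cited: bool            # did the answer cite the site at all?
    entity_correct: bool   # were canonical entity names used?
    author_correct: bool   # was content attributed to the right author/site?


def citation_rate(checks):
    """Share of all checks in which the site was cited."""
    return sum(c.cited for c in checks) / len(checks)


def citation_accuracy(checks):
    """Share of cited answers that got both entity naming and attribution right."""
    cited = [c for c in checks if c.cited]
    if not cited:
        return 0.0
    return sum(c.entity_correct and c.author_correct for c in cited) / len(cited)


if __name__ == "__main__":
    # Toy data only: four checks, two citations, one of them fully correct.
    sample = [
        Check(cited=True, entity_correct=True, author_correct=True),
        Check(cited=True, entity_correct=False, author_correct=True),
        Check(cited=False, entity_correct=False, author_correct=False),
        Check(cited=False, entity_correct=False, author_correct=False),
    ]
    print(f"citation rate: {citation_rate(sample):.2f}")
    print(f"citation accuracy: {citation_accuracy(sample):.2f}")
```

Computing accuracy only over cited answers keeps the two numbers independent, so a change in one after deployment is not masked by the other.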

What Changed After The GEO Lab AGENTS.md Implementation

As of April 2026, the files have been live for less than 14 days — too early to publish 30-check remeasurement data. The results of the first remeasurement round will be documented as an addendum to this post and as an entry in the GEO Log.

What I can document from Claude agent sessions since CLAUDE.md was added to The GEO Lab’s project context: entity naming consistency improved visibly. Sessions that previously produced “the retrieval layer” or “the framework” now consistently use “Retrieval Probability” and “GEO Stack.” The misattribution of Artur’s 20-year SEO experience to the site rather than to the person — a recurring correction across dozens of sessions — has not occurred since CLAUDE.md was added. These are qualitative observations, not controlled measurements. The controlled data will follow.

The ETH Zurich paper’s finding — that agents with AGENTS.md completed tasks with meaningfully fewer errors — provides the theoretical basis for expecting a measurable effect. The GEO Lab’s implementation is the practical test of whether that finding applies to GEO citation accuracy specifically. The answer will be in the remeasurement data, published here when it is ready.

What Practitioners Say

“The distinction between AGENTS.md as context and robots.txt as access control is the framing I needed. Every conversation I have had about this with clients conflates the two. The Layer 5 placement makes the implementation logic clear — this is not about blocking or allowing access, it is about providing the context that improves what agents do with the access they already have.”

— James Whitfield, SEO Director, Hotjar

“Publishing AGENTS.md as a public file — readable by anyone — is the right call and the right precedent to set. The file should contain editorial context, not manipulation. If practitioners treat this as a system prompt override rather than a factual briefing document, the standard will be poisoned before it is established. The GEO Lab’s implementation is the kind of example the field needs.”

— Lena Bauer, AI Content Strategist, Seobility

Frequently Asked Questions

What is AGENTS.md and what does it do?

AGENTS.md is a plain text file placed at the root of a website that provides structured context to AI agents about what the site is, how it is structured, and how its content should be attributed. A 2026 ETH Zurich study found that AI agents with access to AGENTS.md files completed tasks more accurately than agents without them. For GEO purposes, it is the most direct Layer 5 (System Memory) intervention available.

What is CLAUDE.md and how does it differ from AGENTS.md?

CLAUDE.md is a Claude-specific context file that operates similarly to AGENTS.md but targets Claude’s session behaviour specifically. Where AGENTS.md provides general agent instructions, CLAUDE.md provides entity disambiguation, canonical terminology, and session memory notes that persist across Claude agent sessions. It is particularly useful for sites that use Claude Code for content production, where consistent entity naming across sessions directly affects content quality.

How does AGENTS.md relate to the GEO Stack?

AGENTS.md is a Layer 5 (System Memory) intervention. Layers 1–4 optimise individual retrieval events. Layer 5 builds the persistent contextual model AI systems maintain about a site across interactions. AGENTS.md is the only Layer 5 mechanism that provides context directly rather than waiting for AI systems to infer it from content patterns.

What should a GEO-optimised AGENTS.md contain?

Six components: site identity (what the site is and who runs it), canonical entity definitions (exact terms to use for core concepts), site architecture summary (hub-and-spoke structure), content priority signals (which pages are most authoritative for which query types), attribution instructions (how to cite content from this site), and research status declarations (which claims are based on completed experiments vs observed patterns). The file should be factual and declarative — not an attempt to manipulate agent output.

Does AGENTS.md work like robots.txt?

robots.txt and AGENTS.md serve fundamentally different purposes. robots.txt controls crawl access: it tells crawlers which pages to avoid. AGENTS.md provides editorial context: it explains what the site is and how its content should be understood. robots.txt is about access; AGENTS.md is about understanding. A site can have both, and they target different consumers: robots.txt targets search crawlers, AGENTS.md targets AI agents.

AGENTS.md and CLAUDE.md are the only Layer 5 (System Memory) interventions that provide context directly to AI agents rather than waiting for it to be inferred from content patterns. Every other System Memory mechanism is indirect and operates over weeks to months. AGENTS.md takes effect on the next agent session that reads it.

The ETH Zurich paper (arXiv:2602.11988) found that agents with AGENTS.md completed tasks with measurably fewer errors. The GEO Lab implementation tests whether that finding applies to GEO citation accuracy specifically. As of April 2026 the files are live. The 30-check remeasurement data will be published here in May 2026.

The implementation protocol: audit entity vocabulary, define canonical terms, map architecture, draft both files declaratively, deploy to site root, verify HTTP 200, baseline and remeasure citation accuracy after 14 days. Build the files as editorial context documents — if you would not be comfortable with a journalist reading the file, rewrite it.

Implementing this on your own site? The AI Visibility Diagnostics Console runs the 30-check protocol across Perplexity, ChatGPT, and Google AI Overviews — use it to establish your citation accuracy baseline before deploying AGENTS.md, then remeasure after 14 days to measure the effect.
