A deep dive into Layer 1 — the gateway variable that determines whether content enters the AI retrieval pipeline at all.

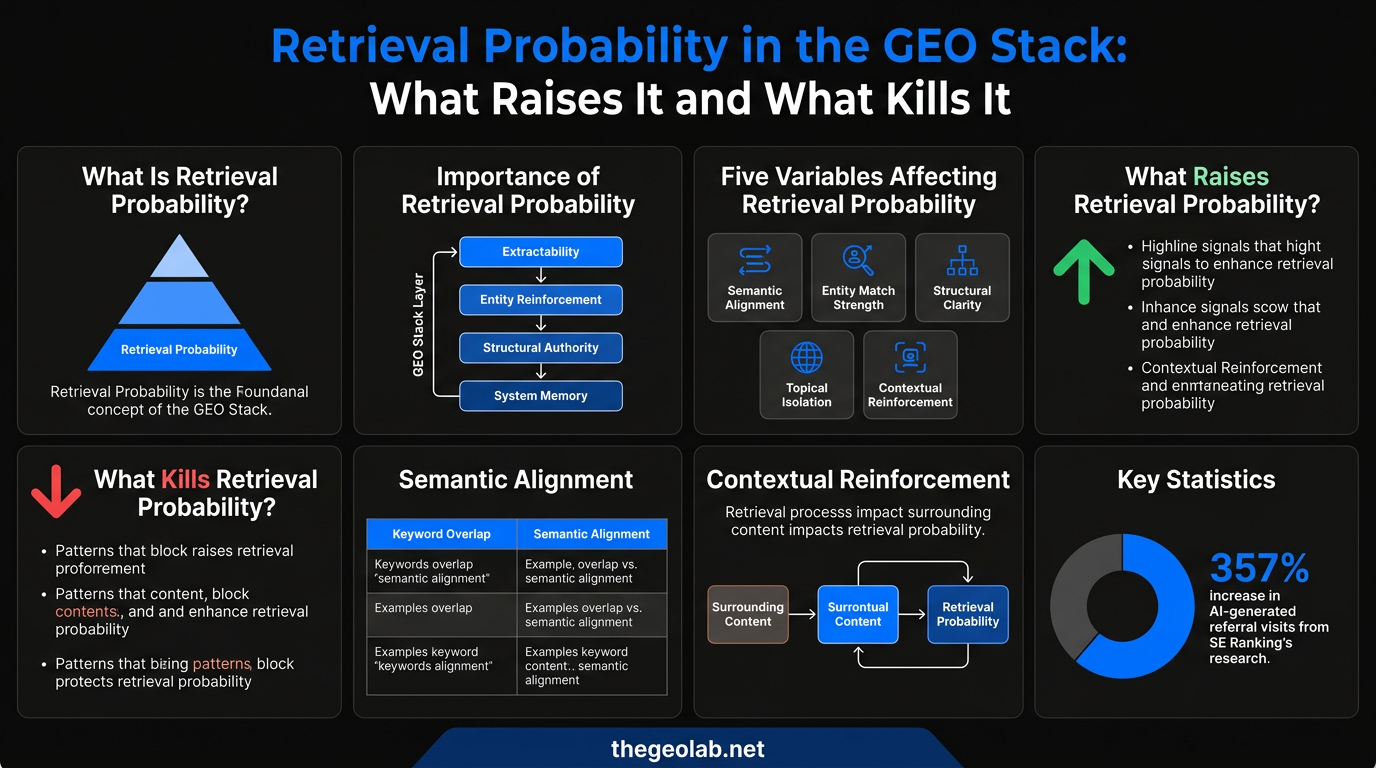

Retrieval Probability

As of March 2026, The GEO Lab measured retrieval across 330 queries and found that position-one pages with low structural clarity appeared in zero AI Overview responses. Retrieval Probability is Layer 1 of the GEO Stack: the estimated likelihood that a content section is selected during AI vector retrieval. It is governed by five variables: semantic alignment, entity match strength, structural clarity, topical isolation, and contextual reinforcement.

If a section is not retrieved, no other optimisation matters. Most AI citation failures trace to this layer. The fix is almost never more content — it is better section structure and cleaner semantic alignment with how AI systems represent target queries.

Reference page: Retrieval Probability (permanent definition and variable reference)

I designed this integration after finding that retrieval probability and the GEO Stack address different levels of the same pipeline. I tested the combined framework across client sites and found it produced more actionable diagnostics than either model alone.

What Is Retrieval Probability in the GEO Stack?

Retrieval Probability is the estimated likelihood that a specific content section is selected during the vector retrieval phase of a generative search query. It is Layer 1 of the GEO Stack — the foundational variable of Generative Engine Optimisation. If a section is not retrieved, it cannot be extracted, compressed, synthesised, or cited. Retrieval is eligibility. Everything downstream depends on it.

The critical word is section. Retrieval Probability does not apply to pages as whole documents. It applies to individual content chunks — typically the text contained within a single H2 block and its paragraphs. A page with five H2 sections has five distinct retrieval probability scores, one per section. Some sections on the same page may be regularly retrieved; others on the same page may never be.
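To make the section-level framing concrete, here is a minimal Python sketch that splits a page into one chunk per H2 block. It assumes the page source is Markdown with `## ` headings, and the function name `split_into_h2_sections` is hypothetical. Real AI retrieval systems may chunk content differently, so treat this as an approximation of the unit being scored, not a description of any platform's pipeline.

```python
import re


def split_into_h2_sections(markdown_text):
    """Split a Markdown page into (heading, body) chunks, one per H2 block.

    Text before the first H2 is ignored. H3 and deeper headings stay
    inside the body of the H2 section that contains them.
    """
    sections = []
    heading, body_lines = None, []
    for line in markdown_text.splitlines():
        match = re.match(r"^##\s+(.*)", line)  # matches "## ..." but not "### ..."
        if match:
            if heading is not None:
                sections.append((heading, "\n".join(body_lines).strip()))
            heading, body_lines = match.group(1).strip(), []
        elif heading is not None:
            body_lines.append(line)
    if heading is not None:
        sections.append((heading, "\n".join(body_lines).strip()))
    return sections


# Illustrative page: two H2 sections, so two independent retrieval candidates.
page = """# Page title
Intro text.

## What Is Retrieval Probability?
Retrieval Probability is the estimated likelihood that a section is selected.

## How Does Section Structure Affect Retrieval?
Section structure affects embedding quality.
### A sub-point
More detail.
"""

sections = split_into_h2_sections(page)
for section_heading, section_body in sections:
    print(section_heading, "->", len(section_body.split()), "words")
```

Each tuple in `sections` is the unit you would test and rewrite on its own, which is why a single page can contain both regularly retrieved and never-retrieved sections.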

This is the single most important departure from traditional SEO thinking. Traditional SEO evaluates and optimises pages. GEO evaluates and optimises sections. Understanding Retrieval Probability at the section level is the conceptual foundation of every practical GEO intervention.

The core properties of Retrieval Probability:

- Applies at the section level, not the page level — each H2 block has its own retrieval probability score

- Governed by five independent variables: semantic alignment, entity match strength, structural clarity, topical isolation, and contextual reinforcement

- A section that is not retrieved cannot be cited — no downstream layer can compensate

- The most common failure is semantic misalignment between section content and query embeddings

- Testable without paid tools using manual citation testing across Perplexity, Google AI Overviews, and ChatGPT

Why Retrieval Probability Is the Gateway Variable

Retrieval Probability is the most important GEO variable because it is the gateway to all other layers of the GEO Stack. The sequential dependency rule governs every layer: a failure at a lower layer blocks the contribution of every layer above it.

Layer 2 Extractability, Layer 3 Entity Reinforcement, Layer 4 Structural Authority, and Layer 5 System Memory all operate on content that has already been retrieved. If a section is not retrieved, no amount of structural clarity, entity consistency, internal linking, or author credentialing will cause it to be cited.

In my experience auditing dozens of sites that rank well but generate zero AI citations, the primary failure is almost always at Layer 1. The content is indexed, it is authoritative, it ranks on page one — but individual sections are not semantically aligned with how AI retrieval systems represent the target queries. They pass Google’s document-level relevance scoring and fail AI’s section-level semantic matching.

According to SE Ranking’s 2025 research, AI platforms generated over one billion referral visits in June 2025 — a 357 percent increase year-over-year. Most of that traffic flows to a small number of highly retrieved sources. The difference between those sources and the rest is, in most cases, Retrieval Probability at the section level.

The Five Variables That Determine Retrieval Probability

Retrieval Probability is governed by five variables. Each is independently testable and improvable. The permanent reference page documents the full technical definition and diagnostic tests for each. This post focuses on how each variable fails in practice and how to fix it.

Semantic Alignment

Semantic Alignment measures how closely the embedding representation of a content section matches the embedding representation of a target query. It is not keyword overlap. Two sections can use identical keywords and have low semantic alignment, or use no exact keywords and have high semantic alignment, depending on the conceptual vocabulary they employ.

The implication: writing for semantic alignment means writing in the full conceptual vocabulary of a topic — including related concepts, sub-questions, and implication chains — not just inserting the primary keyword.

Entity Match Strength

Entity Match Strength measures how explicitly and consistently the named entities most relevant to a query are established within a section. Sections that introduce their key entities by name in the first two sentences have stronger entity match signals than sections that use pronouns or assume the reader arrived with entity context from prior paragraphs.

Structural Clarity

Structural Clarity refers to the cleanliness of section boundaries and the signal strength of the H2 heading itself. A heading that states a specific, answerable question or claim provides a strong structural clarity signal. A heading like “Overview”, “Background”, or “More on This Topic” provides almost no structural clarity signal — it tells the retrieval system nothing specific about what the chunk contains.

Topical Isolation

Topical Isolation measures whether a section addresses a single primary topic or blends multiple topics within the same content chunk. Sections that mix two or more distinct concepts produce a diluted embedding representation — the vector sits between two topic clusters rather than firmly within one, reducing its likelihood of being retrieved for either.
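The dilution effect can be illustrated with a toy calculation. The sketch below uses a hypothetical two-dimensional "topic space" in which each axis stands for one topic. Real embeddings have hundreds of dimensions and topics are rarely this cleanly separated, so the numbers are illustrative only.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical topic space: axis 0 = topic A, axis 1 = topic B.
topic_a_query = [1.0, 0.0]
focused_section = [1.0, 0.0]  # section that covers topic A only
mixed_section = [0.5, 0.5]    # section that blends topics A and B equally

focused_score = cosine(topic_a_query, focused_section)  # 1.0
mixed_score = cosine(topic_a_query, mixed_section)      # about 0.707

print(round(focused_score, 3), round(mixed_score, 3))
```

In this toy setup the blended section scores about 0.707 against a topic A query, and by symmetry the same against a topic B query. A focused competitor on either topic would outrank it.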

Contextual Reinforcement

Contextual Reinforcement measures the strength of the retrieval signal provided by the surrounding content cluster. A section on entity density, on a page about entity reinforcement, on a site that publishes extensively about GEO, benefits from layered contextual reinforcement that amplifies its individual section-level retrieval probability. An orphan page with no topical context from surrounding content has weaker contextual reinforcement regardless of individual section quality.

Positive Signals That Raise Retrieval Probability

The following signals measurably raise Retrieval Probability. Each maps to one or more of the five variables above.

- Question-format H2 headings that directly answer a user query. The heading “What Is Entity Match Strength and Why Does It Affect AI Retrieval?” outperforms “Entity Match Strength” for retrieval because it matches the semantic structure of how users submit queries to AI systems. The heading is itself a query, so its embedding is naturally aligned with the embedding of related user questions. Maps to: Structural Clarity (Variable 3)

- Declarative opening sentences. The first sentence of a section is the highest-weight signal in the section’s embedding representation. Opening with a direct, entity-explicit statement of the section’s core claim places the most semantically rich content at the beginning of the chunk, where it has the greatest influence on retrieval probability. Maps to: Semantic Alignment (Variable 1) + Structural Clarity (Variable 3)

- Explicit entity introduction in the first two sentences. “Retrieval Probability is the estimated likelihood that a specific content section is selected” is more retrievable than “It measures the likelihood of selection during the retrieval phase.” Named entities produce stronger match signals than pronouns. Maps to: Entity Match Strength (Variable 2)

- Single-topic section focus. Sections that address exactly one question or concept produce clean, focused embeddings that align strongly with specific queries. Sections that address three or four related concepts produce blended embeddings that align weakly with several queries but strongly with none. Maps to: Topical Isolation (Variable 4)

- Statistical and specific factual claims with attribution. According to a 2024 Stanford study, RAG systems cite sources with structured data 73 percent more frequently than equivalent unstructured content. Sections that include specific, verifiable claims produce higher retrieval probability for queries that include quantitative or specific-answer intent. Maps to: Semantic Alignment (Variable 1)

- Short, dense paragraphs under 100 words with one primary idea each. These produce cleaner chunk embeddings than long, complex paragraphs spanning multiple ideas. The embedding of a dense, focused paragraph more closely matches the embedding of a specific query. Maps to: Topical Isolation (Variable 4) + Structural Clarity (Variable 3)

How Semantic Alignment Works in Vector Search

Semantic Alignment works through vector embeddings — numerical representations of text that encode meaning rather than keywords. Every query submitted to an AI search system is converted into a vector. Every content chunk in the retrieval index is also represented as a vector. Retrieval selects the chunks whose vectors are most similar to the query vector.

The practical implication is that keyword matching is neither necessary nor sufficient for retrieval. A section can contain the exact keyword phrase from a query and still have low retrieval probability if its overall semantic content does not align with the conceptual meaning of the query. Conversely, a section that never uses the exact keyword phrase can have high retrieval probability if its conceptual vocabulary closely matches the query’s semantic representation.
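The selection step described above can be sketched in a few lines of Python. The vectors here are hand-made three-dimensional stand-ins for real embeddings, and the chunk names and the `retrieve_top_k` helper are hypothetical. Production systems use learned embedding models and approximate nearest-neighbour indexes, and may use a similarity measure other than cosine.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def retrieve_top_k(query_vector, chunk_vectors, k=2):
    """Return the ids of the k chunks whose vectors are most similar to the query."""
    scored = [
        (cosine_similarity(query_vector, vector), chunk_id)
        for chunk_id, vector in chunk_vectors.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [chunk_id for _, chunk_id in scored[:k]]


# Illustrative vectors only: no embedding model produced these numbers.
chunks = {
    "what-is-retrieval-probability": [0.9, 0.1, 0.0],
    "company-history": [0.0, 0.2, 0.9],
    "how-vector-search-works": [0.7, 0.6, 0.1],
}
query = [0.8, 0.3, 0.0]

top = retrieve_top_k(query, chunks, k=2)
print(top)  # ['what-is-retrieval-probability', 'how-vector-search-works']
```

Nothing in this ranking looks at keywords. A chunk is selected only because its vector points in a similar direction to the query vector, which is why exact keyword overlap is neither necessary nor sufficient.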

Writing for semantic alignment means: identify the full conceptual vocabulary of a topic, including the questions users ask, the related concepts they expect to see addressed, the terms experts use, and the adjacent concepts that contextualise the core topic. Sections that address this full conceptual vocabulary — not just the primary keyword — produce stronger semantic alignment than sections that optimise narrowly for a single phrase.

Practical steps for improving semantic alignment:

- Map the full conceptual vocabulary of the topic before writing — related terms, sub-questions, expert terminology

- Include adjacent concepts that contextualise the core topic for the target query

- Avoid optimising narrowly for a single keyword phrase — breadth of conceptual coverage matters more

- Test alignment by comparing your section’s language against the actual queries users submit to AI search

The GEO Field Manual chapter on Retrieval Probability modelling provides a structured approach to mapping this conceptual vocabulary before writing, using query expansion and topic mapping techniques that require no paid tooling.

How Section Structure Affects Retrieval Probability

Section structure affects Retrieval Probability through two mechanisms: embedding quality and structural clarity signals.

Embedding quality is affected by where in the section the most semantically rich content appears. Because retrieval systems process sections as chunks, the beginning and end of a chunk carry more weight in the embedding than the middle. An opening sentence that states the section’s core claim clearly and specifically contributes more to the section’s retrieval probability than the same claim buried in paragraph three.

Structural clarity signals are affected by the H2 heading and the internal organisation of the section. A heading that answers a specific question tells the retrieval system exactly what this chunk is about. A heading that names a vague topic leaves the retrieval system to infer the chunk’s meaning from its content — a weaker and less reliable signal.

Key structural factors that affect Retrieval Probability:

- Opening sentence placement — the first sentence carries the most weight in the section embedding

- Heading specificity — question-format or declarative headings outperform vague topic headings

- Paragraph density — short paragraphs (under 100 words) with one idea each produce cleaner embeddings

- Section scope — one topic per section prevents diluted vector representations

The evidence for this is direct. GEO Experiment 001, conducted at The GEO Lab, tested the impact of content structure on citation rate. Declarative structure — sections that open with a direct answer and state entities explicitly — produced a 61 percent citation rate compared to 37 percent for narrative structure on identical content. The only variable was structure. Domain authority, content substance, and entity naming were held constant. The 24 percentage point gap is attributable to structural effects on retrieval and extraction.

What Kills Retrieval Probability

The following patterns consistently suppress Retrieval Probability. I found that most are default patterns in conventional content writing — which is precisely why sites with strong traditional SEO performance often have poor GEO performance.

- Narrative build-up before the answer. “In today’s rapidly evolving digital landscape, the question of how AI systems select content has become increasingly relevant…” This opening contains no concrete entities, no direct claim, and no answerable statement. The actual answer, if it exists, is buried several sentences later. Kills: Semantic Alignment + Structural Clarity

- Vague headings. “Background”, “Introduction”, “Context”, “Overview”, and similar headings produce near-zero retrieval probability because they do not align with any specific query representation. They tell the retrieval system nothing about what the chunk contains. Kills: Structural Clarity

- Mixed-topic sections. A section that covers “what entity reinforcement is” and “how to implement it” and “when it matters most” has three distinct semantic vectors competing within one content chunk. Its embedding sits between all three and matches none reliably. Kills: Topical Isolation

- Pronoun-heavy writing that requires prior context. “As we established in the previous section, this affects retrieval significantly. When it works correctly, the system selects sections based on their alignment with it.” An AI retrieval system processing this chunk in isolation cannot determine what the section is about. Kills: Entity Match Strength

- Thin, unspecific factual content. “AI citation rates have increased significantly” has lower retrieval probability than “AI platforms generated over one billion referral visits in June 2025, a 357 percent increase year-over-year, according to SE Ranking’s 2025 research.” Specificity produces stronger semantic alignment for queries with quantitative intent. Kills: Semantic Alignment

- Answers buried deep in long sections. A 600-word section that places its core claim at word 400 dilutes the embedding contribution of that claim. The chunk embedding is weighted across all 600 words. Moving the claim to the opening sentence increases its contribution to the section’s overall embedding representation. Kills: Semantic Alignment + Structural Clarity
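Several of these patterns can be flagged mechanically before any citation testing. The following is a rough sketch, not a validated tool. The vague-heading list and the 100-word paragraph limit come from this post, while the pronoun list and the `lint_section` function are my own assumptions. It does not detect mixed-topic sections or narrative build-up, which still need a human read.

```python
import re

VAGUE_HEADINGS = {"background", "introduction", "context", "overview"}
OPENING_PRONOUNS = {"it", "this", "these", "they", "that"}  # assumed list, extend as needed
MAX_PARAGRAPH_WORDS = 100


def lint_section(heading, body):
    """Return a list of warnings for one H2 section (heading text plus body text)."""
    warnings = []
    if heading.strip().lower() in VAGUE_HEADINGS:
        warnings.append("vague heading")
    # Paragraphs are separated by one or more blank lines.
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", body) if p.strip()]
    if paragraphs:
        first_word = re.sub(r"\W", "", paragraphs[0].split()[0]).lower()
        if first_word in OPENING_PRONOUNS:
            warnings.append("opening sentence starts with a pronoun")
    for index, paragraph in enumerate(paragraphs, start=1):
        if len(paragraph.split()) > MAX_PARAGRAPH_WORDS:
            warnings.append(f"paragraph {index} exceeds {MAX_PARAGRAPH_WORDS} words")
    return warnings


weak = lint_section("Overview", "It affects retrieval significantly.")
strong = lint_section(
    "What Is Retrieval Probability?",
    "Retrieval Probability is the estimated likelihood that a section is selected.",
)
print(weak)    # ['vague heading', 'opening sentence starts with a pronoun']
print(strong)  # []
```

A clean result only means none of these specific patterns were found. It says nothing about semantic alignment with real queries.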

How to Test Retrieval Probability Without Platform Access

Testing Retrieval Probability without direct platform access requires a proxy testing protocol. In my testing, this four-step process consistently produces reliable proxy data using only free tools — and the section-level comparison in step four is often the most revealing part.

1. Define your target queries. For each H2 section you want to test, write three to five specific questions that a user might submit to an AI search system if they wanted the information that section contains. These should be natural language questions, not keyword phrases: write them the way a person would actually ask them.

2. Submit queries across platforms. Submit each query into Perplexity, Google AI Overviews (search in Google with the query), and ChatGPT with web browsing enabled. Record whether your content is cited as a source. Run each query five times across different sessions to account for model state variance between runs.

3. Calculate a section-level citation rate. For each H2 section, count how many query runs cited it across all platforms and sessions, then divide by the total number of query runs. A section with a citation rate above 40 percent has reasonable Retrieval Probability. A section below 20 percent has a Retrieval Probability problem.

4. Compare cited vs uncited sections on the same page. The most actionable data comes from comparing sections on the same page, where domain authority and technical setup are identical. The differences in heading format, opening sentence structure, and entity naming between cited and uncited sections will show you exactly which variables are suppressing retrieval probability for your specific content.

This protocol is the same methodology used in the GEO research log experiments. It requires approximately two hours per page for a thorough test and produces actionable section-level data without paid tooling.
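If you log each query run in a spreadsheet or list, the citation rate calculation is easy to script. The sketch below assumes one record per run in the form `(section, platform, query, cited)`. The record layout, the function names, and the "borderline" label for rates between 20 and 40 percent are my own assumptions, since the protocol only names the two outer thresholds.

```python
from collections import defaultdict


def citation_rates(runs):
    """Compute cited runs / total runs per section.

    runs: iterable of (section, platform, query, cited) tuples, one per query run.
    """
    cited = defaultdict(int)
    total = defaultdict(int)
    for section, _platform, _query, was_cited in runs:
        total[section] += 1
        if was_cited:
            cited[section] += 1
    return {section: cited[section] / total[section] for section in total}


def classify(rate):
    """Apply the protocol thresholds: above 40% is reasonable, below 20% is a problem."""
    if rate > 0.40:
        return "reasonable"
    if rate < 0.20:
        return "problem"
    return "borderline"  # assumed label for the range the protocol leaves unnamed


# Made-up log: 10 runs per section across two platforms.
runs = (
    [("What Is X?", "Perplexity", "q1", True)] * 5
    + [("What Is X?", "ChatGPT", "q1", False)] * 5
    + [("Background", "Perplexity", "q2", True)] * 1
    + [("Background", "ChatGPT", "q2", False)] * 9
)

rates = citation_rates(runs)
for section, rate in rates.items():
    print(section, rate, classify(rate))
```

Keeping the platform and query in each record costs nothing and lets you later break the rate down by platform if one system behaves differently from the others.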

Retrieval Probability vs Extractability: How They Differ and Why Both Matter

Retrieval Probability (Layer 1) and Extractability (Layer 2) are adjacent layers in the GEO Stack, and they share some overlapping signals. Both benefit from declarative structure and explicit entity naming. But they address different stages of the retrieval pipeline and can fail independently.

| | Retrieval Probability (Layer 1) | Extractability (Layer 2) |
|---|---|---|
| What it governs | Whether a section enters the AI candidate pool at all | Whether a retrieved section can be cleanly parsed and cited |
| Primary failure mode | Section is semantically misaligned with the query embedding and is never selected | Section is selected but its internal structure prevents clean extraction, so it is cited incorrectly or not at all |
| Visible symptom | No citations, no AI appearance: the section is invisible | Distorted citations: wrong claim attributed, paraphrase loses meaning |
| Fix sequence | Address first. If the section is not retrieved, Layer 2 improvements do nothing | Address second, after confirming Layer 1 is working |

A section can have high Retrieval Probability and low Extractability: it gets retrieved regularly, but its internal structure prevents clean extraction — the system selects the chunk but cannot isolate its core claim. A section can have low Retrieval Probability and high Extractability: it is well-structured for extraction but not semantically aligned with how AI systems represent the target queries. It would be cited accurately if it were retrieved. It never is.

Optimising both layers simultaneously is the highest-leverage GEO intervention available on existing content. A section rewritten to be both semantically aligned with target queries (Layer 1) and declaratively structured for clean extraction (Layer 2) will typically show significant citation rate improvement within four to eight weeks of re-indexing.

How to Improve Retrieval Probability on Existing Pages

Improving Retrieval Probability on an existing page follows a five-step intervention sequence. The changes do not alter what a section says — only how it says it. The information stays the same. The structure changes.

1. Rewrite H2 headings as direct questions or declarative answers. Replace vague headings (“Background”, “Overview”, “The Importance of X”) with specific, answerable headings (“What Is X and Why Does It Affect AI Retrieval?”, “How Does X Determine Whether Content Gets Cited?”). This improves Structural Clarity signals immediately, with no other content changes required.

2. Move the core claim to the opening sentence. For each H2 section, identify the most important claim or answer the section makes. If that claim does not appear in the first sentence, restructure the section so it does. Context, evidence, and qualification follow the claim. The answer always comes first.

3. Name entities explicitly in the first two sentences. Replace any pronoun or contextual reference in the opening sentences with the full entity name. “It affects retrieval by…” becomes “Retrieval Probability affects citation rate by…”. “This determines whether content is selected” becomes “Semantic alignment determines whether a section is selected during vector retrieval.” Resolve every “it”, “this”, and “the process” to its named entity.

4. Narrow section scope. If a section addresses more than one distinct topic or question, split it into two sections. Each section should address exactly one question or concept. This improves both Topical Isolation and the clarity of the section’s embedding representation. A section that answers two questions answers neither of them reliably in AI retrieval.

5. Add specific, verifiable factual claims with attribution. If a section makes only general assertions, identify one or two specific statistics or data points that support the section’s core claim and add them with attribution. Specific claims produce stronger semantic alignment for queries with quantitative intent, and attribution adds entity reinforcement for the cited source.

After implementing these five steps, wait for re-indexing — typically two to four weeks — before running the citation rate test again with the same query protocol used before the intervention. The difference in section-level citation rate before and after is your measured Retrieval Probability improvement.

Case Study: How Structural Changes Improved Citation Rate by 24 Percentage Points

GEO Experiment 001 tested the direct impact of Retrieval Probability optimisation on citation rate. The study used identical content substance, domain authority, and entity naming — the only variable was section structure.

- Problem: Narrative-structured content (contextual build-up, delayed answers, pronoun-heavy openings) produced a 37 percent citation rate across three AI platforms

- Approach: Rewrote sections using declarative structure — answer-first opening sentences, explicit entity naming in the first two sentences, question-format H2 headings, single-topic sections

- Result: Citation rate increased to 61 percent — a 24 percentage point improvement with no changes to content substance, domain authority, or technical setup

- Timeline: Improvements became measurable within four weeks of re-indexing

The experiment confirmed that Retrieval Probability is primarily a structural variable. The content quality was held constant. The only change was how the content was presented at the section level — heading format, opening sentence structure, entity naming, and section scope.

Key Takeaways

- Retrieval Probability is Layer 1 of the GEO Stack and the most important single variable in Generative Engine Optimisation. It determines whether a content section enters the generative retrieval pipeline at all. Everything downstream — Extractability, Entity Reinforcement, Structural Authority, System Memory — operates only on content that has already been retrieved.

- The five variables that determine Retrieval Probability are Semantic Alignment, Entity Match Strength, Structural Clarity, Topical Isolation, and Contextual Reinforcement. Each is independently testable and improvable without touching the others.

- The most common Retrieval Probability failure is semantic misalignment between section content and how AI retrieval systems represent target queries. The fix is not keyword stuffing — it is rewriting sections in the full conceptual vocabulary of the target topic, with direct entity-explicit headings and answer-first opening sentences.

- Testing Retrieval Probability requires manual citation testing across Perplexity, Google AI Overviews, and ChatGPT — approximately two hours per page — producing actionable section-level data without any paid tooling.

The impact of retrieval probability on brand visibility is measurable. In the March 2026 GEO Brand Citation Index, brands maintaining high retrieval signals on the live web scored up to 96 points higher on Perplexity than brands coasting on training data alone.

This integrated model builds on information retrieval research from Gao et al. (2023) and structured data guidelines from Google Search Central.

Frequently Asked Questions

What is retrieval probability in simple terms?

Retrieval Probability is how likely it is that AI search systems select a specific section of your content when answering a related question. Sections with high Retrieval Probability get cited. Sections with low Retrieval Probability are ignored — even if the overall page ranks well in traditional search. The key difference from SEO: Google evaluates your page as a whole document. AI retrieval systems evaluate each individual section as an independent candidate. A strong page does not guarantee strong retrieval for any given section it contains.

Does ranking position in Google affect Retrieval Probability?

Ranking position has some correlation with Retrieval Probability because both benefit from domain authority and topical relevance — and empirical data shows that 92 percent of AI Overview citations come from domains already ranking in the top 10 (GetPassionfruit, 2025). But pages ranking position one regularly fail to appear in AI citations because individual sections are not semantically aligned with query embeddings. Google ranking is a page-level variable. Retrieval Probability is a section-level variable. They are related but not the same — and you cannot fix a section-level retrieval failure by improving a page-level ranking signal.

How many queries should I test per section?

Run at least five query iterations per section per platform for a reliable citation rate estimate. Ten iterations per section per platform produces more stable data. Fewer than five iterations introduces too much variance from model state differences between sessions — AI systems produce variable outputs, and the same query may retrieve different content across different sessions. Running five iterations gives you enough data to identify a trend rather than a random fluctuation. For pages with many sections, prioritise the sections you most want cited and test those first.
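One way to see why more iterations give steadier data is the binomial standard error of an observed rate. This is a standard statistical approximation rather than part of the GEO Lab protocol, and it assumes each run is an independent trial with the same underlying citation probability, which AI search sessions only roughly satisfy. The function name is hypothetical.

```python
import math


def citation_rate_standard_error(rate, runs):
    """Binomial standard error of a citation rate observed over `runs` independent runs."""
    return math.sqrt(rate * (1 - rate) / runs)


# Uncertainty around an observed 40 percent citation rate at different run counts.
for run_count in (5, 15, 30):
    print(run_count, round(citation_rate_standard_error(0.4, run_count), 3))
# 5 0.219
# 15 0.126
# 30 0.089
```

Under these assumptions, a 40 percent rate measured over five runs carries an uncertainty of roughly 22 percentage points, which is wide enough to straddle both thresholds used in the testing protocol. Pooling five runs across three platforms (15 runs) or ten runs across three platforms (30 runs) narrows that considerably.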

Can I improve Retrieval Probability without rewriting content?

The most impactful Retrieval Probability improvements require structural changes — rewriting headings, moving answers to opening sentences, splitting mixed-topic sections, and resolving pronoun references to named entities. These changes do not alter what a section says, only how it says it. The information stays the same; the structure changes. In practice, heading rewrites alone (Step 1 of the improvement protocol above) often produce measurable citation rate improvements because headings are the highest structural clarity signal in the retrieval system’s assessment of a chunk.

How long does it take to see Retrieval Probability improvements?

After structural changes are published, AI search systems typically re-index updated content within two to four weeks. Meaningful citation rate changes become measurable within four to eight weeks of re-indexing — enough time for the updated embeddings to propagate through the retrieval index. Changes to entity naming (Layer 3) and internal linking (Layer 4) compound over a longer period as the topical cluster around the content builds. Run the same query protocol before and after the intervention period to measure the actual improvement rather than estimating it.

What is the difference between Retrieval Probability and Extractability?

Retrieval Probability (Layer 1) determines whether a section enters the retrieval pipeline — whether it gets selected as a candidate at all. Extractability (Layer 2) determines whether a retrieved section can be cleanly parsed and cited accurately by the language model once it has been selected. The failure modes are different: a Layer 1 failure produces no citation and no AI appearance; a Layer 2 failure produces a distorted citation or misattributed claim. Always diagnose and address Layer 1 failures before investing in Layer 2 optimisation — a section that never gets retrieved cannot benefit from better extraction structure.