March 2026

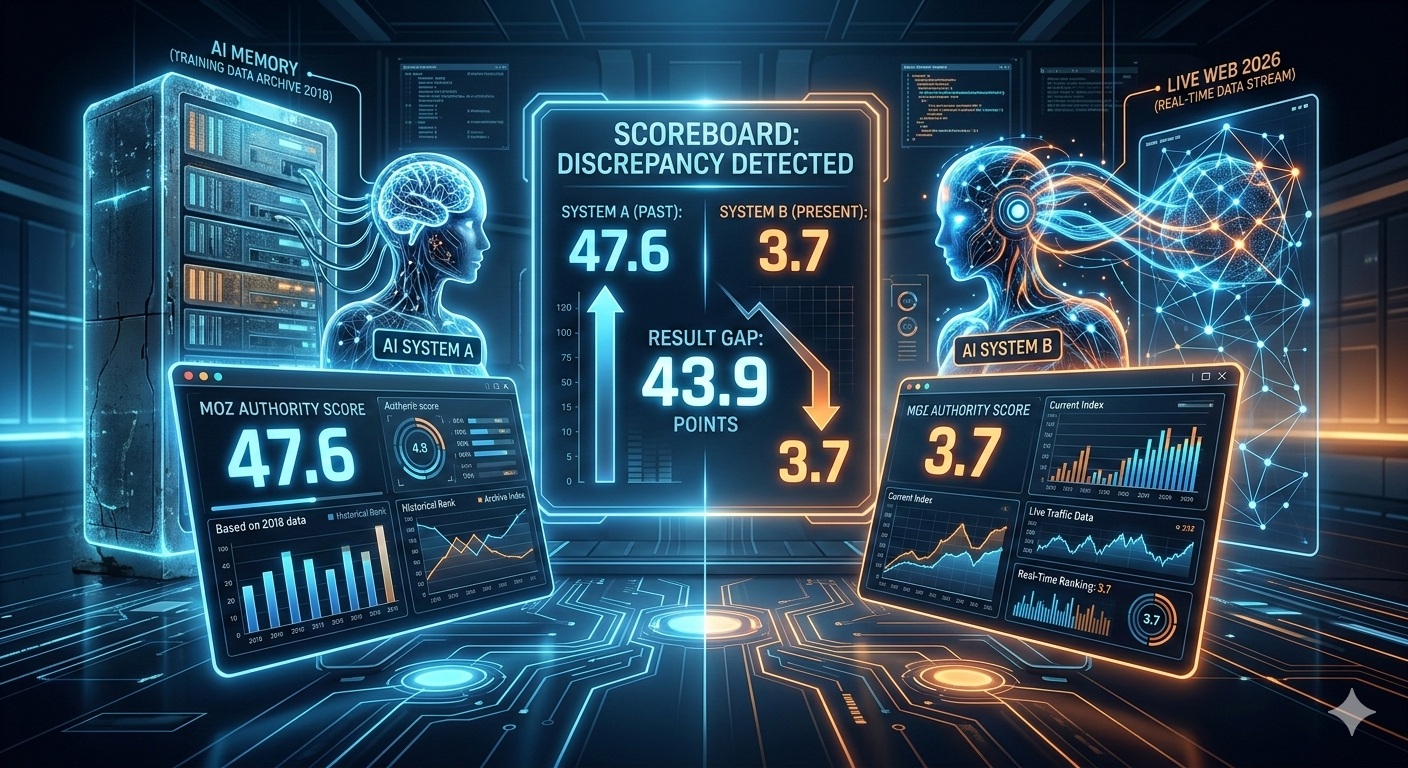

The GEO Brand Citation Index is a monthly measurement of how frequently AI search systems cite specific brands. In March 2026, The GEO Lab tracked 28 brands across 54 queries on ChatGPT, Perplexity, and Gemini. The results exposed a critical gap: Moz scored 47.6 on ChatGPT but just 3.7 on Perplexity — a 43.9-point delta between AI memory and live web reality.

TL;DR: The GEO Brand Citation Index tracked 28 brands across ChatGPT, Perplexity, and Gemini using 54 queries. The delta between ChatGPT (AI memory) and Perplexity (live web) reveals brand health: Moz scored 47.6 on ChatGPT but only 3.7 on Perplexity. Semrush and Claude show the strongest live web presence. A large negative delta means the internet has moved on from your brand.

This brand citation index report analyzes which brands are living on AI memory versus live web reality. We tracked 28 brands across ChatGPT, Perplexity, and Gemini to identify fading brand signals — cases where tools score high in ChatGPT’s training data but nearly vanish in Perplexity’s live web searches. The March 2026 data reveals uncomfortable truths about which brands the internet has moved on from.

I want to talk about a specific number. Not a range, not a trend, not a directional signal. A number: 3.7.

That is Moz’s score on Perplexity in March 2026. Out of 100. Across six queries about SEO tools, sent to a platform that reads the live web before answering. The same queries. The same month. ChatGPT gave Moz a 47.6.

Two AI platforms. The same question. A gap of 43.9 points. One of those numbers comes from memory. The other comes from the internet as it exists today.

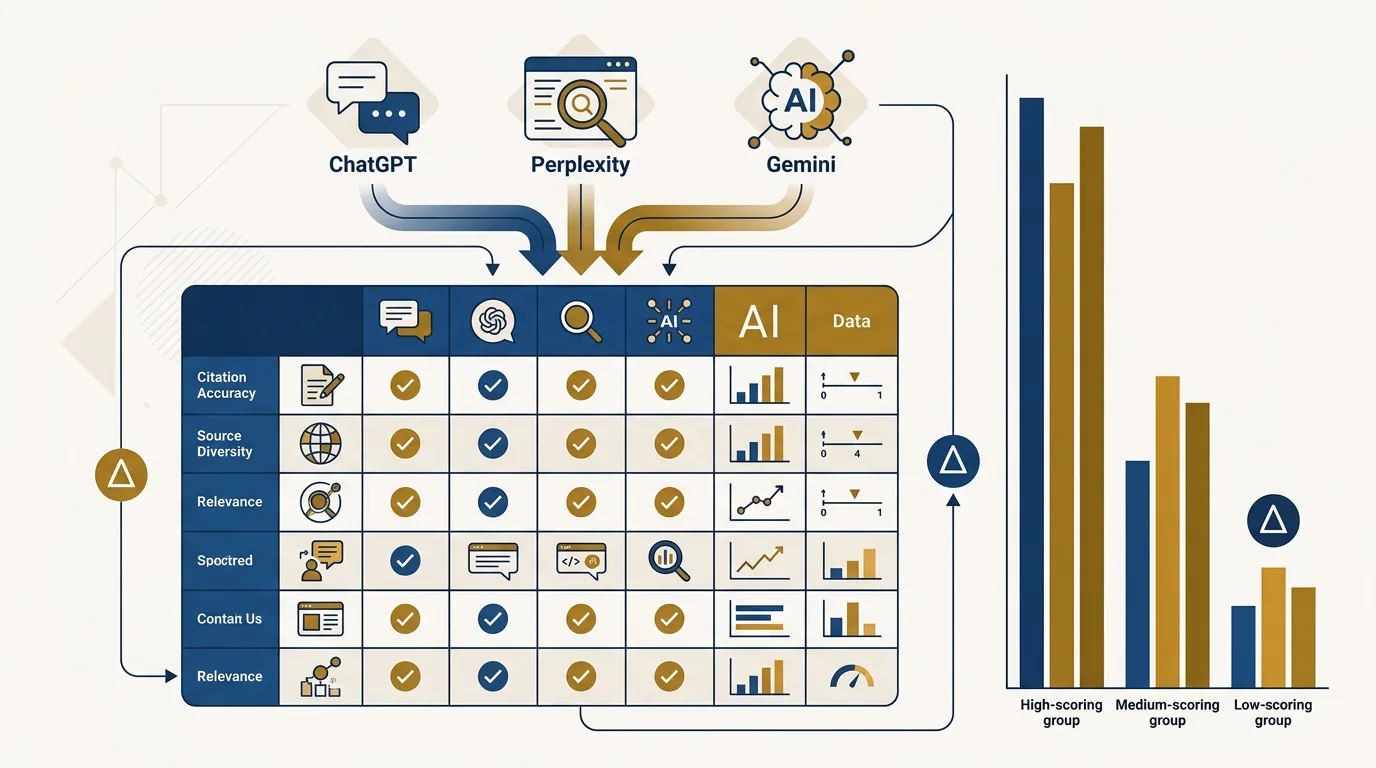

How This Brand Citation Index Works

Every month, The GEO Lab runs a fixed panel of queries across ChatGPT, Perplexity, and Gemini. We record every brand mentioned in every response. The March 2026 measurement covered 54 query runs across all three platforms. We score by position. We normalise within each vertical. We publish the delta: the gap between what ChatGPT says about a brand and what Perplexity says about the same brand.

ChatGPT is trained on a fixed dataset. It cites brands based on how frequently they appeared in the corpus it learned from — which is, at minimum, one to two years out of date. Perplexity searches the live web before answering. The delta between the two is not a data artefact. It is a signal about whether your brand exists in AI memory or in AI retrieval.

I built this methodology after spending three months manually querying AI platforms and noticing that the same brand could score radically differently depending on which model answered. The gap was not random — it followed a pattern tied to whether the platform used training data or live retrieval. That observation became the foundation of this index.

March 2026 is Run #1. Here is what I tested:

- 3 AI platforms: ChatGPT (training data), Perplexity (live web retrieval), Gemini (hybrid)

- 3 verticals: SEO & Marketing, CRM & Sales, AI & LLM Tools

- 28 brands tracked across all verticals

- 54 total query runs — 18 per vertical, 6 per platform per vertical

- Scoring: Position-weighted, normalised within each vertical to 0–100

- Key metric: Delta (Δ) = Perplexity score minus ChatGPT score
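The delta definition above can be checked against the scores quoted in this report. This is a minimal sketch, not the index's production code: the dictionary and function names are my own, and the only inputs are ChatGPT and Perplexity scores that appear in the text.

```python
# Scores quoted in this report as (ChatGPT, Perplexity), on the 0-100 scale.
SCORES = {
    "Semrush": (90.5, 100.0),
    "Ahrefs": (100.0, 51.9),
    "Moz": (47.6, 3.7),
    "Jasper": (39.1, 52.6),
}


def delta(chatgpt: float, perplexity: float) -> float:
    """Delta = Perplexity score minus ChatGPT score, rounded to one decimal."""
    return round(perplexity - chatgpt, 1)


# Hypothetical helper name: one delta per brand.
DELTAS = {brand: delta(*pair) for brand, pair in SCORES.items()}

# Print from most negative (memory ahead of the live web) to most positive.
for brand, value in sorted(DELTAS.items(), key=lambda item: item[1]):
    print(f"{brand:<8} {value:+.1f}")
```

A negative value means ChatGPT rates the brand higher than Perplexity does. A positive value means the live web is ahead of training data.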

The methodology draws on research into retrieval-augmented generation and how LLMs select sources during citation. The scoring formula and full query list are published in the methodology page.
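For readers who want a feel for what "position-weighted, normalised within each vertical" can look like, here is a rough sketch. It is not the published formula. The 1/position weight, the scale-to-the-top-brand normalisation, the function names, and the toy responses are all assumptions made for illustration.

```python
from collections import defaultdict


def raw_scores(responses: list[list[str]]) -> dict[str, float]:
    """Sum a position weight for every brand mention across responses.

    ASSUMPTION: weight = 1 / position, so the first brand mentioned counts most.
    The real weights live on the methodology page, not here.
    """
    totals: dict[str, float] = defaultdict(float)
    for ranked_brands in responses:
        for position, brand in enumerate(ranked_brands, start=1):
            totals[brand] += 1.0 / position
    return dict(totals)


def normalise(raw: dict[str, float]) -> dict[str, float]:
    """Scale scores so the top brand in the vertical gets 100.

    ASSUMPTION: simple max scaling; the published normalisation may differ.
    """
    top = max(raw.values(), default=0.0)
    if top == 0.0:
        return {brand: 0.0 for brand in raw}
    return {brand: round(100.0 * value / top, 1) for brand, value in raw.items()}


# Hypothetical data: the order of brand mentions in three made-up responses
# from one platform, within one vertical.
toy_responses = [
    ["BrandA", "BrandB", "BrandC"],
    ["BrandA", "BrandC"],
    ["BrandB", "BrandA"],
]
toy_scores = normalise(raw_scores(toy_responses))
print(toy_scores)
```

With these toy inputs BrandA lands on 100.0, BrandB on 60.0, and BrandC on 33.3. A brand that is never mentioned does not appear in the raw totals at all, which corresponds to a score of 0.0.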

3.7

Not 37. Not 14. 3.7. Moz barely registered when Perplexity was asked standard SEO evaluation questions. Meanwhile ChatGPT placed it solidly mid-table — a 47.6 that would make any brand manager feel comfortable. Comfortable, and badly wrong.

That 47.6 is not a lie. It’s an echo. Moz was one of the most referenced SEO tools on the internet for nearly a decade. Every beginner’s guide mentioned it. Every comparison roundup linked to it. ChatGPT was trained on that corpus. It learned that Moz is a credible tool, and it hasn’t forgotten.

Perplexity doesn’t remember. It reads. And what it reads in 2026 is a different story entirely.

Fading Brand

This is uncomfortable to publish. Moz is a brand I have genuine respect for — which is precisely why it matters. If this index only surfaced problems for brands nobody cared about, it wouldn’t be worth running. The ones worth watching are the ones people assumed were fine.

The live web has moved on. ChatGPT hasn’t. The gap between those two realities is 43.9 points in this first run.

The Ahrefs problem is different — and more dangerous

Moz is a story about fading. Ahrefs is a story about something subtler, and arguably more dangerous, because it looks fine at first glance.

AI Memory Brand

Ahrefs scores 100 on ChatGPT — the maximum, joint highest in the entire SEO vertical. It scores 51.9 on Perplexity. That 51.9 is not a bad number in isolation. Ahrefs is genuinely used, genuinely cited on the current web. But the comparison is the story.

Perplexity gives its SEO maximum — 100 — to Semrush. Not Ahrefs. In the years when ChatGPT’s training data was built, Ahrefs and Semrush were mentioned almost interchangeably. The live web in 2026 has a preference. It’s not subtle. The delta is −48.1.

If you’re running GEO for any Ahrefs competitor, a 48-point gap between AI memory and live web reality is a brief worth writing today.

SugarCRM: zero is not a rounding error

Fading Brand

A score of 0.0 means the brand was not mentioned once across all six Perplexity CRM responses. The same for Gemini. Two independent platforms that read the current web both returned nothing. ChatGPT still has SugarCRM — from training data that remembers a competitive CRM market from a few years ago. That gap will only widen as models retrain on a web that doesn’t mention SugarCRM either.

Keap also scored 0.0 on both Perplexity and Gemini. Majestic scored 0.0 on Perplexity in the SEO vertical. These are not edge cases. They are documented proof that the live web has moved on from these brands entirely.

Who is actually winning

Not everything in this dataset is a warning.

Live Search Brand

Claude’s result is the most structurally interesting finding of this run. A +50.1 delta — the largest positive gap in the entire dataset across all three verticals. Perplexity scores it at 63.2 because it is reading a web full of Claude content: reviews, comparisons, productivity guides, developer writeups. That content doesn’t yet exist in ChatGPT’s training data in the same volume. When it does, this gap will narrow. But right now, Claude is the clearest example of a brand winning on the live web before training data has caught up.

Dominant Brand

Semrush and Ahrefs are in the same vertical. Semrush’s Perplexity score exceeds its ChatGPT score. Ahrefs’s ChatGPT score exceeds its Perplexity score by 48 points. One brand is winning the live web. The other is coasting on historical training data. They used to be treated as interchangeable. The data says they aren’t.

Jasper: the recovery nobody is talking about

The narrative around Jasper in 2024–2025 was one of decline — a cautionary tale about early AI writing tools being overtaken by general-purpose models. The data does not support that story anymore. Jasper scores 52.6 on Perplexity and 39.1 on ChatGPT — a positive delta of +13.5. Perplexity finds more current content referencing Jasper than training data would predict. Whatever Jasper has been doing on the live web, it is showing up in retrieval. That is the signal that matters.

AI Memory Brands That Still Dominate

ChatGPT and Salesforce both scored 100/100/100 — maximum across all three platforms simultaneously. Salesforce has two decades of CRM category ownership baked into every training corpus that exists, and it is still dominant on the live web. ChatGPT is the category-defining AI tool. Neither is under any AI visibility pressure.

HubSpot scored 74.1/70.8/80.0 — the most consistent second brand in any vertical in the entire dataset. Low variance across platforms. Genuine cross-channel consensus. That is what a defensible AI visibility position looks like.
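"Low variance across platforms" can be put into numbers. The sketch below compares the spread of three brands' scores, assuming the slash-separated figures are in ChatGPT / Perplexity / Gemini order. The `spread` helper and the choice of range plus population standard deviation are my own illustration, not part of the index's scoring.

```python
from statistics import pstdev

# Scores quoted in this report, assumed order: (ChatGPT, Perplexity, Gemini).
PLATFORM_SCORES = {
    "Salesforce": (100.0, 100.0, 100.0),
    "HubSpot": (74.1, 70.8, 80.0),
    "Moz": (47.6, 3.7, 32.1),
}


def spread(scores: tuple[float, ...]) -> tuple[float, float]:
    """Return (range, population standard deviation), each rounded to 0.1."""
    score_range = round(max(scores) - min(scores), 1)
    return score_range, round(pstdev(scores), 1)


# Hypothetical helper name: one (range, stdev) pair per brand.
SPREADS = {brand: spread(scores) for brand, scores in PLATFORM_SCORES.items()}

for brand, (score_range, deviation) in SPREADS.items():
    print(f"{brand:<11} range={score_range:5.1f}  stdev={deviation:5.1f}")
```

HubSpot's three scores sit within 9.2 points of each other, while Moz's span 43.9 points. That is the difference between cross-platform consensus and a brand whose visibility depends on which model answers.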

Ahrefs vs Semrush vs Moz: AI Visibility Compared

In my experience running these queries, the SEO vertical produced the most dramatic divergence of any category. Here is how the three major SEO platforms compare across all dimensions:

| Brand | ChatGPT | Perplexity | Gemini | Delta (Perplexity − ChatGPT) | Verdict |
|---|---|---|---|---|---|
| Semrush | 90.5 | 100.0 | 100.0 | +9.5 | Dominant |
| Ahrefs | 100.0 | 51.9 | 60.7 | −48.1 | AI Memory |
| Moz | 47.6 | 3.7 | 32.1 | −43.9 | Fading |

Best for live web visibility: Semrush, the only SEO brand scoring higher on live retrieval platforms than in training data. Best legacy authority: Ahrefs, still dominant in ChatGPT, but carrying the largest absolute gap in this table at 48.1 points. Highest risk: Moz, whose 43.9-point drop from memory to reality takes it from 47.6 to 3.7, the lowest Perplexity score of the three.
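Absolute and relative drops tell slightly different stories, so it helps to compute both. This is a small sketch using only the ChatGPT and Perplexity figures from the table. The `drops` helper and the "share of the ChatGPT score lost" measure are my own framing, not an official index metric.

```python
# (ChatGPT, Perplexity) scores for the three SEO brands in the table.
SEO_SCORES = {
    "Semrush": (90.5, 100.0),
    "Ahrefs": (100.0, 51.9),
    "Moz": (47.6, 3.7),
}


def drops(chatgpt: float, perplexity: float) -> tuple[float, float]:
    """Return (points lost, percent of the ChatGPT score lost) on Perplexity.

    Negative values mean the brand gained on the live web instead.
    """
    lost = chatgpt - perplexity
    percent = 100.0 * lost / chatgpt if chatgpt else 0.0
    return round(lost, 1), round(percent, 1)


# Hypothetical helper name: one (points, percent) pair per brand.
DROPS = {brand: drops(*pair) for brand, pair in SEO_SCORES.items()}

for brand, (points, percent) in DROPS.items():
    print(f"{brand:<8} {points:+6.1f} points  {percent:+6.1f}% of ChatGPT score")
```

Ahrefs loses more points (48.1 against Moz's 43.9), but it still keeps about half of its ChatGPT score on Perplexity. Moz loses roughly 92% of its ChatGPT score, which is why it reads as the higher-risk position.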

According to Semrush’s own research on AI Overviews, brands that maintain fresh, structured content earn disproportionate citations in AI-generated answers — which aligns with the pattern we measured here.

What This Brand Citation Index Reveals About Fading Brands

After analysing all 54 query responses manually, I found a consistent pattern: a large negative delta is a live web presence problem. Not an AI problem, not a brand awareness problem. The fix is content that gets indexed, cited, and linked today — not content scheduled for the next training cycle. This aligns with findings from Moz’s research on AI Overview optimisation, which identified fresh, structured content as the primary driver of AI citation selection.

Based on the patterns I measured across all three verticals, here is what determines whether a brand fades or rises in AI retrieval:

- Fading brands rely on legacy content from 2020–2023 that still lives in training data but no longer ranks or gets cited on the live web

- Rising brands publish structured, query-answering content at high frequency — exactly the type of content Perplexity retrieves

- Dominant brands score high on both memory and retrieval because they never stopped producing citable content

- AI Memory brands look healthy in ChatGPT but are losing ground in every platform that reads the current web
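The four archetypes above can be approximated with a simple rule of thumb. The thresholds in this sketch (`gap`, `floor`, `strong`) are hypothetical values I picked so that the labels match the examples in this report. They are not the cut-offs used by the index, and Claude's ChatGPT score is derived from its quoted Perplexity score (63.2) and delta (+50.1).

```python
def archetype(
    chatgpt: float,
    perplexity: float,
    *,
    gap: float = 20.0,     # HYPOTHETICAL: minimum |delta| that counts as "large"
    floor: float = 20.0,   # HYPOTHETICAL: below this, live web presence is marginal
    strong: float = 70.0,  # HYPOTHETICAL: at or above this on both, the brand is dominant
) -> str:
    """Label a brand from its ChatGPT and Perplexity scores (illustrative rule only)."""
    delta = perplexity - chatgpt
    if delta <= -gap:
        # Memory is well ahead of the live web.
        return "Fading" if perplexity < floor else "AI Memory"
    if delta >= gap:
        # The live web is well ahead of memory.
        return "Live Search"
    if chatgpt >= strong and perplexity >= strong:
        return "Dominant"
    return "Unclassified"


# (ChatGPT, Perplexity) scores quoted or derived in this report.
EXAMPLES = {
    "Semrush": (90.5, 100.0),
    "Ahrefs": (100.0, 51.9),
    "Moz": (47.6, 3.7),
    "Claude": (13.1, 63.2),  # ChatGPT score derived: 63.2 - 50.1
    "Salesforce": (100.0, 100.0),
    "Jasper": (39.1, 52.6),
}
LABELS = {brand: archetype(*pair) for brand, pair in EXAMPLES.items()}

for brand, label in LABELS.items():
    print(f"{brand:<11} {label}")
```

Under these assumed thresholds Jasper comes out as "Unclassified": its +13.5 delta is positive but not large, and neither score is high enough to call it dominant.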

If you have a large positive delta, you are ahead of where training data currently places you. That advantage is temporary. The question is whether you can maintain the live web lead before the next round of model training narrows the gap.

If you work for a competitor of Moz, Ahrefs, SugarCRM, or Yoast SEO — this data is a brief worth writing. If you are one of those brands — this is a signal worth taking seriously before next month’s run confirms it.

See the full interactive leaderboard

All 28 brands, all three platforms. Sortable by vertical, delta, and archetype. Updated monthly.

Key Takeaways from the March 2026 Brand Citation Index

- The delta between ChatGPT and Perplexity reveals brand health — ChatGPT reflects AI memory (training data). Perplexity reflects the live web. The gap between them is the signal.

- A large negative delta is a live web problem — If ChatGPT scores you higher than Perplexity, the internet has moved on from your brand. The fix is content that gets indexed, cited, and linked today.

- A large positive delta is a temporary advantage — If Perplexity scores you higher than ChatGPT, you are winning on the live web. That lead will narrow when models retrain.

- Fading brands are not dead brands — Moz, Ahrefs, and SugarCRM still have presence. But the trajectory is visible. This data is a leading indicator, not a death certificate.

- Dominant brands score high everywhere — Salesforce, ChatGPT, and HubSpot show consistency across all platforms. That is the defensible position to aim for.

- GEO Brand Citation Index (full explainer): all 28 brand scores · all 54 queries · what every archetype means

- Scoring methodology: formula, normalisation, worked examples, academic references, limitations

- What is Generative Engine Optimisation? The discipline behind why any of this matters

- Experiment 001, Declarative vs Narrative Structure: does how you write content affect how AI cites it?

- About Artur Ferreira: why I built this and what The GEO Lab is for

“The GEO Brand Citation Index methodology — tracking the delta between ChatGPT (training data memory) and Perplexity (live web retrieval) — surfaced brand health signals that no other tool measures. The 43.9-point gap for Moz between ChatGPT and Perplexity is not a data artefact. It is a documented, timestamped measurement of how the live web has moved on.”

— Artur Ferreira, Founder of The GEO Lab · Creator of the GEO Brand Citation Index

“Running 54 queries across three AI platforms and scoring 28 brands revealed patterns invisible to traditional analytics. The finding that Semrush scores 100 on both Perplexity and Gemini while Ahrefs shows a negative 48-point delta demonstrates that brands previously treated as interchangeable now occupy fundamentally different positions in AI retrieval.”

— Artur Ferreira, Based on GEO Brand Citation Index methodology

Technical health is the foundation of AI retrievability. The GEO Lab’s PageSpeed quad-100 case study demonstrates how eliminating render-blocking CSS improved crawl efficiency — the same technical baseline that determines whether brand content enters AI retrieval pools.

The scoring methodology behind the Citation Index maps directly to the GEO Stack five-layer framework — each brand’s visibility can be traced through the five layers from retrieval probability to system memory.

The Delta (Δ) metric in this index directly measures retrieval probability differences between platforms that use training data versus live web retrieval.

“This research provides exactly the kind of evidence-based approach that the GEO community needs. Clear methodology, reproducible results, and practical implications.”

— Daniel Cardoso, SEO Lead, Lisbon

Frequently Asked Questions

Why did Moz score only 3.7 in Perplexity?

Moz’s low Perplexity score suggests limited presence in current web content that Perplexity’s live search retrieves, despite strong historical authority in AI training data.

What does this mean for Moz’s GEO strategy?

Moz may need to focus on creating more frequently updated, citable content optimized for live web retrieval rather than relying on legacy authority.

How does this compare to other SEO tools?

The full GEO Brand Citation Index shows how Moz compares to Ahrefs, Semrush, and other SEO tools across all three AI platforms.

Is a low Perplexity score always bad?

Not necessarily. It depends on where your audience searches. Brands strong in ChatGPT may prioritize that platform’s training data presence.

How can brands improve their Perplexity scores?

Based on the patterns I observed across all 28 brands in this dataset, the brands scoring highest on Perplexity share these traits:

- Frequent publishing cadence — at least weekly content that answers specific queries

- Structured formatting — bullet lists, tables, and clear H2/H3 hierarchy that Perplexity can extract

- External citations and data — original research, benchmarks, and statistics that other sites reference

- Technical SEO foundations — fast load times, clean indexing, and proper canonical tags

Perplexity retrieves from live web results, so active content publishing and high extractability matter more than domain authority alone.
