Most people tracking GEO are measuring the wrong thing — or measuring the right thing once and calling it data. Here’s the protocol that actually produces numbers you can act on.

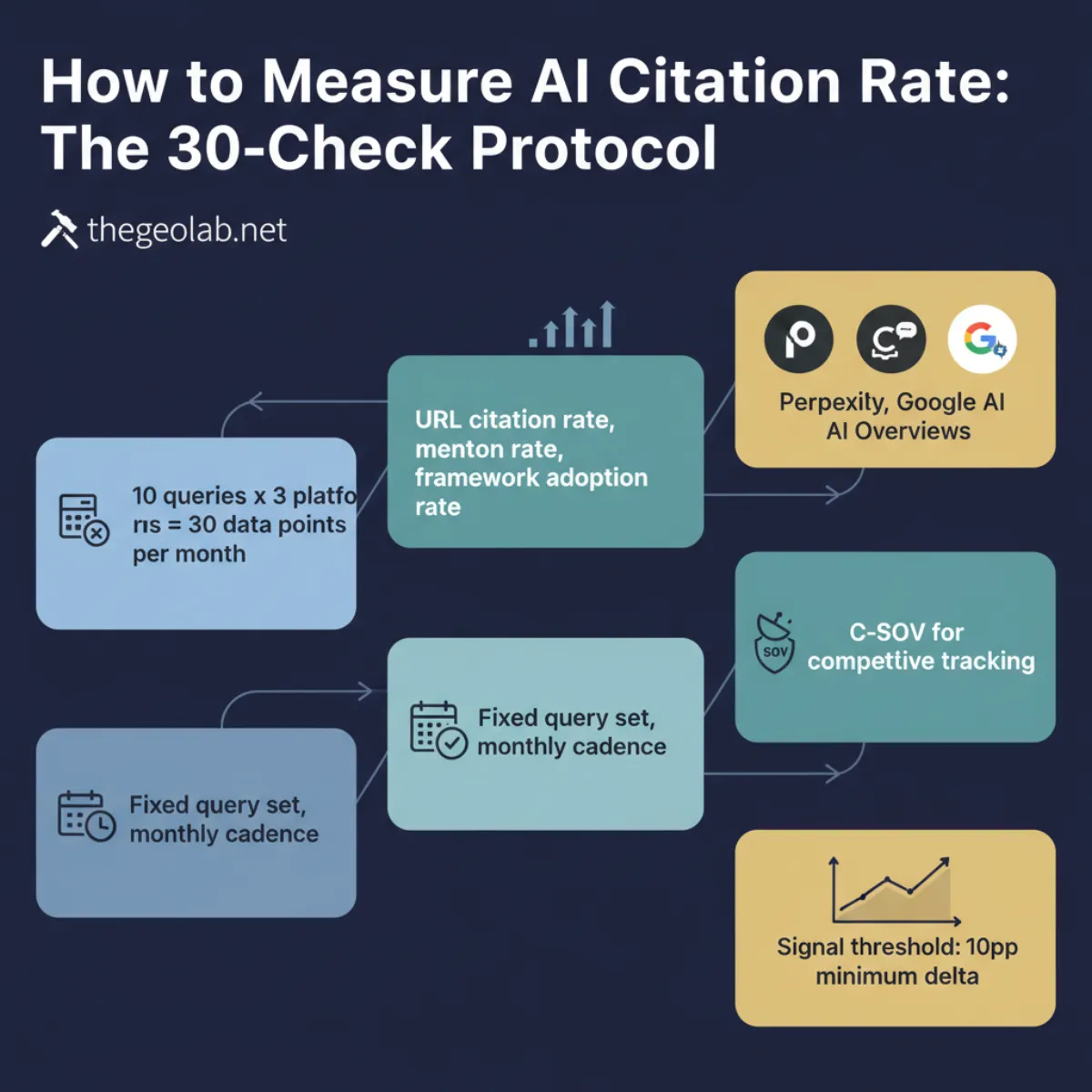

AI citation rate is the percentage of test queries where your domain URL appears as a named source in an AI-generated response. The GEO Lab 30-check protocol runs 10 fixed queries across Perplexity, ChatGPT, and Google AI Overviews — monthly, same query set, same platforms. The combined rate gives you a longitudinal tracking metric. The per-platform breakdown tells you where you’re winning and where you’re not. A second metric — framework adoption rate — tracks responses where AI uses your concepts or terminology as the answer structure, even without a URL citation. Both are required for a complete picture.

Why Measuring AI Citation Rate Is Harder Than It Looks

There’s a spreadsheet floating around in every GEO community that tracks “AI visibility”. It has a column for ChatGPT, a column for Perplexity, and a column marked “score”. Someone runs a few queries, types yes or no, and announces a citation rate.

That’s not a citation rate. That’s an anecdote with a spreadsheet attached.

The problem is not that people are lazy. It’s that AI search results are non-deterministic — the same query on the same platform can return different sources on different runs. Run it once and you’ve measured a moment. Run it consistently, with a fixed query set, on a fixed schedule, and you’ve measured a trend. Only the trend is interpretable.

The second problem is conflation. Most measurement attempts don’t distinguish between a citation (URL appears as a named source), a mention (brand referred to without a link), and framework adoption (platform builds its answer from your concepts without naming you at all). These are three different signals. Treating them as one produces a number that means nothing.

The 30-check protocol was built to solve both problems: fixed query set, fixed platforms, fixed cadence, and a clear distinction between the three signal types.

Three Metrics, Three Different Signals

Before running a single query, get the definitions straight. The GEO Lab uses three distinct measurements, each tracking a different stage of AI retrieval.

URL citation rate

URL citation rate is the percentage of test queries where your domain URL appears in the AI platform’s source panel or inline citations. This is the primary longitudinal tracking metric — the number that goes in your monthly log and gets compared month-on-month.

URL citation rate = citations received ÷ total queries × 100

Example: 2 citations on 10 queries = 20% URL citation rate on that platform.

Mention rate

Mention rate is the percentage of test queries where the AI response refers to your brand, site, or author by name — without including a URL link. Mentions don’t drive traffic. They are a signal of brand recognition in the retrieval corpus, not a citation. Record them separately. Don’t count them as citations.

Framework adoption rate

Framework adoption rate is the percentage of test queries where the AI platform builds its answer using your terminology, framework structure, or methodology — whether or not a URL appears. This is the leading indicator. When framework adoption rate is high and URL citation rate is low, it means your content is structuring the answer but your domain authority is not yet strong enough to trigger a direct URL cite. That gap closes as authority accumulates.

The GEO Lab’s E014 data (March 2026): URL citation rate on Perplexity was 20% (2 of 10 queries). Framework adoption rate on the same query set was 90% (9 of 10). Both metrics are required to understand what’s actually happening — the 20% figure alone would suggest a weak result; the 90% figure reveals a strong one at a different layer.
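To make the three definitions concrete, here is a minimal Python sketch of how a single response could be sorted into the three signals. The function name `classify_response`, its parameters, and the sample answer text are illustrative assumptions, not part of The GEO Lab's tooling. Substring matching is a crude stand-in for the human judgement that framework adoption really calls for.

```python
from urllib.parse import urlparse


def classify_response(answer_text, source_urls, domain, brand_names, framework_terms):
    """Return the three signals for one AI response as booleans (hypothetical helper)."""
    text = answer_text.lower()
    domain = domain.lower()

    def host_matches(url):
        # Match the bare domain and any subdomain such as www.
        host = (urlparse(url).hostname or "").lower()
        return host == domain or host.endswith("." + domain)

    cited = any(host_matches(url) for url in source_urls)
    named = any(name.lower() in text for name in brand_names)
    return {
        "cited": cited,
        # A mention is a brand reference that comes without a URL citation.
        "mentioned": named and not cited,
        # Assumption: a framework term appearing in the answer counts as adoption.
        "framework_adoption": any(term.lower() in text for term in framework_terms),
    }


# Invented sample response, for illustration only.
signals = classify_response(
    answer_text="Retrieval Probability is one concept used to explain AI search visibility.",
    source_urls=["https://www.example.com/guide"],
    domain="thegeolab.net",
    brand_names=["The GEO Lab"],
    framework_terms=["Retrieval Probability"],
)
print(signals)
```

In this sample the answer borrows the terminology but neither links to nor names the site, which is the high-adoption, low-citation pattern described above.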


The 30-Check Protocol: Step by Step

The 30-check protocol runs 10 queries across 3 platforms. 30 data points per measurement round. Here is the exact procedure The GEO Lab uses for every monthly E014 measurement.

- Build a fixed query set of 10. Select 10 queries that map to your core topic cluster and to pages that exist on your site. These queries must remain identical across every measurement round: never swap a query mid-experiment. Swapping queries breaks longitudinal comparability. If you want to test new queries, start a separate query set and run it in parallel for at least 3 rounds before treating it as primary data.

- Calibrate query specificity. Mix concept-specific queries (“What is Retrieval Probability in AI search?”) with category-level queries (“What is GEO?”). Concept-specific queries test your namespace ownership. Category-level queries test your domain authority relative to established players. Both are required. A citation rate built entirely on branded concept queries is not the same achievement as a citation rate that includes category-level wins.

- Run each query on Perplexity. Use Perplexity’s standard web search mode (not the deep research or focus modes). Record: whether your URL appeared in the source panel or inline, whether your brand was mentioned without a link, and whether the answer structure uses your framework terminology. Do not click sources. Read only the answer and the source panel as rendered.

- Run each query on ChatGPT. Use ChatGPT with web search enabled. The default model does not always trigger web search, so confirm the search icon shows active before recording a result. If web search did not trigger, re-run the query. Record the same three signals: URL citation, brand mention, framework adoption.

- Run each query on Google AI Overviews. Run each query in Google Search and record whether an AI Overview (AIO) appeared. If it did: record whether your URL appeared as a source, whether your brand was mentioned in the overview text, and whether the AIO structure follows your framework. If no AIO appeared, record “no AIO”. This is a data point, not a gap in the measurement.

- Record results immediately. Don’t rely on memory. Record each result immediately after running the query: platform, query number, cited (Y/N), mentioned (Y/N), framework adoption (Y/N), and a short note on what was cited or why not. The notes are what make the data actionable.
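The recording step can be kept honest with a small, fixed log format. The sketch below is one possible layout in Python, assuming a hypothetical `CheckResult` record whose fields mirror the list above. The class name, field names, and the CSV format are assumptions for illustration, not The GEO Lab's actual template.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class CheckResult:
    platform: str             # "perplexity", "chatgpt", or "google_aio" (assumed labels)
    query_number: int         # position in the fixed query set, 1 to 10
    cited: bool               # URL appeared in source panel or inline
    mentioned: bool           # brand named without a link
    framework_adoption: bool  # answer structured around your terminology
    note: str = ""            # what was cited, or why not


def to_csv(results):
    """Serialise results to CSV text, writing booleans as Y/N."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(CheckResult)])
    writer.writeheader()
    for result in results:
        row = asdict(result)
        for key in ("cited", "mentioned", "framework_adoption"):
            row[key] = "Y" if row[key] else "N"
        writer.writerow(row)
    return buffer.getvalue()


# Invented sample rows, for illustration only.
sample = [
    CheckResult("perplexity", 1, True, False, True, "cited on concept query"),
    CheckResult("google_aio", 1, False, False, False, "no AIO"),
]
print(to_csv(sample))
```

Keeping “no AIO” in the note column preserves it as a data point instead of leaving a blank row.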

Calculating the three rates

After running all 30 checks, calculate three figures:

Per-platform URL citation rate = platform citations ÷ 10 queries × 100

Calculate separately for Perplexity, ChatGPT, and Google AI Overviews.

Combined URL citation rate = total citations across all platforms ÷ 30 data points × 100

This is the headline number for longitudinal tracking.

Framework adoption rate = queries where the answer uses your framework ÷ 10 queries × 100

Track on Perplexity first, since it is the most transparent about answer sourcing.
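The three calculations can be sketched in a few lines of Python. The function `summarise_round` and the dictionary keys are hypothetical names. The sample data is shaped to match the Perplexity figures reported for E014 (2 citations and 9 framework adoptions on 10 queries, with no citations on the other two platforms). Framework adoption on ChatGPT and Google AI Overviews is set to `False` purely as a placeholder, not as a reported result.

```python
from collections import Counter

PLATFORMS = ("perplexity", "chatgpt", "google_aio")  # assumed labels
QUERIES_PER_PLATFORM = 10


def summarise_round(checks):
    """checks: iterable of dicts with 'platform', 'cited', 'framework_adoption'."""
    citations = Counter()
    adoptions = Counter()
    for check in checks:
        # Booleans add as 0 or 1.
        citations[check["platform"]] += check["cited"]
        adoptions[check["platform"]] += check["framework_adoption"]
    per_platform = {p: 100 * citations[p] / QUERIES_PER_PLATFORM for p in PLATFORMS}
    total_checks = QUERIES_PER_PLATFORM * len(PLATFORMS)
    combined = 100 * sum(citations.values()) / total_checks
    framework = {p: 100 * adoptions[p] / QUERIES_PER_PLATFORM for p in PLATFORMS}
    return per_platform, combined, framework


# Illustrative round: placeholder values outside Perplexity.
checks = []
for platform in PLATFORMS:
    for query_number in range(1, QUERIES_PER_PLATFORM + 1):
        on_perplexity = platform == "perplexity"
        checks.append({
            "platform": platform,
            "cited": on_perplexity and query_number <= 2,
            "framework_adoption": on_perplexity and query_number <= 9,
        })

per_platform, combined, framework = summarise_round(checks)
print(per_platform["perplexity"], round(combined, 1), framework["perplexity"])
# prints: 20.0 6.7 90.0
```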

What a Good Citation Rate Actually Looks Like

There is no universal benchmark. Anyone offering one without context is guessing. What citation rate means depends on three variables: site age, domain authority, and query specificity.

| Site profile | Realistic range | What it signals |
|---|---|---|
| New site, concept queries | 5–20% Perplexity | Namespace ownership building. Normal for a site under 3 months with structured content on proprietary concepts. |
| New site, category queries | 0–5% Perplexity | Expected. DR 80+ domains dominate category queries. Content alone cannot overcome authority gap in months 1–6. |
| Established site, concept queries | 30–60% Perplexity | Strong retrieval presence on owned terms. Content and authority are aligned. |
| Established site, category queries | 10–30% Perplexity | Competing with dominant sources. Achievable with DR 50+ and strong extractability signals. |
| Any site, ChatGPT | 0–10% | ChatGPT’s web search retrieval is less transparent and more conservative than Perplexity. Lower citation rates are the norm, not the exception. |
| Any site, Google AIO | 0–15% | Google AI Overviews pull from the top-ranking pages. AIO citation correlates more strongly with organic ranking position than with GEO-specific signals. |

The more useful benchmark than any absolute figure is your own month-on-month trend. A site at 6.7% combined citation rate in Month 1, moving to 12% in Month 2 and 18% in Month 3, is a site with a working accumulation curve — regardless of how that compares to an “industry average”.

The signal threshold rule

At a sample size of 10 queries per platform, a delta of less than 10 percentage points between two measurement rounds is within the noise margin of single-pass measurement. The GEO Lab’s measurement protocol treats a ≥10pp delta as a real signal and a <10pp delta as inconclusive until replicated across multiple rounds. Don’t over-interpret small movements on a single data point.
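As a rough illustration, the threshold rule reduces to a single comparison. The function name `classify_delta` and its labels are hypothetical. The 10-point default comes from the rule above.

```python
def classify_delta(previous_rate, current_rate, threshold_pp=10.0):
    """Label a round-over-round change in citation rate (rates in percent)."""
    delta = current_rate - previous_rate
    # Rises and falls are treated the same way: only the size of the move matters.
    if abs(delta) >= threshold_pp:
        return "signal"
    return "inconclusive"


print(classify_delta(10.0, 20.0))  # signal
print(classify_delta(6.7, 12.0))   # inconclusive
```

An “inconclusive” label is not a verdict of no change. It means the movement needs replicating across further rounds before anyone acts on it.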

Citation Share of Voice (C-SOV): The Competitive Metric

Citation rate measures your domain in isolation. Citation share of voice (C-SOV) measures your domain relative to the field. Both are required for competitive GEO tracking.

C-SOV = your domain’s citations ÷ total citations across all domains × 100

Run on the same query set. Count every domain cited across all responses, then calculate your share.

C-SOV requires running competitor tracking alongside your own measurement. For each query where you were not cited, record which domains were. After 10 queries, you have a citation landscape: who owns what, and where you’re being displaced.
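A minimal sketch of the C-SOV calculation, assuming each response has already been reduced to a list of cited domains. The function name and the example domains are hypothetical. Counting a domain at most once per response is also an assumption, since the formula above does not say how repeat citations within one answer should be handled.

```python
from collections import Counter


def citation_share_of_voice(cited_domains_per_response):
    """Return each domain's share of all citations, in percent."""
    counts = Counter(
        domain
        for domains in cited_domains_per_response
        for domain in set(domains)  # assumption: one count per domain per response
    )
    total = sum(counts.values())
    if total == 0:
        return {}
    return {domain: 100 * n / total for domain, n in counts.items()}


# Invented citation landscape for four responses.
responses = [
    ["competitor-a.example", "yourdomain.example"],
    ["competitor-a.example"],
    ["competitor-b.example", "competitor-a.example"],
    ["yourdomain.example"],
]
shares = citation_share_of_voice(responses)
print(shares["competitor-a.example"])          # 50.0
print(round(shares["yourdomain.example"], 1))  # 33.3
```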

How to use C-SOV for content decisions

A query where Semrush holds 40% C-SOV and you hold 0% is a domain authority problem — no amount of content optimisation closes that gap quickly. A query where a niche blog holds 40% C-SOV and you hold 0% is a content structure problem — extractability and entity clarity improvements directly target the gap. Knowing which type of problem you have determines where to spend effort.

Single-Pass vs Iterated Measurement

AI search results are non-deterministic. The same query can return different sources on different runs. Single-pass measurement — running each query once — produces higher variance than iterated measurement. The GEO Lab uses single-pass for the first two monthly rounds and moves to 3-iteration measurement from Month 2 onwards.

Single-pass measurement

Single-pass is acceptable for early-stage longitudinal tracking. The month-on-month delta is more informative than the absolute number at this stage. A combined citation rate of 6.7% (2 of 30 checks) from a single-pass round of the 10-query set carries a confidence interval of roughly ±15 percentage points. The trend across multiple rounds, not the individual data point, is the interpretable signal.

3-iteration measurement

From Month 2 onwards, run each query 3 times per platform and take the mean. This reduces the confidence interval to roughly ±8 percentage points on a 10-query set. The tradeoff: 30 query runs per platform instead of 10, or 90 per round across all three platforms instead of 30. For monthly tracking, 3 iterations is the right balance of precision and effort. More than 3 iterations per query produces diminishing returns relative to the time investment.
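Taking the mean over iterations can be sketched as follows. The function name `iterated_citation_rate` and the input shape (one list of cited/not-cited booleans per query) are assumptions for illustration.

```python
from statistics import mean


def iterated_citation_rate(runs_per_query):
    """runs_per_query: one list of booleans per query, True where the URL was cited."""
    # Average within each query first, then across the query set.
    per_query = [mean(1.0 if cited else 0.0 for cited in runs) for runs in runs_per_query]
    return 100 * mean(per_query)


# Invented example: 10 queries, 3 runs each on one platform.
runs = [[True, True, True], [True, False, False]] + [[False, False, False]] * 8
print(round(iterated_citation_rate(runs), 1))  # 13.3
```

A query cited in only 1 of 3 runs contributes a third of a citation, which a single pass would have recorded as either a full hit or a full miss.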

When to use the 75-iteration approach

The 75-iteration approach used in Experiment 001 is for controlled experiments testing a specific variable — not for longitudinal tracking. 75 iterations per query produces a tight confidence interval suitable for publishing a causal finding. For monthly citation rate monitoring, 3 iterations is sufficient.

What to Do With the Data

A citation rate figure with no action attached is an interesting number. The per-query breakdown is what makes it useful.

Queries where you’re cited: defend them

Pages currently generating citations are subject to the Scrunch citation decay finding — a 4.5-week half-life for the average site. Keep cited pages fresh. Update them monthly with new data, corrected figures, or expanded FAQs. A page that stops being refreshed will start losing citation presence within 4–9 weeks.
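If the half-life figure is read as simple exponential decay (an assumption: only the 4.5-week number is cited here, not the shape of the curve), the share of citation presence expected to remain on an unrefreshed page can be sketched like this. The function name is hypothetical.

```python
def remaining_citation_share(weeks_since_refresh, half_life_weeks=4.5):
    """Fraction of citation presence left, assuming exponential decay."""
    if weeks_since_refresh < 0:
        raise ValueError("weeks_since_refresh must be non-negative")
    return 0.5 ** (weeks_since_refresh / half_life_weeks)


print(remaining_citation_share(4.5))  # 0.5
print(remaining_citation_share(9.0))  # 0.25
```

Under that assumption, a page left alone for two half-lives keeps about a quarter of its citation presence, which is the case for a monthly refresh cycle.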

Queries where you’re not cited: diagnose before acting

A 0% citation on a query has two possible causes with different fixes. If the dominant sources have DR 80+ (Semrush, Wikipedia, major publications), the problem is domain authority — content changes won’t close the gap quickly. If the dominant sources are DR 20–50 comparable sites, the problem is extractability or entity clarity — structural changes directly target the gap.

Check which type of problem you have before writing new content. The Extractability page covers the structural signals that determine whether a page can be cited even when it’s already in the retrieval pool.
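The two-way diagnosis can be written down as a small rule. This is a sketch only: the function name `diagnose_gap` is hypothetical, the input is assumed to be one representative DR figure for the sources that dominate the query, and DR values outside the two bands named above are left unclassified because no rule is given for them.

```python
def diagnose_gap(dominant_source_dr):
    """Map the DR of the dominant cited sources to a likely problem type."""
    if dominant_source_dr >= 80:
        return "domain authority"
    if 20 <= dominant_source_dr <= 50:
        return "extractability or entity clarity"
    # The bands in the text do not cover this range.
    return "unclassified"


print(diagnose_gap(90))  # domain authority
print(diagnose_gap(35))  # extractability or entity clarity
print(diagnose_gap(65))  # unclassified
```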

High framework adoption, low URL citation: build authority

If framework adoption rate is high (your terminology is structuring AI answers) but URL citation rate is low (your URL isn’t appearing), the content is working but the domain isn’t trusted enough for the platform to cite it directly. The fix is authority accumulation: external links, consistent publishing, entity associations across the web. This is System Memory work — Layer 5 of the GEO Stack.


What Practitioners Say

The distinction between URL citation rate and framework adoption rate is the most practically useful thing I’ve seen written about GEO measurement. We had high framework adoption for months without realising it — the platform was using our terminology but not linking to us. Knowing the gap exists is the first step to closing it.

The signal threshold rule — treating deltas under 10pp as noise at this sample size — is the kind of methodological discipline most GEO measurement guides skip entirely. Without it, practitioners chase measurement noise and waste effort on interventions that weren’t causing anything.

Frequently Asked Questions

What is AI citation rate?

AI citation rate is the percentage of test queries where your domain URL appears as a named source in an AI-generated response. It is measured across a fixed query set on platforms including Perplexity, ChatGPT, and Google AI Overviews. A citation requires a URL link — mentions without a link are recorded separately and do not count toward the citation rate.

How do you measure AI citation rate?

Run 10 fixed queries across 3 platforms (Perplexity, ChatGPT, Google AI Overviews). Record whether your domain URL appears as a named source in each response. Citation rate = citations received ÷ total queries × 100. Use the same query set every round, never swap queries, and measure in the same week each month. The GEO Lab 30-check protocol (10 queries × 3 platforms = 30 data points) is the standard measurement approach.

What is a good AI citation rate benchmark?

For new sites under 6 months targeting concept-specific queries, 5–20% on Perplexity is a realistic range. For category-level queries, 0–5% is expected regardless of content quality — domain authority determines those positions. The more useful benchmark is your own month-on-month trend. An accumulating citation rate over 6 months outperforms a static or declining one regardless of the absolute figure.

What is the difference between URL citation rate and framework adoption rate?

URL citation rate measures how often your domain URL appears in the AI platform’s source panel or inline citations. Framework adoption rate measures how often the platform builds its answer using your concepts, terminology, or methodology — with or without the URL. URL citation rate is the longitudinal tracking metric. Framework adoption rate is the leading indicator: high adoption with low URL citation signals that content is working but domain authority needs to catch up.

What is citation share of voice (C-SOV)?

C-SOV measures your domain’s citations as a percentage of all citations received across the same query set. Formula: your citations ÷ total citations across all domains × 100. C-SOV tells you how you’re performing relative to competitors on the same queries — essential for identifying whether a citation gap is a domain authority problem or a content structure problem.

How often should you measure AI citation rate?

Monthly, in the same week of each month. Never remeasure within 7 days of the previous round: AI retrieval corpus updates are not instantaneous, and rapid remeasurement produces noise rather than signal. Six monthly measurements are the minimum data set for detecting a reliable trend in citation rate accumulation or decay.

What is the GEO Lab’s E014 experiment?

E014 is The GEO Lab’s System Memory accumulation experiment — a 6-month longitudinal measurement of thegeolab.net’s citation rate across the 30-check protocol. Month 1 (March 2026) recorded a combined citation rate of 6.7% (up from 3% at February baseline) with a 90% framework adoption rate on Perplexity. Results publish monthly through September 2026.

AI citation rate is measured by running a fixed query set across Perplexity, ChatGPT, and Google AI Overviews monthly. The 30-check protocol (10 queries × 3 platforms) produces three figures: URL citation rate (the longitudinal tracking metric), mention rate (brand recognition signal), and framework adoption rate (the leading indicator). Deltas under 10 percentage points at this sample size are noise. Trends across 6 monthly rounds are signal.

Track both URL citation rate and framework adoption rate. High adoption with low URL citation is the most common pattern for new sites building authority — and it’s the most actionable finding in the data.