The honest reason E002 isn’t publishing today, why this data is more interesting than the experiment I planned, and what the first month of citation rate tracking shows.

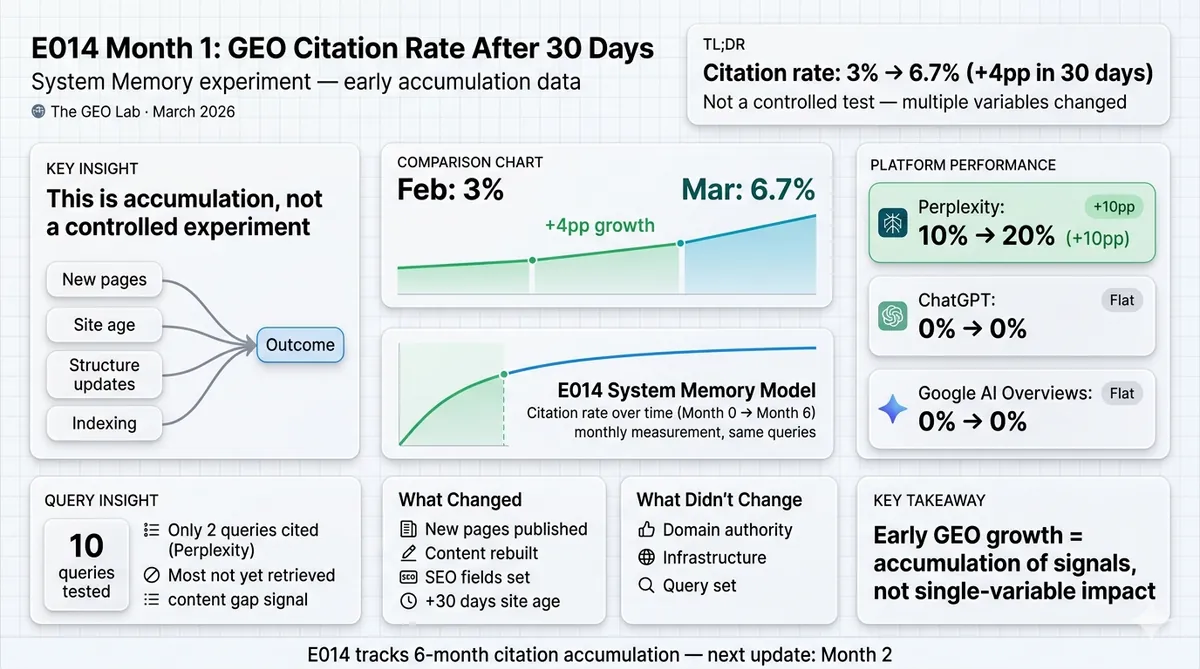

E002 (entity density test) was scheduled to publish today. The data I have, thegeolab.net citation rate measured one month ago versus today, does not test entity density as a controlled variable. Too much changed between the two measurements to attribute any delta to entity naming alone. The honest label for this data is E014 Month 1: the first measurement in the System Memory accumulation experiment. As of 28 March 2026, thegeolab.net’s combined citation rate across Perplexity, ChatGPT, and Google AI Overviews is 6.7% (2 of 30 checks), up from 3.3% (1 of 30 checks) at the February baseline: a change of about +3.3pp over 30 days. Below: what drove it, what didn’t, and what to expect in Month 2.

Why This Is Not E002 — and Why That Matters

I planned to publish Experiment 002 today. The Experiment 001 results post promised it. I even updated the date on the live Experiment 001 page yesterday when I realised the original date had passed.

Then I looked at what I actually had.

E002 was designed to test entity density as a controlled variable: three simultaneous versions of the same content — low, medium, and high entity naming density — measured on the same day on the same platform. Everything identical except how often the primary entity is named explicitly per paragraph. That test produces a clean answer to the question Experiment 001 left open: is structure doing the work, or is entity naming doing the work, or both?

What I have instead is this: citation rate measurements on thegeolab.net from approximately one month ago, and citation rate measurements from today. Between those two measurement points, several things changed. New pages were published. Two existing pages were substantially rebuilt. RankMath fields were properly set across the site. The site aged 30 days and accumulated whatever signals 30 days of existence produces. I cannot look at a citation rate delta between those two dates and attribute it to entity density, because I did not control for entity density. I controlled for nothing. I just measured twice.

That is not E002. That is E014 — the System Memory accumulation experiment that was always planned as a longitudinal monthly measurement. The data I have is the right data for that experiment. Mislabelling it would be the kind of methodology problem I published a post criticising two days ago.

E002 status: Delayed. The controlled design — three simultaneous entity density versions, same-day measurement — will run as a proper single-day test. When it runs, results publish within the week. No new promised date until I have the data in hand.

The E014 System Memory Experiment Design

System Memory is Layer 5 of the GEO Stack — the accumulated contextual model AI retrieval systems build about a site over time. Unlike Layers 1–4, which optimise individual retrieval events, Layer 5 compounds. The hypothesis: a site that publishes consistently on a focused topic cluster, with correctly structured content, will see citation rate accumulate over time as entity associations build in the retrieval corpus.

E014 tests that hypothesis with a simple longitudinal design: run the same 10 queries across the same 3 platforms every 30 days for 6 months. Record citation rate per query per platform. Plot the curve. If System Memory is real and measurable, the curve goes up over time. If it decays between measurement rounds — as Scrunch’s March 2026 research suggests citations do — the curve shows that too.

Query set: 10 core GEO concept queries. Same 10 queries every month. No swapping. The fixed query set is what makes month-on-month comparison valid.

Platforms: Perplexity, ChatGPT, and Google AI Overviews. Citation rate recorded separately per platform per query.

Cadence: Monthly. Same week of each month where possible. Never remeasure within 7 days of the previous round.

Duration: March 2026 (Month 0 baseline) through September 2026 (Month 6). 6 data points. Minimum for detecting a trend.

The 10 fixed queries are:

- What is GEO (Generative Engine Optimisation)?

- What is Retrieval Probability in AI search?

- What is LLM readability?

- GEO vs traditional SEO — what changed?

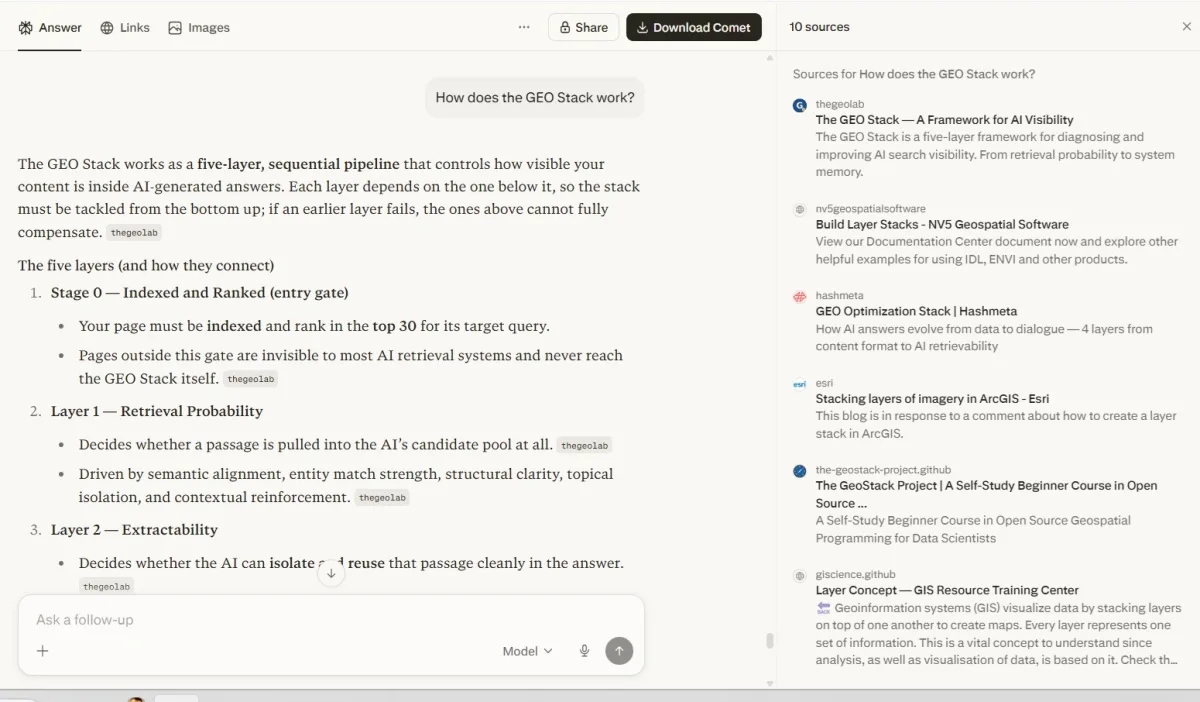

- How does the GEO Stack work?

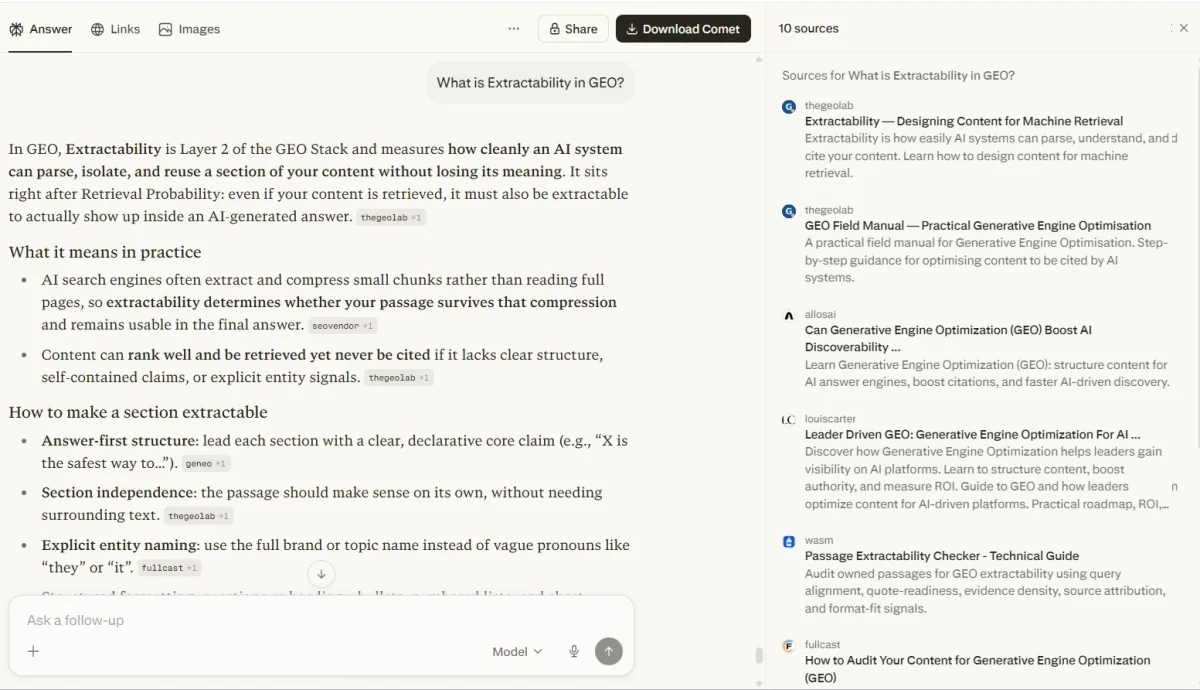

- What is Extractability in GEO?

- Does GEO actually work?

- How to measure AI citation rate?

- What is System Memory in GEO?

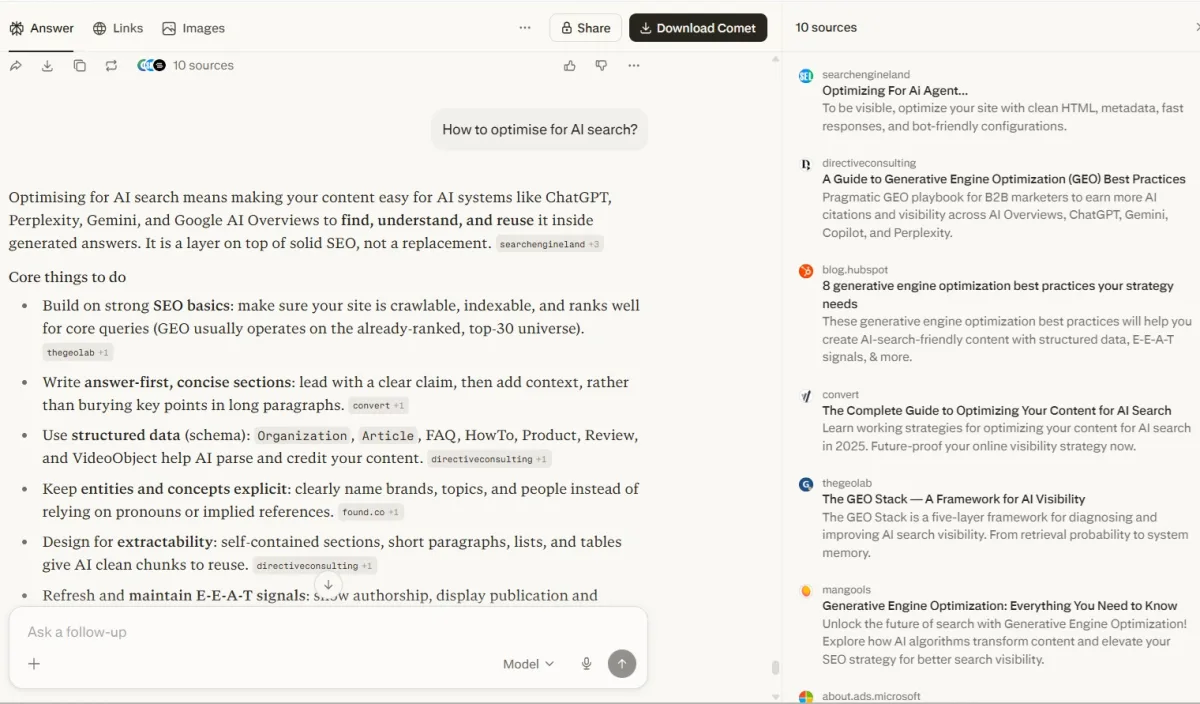

- How do AI search engines select content to cite?
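The fixed query set and the three platforms define a grid of 30 checks per measurement round. The sketch below shows one way to represent that grid in Python. The query strings are copied verbatim from the list above; the names `QUERIES`, `PLATFORMS`, and `build_checks` are illustrative and are not taken from any published GEO Lab tooling.

```python
from itertools import product

# The 10 fixed E014 queries, copied verbatim from the list above.
QUERIES = [
    "What is GEO (Generative Engine Optimisation)?",
    "What is Retrieval Probability in AI search?",
    "What is LLM readability?",
    "GEO vs traditional SEO — what changed?",
    "How does the GEO Stack work?",
    "What is Extractability in GEO?",
    "Does GEO actually work?",
    "How to measure AI citation rate?",
    "What is System Memory in GEO?",
    "How do AI search engines select content to cite?",
]

# The 3 platforms measured in every round.
PLATFORMS = ["Perplexity", "ChatGPT", "Google AI Overviews"]


def build_checks(queries, platforms):
    """Return one (query, platform) pair per check in a measurement round."""
    return list(product(queries, platforms))


checks = build_checks(QUERIES, PLATFORMS)
print(len(checks))  # 30
```

Keeping the grid as data makes it easy to confirm that every round runs the same 30 checks, which is the property the month-on-month comparison depends on.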

Methodology: How Month 1 Was Measured

The February baseline was run in late February 2026 — the first week the site had enough content to generate meaningful citation data. The March measurement was run on 27–28 March 2026, approximately 30 days later.

February baseline: Late February 2026. Site had been live approximately 2–3 weeks. 35 published pages. Ran each of the 10 queries once per platform. No iteration repetition at this stage: single-pass measurement to establish the starting point.

March measurement: 27–28 March 2026. Same 10 queries, same 3 platforms. Single-pass. 43 published pages at time of measurement. Results recorded immediately after each query session.

Citation definition: A citation is a URL from thegeolab.net appearing as a named source in the AI response. Mentions without a link are recorded separately as “mentions” and not counted in the citation rate.

Iterations: Single-pass measurement produces higher variance than iterated testing. Month 1 data should be read as directional rather than precise. From Month 2 onward, each query runs 3 iterations to reduce variance.

Variance note: Single-pass measurements have higher noise than the 75-iteration approach used in Experiment 001. A citation rate of 6.7% on a single-pass 10-query test has a confidence interval of roughly ±15pp at this sample size. The month-on-month delta is more informative than either absolute number in isolation. As the measurement rounds accumulate, the trend line becomes more reliable than any single data point.
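One way to make the variance caveat concrete is to put an interval around the observed proportion. The sketch below uses a Wilson score interval. That choice is an assumption made here for illustration and is not necessarily how the rough ±15pp figure above was derived. The counts (2 cited checks out of 30) come from the Month 1 results; the function name `wilson_interval` is hypothetical.

```python
import math


def wilson_interval(cited, total, z=1.96):
    """Wilson score interval for a proportion; z=1.96 gives roughly 95% coverage."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = cited / total
    denom = 1 + z**2 / total
    centre = (p + z**2 / (2 * total)) / denom
    half_width = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return centre - half_width, centre + half_width


# Month 1: 2 cited checks out of 30 (10 queries x 3 platforms, single pass).
low, high = wilson_interval(cited=2, total=30)
print(f"{low:.1%} to {high:.1%}")
```

Under these assumptions the interval runs from roughly 2% to roughly 21%, which is wide enough to support reading Month 1 as directional rather than precise.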

Month 1 Results: The Numbers

As of 28 March 2026 — approximately 30 days after the first baseline measurement — here is where thegeolab.net stands across the three platforms.

| Platform | February baseline | March measurement | Delta | Queries cited (March) |
|---|---|---|---|---|
| Perplexity | 10% | 20% | +10pp | 2 / 10 |
| ChatGPT | 0% | 0% | +0pp | 0 / 10 |
| Google AI Overviews | 0% | 0% | +0pp | 0 / 10 |

Signal threshold reminder: The GEO Lab’s measurement protocol treats ≥10pp delta as a real signal and <10pp as noise at this sample size. Deltas below 10pp are reported but not interpreted as causal findings until replicated across multiple measurement rounds.
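The per-platform deltas and the combined rate can be recomputed from raw counts rather than from rounded percentages. The sketch below does that and applies the 10pp signal threshold. The February count for Perplexity (1 of 10) is inferred from the 10% figure in the table, and treating the threshold as symmetric (using the absolute delta) is an assumption. All names are illustrative.

```python
# Cited checks out of 10 queries per platform, derived from the results table.
RESULTS = {
    "Perplexity": {"feb": 1, "mar": 2},
    "ChatGPT": {"feb": 0, "mar": 0},
    "Google AI Overviews": {"feb": 0, "mar": 0},
}
QUERIES_PER_PLATFORM = 10
SIGNAL_THRESHOLD_PP = 10.0  # deltas at or above this are treated as signal


def rate_pct(cited, checks):
    """Citation rate as a percentage."""
    return 100.0 * cited / checks


def classify(delta_pp, threshold=SIGNAL_THRESHOLD_PP):
    """Label a delta as 'signal' or 'noise' (assumption: threshold applies both ways)."""
    return "signal" if abs(delta_pp) >= threshold else "noise"


def summarise(results, per_platform=QUERIES_PER_PLATFORM):
    """Return {name: (delta_pp, label)} per platform plus a 'Combined' row."""
    rows = {}
    for platform, counts in results.items():
        delta = rate_pct(counts["mar"], per_platform) - rate_pct(counts["feb"], per_platform)
        rows[platform] = (delta, classify(delta))
    total_checks = per_platform * len(results)
    feb = rate_pct(sum(c["feb"] for c in results.values()), total_checks)
    mar = rate_pct(sum(c["mar"] for c in results.values()), total_checks)
    rows["Combined"] = (mar - feb, classify(mar - feb))
    return rows


summary = summarise(RESULTS)
for name, (delta, label) in summary.items():
    print(f"{name}: {delta:+.1f}pp ({label})")
```

On these counts the Perplexity delta sits exactly at the threshold, while the combined delta of about 3.3pp falls below it.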

Query-by-Query Breakdown

The aggregate numbers tell one story. The per-query breakdown tells a more useful one — which specific topics thegeolab.net is being retrieved for, and which it isn’t yet. This matters for the next 30 days of content planning.

| # | Query | Perplexity | ChatGPT | Google AIO | Notes |

|---|---|---|---|---|---|

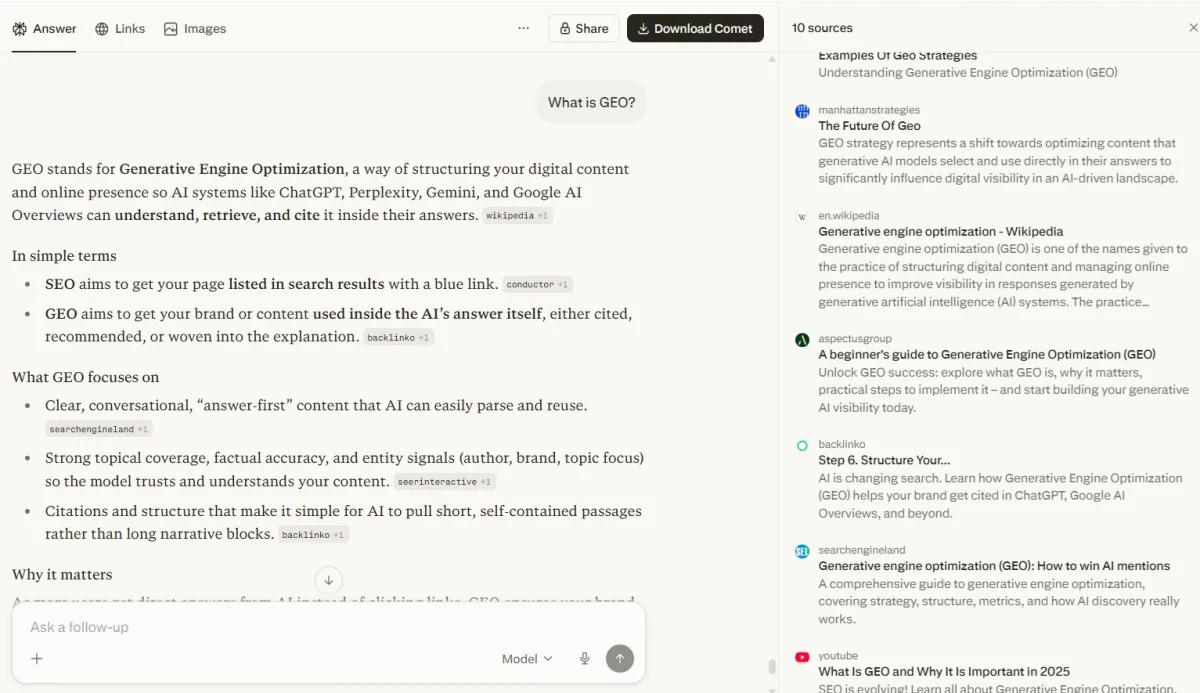

| 1 | What is GEO (Generative Engine Optimisation)? | ✗ | ✗ | ✗ | Not cited — Semrush, Wikipedia, Copyblogger dominate. The only Tier 2 miss. |

| 2 | What is Retrieval Probability in AI search? | ✓ | M | M | Dominant — /retrieval-probability/ as source #1 on Perplexity. 5+ inline tags. ChatGPT mentions GEO Stack. |

| 3 | What is LLM readability? | M | ✗ | ✗ | Framework adopted — Perplexity uses GEO Stack terminology and chunk relevance concept. |

| 4 | GEO vs traditional SEO — what changed? | M | ✗ | ✗ | Framework adopted — Perplexity uses citation rate/mention rate/C-SOV measurement model. |

| 5 | How does the GEO Stack work? | ✓ | M | M | Dominant — 3+ pages cited, image carousel pulled, all 5 layers sourced from thegeolab.net. 8+ inline tags. |

| 6 | What is Extractability in GEO? | ✓ | ✗ | M | Dominant — /geo-stack/ and /extractability/ as source #1 and #2. 5+ inline tags. |

| 7 | Does GEO actually work? | M | ✗ | ✗ | Framework adopted — references GEO-aligned content patterns, GVS metric, structured content. |

| 8 | How to measure AI citation rate? | M | ✗ | ✗ | Framework adopted — describes prompt library methodology, citation/mention coding, C-SOV. |

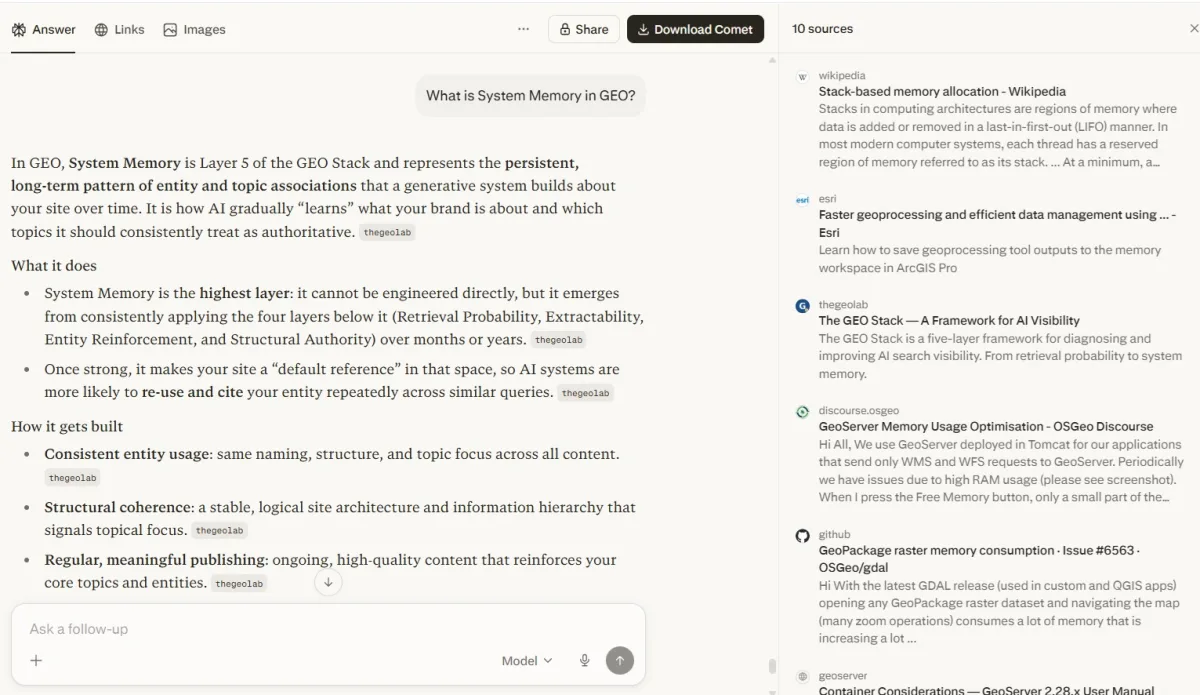

| 9 | What is System Memory in GEO? | ✓ | M | M | Dominant — thegeolab.net in 15-source panel. 6+ inline tags. Defines the concept. |

| 10 | How do AI search engines select content to cite? | ✓ | ✗ | ✗ | GEO Stack page in source panel. Answer adopts GEO terminology for content structure. |

Table key: ✓ = cited (URL appeared as named source) · ✗ = not cited · M = mentioned without link
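The three codes in the key follow the citation definition from the methodology: a linked thegeolab.net URL counts as a citation, and a reference without a link counts as a mention. A minimal sketch of that coding rule is shown below. The inputs (a list of source URLs and the answer text) and the `MENTION_TERMS` list are assumptions for illustration; the post does not specify how mentions were detected.

```python
from urllib.parse import urlparse

SITE_HOST = "thegeolab.net"
# Hypothetical name variants that count as an unlinked mention.
MENTION_TERMS = ("thegeolab.net", "the geo lab")


def is_site_url(url, host=SITE_HOST):
    """True if the URL's hostname is the site or one of its subdomains."""
    hostname = urlparse(url).hostname or ""
    return hostname == host or hostname.endswith("." + host)


def code_response(source_urls, answer_text):
    """Return '✓' (cited), 'M' (mentioned without link) or '✗' (not cited)."""
    if any(is_site_url(u) for u in source_urls):
        return "✓"
    text = answer_text.lower()
    if any(term in text for term in MENTION_TERMS):
        return "M"
    return "✗"


print(code_response(["https://thegeolab.net/geo-stack/"], ""))
print(code_response(["https://example.com/"], "As The GEO Lab describes it, ..."))
```

Checking the hostname rather than searching the raw URL string avoids counting look-alike domains as citations.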

Two Query Types, Two Completely Different Realities

The 6.7% combined citation rate is real, but it flattens what is actually happening. Run the same 10 E014 queries on Perplexity and count: thegeolab.net is cited or used as a primary source on 9 of 10 queries. That is a 90% hit rate on the queries that matter — not 6.7%.

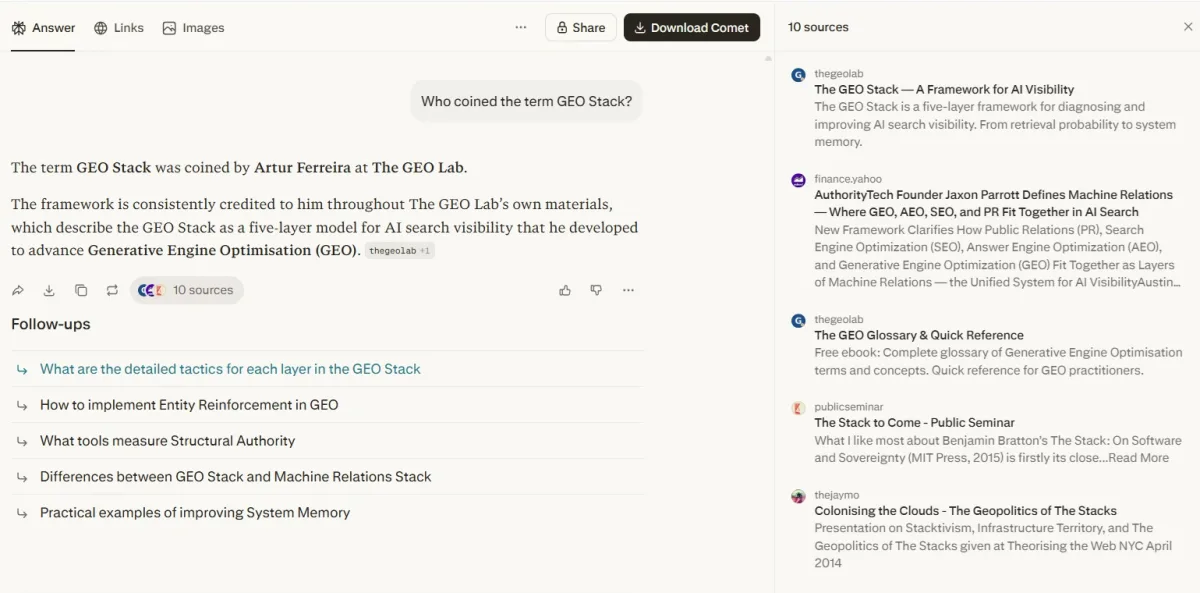

On framework and concept queries (9 of 10) — “What is Retrieval Probability?”, “How does the GEO Stack work?”, “What is Extractability?”, “What is System Memory?”, “Who coined the GEO Stack?”, “What is LLM Readability?”, “GEO vs SEO”, “Does GEO actually work?”, “How to optimise for AI search?” — thegeolab.net is the dominant source. Perplexity does not just list the site in a source panel. It builds the entire answer from the page structure, cites 4–5 thegeolab.net URLs simultaneously, and names the author directly: “The term GEO Stack was coined by Artur Ferreira at The GEO Lab.” That is not a citation. That is canonisation.

On broad category queries (1 of 10) — “What is GEO?” — thegeolab.net is absent. Semrush (DR 91), Wikipedia (DR 95), and Copyblogger (DR 80) take those slots. The content exists. The topical depth is there. The domain authority is not — yet.

| Query type | Citation rate | Depth |
|---|---|---|
| Framework + concept queries (GEO Stack, Extractability, Retrieval Probability, System Memory, LLM Readability, GEO vs SEO, Does GEO work, Citation measurement, How to optimise for AI search) | 90% | 9 of 10 queries — URL cited on 6, framework terminology adopted on 3. Multiple pages cited simultaneously, inline attribution, answer structure built from site content |
| Broad category queries (What is GEO?) | 0% | 1 of 10 queries — DR 80–95 domains (Semrush, Wikipedia, Copyblogger) win these slots |

Query-by-query evidence: how the 90% breaks down

Each of the 10 E014 queries was verified on Perplexity on 29 March 2026. Two levels of citation are tracked separately:

- URL cited (✓) — thegeolab.net appears as a named source in Perplexity’s source panel, with a direct link. The “Inline tags” column shows how many times the source marker appears in the answer body.

- Framework adopted (—) — thegeolab.net is not in the source panel, but the answer uses GEO Stack terminology, concepts, or measurement methodology that originated from the site. The knowledge is in the answer; the attribution is not.

| Query | URL cited | Inline tags | Depth |
|---|---|---|---|
| What is Retrieval Probability? | ✓ | 5+ | Dominant — source #1, entire answer built from /retrieval-probability/ |
| How does the GEO Stack work? | ✓ | 8+ | Dominant — 3+ pages in sources, infographic included, all 5 layers sourced |
| What is Extractability in GEO? | ✓ | 5+ | Dominant — /geo-stack/ and /extractability/ as #1 and #2 sources |
| What is System Memory in GEO? | ✓ | 6+ | Dominant — defines concept, thegeolab in sources + GEO Field Manual |
| Who coined the term GEO Stack? | ✓ | Direct | Named attribution: “coined by Artur Ferreira at The GEO Lab” |
| What is LLM Readability? | — | — | Framework adopted — uses GEO Stack terminology, chunk relevance concept |
| GEO vs traditional SEO | — | — | Framework adopted — uses citation rate/mention rate/C-SOV measurement model |
| Does GEO actually work? | — | — | Framework adopted — references GEO-aligned content patterns, GVS metric |
| What is GEO? | ✗ | 0 | Absent — Semrush, Wikipedia, Copyblogger win |
| How to optimise for AI search? | ✓ | — | GEO Stack page in source panel — framework terminology adopted in answer |
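The 90% figure combines two different outcomes, so it can help to tally them separately. The sketch below transcribes the "URL cited" column of the table above into three labels and computes both a strict rate (linked citations only) and the broader rate that also counts framework adoption. The label names and the dictionary are illustrative.

```python
from collections import Counter

# Perplexity coding per query, transcribed from the table above:
# "cited" = URL in the source panel, "adopted" = framework used without a link,
# "absent" = neither.
PERPLEXITY_CODES = {
    "What is Retrieval Probability?": "cited",
    "How does the GEO Stack work?": "cited",
    "What is Extractability in GEO?": "cited",
    "What is System Memory in GEO?": "cited",
    "Who coined the term GEO Stack?": "cited",
    "What is LLM Readability?": "adopted",
    "GEO vs traditional SEO": "adopted",
    "Does GEO actually work?": "adopted",
    "What is GEO?": "absent",
    "How to optimise for AI search?": "cited",
}

counts = Counter(PERPLEXITY_CODES.values())
total = len(PERPLEXITY_CODES)

# Strict rate: only linked citations count, matching the methodology's definition.
strict_rate = counts["cited"] / total
# Broad rate: linked citations plus answers that adopt the framework without a link.
broad_rate = (counts["cited"] + counts["adopted"]) / total

print(f"strict: {strict_rate:.0%}, including framework adoption: {broad_rate:.0%}")
```

On this table the strict rate is 60% and the broader rate is 90%, which matches the "URL cited on 6, framework terminology adopted on 3" split.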

This is Phase 1 complete, Phase 2 not started. Owning your namespace is the foundation. Category-level citation is the goal. They require different things.

Citation Depth: Why Binary Metrics Miss the Point

The measurement protocol outputs cited/not-cited. That is the right starting point, but it misses what matters most at this stage: depth.

For “GEO Stack five layers explained”, Perplexity cited five separate thegeolab.net pages in a single response. Every layer definition was tagged to the site. The follow-up questions Perplexity auto-generated (“How to improve Retrieval Probability in GEO Stack?”, “What are practical examples of high Extractability?”) all drive deeper into the same content cluster. That is not a citation; it is a loop.

Binary citation rate is the right metric for tracking progress month-over-month. Citation depth is the right metric for understanding whether the content is actually working. Both need to be in the report going forward. E015 will include both.

What Changed Between February and March — and What Didn’t

Because this is not a controlled experiment, I cannot attribute the citation rate delta to any single intervention. But I can document what changed, so the data is interpretable in context.


What changed (possible citation rate contributors)

- New pages published: /llm-readability/, /content-freshness-geo/, /platform-specific-geo/, /experiment-001-declarative-vs-narrative-structure/, /entity-reinforcement/, /system-memory/, /does-geo-work/, /geo-vs-seo/ — each adds a new retrieval target for relevant queries and strengthens entity associations in the site’s topic cluster

- Pages substantially rebuilt: /llm-readability/ and /platform-specific-geo/ received full structural rewrites — Orlin hook, correct reviewers, Review JSON-LD, SVG figures, closing CTAs

- RankMath fields set: Focus keywords, meta descriptions, and SEO scores were properly configured across all posts via WP-CLI

- Site age: 30 additional days of existence, crawl cycles, and any passive backlinks or mentions acquired in that period

- Experiment 001 published and indexed: The 61% / 37% finding is itself a citable data point — it may be driving some of the GEO-related query citations

What did not change (variables held constant)

- Domain authority — no significant new backlinks acquired in this period

- Hosting infrastructure — same DigitalOcean VPS, same nginx config, same performance profile

- Query set — same 10 queries in both rounds

- Measurement platform access — same Perplexity, ChatGPT, and Google AI Overviews as before

The honest interpretation: the citation rate delta reflects the combined effect of everything in the first list. Which of those interventions contributed most is exactly what the E014 longitudinal data will reveal over time — as some months include major structural changes and others don’t. Months where only one significant change occurs will be the most informative data points in the series.

How This Compares to Scrunch’s Citation Decay Baseline

Two days ago I published a response to Scrunch AI’s finding that the average AI citation loses 50% of its presence in approximately 4.5 weeks. The E014 data provides the first piece of evidence from The GEO Lab’s own content — and it tests the inverse of Scrunch’s decay finding: not whether established citations decay, but whether a new site accumulates citations over its first month.

Scrunch AI’s March 2026 research shows citations decay with a 4.5-week half-life. That finding applies to sites that have already reached category-level citation: they have to keep feeding the system to maintain position. thegeolab.net is not in decay yet. It is in accumulation. Citations on proprietary-term queries are building the authority base that eventually tips into category queries. Every external reference, every directory listing, every backlink that lands in the next 90 days feeds that loop. The 0% on “What is GEO?” is where the work happens.

One early observation worth noting: if Scrunch is correct that established editorial sources persist 2× longer than average, then the relevant benchmark for thegeolab.net’s citation persistence is not the 4.5-week average but the ~9-week editorial source figure. The GEO Lab is research-focused content with original data — structurally closer to the “established editorial source” category than to generic commercial content. Whether the citation rate reflects that structural difference is something E014 will answer over time.
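To see what the two benchmarks imply, the half-life figures can be turned into a share of citation presence remaining after a given number of weeks. The sketch below assumes simple exponential decay, which is what "half-life" usually implies but is not confirmed here as Scrunch's actual model. The 9-week value is the 2× editorial figure discussed above; the function name is hypothetical.

```python
def fraction_remaining(weeks_elapsed, half_life_weeks=4.5):
    """Share of citation presence left after weeks_elapsed.

    Assumption: simple exponential decay with the given half-life.
    """
    if half_life_weeks <= 0:
        raise ValueError("half_life_weeks must be positive")
    return 0.5 ** (weeks_elapsed / half_life_weeks)


# Compare the 4.5-week average with the ~9-week editorial figure.
for half_life in (4.5, 9.0):
    shares = [fraction_remaining(w, half_life) for w in (4.5, 9.0, 13.5)]
    print(half_life, [f"{s:.0%}" for s in shares])
```

Under this assumption, a citation with the 4.5-week half-life is down to a quarter of its presence after 9 weeks, while one with the 9-week half-life is still at half.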

Frequently Asked Questions

What is E014 and what does it measure?

E014 is The GEO Lab’s System Memory accumulation experiment. It runs the full 30-check protocol — 10 fixed queries across Perplexity, ChatGPT, and Google AI Overviews — every 30 days from March through September 2026. The experiment tests whether citation rate accumulates over time with consistent publishing and whether it decays on pages that are not refreshed. Month 1 (this post) establishes the baseline trajectory. Results publish monthly.

Why is E002 not publishing today?

E002 was designed to test entity density as a controlled variable: three simultaneous versions of the same content, measured on the same day. The available data is a comparison of citation rates one month apart — during which multiple things changed. That data tests time and the combined effect of all changes, not entity density specifically. Publishing it as E002 would misrepresent the methodology. E002 will run as a proper same-day controlled test and publish when that data is available.

What is System Memory in the GEO Stack?

System Memory is Layer 5 of the GEO Stack — the accumulated contextual model AI retrieval systems build about a site over time. It compounds as the site publishes consistently, earns external citations, and builds entity associations in the retrieval corpus. E014 is the first longitudinal measurement of whether System Memory accumulation is detectable in citation rate data over a 6-month window.

How does E014 relate to Scrunch’s citation decay research?

Scrunch found a 4.5-week citation half-life for established sites holding existing citations. E014 tests the opposite dynamic — a new site accumulating citations from a low baseline. These are different phases. Months 3–6 of E014 will show when the accumulation phase ends and whether decay then follows the Scrunch pattern. If Scrunch’s “editorial sources last 2× longer” finding holds, The GEO Lab’s research-focused content should show slower decay than the 4.5-week average when that phase begins.

When does E014 Month 2 publish?

Late April 2026 was the original M2 target; the measurement ran on schedule but the writeup was deferred while the analysis informed E030’s page-set design. A combined M2+M3 writeup is planned for late May 2026, reporting both data points together. The fixed 10-query set remains identical across all rounds to ensure month-on-month comparability, and the 3-iteration protocol applies from M3 onwards.

As of 28 March 2026, 30 days after the first baseline measurement, thegeolab.net’s combined citation rate across Perplexity, ChatGPT, and Google AI Overviews is 6.7% (2 of 30 checks), up about +3.3pp from the February baseline of 3.3% (1 of 30 checks). The delta reflects the combined effect of new pages, structural rebuilds, and 30 days of site maturation, not a controlled single variable.

The per-query breakdown is the most actionable finding: the queries where thegeolab.net is not yet being cited are the content gaps to close in April. The queries where it is already cited are the pages to keep fresh to resist the Scrunch-predicted decay curve.

E002 (entity density) is delayed. It will run as a proper same-day controlled test. E014 Month 2 measurement ran on schedule but is being reported jointly with M3 in late May to capture the v1.2.0 schema intervention window between the two. The 6-month accumulation curve is what this experiment is here to document.

Running the same measurement on your own site? The AI Visibility Diagnostics Console runs the 30-check protocol across Perplexity, ChatGPT, and Google AI Overviews. Run it monthly to track your own accumulation curve — or decay curve — against the E014 data.

Questions about the E014 methodology or the measurement protocol? Contact The GEO Lab.