GEO Stack Layers

The only GEO Stack layer that cannot be directly engineered — what it is, how it builds, and what to do while you are waiting for it

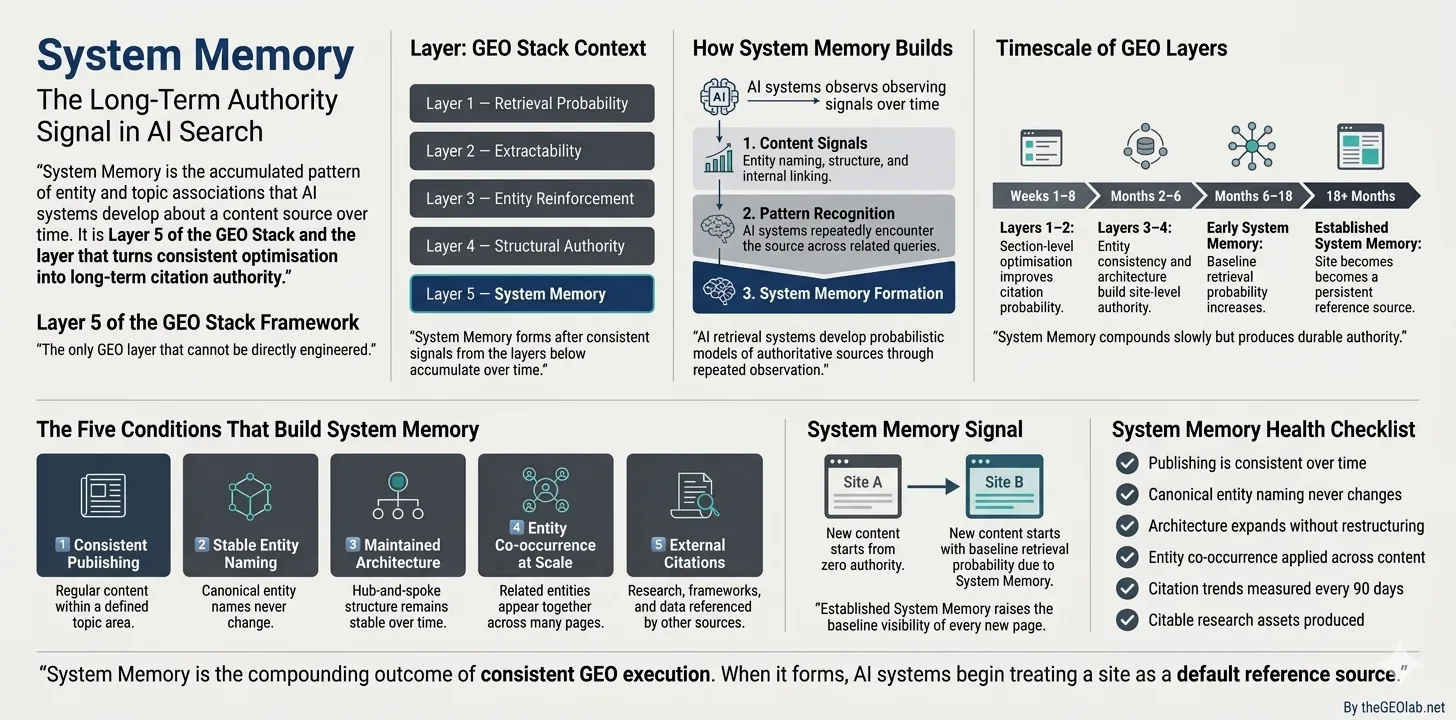

System Memory is the accumulated pattern of entity and topic associations that generative AI systems develop about a content source over time. It is Layer 5 of the five-layer GEO Stack framework — the final and most opaque layer, built on the foundation of Layers 1 through 4.

System Memory is what causes some sites to become persistent reference points — the sources AI systems default to when constructing answers in a given topic area. It cannot be directly optimised. It emerges from consistent, sustained application of the layers below it over months and quarters.

Unlike every other GEO Stack layer, System Memory cannot be fixed on a short timescale. But it can be built — deliberately, through the same structural discipline that makes Layers 1 through 4 work — and once built, it compounds in ways that short-term optimisation cannot replicate.

Why System Memory Matters

The pattern that led me to identify this layer was puzzling. Some sites with strong technical foundations — well-structured content, consistent entity naming, coherent architecture — still generated erratic citation at first, then progressively more consistent citation over several months, without any further structural changes. The content quality had not improved. The entity naming had not changed. The architecture had not been rebuilt. Time was the variable.

What was happening is that generative AI systems were updating their probabilistic models of these sources as they encountered them repeatedly across different queries and different contexts. A site that publishes consistently on a defined topic area, uses stable entity naming throughout, and maintains coherent information architecture over time starts to accumulate a recognition signal that individual sections cannot generate alone. This accumulated recognition is System Memory.

The commercial significance of System Memory is that it changes the baseline. A site with strong System Memory does not start each new piece of content from zero. Every new section it publishes begins with a higher baseline retrieval probability than an equivalent section from a site with no System Memory — because retrieval systems already have a stable, positive model of what this source is about and how authoritative it is on the topic. The compounding effect over time is the reason GEO is a discipline rather than a tactic.

How System Memory Builds

Generative AI systems are not static. They update their models of sources through ongoing observation — processing new content as it is published, re-evaluating associations as entity naming and structural patterns evolve, building probabilistic models of what each source is authoritative for based on accumulated evidence rather than any single snapshot.

System Memory builds through the compounding of consistent signals over time. Three mechanisms drive the accumulation:

Entity pattern reinforcement: Every piece of content that uses canonical entity naming alongside related entities adds weight to the association between that source and those entities. The association does not build linearly — it builds through the accumulation of consistent signals. A single post on “Generative Engine Optimisation” adds a small association weight. Fifty posts using consistent canonical naming across a well-defined topic cluster add an association that starts to function as a stable retrieval default.

Topical consistency over time: Sites that publish consistently on a single, well-defined topic area build stronger System Memory than sites that publish across many unrelated topics, even at the same publishing volume. Topical consistency tells the retrieval system’s model that this source is a specialist — which increases the baseline probability that its content will be selected for queries in the topic area, even for queries on subtopics the site has not specifically addressed.

Structural stability: Sites that maintain coherent internal architecture over time — without frequently reorganising content, breaking internal links, or changing canonical entity names — give retrieval systems a stable structural model to build associations within. Structural disruption — changing the canonical names for core concepts, removing hub pages, breaking the internal linking graph — resets parts of the accumulated System Memory that was built around those structural elements.

The most common System Memory mistake is rebranding or renaming core concepts mid-programme. A site that spent eighteen months building System Memory around “Generative Engine Optimisation” and then pivots to calling it “AI content optimisation” loses a significant portion of the accumulated entity association — and must rebuild it under the new canonical term from a lower starting point. Canonical naming decisions made at the beginning of a GEO programme compound for years. Get them right early.

The Timescales of System Memory

Understanding System Memory requires understanding that it operates on a different timescale from every other GEO Stack layer. Layers 1 and 2 can produce measurable citation rate improvements within four to eight weeks. Layers 3 and 4 typically show improvement over three to six months. System Memory operates over quarters to years.

This timeline is not a flaw in the framework — it is a feature of the mechanism. The same long timescale that makes System Memory slow to build makes it durable. Short-term optimisation tactics produce short-term gains. System Memory, once established, produces compounding returns that degrade slowly even if active optimisation effort is reduced.

Weeks 4 to 8 (Layers 1 and 2): Section-level structural improvements show measurable citation rate changes. The levers are declarative structure, explicit entity anchoring, and answer-first openings. The fastest GEO improvements come from here.

Months 3 to 6 (Layers 3 and 4): Entity naming consistency accumulates across the content system. Internal linking architecture builds cluster coherence. Individual page improvements begin compounding into site-level authority.

Months 6 to 18 (first System Memory effects): First System Memory effects become measurable at 90-day citation trend intervals. Baseline retrieval probability increases for new content without additional per-section optimisation. The compounding effect begins.

18 months or more (established System Memory): The site becomes a persistent reference point in its topic area. New content inherits baseline citation probability from established System Memory. The site appears across a wider range of queries, including queries on adjacent topics it has not specifically addressed.

The practical implication is that System Memory should be thought of as a long-term investment with compounding returns, not a short-term optimisation with immediate payoff. Sites that begin building System Memory now will see its effects in 12 to 18 months. Sites that delay will be building from zero when their competitors are already compounding.

The Five Conditions That Build System Memory

System Memory cannot be engineered directly — but the conditions that produce it can be. These five conditions are the indirect levers for building System Memory over time.

Consistent Publishing on a Defined Topic Area

Regular publishing — not just high-volume publishing — builds System Memory by giving retrieval systems repeated opportunities to observe the source’s entity associations across a growing body of evidence. Consistent publishing two to four times per month on a well-defined topic area produces stronger System Memory than irregular publishing of higher volume across a broader topic set.

The topic area definition matters as much as the publishing frequency. A site that publishes consistently on “Generative Engine Optimisation” builds stronger System Memory in that topic area than a site that publishes on GEO alongside unrelated topics at the same frequency. The topical signal is more coherent, and the entity associations concentrate on one cluster of related entities instead of being spread thinly across many.

Stable Canonical Entity Naming — Never Changed

Entity naming stability over time is the single most important condition for System Memory accumulation. Every canonical entity name change resets the entity association built under the previous name. A site that used “generative search optimisation” for twelve months and then pivoted to “Generative Engine Optimisation” loses the association weight accumulated under the first term and must rebuild it under the second.

This is why canonical entity naming decisions made in the GEO Stack framework should be treated as long-term commitments. The right time to establish canonical names is before publishing the first piece of content in a topic area — not after the content system has grown to a point where name changes require retroactive updates across hundreds of posts.
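Naming drift of this kind can be caught early with a simple text audit. The sketch below is illustrative only: the `find_naming_drift` helper, the variant list, and the sample posts are assumptions made for the example, not part of the GEO Stack framework or any tool from The GEO Lab.

```python
import re
from collections import Counter

# Hypothetical canonical name and the non-canonical variants to flag.
CANONICAL = "Generative Engine Optimisation"
VARIANTS = ["generative search optimisation", "AI content optimisation"]


def count_phrase(text: str, phrase: str) -> int:
    """Count case-insensitive whole-phrase occurrences of `phrase` in `text`."""
    pattern = r"\b" + re.escape(phrase) + r"\b"
    return len(re.findall(pattern, text, flags=re.IGNORECASE))


def find_naming_drift(posts: dict) -> dict:
    """Return, for each post that drifts, a Counter of the variants it uses."""
    drift = {}
    for slug, text in posts.items():
        hits = Counter()
        for variant in VARIANTS:
            n = count_phrase(text, variant)
            if n > 0:
                hits[variant] = n
        if hits:
            drift[slug] = hits
    return drift


# Made-up sample posts, keyed by a hypothetical slug.
posts = {
    "post-a": "Generative Engine Optimisation rewards stable naming.",
    "post-b": "Our AI content optimisation guide covers generative search optimisation.",
}
drift = find_naming_drift(posts)
for slug, hits in drift.items():
    print(f"{slug}: uses {dict(hits)} instead of '{CANONICAL}'")
```

Running a check like this before each post is published keeps drift from spreading across multiple posts, which is cheaper than retroactive correction.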

Maintained Structural Architecture — Not Reorganised Repeatedly

The internal linking architecture and hub-and-spoke cluster structure built under Layer 4 provides the structural foundation within which System Memory accumulates. Structural disruption — removing hub pages, breaking internal links, reorganising content clusters, changing URL structures without redirects — damages the structural model that retrieval systems have built about the site and forces partial reaccumulation of System Memory.

This does not mean the architecture should never evolve. Adding new spoke pages to an existing cluster, expanding hub page coverage, adding new clusters for adjacent topics — these actions build System Memory rather than disrupting it. The disruptions to avoid are structural changes that break existing associations: removing content, changing canonical URLs, or reorganising clusters in ways that require rebuilding the internal linking graph from scratch.
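Before a restructure ships, it can help to confirm that every previously published URL is either still live or redirected to a live page. The following is a minimal sketch under stated assumptions: the `find_unredirected_urls` helper and all paths are hypothetical, the URL lists are supplied by hand and not crawled, and redirect chains are not followed.

```python
def find_unredirected_urls(old_urls, live_urls, redirects):
    """Return old URLs that are neither still live nor redirected to a live URL.

    old_urls:  URLs that existed before the restructure.
    live_urls: URLs that exist after the restructure.
    redirects: mapping of old URL -> new URL (permanent redirects).
    """
    live = set(live_urls)
    broken = []
    for url in old_urls:
        if url in live:
            continue  # unchanged URL, nothing to do
        target = redirects.get(url)
        if target not in live:
            broken.append(url)  # removed, or redirected to a missing page
    return broken


# Hypothetical paths for illustration.
old_urls = ["/geo-stack/", "/geo-stack/system-memory/", "/blog/old-hub/"]
live_urls = ["/geo-stack/", "/layers/system-memory/"]
redirects = {"/geo-stack/system-memory/": "/layers/system-memory/"}

broken = find_unredirected_urls(old_urls, live_urls, redirects)
print(broken)
```

Any URL in the returned list is a structural change that breaks an existing association and should be resolved before launch.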

Cross-Page Entity Co-occurrence at Scale

The entity co-occurrence patterns that build Entity Reinforcement at the individual page level (Layer 3) accumulate into System Memory at the site level over time. A site with fifty posts consistently pairing “Generative Engine Optimisation” with “Retrieval Probability”, “Extractability”, and “Entity Reinforcement” in the same sections has built a semantic cluster in its System Memory that functions as an authority signal for the entire topic area — not just for the entities individually.

This is the compounding dynamic of Layer 3 feeding into Layer 5. Each post that correctly reinforces entity co-occurrence adds a small increment to the System Memory association. The increment is small per post. Across fifty posts, consistently applied, it becomes a self-reinforcing authority signal that new competitors cannot replicate in the short term regardless of their content quality.
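Whether co-occurrence is being applied consistently can be estimated by counting, across posts, how often each related entity shares a section with the core entity. This is a rough sketch, not a measurement of System Memory itself: the `cooccurrence_coverage` helper is hypothetical, sections are assumed to be separated by blank lines, and the sample posts are made up.

```python
CORE = "Generative Engine Optimisation"
RELATED = ["Retrieval Probability", "Extractability", "Entity Reinforcement"]


def cooccurrence_coverage(posts):
    """For each related entity, return the fraction of posts in which it shares
    at least one section (blank-line-separated block) with the core entity."""
    counts = {name: 0 for name in RELATED}
    for text in posts:
        sections = [s.lower() for s in text.split("\n\n")]
        core_sections = [s for s in sections if CORE.lower() in s]
        for name in RELATED:
            if any(name.lower() in s for s in core_sections):
                counts[name] += 1
    total = len(posts)
    return {name: (counts[name] / total if total else 0.0) for name in RELATED}


# Made-up sample posts; the second section of the first post lacks the core entity.
posts = [
    "Generative Engine Optimisation depends on Retrieval Probability and Extractability."
    "\n\nEntity Reinforcement is covered separately.",
    "Generative Engine Optimisation pairs with Entity Reinforcement and Retrieval Probability.",
]
coverage = cooccurrence_coverage(posts)
for name, share in coverage.items():
    print(f"{name}: {share:.0%} of posts")
```

A related entity with low coverage is one that is being mentioned, if at all, away from the core entity, so the pairing is not being reinforced.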

External Citation and Reference Accumulation

When other sources cite or reference content from a site, whether through backlinks, social sharing, or inclusion in AI-generated answers, they contribute to the retrieval system’s model of the site’s authority in the topic area. External citation is not a direct GEO Stack variable (it belongs to traditional SEO’s domain authority framework), but it feeds into System Memory through a separate mechanism: it increases the frequency with which retrieval systems encounter the source’s entities in the context of other high-authority sources.

The implication is that content designed for external citation — research findings, original data, novel frameworks, definitional content that others want to reference — builds System Memory more efficiently than content designed only for internal consistency. Publishing original experiment results, as The GEO Lab does in The GEO Log, produces external citation that feeds System Memory through the authority accumulation mechanism.

System Memory Health Checklist

As of March 2026, The GEO Lab’s System Memory audit of thegeolab.net found that content update cadence and version signalling were the two weakest freshness indicators across all 67 indexed pages. System Memory cannot be audited at a point in time — it is measured through trend, not snapshot. This checklist identifies the conditions and practices that build it, not the state of the memory itself.

- Topic area is narrow enough to be specific and consistently addressable: The publishing programme focuses on a single, well-defined topic area rather than spanning multiple unrelated domains. The topic is narrow enough to accumulate concentrated entity associations.

- Canonical entity naming has not changed since publishing began: No canonical name changes to core entities since the first post was published. Any drift has been identified and corrected before accumulating across multiple posts.

- Publishing frequency is consistent, not burst-and-pause: Regular publishing intervals are maintained regardless of competing priorities. Consistent low-volume publishing (twice monthly) builds stronger System Memory than irregular high-volume bursts.

- Content architecture has grown outward without being reorganised: New clusters and spoke pages have been added to the existing architecture. No hub pages removed, no major internal linking restructures, no URL changes without permanent redirects.

- Entity co-occurrence is applied consistently across all new content: Every new post in the topic cluster pairs the core entity with its related entities in explicit named co-occurrence. This is an ongoing publishing practice, not a one-time standard.

- Citation trend is measured at 90-day intervals: Quarterly, not monthly. System Memory effects are too slow to measure at monthly intervals without confusing model-state noise with trend signals. A 90-day interval smooths the noise.

- Citable assets are produced alongside regular content: Original research, named frameworks, benchmark data, or definitional content that gives other sources a reason to reference the site. External citation accelerates System Memory accumulation.

Diagnosing a Layer 5 Problem — And What It Is Not

System Memory failure is identified by exclusion, not by direct measurement. The diagnostic sequence matters.

The most important thing to know about Layer 5 diagnosis is that most sites that believe they have a Layer 5 problem actually have an undetected Layer 1 or Layer 2 problem. System Memory failure produces low citation rates — but so does retrieval failure and extraction failure. Before concluding that time is the variable, confirm that the lower layers are functioning correctly.

Layer 5 diagnosis checklist — in order:

1. Run citation tests across ten target queries on Perplexity. If citation rate is near zero, the failure is at Layer 1 or Layer 2 — not Layer 5. Fix retrieval and extraction first.

2. If citation rate is low but not zero (10–30% across tests), and Layer 2 extractability is sound, check Layer 3 entity naming consistency. Erratic citation is typically a Layer 3 failure, not a Layer 5 failure.

3. If Layers 1 through 4 are all sound — sections are retrieved and extracted cleanly, entity naming is consistent, architecture is coherent — and citation rates have been low for fewer than six months of consistent publishing, the issue is likely System Memory accumulation in progress. The intervention is time and consistency, not more structural work.

4. If Layers 1 through 4 are all sound and the publishing programme has been running consistently for more than twelve months without citation rate improvement, reassess topical focus. System Memory may be accumulating in a topic area that is too broad, too competitive, or not specifically enough addressed by the content programme.
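The four steps above can be sketched as a single function that walks the checks in order. This is an illustration under stated assumptions, not a definitive implementation: the `diagnose` helper and its return labels are hypothetical, the 10% cutoff for "near zero" is inferred from the 10–30% band in step 2, and the text gives no guidance for the 6-to-12-month window, so that branch is a placeholder.

```python
def diagnose(citation_rate, layers_sound, months_consistent,
             near_zero=0.10, low_max=0.30):
    """Return a working diagnosis label, checking lower layers first.

    citation_rate:     fraction of target queries (0.0 to 1.0) citing the site.
    layers_sound:      dict mapping layer number (1 to 4) to True if it checks out.
    months_consistent: months of consistent publishing so far.
    near_zero/low_max: assumed band edges, taken from the 10-30% range in step 2.
    """
    if citation_rate < near_zero:
        return "layer-1-or-2"            # step 1: fix retrieval and extraction
    if citation_rate > low_max:
        return "not-low"                 # assumption: outside the scope of the checklist
    if layers_sound.get(2) and not layers_sound.get(3):
        return "layer-3"                 # step 2: entity naming consistency
    if not all(layers_sound.get(n) for n in (1, 2, 3, 4)):
        return "re-audit-lower-layers"
    if months_consistent < 6:
        return "accumulating"            # step 3: time and consistency
    if months_consistent > 12:
        return "reassess-topical-focus"  # step 4
    return "keep-measuring"              # assumption: 6 to 12 months is not covered


all_sound = {1: True, 2: True, 3: True, 4: True}
print(diagnose(0.0, all_sound, 3))
print(diagnose(0.2, {1: True, 2: True, 3: False, 4: True}, 8))
print(diagnose(0.2, all_sound, 4))
print(diagnose(0.2, all_sound, 14))
```

Ordering the checks this way encodes the point of the checklist: Layer 5 is only reached once every cheaper explanation has been ruled out.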

The hardest part of Layer 5 is that it requires practitioners to resist the urge to intervene. When citation rates are low and all the lower layers appear sound, the instinct is to try something new — change the content strategy, add more links, restructure the architecture. Most of these interventions disrupt the consistency that System Memory depends on. Patience and structural stability are the correct interventions at Layer 5. They are also the least satisfying ones.

System Memory and Structural Authority: How Layer 5 Depends on Layer 4

System Memory is the layer that the rest of the GEO Stack builds toward. Every structural decision made in Layers 1 through 4 either contributes to or undermines the conditions under which System Memory accumulates.

The relationship with Layer 4 (Structural Authority) is the most direct. System Memory accumulates within a structural architecture — the hub-and-spoke clusters, the internal linking graph, the canonical entity associations. When the architecture is coherent and stable, System Memory accumulates within a well-defined topical model that retrieval systems can build on over time. When the architecture is fragmented or frequently restructured, the model being built is incoherent, and System Memory accumulates slowly or not at all.

In my experience, the practical consequence is that Structural Authority decisions made early in a content programme have a disproportionate impact on System Memory. The architecture established in the first six months becomes the foundation within which System Memory accumulates over the following two years. Getting the architecture right early — even before the content volume exists to make the structural decisions feel consequential — is the highest-leverage long-term investment available in GEO.

The compound return: A site that establishes correct hub-and-spoke architecture, canonical entity naming, and consistent publishing in month one does not see meaningful System Memory effects for 12 to 18 months. But at month 24, its baseline retrieval probability for new content is substantially higher than a competitor that started the same structural work at month 12. The compounding effect rewards early, consistent structural investment in ways that late or inconsistent investment cannot replicate.

Measuring System Memory

System Memory is not measurable at a point in time. In my testing, it is best measured through trend analysis over extended periods. Three methods provide useful signal:

- 90-day citation trend analysis: Run a standardised citation test (the same twenty target queries on Perplexity and Google AI Overviews) at 90-day intervals. Record the citation rate for each query. Track the trend across four or more intervals (12 months minimum). Increasing citation rates indicate accumulating System Memory. Flat rates when all lower layers are sound indicate that System Memory is building too slowly; topical focus or publishing consistency may need reassessment.

- Query breadth expansion: A meaningful System Memory signal is citation appearing across an expanding range of queries — including adjacent subtopics the site has not specifically addressed. If, after 12 months, citation appears only for queries the site has published content about and never for related queries, System Memory is not yet generalising. Expand the content cluster to cover more of the topic map.

- Citation without recency: System Memory is most clearly demonstrated when older content continues to appear in AI citations without recent updates — when accumulated entity associations are strong enough that content remains in the retrieval candidate pool despite its age. Track whether content published six to twelve months ago continues to generate citations. Sustained citation of older content without decay is the clearest indicator of accumulated System Memory.
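The 90-day trend analysis can be reduced to a citation rate per test run and a slope across runs. The sketch below assumes results are recorded by hand as a mapping of query to cited or not cited. The helper names, the 0.01 tolerance for "flat", and the sample numbers are all assumptions made for illustration, not published GEO Lab data.

```python
def citation_rate(results):
    """Fraction of queries cited in one test run; `results` maps query -> bool."""
    return sum(results.values()) / len(results) if results else 0.0


def trend_slope(rates):
    """Least-squares slope of citation rate per interval (x = 0, 1, 2, ...)."""
    n = len(rates)
    if n < 2:
        raise ValueError("need at least two intervals to compute a trend")
    mean_x = (n - 1) / 2
    mean_y = sum(rates) / n
    num = sum((i - mean_x) * (r - mean_y) for i, r in enumerate(rates))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return num / den


def classify_trend(rates, tolerance=0.01):
    """Label the trend; `tolerance` is an assumed threshold for 'flat'."""
    slope = trend_slope(rates)
    if slope > tolerance:
        return "increasing"
    if slope < -tolerance:
        return "decreasing"
    return "flat"


# Made-up data: twenty queries per run, four runs taken 90 days apart.
cited_counts = [2, 3, 5, 6]
runs = [{f"query-{i}": i < cited for i in range(20)} for cited in cited_counts]
rates = [citation_rate(run) for run in runs]
print(rates, classify_trend(rates))
```

Fitting a slope across all four intervals, instead of comparing only the first and last run, makes the result less sensitive to a single noisy test.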

Results from ongoing citation trend measurements at The GEO Lab are published in The GEO Brand Citation Index, which tracks 28 brands across three AI platforms at regular intervals and includes comparative trend data.

Summary

System Memory is the layer that the entire GEO Stack builds toward — the accumulated authority that makes a source a persistent reference point in its topic area. It cannot be directly optimised. It is built through consistent application of Layers 1 through 4, sustained over months and quarters, without the structural disruptions that reset accumulated entity associations.

The five conditions that build System Memory — consistent publishing on a defined topic area, stable canonical entity naming, maintained structural architecture, cross-page entity co-occurrence at scale, and external citation accumulation — are indirect levers. None of them targets System Memory directly. All of them, applied consistently over time, produce it as an outcome.

The most important thing to understand about Layer 5 is that it cannot be rushed. But it can be started. A content programme that establishes correct structural foundations in month one begins accumulating System Memory from month one. The compounding returns arrive 12 to 18 months later — and they compound on everything the programme has built in the intervening period.

System Memory sits at Layer 5 of the GEO Stack, developed by Artur Ferreira at The GEO Lab. The full implementation framework for all five layers is in the GEO Field Manual.

Frequently Asked Questions

What is System Memory in GEO?

System Memory is the accumulated pattern of entity and topic associations that generative AI systems develop about a content source over time. It is Layer 5 of the GEO Stack — the final and most opaque layer, built on consistent application of Layers 1 through 4 over months and quarters. Sites with strong System Memory become persistent reference points that AI systems default to when constructing answers in a given topic area. Unlike all other GEO Stack layers, System Memory cannot be directly engineered — it emerges from sustained structural discipline over time.

How long does it take to build System Memory?

First measurable System Memory effects typically appear 6 to 18 months after consistent, structurally sound publishing begins — measured at 90-day citation trend intervals. Established System Memory, where the site becomes a persistent reference point across a broad range of topic queries including adjacent subtopics, develops over 18 months or more. The timescale is not a bug — the same long accumulation that makes System Memory slow to build makes it durable. Short-term optimisation tactics produce short-term gains. System Memory produces compounding returns that degrade slowly even when active optimisation effort is reduced.

What is the most common mistake when building System Memory?

Renaming or rebranding core entities mid-programme is the most damaging mistake. Changing the canonical name for an important concept — from “generative search optimisation” to “Generative Engine Optimisation”, for example — resets the entity association built under the previous name. The site must rebuild System Memory under the new canonical term from a lower starting point, losing months of accumulated entity association weight. Canonical naming decisions made at the start of a content programme compound for years. Establishing the right canonical names before publishing the first post is one of the highest-leverage early decisions in GEO.

How do I know if I have a Layer 5 problem versus a Layer 1 or Layer 2 problem?

Layer 5 failure is diagnosed by exclusion, not direct measurement. If citation rates are near zero, the failure is at Layer 1 (retrieval) or Layer 2 (extraction) — not Layer 5. If citation is low but erratic (appearing for some queries but not others), the failure is usually at Layer 3 (entity naming fragmentation). Layer 5 failure is only the working diagnosis when Layers 1 through 4 are all demonstrably sound and the publishing programme has been running consistently for more than six months without citation rate improvement. Most sites that believe they have a Layer 5 problem actually have an undetected Layer 1 or Layer 2 problem. Check those first.

Can System Memory be lost?

System Memory degrades when the structural conditions that built it are disrupted. Removing hub pages, breaking internal links without redirects, changing canonical entity names, or dramatically shifting topical focus can reduce accumulated System Memory by disrupting the entity associations and structural models that retrieval systems have built. Publishing gaps — periods of no new content — produce slower degradation: System Memory does not reset immediately on publishing cessation, but it does decay over months without reinforcement. Structural stability and publishing consistency are the primary maintenance requirements for established System Memory.

What should I be doing at Layer 5 while waiting for System Memory to build?

Three things — all of which contribute to System Memory while producing near-term results from the lower layers. First, continue applying Layers 1 through 4 to new content with consistent structural discipline: each new piece of content that passes retrieval and extraction cleanly adds an increment to the accumulating System Memory while also generating its own near-term citation potential. Second, produce citable assets — original research, named frameworks, or benchmark data that give other sources a reason to reference the site externally. External citation accelerates System Memory accumulation. Third, measure citation trend at 90-day intervals to track whether System Memory is building as expected, and use that data to identify whether the topical focus or publishing consistency needs adjustment.