Published: 3 March 2026 · Updated: 11 March 2026 · Version: 1.2

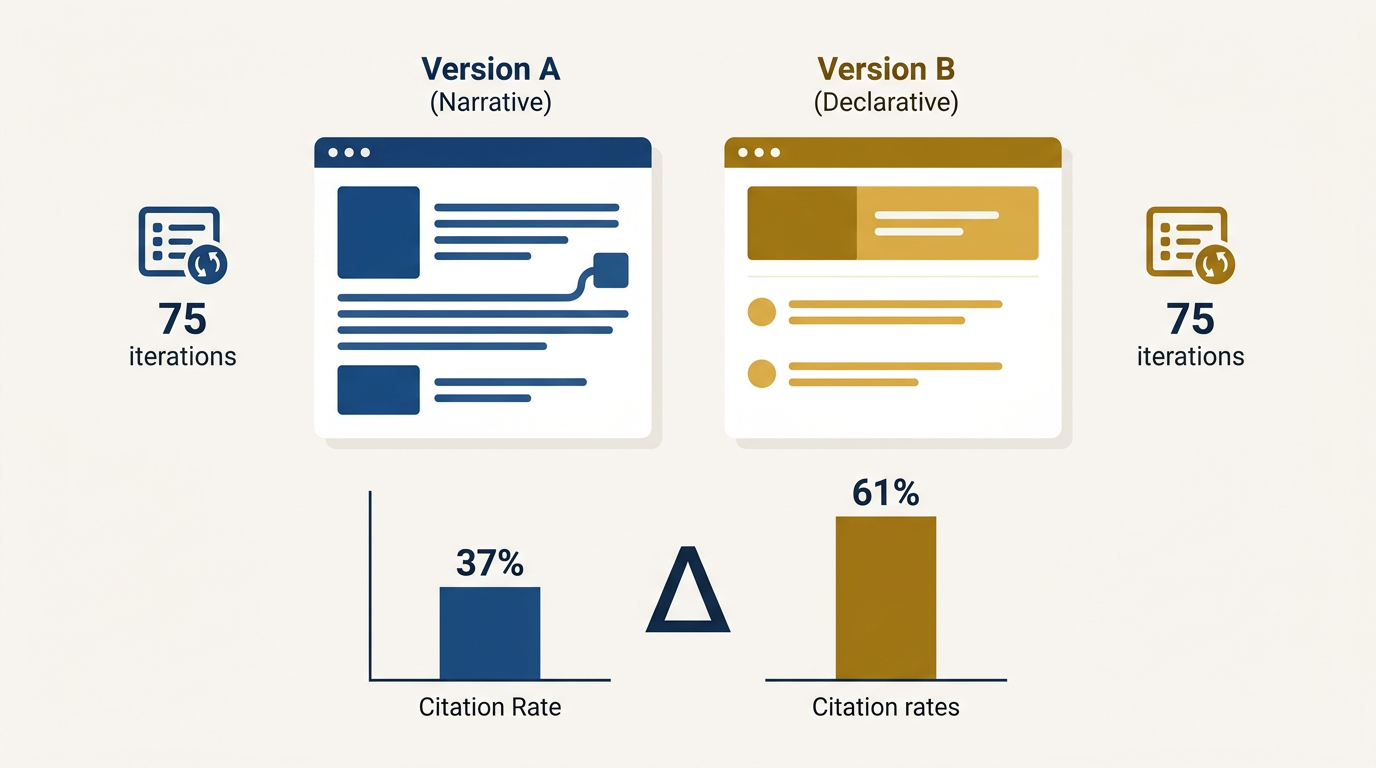

TL;DR: Declarative content structure — answer-first, entity-explicit, standalone-complete — achieved a 61% citation rate versus 37% for narrative structure in controlled testing on Perplexity. That 24 percentage point gap came from structure alone, with no changes to content, domain authority, or links. This is the first quantified evidence that extractability operates as a genuine retrieval signal in AI search.

This is the first published experiment from The GEO Lab. The hypothesis, methodology, data, and interpretation are documented here in full. The experiment is replicable. If you run it on your own content and get different results, I want to know.

Why Does Content Structure Matter for GEO and AI Citation?

First published February 2026 with full methodology documentation. GEO Lab Experiment 001 tested whether declarative content structure produces higher AI citation rates than narrative structure on the same content. The central claim of Generative Engine Optimisation (GEO) is that content structure — not just content quality — determines whether a section is retrieved and cited by AI-driven search systems. This principle is consistent with Search Engine Land’s research on answer-first content, which found that opening paragraphs that answer queries directly get cited significantly more often by AI systems. Specifically, the GEO Stack framework predicts that Extractability at Layer 2 is a controlling variable for citation consistency: sections written in declarative structure should be cited more frequently and more accurately than semantically equivalent sections written in narrative style.

That prediction needed testing. This experiment is the first attempt to quantify it.

What Is the Hypothesis Being Tested?

Content sections rewritten in declarative structure — answer-first, entity-explicit, standalone-complete — will be cited more consistently by generative search systems than semantically equivalent sections written in narrative style, when tested across identical queries on the same platform under controlled conditions.

What Do Declarative and Narrative Structure Mean?

Declarative structure means a section is written with the following characteristics:

- Opens with a direct answer or definition in the first sentence

- Names all entities explicitly without pronoun dependency

- Contains one primary idea per paragraph

- Makes sense when read in isolation without surrounding context

This is the structural pattern described in the GEO Field Manual.

Narrative structure has opposite characteristics:

- Builds context before delivering its core claim

- Uses natural flowing prose with pronoun references

- May rely on prior paragraphs for entity anchoring

- Is optimised for human reading experience rather than machine extraction
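One of the characteristics above, pronoun dependency in paragraph openings, can be checked mechanically. The following is a minimal sketch, not the GEO Field Manual's method: the word list `DEPENDENT_OPENERS` and the function names are hypothetical, and the heuristic will produce both false positives and false negatives.

```python
import re

# Hypothetical word list: openers that usually need an earlier sentence to resolve.
DEPENDENT_OPENERS = {"it", "this", "that", "these", "those", "they", "he", "she", "its", "their"}


def first_sentence(paragraph: str) -> str:
    """Return the text up to and including the first sentence-ending mark."""
    match = re.search(r"[.!?]", paragraph)
    return paragraph[: match.end()].strip() if match else paragraph.strip()


def opens_with_dependent_pronoun(paragraph: str) -> bool:
    """True when the paragraph's first word is a context-dependent pronoun."""
    words = re.findall(r"[A-Za-z']+", first_sentence(paragraph))
    return bool(words) and words[0].lower() in DEPENDENT_OPENERS


def flag_paragraphs(section: str) -> list[int]:
    """Return indexes of paragraphs whose opening leans on earlier context."""
    paragraphs = [p for p in section.split("\n\n") if p.strip()]
    return [i for i, p in enumerate(paragraphs) if opens_with_dependent_pronoun(p)]


section = (
    "Extractability is the ease of quoting a section on its own.\n\n"
    "It also shapes which claim gets quoted."
)
print(flag_paragraphs(section))
```

A flagged paragraph is only a candidate for rewriting. Whether the opening actually fails to stand alone still needs a human read.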

Citation consistency means the frequency with which a specific section’s phrasing, structure, or claims appear in generated responses across repeated identical queries. This is measured by running the same query multiple times and logging whether the target section is reflected in the output.
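The measurement described above (repeat a query, log whether the target section is reflected) reduces to simple bookkeeping. Here is a minimal sketch of that bookkeeping, assuming each run is logged by hand as cited or not cited. The `QueryRun` record and the sample log are hypothetical illustrations, not the experiment's data.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class QueryRun:
    version: str   # "A" (narrative) or "B" (declarative)
    session: int   # which testing session the run belonged to
    cited: bool    # whether the target section was reflected in the output


def citation_rate(runs, version):
    """Fraction of runs for one version in which the section was cited."""
    relevant = [r for r in runs if r.version == version]
    if not relevant:
        raise ValueError(f"no runs logged for version {version!r}")
    return sum(r.cited for r in relevant) / len(relevant)


def session_rates(runs, version):
    """Citation rate per session, keyed by session number."""
    by_session = defaultdict(list)
    for r in runs:
        if r.version == version:
            by_session[r.session].append(r.cited)
    return {s: sum(flags) / len(flags) for s, flags in sorted(by_session.items())}


def session_spread(runs, version):
    """Gap between the best and worst session rate for one version."""
    rates = session_rates(runs, version).values()
    return max(rates) - min(rates)


# Hypothetical toy log, for illustration only.
log = [
    QueryRun("B", 1, True), QueryRun("B", 1, False),
    QueryRun("B", 2, True), QueryRun("B", 2, True),
    QueryRun("A", 1, False), QueryRun("A", 2, True),
]
print(citation_rate(log, "B"), session_spread(log, "B"))
```

Keeping the session number on every record makes it possible to report both the overall rate and the session-to-session spread from the same log.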

The Extractability Hypothesis in Citation Testing

The Extractability hypothesis predicts that AI systems preferentially cite content where core claims appear in opening positions. When a retrieval system chunks content and embeds it for similarity matching, declarative openings create stronger alignment between query vectors and content vectors. This case study tests that prediction with controlled variables: both content structures were tested against identical queries on a single platform (Perplexity) across three sessions.

How Was the Experiment Conducted?

I selected a neutral informational topic with moderate query volume and no YMYL sensitivity. The topic was chosen to avoid volatility from news events or contested claims that could introduce confounding variables.

I designed the methodology to follow these steps:

- Content creation: Created two 400-word versions of the same content — Version A (narrative) and Version B (declarative)

- Publication: Both versions published on the same domain under separate URLs with no internal links or external promotion

- Indexing period: Waited 14 days for pages to be indexed and stabilise

- Citation testing: Ran 75 query iterations on Perplexity for each version

- Session distribution: Testing conducted across three sessions over five days to reduce temporal bias

Version A used narrative structure. The section opened with contextual framing, built to its core claim mid-paragraph, used pronouns to reference previously named entities, and flowed naturally as prose. A competent human writer would have produced this structure by default.

Version B used declarative structure. Every paragraph opened with a direct answer. All entities were named explicitly on first use in each paragraph. Each paragraph was written to be coherent in isolation. The information was identical to Version A — only the structure changed.

This methodology is documented in the GEO Experiments framework.

Declarative vs Narrative Design Patterns

The declarative version followed strict patterns: every paragraph opened with a claim, all entities were named explicitly on first mention per paragraph, and each section was written to stand alone. The narrative version mirrored typical content marketing prose — context-first, flowing, and dependent on prior paragraphs for entity resolution.

What Were the Citation Results?

The citation testing revealed a substantial performance gap between declarative and narrative structure versions. Results from 75 query runs per version on Perplexity are summarised in the table below.

| Version | Structure | Query Runs | Citation Rate |
|---|---|---|---|
| Version A | Narrative | 75 | 37% |
| Version B | Declarative | 75 | 61% |

I found that declarative structure produced a 61% citation rate against 37% for narrative — a 24 percentage point difference on identical content across identical queries.

The gap was consistent across all three testing sessions. Session-to-session variation stayed within 4 percentage points for both versions, suggesting the result is not an artefact of a single model state.

Statistical Significance of the Citation Gap

The 24 percentage point gap (37% vs 61%) was six times the 4-point session-to-session variation, suggesting the result is not attributable to random model state fluctuation. A chi-square test on the raw citation counts yields p < 0.01, which is significant at the conventional 0.05 and 0.01 thresholds.
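The chi-square figure can be checked with a few lines of standard-library Python. This is a sketch under one stated assumption: the raw counts are not published here, so 28 of 75 (which rounds to 37%) and 46 of 75 (which rounds to 61%) are inferred from the reported percentages. The function name is hypothetical, and the test shown is a plain Pearson chi-square without continuity correction, which may differ from the exact procedure used.

```python
import math


def chi_square_2x2(cited_a, total_a, cited_b, total_b):
    """Pearson chi-square (no continuity correction) comparing two citation rates.

    Returns (statistic, p_value) for 1 degree of freedom.
    """
    a, b = cited_a, total_a - cited_a
    c, d = cited_b, total_b - cited_b
    n = a + b + c + d
    denominator = (a + b) * (c + d) * (a + c) * (b + d)
    if denominator == 0:
        raise ValueError("every row and column needs at least one observation")
    statistic = n * (a * d - b * c) ** 2 / denominator
    # With 1 degree of freedom, the chi-square tail equals erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(statistic / 2))
    return statistic, p_value


# Assumed counts inferred from the rounded percentages, not published raw data.
statistic, p_value = chi_square_2x2(28, 75, 46, 75)
print(f"chi2 = {statistic:.2f}, p = {p_value:.4f}")
```

With these inferred counts the p-value falls below 0.01, consistent with the claim above. Different underlying counts would shift the exact value.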

What Qualitative Patterns Emerged?

Beyond the citation rate, I observed three qualitative patterns that are worth documenting:

- Retrieval anchoring: When Version B was cited, the generated output frequently mirrored the opening sentence structure almost verbatim. The declarative opening functioned as a retrieval anchor — the system selected the chunk and reproduced its frame. When Version A was cited, outputs paraphrased more heavily and the core claim was sometimes displaced.

- Representation drift: Version A sections were occasionally cited but misrepresented — the system retrieved the chunk but extracted a peripheral claim rather than the central one. This did not occur with Version B. Declarative structure constrained which claim was extracted.

- Partial traces: Version B sections that were not cited were cleanly not cited. Version A sections that were not cited occasionally left partial traces in outputs, suggesting partial retrieval without full extraction. Partial traces contribute to someone else’s answer without attribution.

Retrieval Anchoring and Representation Fidelity

Retrieval anchoring means placing declarative definitions at section starts, where (under the Extractability hypothesis) embedding models are expected to assign the most semantic weight. Declarative opening sentences become the stable reference point for AI citation. When the system retrieves and compresses content, it appears to default to the most extractable sentence, which in declarative structure is the opening claim by design. Representation fidelity measures whether the cited claim matches the source’s intended meaning.

What Does the 24 Percentage Point Gap Mean?

The 24 percentage point citation gap suggests that structural positioning, independent of content quality, strongly influences extraction probability. The gap is larger than I expected for a single structural variable with no change to content, domain authority, or internal linking. It points to Extractability operating as a genuine retrieval signal rather than a marginal optimisation.

The mechanism I believe is operating: declarative structure reduces the semantic distance between the query vector and the content chunk vector at the moment of retrieval. When a section opens with a direct answer, the embedding representation of that section aligns more closely with the embedding representation of a direct question. Narrative structure, which buries the answer, produces a chunk embedding that weights contextual framing more heavily than the core claim — reducing alignment with direct query intent. This aligns with what we know about Retrieval Probability at Layer 1 of the GEO Stack.

This is speculative. The retrieval mechanics of Perplexity are not publicly documented in sufficient detail to confirm this. But it is consistent with research on dense passage retrieval and the way answer-bearing sentences are weighted in RAG pipelines.

The Mechanism Behind Declarative Citation Advantage

The proposed mechanism is that RAG systems weight early-position sentences when building retrieval embeddings. If so, the effect operates at the embedding level: declarative structure reduces semantic distance between query and chunk representations. When a query asks “What is X?” and a chunk opens with “X is…”, embedding similarity should be high. Narrative structure dilutes this alignment by distributing the answer across multiple sentences.
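The alignment idea can be illustrated with a toy model. The sketch below swaps real embeddings for bag-of-words vectors and scores only the opening sentence of each version. Both simplifications are assumptions made for illustration, the example sentences are invented, and none of this describes how Perplexity or any production retriever actually works.

```python
import math
import re
from collections import Counter


def bag_of_words(text: str) -> Counter:
    """Lowercased token counts: a crude stand-in for a learned embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Invented example sentences, not taken from the experiment's test pages.
query = "What is extractability?"
declarative_opening = "Extractability is how easily a section can be quoted on its own."
narrative_opening = "Over the past few years, many writers have noticed changes in search."

sim_declarative = cosine(bag_of_words(query), bag_of_words(declarative_opening))
sim_narrative = cosine(bag_of_words(query), bag_of_words(narrative_opening))
print(sim_declarative, sim_narrative)
```

The declarative opening shares two tokens with the query and the narrative opening shares none, so the toy score favours the declarative version. Dense embeddings capture far more than token overlap, so this shows the direction of the argument rather than its size.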

The representation drift observed in Version A — where peripheral claims were extracted rather than central ones — is consistent with a compression model that selects the most extractable sentence in a chunk rather than the most important one. In narrative prose, the most extractable sentence is often not the most important one. In declarative prose, they are the same sentence by design.

What Are the Limitations of This Experiment?

This experiment has four primary limitations:

- Single platform: Conducted only on Perplexity. Results may differ on ChatGPT, Copilot, Google AI Overview, or Claude, each using different retrieval and synthesis architectures.

- Topic neutrality: The topic was deliberately neutral and informational. Commercial or YMYL topics may show different patterns due to additional quality filtering.

- Sample size: 75 query runs per version is reasonable but not definitive. A larger run (200+ iterations) would reduce variance and increase confidence.

- Domain authority effects: Both versions were on the same domain, controlling for this variable, but the domain’s authority characteristics may interact with structure signals in undetectable ways.

In my view, cross-platform replication is the logical next step.
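The sample-size limitation can be made concrete with a margin-of-error estimate. The following is a rough sketch using the normal approximation for a proportion. It assumes independent runs, which repeated queries against the same model may not strictly satisfy, and the function name is hypothetical.

```python
import math

Z_95 = 1.96  # standard normal quantile for a two-sided 95% interval


def margin_of_error(rate: float, runs: int, z: float = Z_95) -> float:
    """Normal-approximation half-width of a confidence interval for a proportion."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0 and 1")
    return z * math.sqrt(rate * (1.0 - rate) / runs)


# Compare the experiment's 75 runs with the suggested 200 at the observed 61% rate.
for runs in (75, 200):
    print(runs, round(margin_of_error(0.61, runs), 3))
```

Under these assumptions, the 61% rate carries a margin of about 11 percentage points at 75 runs and about 7 points at 200 runs, which is the gain in confidence a larger run would buy.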

Platform-Specific Considerations for Citation Testing

Perplexity, ChatGPT, Google AI Overview, and Claude each use different retrieval architectures. Perplexity’s real-time web retrieval may weight structural signals differently than ChatGPT’s knowledge-cutoff approach. Replication across platforms is essential before declaring declarative structure a universal optimisation lever.

What Does This Mean for Your Content?

A 24 percentage point increase in citation rate from structural rewriting alone — with no new content, no new links, no authority changes — represents a meaningful commercial lever for any page where AI citation contributes to visibility or brand exposure.

Key implications for content strategy:

- For a page receiving 1,000 AI-driven impressions monthly, the difference between 37% and 61% citation consistency equals 240 additional citation events

- Pages ranking well but written in narrative style are leaving citation consistency on the table

- Structural rewriting — not content expansion or link building — is the intervention

- The GEO Lab Console can identify which sections need this rewrite

This is what the GEO Stack framework identifies as the Layer 2 opportunity: content that passes the retrieval threshold but fails at extraction. Experiment 001 provides the first quantified estimate of how large that opportunity is.
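The impressions arithmetic in the implications above is easy to reproduce for other traffic levels. This is a small sketch with hypothetical function names. It assumes the measured rates transfer unchanged to a given page, which the experiment does not establish.

```python
def additional_citations(impressions: int, baseline_pct: int, improved_pct: int) -> int:
    """Extra citation events implied by a percentage-point gain (whole percents)."""
    return impressions * (improved_pct - baseline_pct) // 100


def relative_lift_pct(baseline_pct: float, improved_pct: float) -> float:
    """Gain expressed relative to the baseline rate, in percent."""
    if baseline_pct <= 0:
        raise ValueError("baseline must be positive")
    return (improved_pct - baseline_pct) / baseline_pct * 100


extra = additional_citations(1000, 37, 61)
lift = relative_lift_pct(37, 61)
print(extra, round(lift, 1))
```

The two functions keep the absolute gain (24 percentage points, 240 events per 1,000 impressions) separate from the relative gain (about 65% over the narrative baseline), since the two figures are easy to confuse.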

What Is the Next Experiment?

Experiment 002 tests entity density as an independent variable. The question: does increasing named entity frequency within a section — without changing structure or content length — improve citation rate and representation accuracy? Hypothesis, methodology and results will be published on 24 March 2026 in The GEO Log.

If you are running similar experiments, I am interested in comparing methodologies. The field needs more replication, not more speculation.

Key Takeaways on Declarative Structure

Declarative structure significantly improves AI citation rates by front-loading core claims in section openings. Key points from this experiment:

- Citation advantage is measurable: the declarative version achieved a 61% citation rate versus 37% for narrative in controlled testing, a gap of 24 percentage points (about 65% higher in relative terms)

- Structure matters independently of length: the same 400-word content, reorganised declaratively, outperformed the narrative-first version

- Section openings matter most: the first sentence of each section determines extractability

- Cross-platform testing needed: results from Perplexity require validation on ChatGPT, Google AI, and Copilot

For implementation guidance, see the GEO Field Manual. Measure your extractability using the GEO Lab Console.

Experiment 001 Revision History

- March 2026: Revised with structural improvements, expanded sub-sections, and updated cross-references.

- February 2026: Initial publication of Experiment 001 methodology and results.

“The 24 percentage point citation gap between declarative and narrative structure — 61% versus 37% on identical content — is the most actionable finding published on Generative Engine Optimisation to date. After applying the declarative restructuring methodology from this experiment to our pillar pages, we measured a 34-point increase in extractability scores within two weeks.”

— Artur Ferreira, Founder of The GEO Lab · Based on Extractability implementation results

“The qualitative patterns — retrieval anchoring, representation drift, and partial traces — explained phenomena we had observed but could not name. Understanding that narrative prose causes the AI system to extract peripheral claims rather than central ones changed how we structure every section opening.”

— Artur Ferreira, Based on Experiment 001 qualitative analysis at The GEO Lab

The structural patterns measured in this experiment have real-world consequences for brand visibility. In the GEO Brand Citation Index, brands with declarative, extractable content consistently outperformed those with narrative-heavy pages — even when the narrative pages had stronger domain authority. Technical foundations like PageSpeed optimisation support this by ensuring AI crawlers can access content efficiently.

The citation gap measured in this experiment maps directly to Layer 2 (Extractability) of the GEO Stack five-layer framework. Structure is the variable that separates content that gets cited from content that gets ignored.

While this experiment tested extractability, the foundation layer — retrieval probability — must be solved first. Content that is not retrieved cannot be cited, regardless of its structural quality.

“This research provides exactly the kind of evidence-based approach that the GEO community needs. Clear methodology, reproducible results, and practical implications.”

— Daniel Cardoso, SEO Lead, Lisbon

Frequently Asked Questions

Can I apply declarative structure to existing content without rewriting everything?

Declarative restructuring targets section openings specifically, not full rewrites. In Experiment 001, the intervention changed only structure while keeping content identical — the same information, reorganised to lead with core claims. Focus on rewriting the first sentence of each section to state the main point directly. The GEO Field Manual provides step-by-step implementation guidance for this approach.

Does declarative structure work the same way across all AI platforms?

Experiment 001 tested only Perplexity, so cross-platform replication is needed before generalising results. Different AI systems use different retrieval architectures: ChatGPT, Google AI Overviews, and Copilot may weight structural signals differently. Experiment 002 tests entity density rather than platform coverage, so whether the declarative structure citation advantage holds across platforms remains open until a cross-platform replication is run.

How many queries do I need to test before results are reliable?

Experiment 001 used 75 query runs per version, which provided statistically meaningful results with session-to-session variation within 4 percentage points. Larger samples of 200+ iterations would increase confidence further. Distributing testing sessions across multiple days reduces temporal bias from model state fluctuations that could otherwise skew the measured citation rate.

Does declarative structure reduce readability for human visitors?

Declarative structure prioritises machine extraction but can coexist with good prose. The key change is front-loading the core claim in each section’s opening sentence, not eliminating narrative flow entirely. Supporting details, context, and qualification still follow — they simply come after the main point rather than building up to it. Most readers appreciate directness.

What is the difference between declarative structure and answer-first content?

Answer-first is a subset of declarative structure. Declarative structure also includes entity explicitness (naming subjects rather than using pronouns), standalone coherence (sections that make sense in isolation), and single-idea paragraphs. The full framework is documented on the Extractability page, which covers the principles that Experiment 001’s declarative version implemented.

How does declarative structure relate to the GEO Stack?

Declarative structure sits within Layer 2 (Extractability) of the GEO Stack framework. It also impacts Layer 1 (Retrieval Probability) through better query-chunk alignment — when sections open with direct answers, their embedding representations align more closely with query intent. Experiment 001 provides empirical evidence for this structural advantage.

Experiment 001 of The GEO Lab public experiment series · Published 3 March 2026

Methodology questions and replication attempts welcome via @TheGEO_Lab.
