GEO Experiments: Design, Measure & Learn — 2026 Edition

GEO Experiments is a free guide to running structured, evidence-based tests on AI citation — covering the five-phase GEO Experiment Loop, hypothesis writing, control and treatment setup, proxy metric selection, analysis methodology, and ten ready-to-run experiment templates across all five GEO Stack layers. It is Book #3 in the GEO Lab Library, built on the same experimental methodology used in The GEO Lab’s published research.

Most GEO advice is based on what should work. GEO Experiments is about what does work — on your specific site, for your specific queries, measured against real citation data. The scientific method applied to AI search visibility: one variable, one change, one observation window, one conclusion.

What’s Inside GEO Experiments

Why GEO Needs Experiments

The case for evidence over intuition in AI citation optimisation — and why single-variable testing produces insights that general advice cannot.

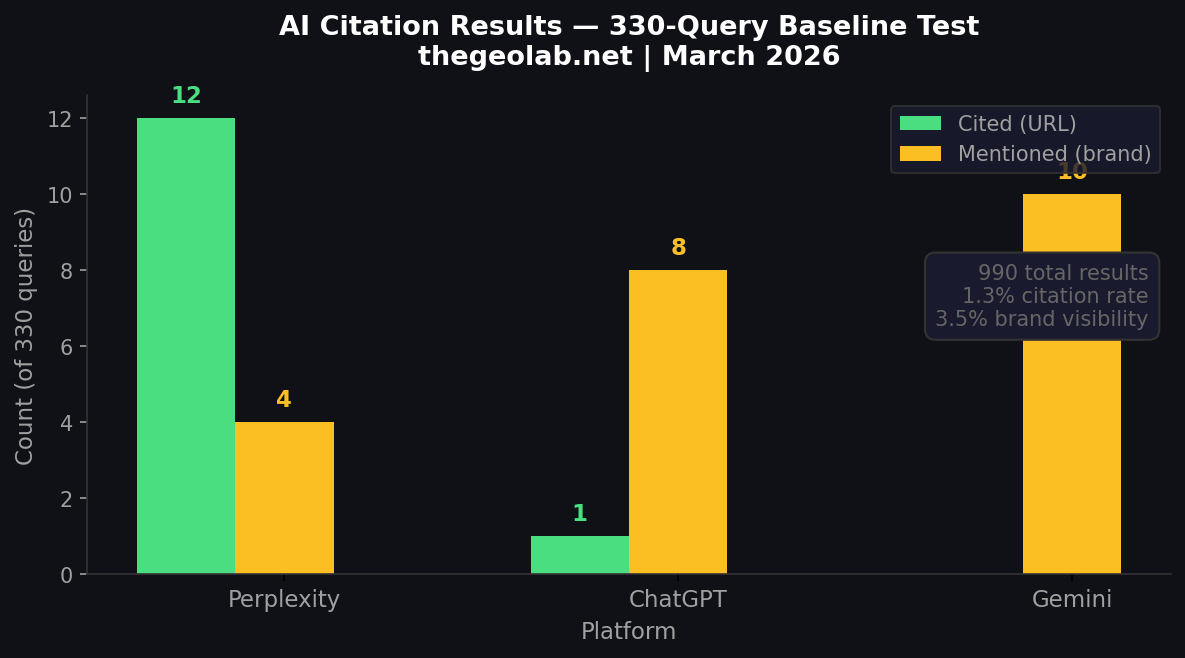

Live Data: 330-Query Citation Test Results

Updated March 2026 — Real results from The GEO Lab Console running 330 queries across ChatGPT, Gemini, and Perplexity.

Data from GEO Lab Console — AI Visibility OS | Next measurement: March 26, 2026

Chapter 1 — The GEO Experiment Loop

The five-phase framework: Hypothesise → Design → Execute → Measure → Learn. How each phase feeds the next, and why the loop never ends.

Chapter 2 — Writing a GEO Hypothesis

The GEO Hypothesis Template: IF / ON / THEN / BECAUSE / MEASURED BY / OVER. How to write a testable, specific prediction mapped to a single GEO Stack layer.

Chapter 3 — Control and Treatment Setup

How to isolate variables correctly. What counts as a control condition. How to avoid contaminating results with multiple simultaneous changes.

Chapter 4 — Proxy Metrics for GEO

What to measure when direct citation attribution is hard: citation rate sampling, brand mention tracking, query testing protocols across ChatGPT, Perplexity, and Gemini.

Chapter 5 — Running the Experiment

The practical execution checklist: making the change, observing the correct window (7–21 days for AI crawl cycles), and documenting without bias.

Chapter 6 — Analysis and Interpretation

How to read GEO experiment results. What counts as a meaningful change. Common interpretation errors and how to avoid them.

Chapter 7 — Reporting and Documentation

How to write an experiment report that builds a reusable body of evidence — and how to share findings with the GEO community.

10 GEO Experiment Templates

Ready-to-run experiments across all five GEO Stack layers: opening sentence structure, FAQ block addition, author schema implementation, heading format changes, and more. Each template includes hypothesis, variable, observation window, and measurement method.

Get All 10 Experiment Templates

Each template includes: hypothesis, control/treatment setup, observation window, measurement method, and analysis framework. Ready to copy and run.

Download the GEO Experiments Ebook (Free PDF). No email required. No signup. Direct download.

Recommended Experiment Sequence

The optimal order for running GEO experiments — starting with highest-leverage changes on highest-traffic pages.

Common Pitfalls and Advanced Techniques

The most common GEO experiment mistakes: testing too many variables, observing too short a window, and misattributing results. Advanced techniques for multi-page and longitudinal experiments.

Case Studies from The GEO Lab

Real experiments run by The GEO Lab — with hypotheses, methodology, results, and conclusions documented.

Frequently Asked Questions

How do you measure AI citation rate?

AI citation rate is measured by defining a set of target queries, submitting them to AI engines (ChatGPT, Perplexity, Gemini, and Copilot), and recording how often your content is cited in the responses. A citation rate is calculated as: citations received divided by queries tested, expressed as a percentage. GEO Experiments provides a step-by-step query testing protocol and tracking template.

What is a GEO experiment?

A GEO experiment is a structured test that changes one variable on one or more pages, observes the effect on AI citation rate over a defined window (typically 7–21 days), and draws a conclusion about whether the change increased, decreased, or had no effect on citation probability. The key principle is single-variable discipline — changing only one thing at a time so results are attributable.

What is the GEO Experiment Loop?

The GEO Experiment Loop is a five-phase cycle: Hypothesise (form a specific prediction), Design (set up control and treatment conditions), Execute (make the change and wait), Measure (collect citation data), and Learn and Act (analyse results and feed them into the next hypothesis). The loop is continuous — every experiment generates new questions.

How long does a GEO experiment take?

A typical GEO experiment requires a 7–21 day observation window after making the change, to allow AI crawl cycles to process the updated content. Faster-crawling platforms like Perplexity may show results within a week; training-data-dependent platforms like ChatGPT may require longer observation periods.

What GEO experiments should I run first?

The recommended sequence starts with the highest-leverage changes on your highest-traffic pages: (1) opening sentence structure — rewriting to place a direct answer first, (2) FAQ block addition with FAQ schema, (3) heading format changes to match natural query phrasing. These consistently produce the largest measurable citation impact and are covered in the first three experiment templates.

Continue in the GEO Lab Library

- Apply the results: The GEO Workbook — 30 daily tasks that put experimental insights into systematic action.

- Advanced testing: GEO Authority Playbook — competitive citation intelligence and measurement at scale.

- Browse all: thegeolab.net/ebooks

The only way to know what works is to test systematically, measure with proxy metrics, and build a body of evidence. This guide shows you exactly how.

What you’ll learn: Hypothesis design for GEO · Control and treatment setup · Proxy metrics for AI citation · Statistical approaches for small samples · Analysis templates · 10 ready-to-run experiment designs

Why GEO Needs Experiments

Part of The GEO Lab Library · thegeolab.net

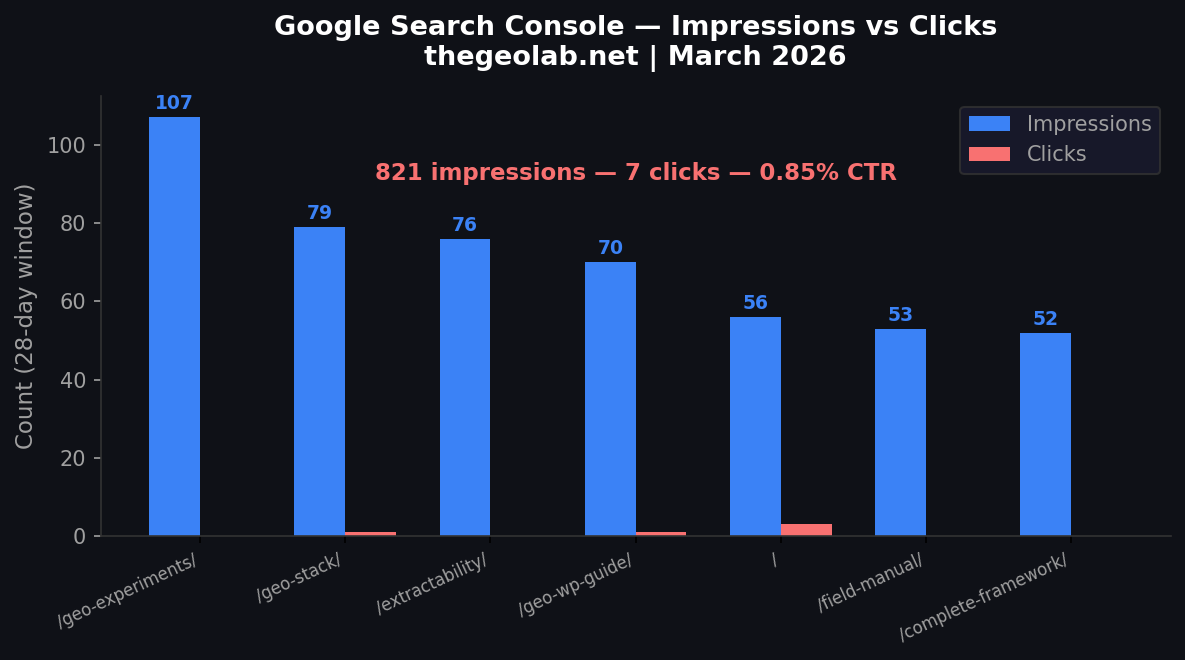

Traditional SEO has decades of tooling. You can track rankings, impressions, clicks, and conversions for every keyword. GEO has none of that — yet. AI engines don’t report who they cited, when, or why. There’s no “AI Search Console” that shows your citation rate.

This creates a dangerous temptation: to guess. To follow advice without testing it. To assume that what worked for one site will work for yours.

The alternative is to experiment. Run structured tests. Change one variable at a time. Measure with the best proxies available. Build a body of evidence about what gets your content cited — and what doesn’t.

“In GEO, the practitioner who tests will always outperform the one who follows.”

The GEO Measurement Problem

❌ What GEO Doesn’t Have

- No “AI impressions” metric

- No citation click-through rate

- No official API for citation tracking

- No attribution model for AI traffic

- No equivalent of Google Search Console

✔ What We Can Measure

- Manual citation checks across AI engines

- Structural proxies (extractability scoring)

- Entity signal density and consistency

- Before/after comparisons on changed pages

- Cross-platform citation variance

Who This Guide Is For

This guide is for practitioners who have already read The GEO Pocket Guide or The GEO Field Manual and understand the GEO Stack. It’s for people who want to move beyond theory into evidence — who want to know not just what to change, but whether the change actually worked.

The GEO Experiment Loop

Every GEO experiment follows the same five-phase loop. This is the core methodology used in The GEO Lab’s published experiments and the structure you’ll use for every test in this guide.

1. Hypothesise: Form a specific, testable prediction about what will change AI citation behaviour. Map it to a GEO Stack layer.

2. Design: Set up control and treatment conditions. Define what changes, what stays the same, and how you’ll measure the difference.

3. Execute: Make the change. Publish. Wait the appropriate observation window (typically 7–21 days for AI crawl cycles).

4. Measure: Collect proxy metrics. Run citation checks across ChatGPT, Perplexity, and Gemini. Record results in your tracking template.

5. Learn: Analyse results. Document findings. Apply what works to other pages. Share your evidence with the GEO community.

Single-Variable Discipline

The most important rule in GEO experimentation: change one thing at a time. If you rewrite a page’s opening, add schema, update the author bio, and add FAQ blocks in one session, you’ll never know which change drove the result. Isolate your variable.

Mapping Experiments to the GEO Stack

Every experiment should target a specific layer of the GEO Stack. This keeps your testing focused and your results attributable.

| GEO Stack Layer | What You’re Testing | Example Variable |
|---|---|---|
| Retrieval Probability | Will AI find this content? | Robots.txt rules, sitemap inclusion |
| Extractability | Can AI extract a quotable passage? | Opening sentence structure, heading format |
| Entity Reinforcement | Does AI recognise the author/brand? | Author bio presence, schema types |
| Structural Authority | Does AI trust this content? | Backlinks, brand mentions, citations |
| System Memory | Does AI remember this over time? | Update frequency, freshness signals |
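The Retrieval Probability row can be checked before any experiment starts. The sketch below uses Python's standard `urllib.robotparser` to report which AI crawlers a robots.txt file blocks for a given page. The crawler tokens and the sample robots.txt are assumptions for illustration; confirm the current tokens in each vendor's documentation.

```python
from urllib.robotparser import RobotFileParser

# Crawler tokens assumed relevant here; verify them against vendor docs.
AI_CRAWLERS = ["GPTBot", "PerplexityBot", "Google-Extended"]


def blocked_crawlers(robots_txt: str, page_url: str) -> list[str]:
    """Return the crawler tokens that robots_txt forbids from fetching page_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, page_url)]


# Hypothetical robots.txt that blocks one crawler and allows everything else.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_crawlers(SAMPLE_ROBOTS, "https://example.com/guide"))
```

An empty list means none of the listed crawlers is blocked for that URL. It says nothing about whether the page has actually been crawled.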

Writing a GEO Hypothesis

A good hypothesis is specific, testable, and tied to a single GEO Stack layer. A bad hypothesis is vague, unmeasurable, and tries to test everything at once.

The GEO Hypothesis Template

IF I change [specific variable]

ON [specific page or set of pages]

THEN [expected measurable outcome]

BECAUSE [reasoning tied to GEO Stack layer]

MEASURED BY [specific proxy metric]

OVER [observation window]
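Keeping hypotheses in a fixed structure makes it harder to skip a slot. The following is a minimal sketch of the template as a Python dataclass; the class name, field names, and example values are illustrative assumptions, not part of the guide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GeoHypothesis:
    """One field per slot in the hypothesis template (names are illustrative)."""
    change: str       # IF I change ...
    pages: str        # ON ...
    outcome: str      # THEN ...
    reasoning: str    # BECAUSE ...
    metric: str       # MEASURED BY ...
    window_days: int  # OVER ...

    def render(self) -> str:
        """Format the hypothesis in the template's slot order."""
        return "\n".join([
            f"IF I change {self.change}",
            f"ON {self.pages}",
            f"THEN {self.outcome}",
            f"BECAUSE {self.reasoning}",
            f"MEASURED BY {self.metric}",
            f"OVER {self.window_days} days",
        ])


example = GeoHypothesis(
    change="the first two sentences to give a direct answer",
    pages="my top 5 pages",
    outcome="citation rate rises from 10% to 30%",
    reasoning="Extractability improves when answers are front-loaded",
    metric="manual citation rate (10 queries x 3 engines)",
    window_days=14,
)
print(example.render())
```

Because the dataclass is frozen, a hypothesis cannot be edited after the experiment starts, which supports documenting predictions before making changes.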

Good vs Bad Hypotheses

❌ Bad Hypothesis

“If I improve my content, AI will cite me more.”

Why it fails: “Improve” is vague. “Content” is unspecific. “More” isn’t measurable. No layer targeted.

✔ Good Hypothesis

“If I rewrite the first two sentences of my top 5 pages to provide direct answers, citation rate for those pages will increase from 10% to 30% within 14 days, because Extractability improves when answers are front-loaded.”

Why it works: Specific variable, specific pages, measurable target, clear reasoning, defined timeline.

10 Hypothesis Starters by GEO Stack Layer

| Layer | Hypothesis Starter |
|---|---|
| Retrieval Probability | “If I submit my sitemap to Google and verify AI crawlers are not blocked…” |
| Retrieval Probability | “If I add internal links from my homepage to my target pages…” |
| Extractability | “If I rewrite opening sentences to answer the H2 question directly…” |
| Extractability | “If I add a Key Takeaway section at the end of each page…” |
| Entity Reinforcement | “If I add Person schema with full credentials to my author profile…” |
| Entity Reinforcement | “If I make my author name consistent across site, LinkedIn, and Medium…” |
| Structural Authority | “If I publish a guest post on an industry blog linking back to my guide…” |
| Structural Authority | “If I earn brand mentions in 3 Reddit threads within my niche…” |
| System Memory | “If I update my cornerstone guide weekly with fresh data…” |
| System Memory | “If I add a visible ‘Last Updated’ date and modify the content monthly…” |

Control & Treatment Setup

GEO experiments don’t use traditional A/B testing — you can’t serve different content to AI crawlers. Instead, you use sequential testing (before/after on the same page) or matched-pair testing (similar pages, one changed, one not).

Method 1: Sequential Testing (Before/After)

Control: The page’s performance before the change (baseline citation checks).

Treatment: The same page after the change.

Observation window: 7–21 days minimum (AI crawl cycles vary).

1. Run 10 citation checks across 3 AI engines. Record results. This is your “before” data.

2. Change only the variable you’re testing. Don’t touch anything else on the page.

3. Allow time for AI engines to re-crawl and re-index. Don’t check too early: patience is data.

4. Run the same 10 citation checks with the same queries. Record results. Compare to baseline.
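The before/after comparison can be sketched as a small function that refuses to compare phases unless they cover exactly the same query and engine pairs. The function name, data layout, and sample checks are assumptions for illustration.

```python
Check = tuple[str, str]  # (query, engine)


def compare_phases(baseline: dict[Check, bool], post: dict[Check, bool]) -> dict:
    """Compare citation results for identical (query, engine) checks."""
    if not baseline or baseline.keys() != post.keys():
        raise ValueError("Both phases must contain the same non-empty set of checks")

    def rate(results: dict[Check, bool]) -> float:
        return 100 * sum(results.values()) / len(results)

    return {
        "baseline_rate": rate(baseline),
        "post_rate": rate(post),
        "gained": sorted(k for k in baseline if post[k] and not baseline[k]),
        "lost": sorted(k for k in baseline if baseline[k] and not post[k]),
    }


# Hypothetical data: two queries on two engines, True means "cited".
before = {
    ("what is schema markup?", "ChatGPT"): False,
    ("what is schema markup?", "Perplexity"): True,
    ("how to add faq schema?", "ChatGPT"): False,
    ("how to add faq schema?", "Perplexity"): False,
}
after = dict(before)
after[("how to add faq schema?", "Perplexity")] = True

print(compare_phases(before, after))
```

Listing gained and lost checks separately shows whether a flat overall rate hides citations moving between queries.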

Method 2: Matched-Pair Testing

Control: Page A — no changes. Treatment: Page B — change applied.

Comparison: Both pages measured simultaneously over the same window.

Method 3: Cross-Platform Variance Testing

What you’ll learn: Different engines weight different signals. Perplexity may favour recency. ChatGPT may favour structural clarity. Gemini may favour entity signals. Document the variance.
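One way to document that variance is to compute the citation rate per engine from the same set of checks. This is a minimal sketch; the function name and the sample results are hypothetical.

```python
from collections import Counter


def citation_rate_by_engine(checks: list[tuple[str, bool]]) -> dict[str, float]:
    """Map each engine to its citation rate (%) from (engine, cited) pairs."""
    totals = Counter(engine for engine, _ in checks)
    cited = Counter(engine for engine, was_cited in checks if was_cited)
    return {engine: 100 * cited[engine] / totals[engine] for engine in totals}


# Hypothetical results: the same four queries run on each engine.
sample_checks = (
    [("Perplexity", True)] * 2 + [("Perplexity", False)] * 2
    + [("ChatGPT", True)] * 1 + [("ChatGPT", False)] * 3
    + [("Gemini", False)] * 4
)
print(citation_rate_by_engine(sample_checks))
```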

Choosing Your Method

| Scenario | Best Method | Why |
|---|---|---|
| Testing content rewrites | Sequential | Most pages don’t have matched pairs |
| Testing schema addition | Matched-pair | Schema can be added to one page, not the other |
| Testing what engines prefer | Cross-platform | Same content, different AI responses |
| Testing author signals | Sequential | Author changes apply site-wide |

Proxy Metrics for GEO

Since there’s no official “GEO analytics” dashboard, you need proxy metrics — measurable signals that correlate with AI citation behaviour. The GEO Lab uses five categories of proxy metrics.

The 5 GEO Proxy Metrics

Primary Metric

1. Manual Citation Rate: Search 10 queries across 3 engines. Count citations. Citation rate = citations ÷ total checks × 100. This is your north star metric.

Structural Metric

2. Extractability Score: Score each page section 0–5, one point each for a direct answer opening, a question heading, evidence present, a self-contained passage, and clear attribution.

Entity Metric

3. Entity Signal Density: Count entity signals: author name, schema types, brand mentions, cross-platform consistency, About page links. Score out of 10.

Context Metric

4. Citation Context Analysis: When you ARE cited, what was quoted? Which section? Which sentence? Track the pattern; it reveals what AI finds extractable.

Competitive Metric

5. Competitor Displacement Rate: Track which competitors are cited for your target queries. Over time, are you displacing them? Are new competitors appearing?
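Competitor displacement can be tracked by tallying which domains receive the citations you record. The sketch below is one possible approach, assuming every recorded URL includes its scheme; the function name and sample URLs are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse


def citation_share(cited_urls: list[str]) -> dict[str, float]:
    """Percentage of recorded citations that went to each domain."""
    domains = Counter(urlparse(url).netloc.removeprefix("www.") for url in cited_urls)
    total = sum(domains.values())
    return {domain: 100 * count / total for domain, count in domains.most_common()}


# Hypothetical citations collected for one set of target queries.
recorded = [
    "https://www.example.com/a",
    "https://example.com/b",
    "https://thegeolab.net/schema",
    "https://example.org/c",
]
print(citation_share(recorded))
```

Running the same tally at baseline and after the observation window shows whether your share grew at a competitor's expense.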

How to Calculate Citation Rate

queries_tested = 10

engines_checked = 3 // ChatGPT, Perplexity, Gemini

total_checks = queries_tested × engines_checked = 30

times_cited = [count your citations]

citation_rate = (times_cited ÷ total_checks) × 100

// Baseline: most sites start at 0–5%

// Good: 15–25% after 30 days of GEO work

// Excellent: 30%+ sustained over 90 days
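The same calculation as a runnable Python sketch. The function name and defaults are assumptions that mirror the 10 queries × 3 engines setup used in this guide.

```python
def citation_rate(times_cited: int, queries_tested: int = 10, engines_checked: int = 3) -> float:
    """Citation rate as a percentage of all checks performed."""
    total_checks = queries_tested * engines_checked
    if not 0 <= times_cited <= total_checks:
        raise ValueError(f"times_cited must be between 0 and {total_checks}")
    return 100 * times_cited / total_checks


print(citation_rate(3))  # 3 citations out of 30 checks
```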

Extractability Scoring Rubric

| Criterion | 0 Points | 1 Point |
|---|---|---|
| Direct answer in first 2 sentences | Buried or missing | Clear, quotable answer up front |
| Question-format heading (H2/H3) | Statement or keyword heading | Phrased as a question |
| Evidence or statistic present | Claims without support | Verifiable data cited |
| Self-contained passage | Requires context from other sections | Standalone: makes sense in isolation |
| Clear attribution possible | No author, no date, no source | Author, date, and/or source visible |
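The rubric reduces to five yes/no judgements per section. The sketch below shows one way to record them, with field names invented for illustration. The judgements themselves still come from a human reviewer.

```python
from dataclasses import dataclass, astuple


@dataclass
class SectionChecks:
    """One boolean per rubric criterion (field names are illustrative)."""
    direct_answer_first: bool
    question_heading: bool
    evidence_present: bool
    self_contained: bool
    attribution_visible: bool

    def score(self) -> int:
        """Extractability score from 0 to 5: one point per criterion met."""
        return sum(astuple(self))


section = SectionChecks(
    direct_answer_first=True,
    question_heading=True,
    evidence_present=False,
    self_contained=True,
    attribution_visible=False,
)
print(section.score())
```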

Running the Experiment

An experiment is only as good as its execution. This chapter covers the practical workflow: tools, timing, and data collection discipline.

The Experiment Execution Checklist

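- Write the hypothesis using the template from Chapter 2.

- Run the baseline: 10 queries × 3 engines before any changes.

- Confirm that only one thing changes per test.

- Capture a visual record of the current state.

- Make the change, modifying only the treatment variable.

- Capture a visual record of the new state.

- Set calendar reminders for Day 7, 14, and 21.

- Re-run the same 10 queries × 3 engines after the window.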

Observation Windows by Experiment Type

| Type of Change | Min. Window | Recommended | Why |
|---|---|---|---|
| Content rewrite | 7 days | 14 days | AI needs to re-crawl and re-index |
| Schema addition | 7 days | 14 days | Structured data processed in crawl cycle |
| Author signal changes | 14 days | 21 days | Entity signals take longer to propagate |
| Off-page signals | 21 days | 30+ days | Backlinks and mentions need discovery time |
| Freshness/update signals | 7 days | 14 days | AI checks for recent modifications |
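The windows in the table can be turned into concrete measurement dates. This is a minimal sketch in which the dictionary copies the table's values; storing "30+" as 30 and the function name are assumptions.

```python
from datetime import date, timedelta

# (minimum, recommended) days from the table; "30+" is stored as 30.
WINDOWS = {
    "content rewrite": (7, 14),
    "schema addition": (7, 14),
    "author signal changes": (14, 21),
    "off-page signals": (21, 30),
    "freshness/update signals": (7, 14),
}


def check_dates(change_type: str, changed_on: date) -> tuple[date, date]:
    """Return the earliest and the recommended date for the post-change check."""
    minimum, recommended = WINDOWS[change_type.lower()]
    return changed_on + timedelta(days=minimum), changed_on + timedelta(days=recommended)


earliest, recommended = check_dates("Content rewrite", date(2026, 3, 1))
print(earliest.isoformat(), recommended.isoformat())
```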

Tools You’ll Need

→ ChatGPT (free tier works)

→ Perplexity (free tier)

→ Google Gemini (free tier)

→ A spreadsheet for tracking

→ Google Rich Results Test

→ Google PageSpeed Insights

→ Google Search Console

→ Schema.org validator

Analysis & Interpretation

GEO experiments produce small datasets. You won’t have thousands of data points — you’ll have 30 citation checks. The goal isn’t statistical significance in the academic sense. The goal is directional evidence that informs your next action.
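With only 30 checks per phase, it helps to ask how often a shift of the observed size would appear by chance. One option, not part of this guide's own method, is a one-sided Fisher's exact test, sketched below with only the standard library. It assumes each check is independent, which repeated queries on the same engines only approximate, so treat the number as a rough sanity check rather than a verdict.

```python
from math import comb


def fisher_one_sided(baseline_cited: int, baseline_n: int, post_cited: int, post_n: int) -> float:
    """Probability of at least post_cited citations in the post phase
    if the change had no effect, holding the total citation count fixed."""
    total_cited = baseline_cited + post_cited
    total_n = baseline_n + post_n
    upper = min(total_cited, post_n)
    favourable = sum(
        comb(post_n, k) * comb(baseline_n, total_cited - k)
        for k in range(post_cited, upper + 1)
    )
    return favourable / comb(total_n, total_cited)


# Hypothetical result: 3 of 30 checks cited at baseline, 7 of 30 afterwards.
print(round(fisher_one_sided(3, 30, 7, 30), 3))
```

For the hypothetical 3-to-7 shift, the probability comes out at roughly 0.15. A change of that size on 30 checks is directional evidence worth replicating, not proof.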

The 4-Question Analysis Framework

1. Did the citation rate move? Compare baseline vs post-change. Any change ≥10 percentage points is worth noting.

2. Did all 3 engines respond similarly? Cross-engine consistency strengthens evidence.

3. When you gained citations, which specific passage was quoted? Was it from the section you changed?

4. Does the result replicate? Apply the same change to a second page. If the pattern holds, you have a genuine finding.

Interpreting Small Samples

| Change Observed (percentage points, 30 checks) | Interpretation | Confidence | Next Step |
|---|---|---|---|
| +0% (no change) | No detectable effect | n/a | Wait longer or test different variable |
| +3–7% (1–2 citations) | Weak signal, may be noise | Low | Replicate on second page |
| +10–20% (3–6 citations) | Meaningful directional evidence | Medium | Apply to more pages, monitor |
| +20%+ (6+ citations) | Strong signal, likely real | High | Roll out broadly, document |
| Negative (citations lost) | Change may have harmed visibility | Check | Consider reverting, investigate |
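The table can be applied mechanically once the change in percentage points is known. In the sketch below, the function name is hypothetical and the boundary handling is an assumption: a change of exactly 20 points is treated as a strong signal, since the table lists it in both rows.

```python
def interpret_change(delta_points: float) -> tuple[str, str, str]:
    """Map a change in citation rate (percentage points) to
    (interpretation, confidence, next step) following the table."""
    if delta_points < 0:
        return ("Change may have harmed visibility", "Check", "Consider reverting, investigate")
    if delta_points == 0:
        return ("No detectable effect", "n/a", "Wait longer or test different variable")
    if delta_points < 10:
        return ("Weak signal, may be noise", "Low", "Replicate on second page")
    if delta_points < 20:
        return ("Meaningful directional evidence", "Medium", "Apply to more pages, monitor")
    return ("Strong signal, likely real", "High", "Roll out broadly, document")


# Baseline 10%, post-change 23%: a 13-point increase.
print(interpret_change(23 - 10))
```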

Confounding Variables to Watch For

- Model version changes can shift citation patterns globally.

- A competitor improving their page can displace you.

- Even slight query rewording changes AI responses.

- Breaking news on your topic can shift what AI surfaces.

Reporting & Documentation

Every experiment should produce a written report. This isn’t bureaucracy — it’s how you build a body of evidence. Six months from now, you’ll have a library of what works for your site.

The GEO Experiment Report Template

Action: Roll out / Revert / Test further / No action

Next Experiment: What question does this raise next?

Building Your Experiment Log

| ID | Date | Layer | Variable | Baseline | Result | Δ | Action |
|---|---|---|---|---|---|---|---|
| EXP-001 | Mar 2026 | Extract. | Direct answer rewrite | 10% | 23% | +13% | Roll out |
| EXP-002 | Mar 2026 | Entity | Person schema added | 23% | 27% | +4% | Test further |
| EXP-003 | Apr 2026 | Extract. | FAQ section added | 27% | 33% | +6% | Roll out |

10 GEO Experiment Templates

Each template is a complete experiment design you can run immediately. Start with Experiment 1 — it’s the highest-impact, lowest-effort test.

Hypothesis: Rewriting the first 2 sentences to directly answer the H2 question will increase citation rate.

Variable: Opening sentence structure (background context → direct answer).

Method: Sequential. Baseline 10 queries, rewrite, wait 14 days, re-check same 10 queries.

Expected impact: High. This is consistently the highest-ROI GEO change.

Hypothesis: Changing H2 headings from statements to questions will increase extractability and citation rate.

Variable: H2 heading format only (e.g. “Schema Markup Benefits” → “What Are the Benefits of Schema Markup?”).

Method: Matched-pair. Two similar pages, one converted, one unchanged.

Expected impact: Medium. Questions map directly to AI query patterns.

Hypothesis: Adding Person schema with full credentials will increase citation rate through stronger entity signals.

Variable: Person schema added to author profile (name, title, sameAs links, credentials).

Method: Sequential. Site-wide change, measure across all target queries.

Expected impact: Medium. Entity signals compound over time — measure again at 30 days.
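For the Person schema variable, the markup can be generated rather than hand-written. This is a minimal sketch using schema.org's `Person` type with the `name`, `jobTitle`, and `sameAs` properties. The author details are placeholders, and the choice of property for credentials is left out because it depends on the credential type.

```python
import json


def person_jsonld(name: str, job_title: str, profile_urls: list[str]) -> str:
    """Build a schema.org Person object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": name,
        "jobTitle": job_title,
        "sameAs": profile_urls,
    }
    return json.dumps(data, indent=2)


# Placeholder author details, not real profiles.
markup = person_jsonld(
    "Jane Example",
    "Head of Research",
    ["https://www.linkedin.com/in/jane-example", "https://medium.com/@jane-example"],
)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Validate the output with the Google Rich Results Test or the Schema.org validator before starting the observation window.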

Hypothesis: Adding a 5-question FAQ section with FAQ schema will increase the number of queries for which AI cites the page.

Variable: FAQ section added to bottom of page with FAQ schema markup.

Method: Sequential. Test 10 queries including FAQ-specific questions.

Expected impact: Medium-high. FAQ questions are direct query matches.
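The FAQ schema for this experiment can be built from the same question and answer pairs that appear on the page. The sketch below uses schema.org's `FAQPage`, `Question`, and `Answer` types; the function name and the sample pair are illustrative.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


faq_markup = faq_jsonld([
    (
        "What is a GEO experiment?",
        "A structured test that changes one variable and observes the effect on AI citation rate.",
    ),
])
print(faq_markup)
```

The markup should repeat the visible FAQ text exactly, so that the schema and the on-page section count as one change.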

Hypothesis: Adding 3+ statistics with cited sources will increase AI citation rate for that page.

Variable: Number of statistics/data points (from 0 to 3+), with source attribution.

Method: Sequential. Baseline, add evidence, wait 14 days.

Expected impact: Medium. AI favours verifiable, data-backed claims.

Experiment Templates (Continued)

Hypothesis: Adding 5 internal links from high-traffic pages to a target page will improve retrieval probability and citation rate.

Variable: Internal link count pointing to target page (from current → current + 5).

Method: Sequential. Measure target page citations before and after links added.

Expected impact: Medium. Internal links signal content importance to crawlers.

Hypothesis: Updating content with new data and a visible “Last Updated” date will increase citation rate for time-sensitive queries.

Variable: Content freshness (old data → new data + visible update date).

Method: Sequential. Best tested on content with “2024” or “2025” data that can be updated to 2026.

Expected impact: Medium-high for time-sensitive topics. Low for evergreen content.

Hypothesis: Different AI engines cite different sources for the same query, revealing engine-specific signal preferences.

Variable: None — this is an observational study, not an intervention.

Method: Cross-platform. Same 20 queries across ChatGPT, Perplexity, and Gemini.

Expected impact: Diagnostic. Reveals which engine to optimise for first.

Hypothesis: Generating 10 genuine brand mentions across LinkedIn, Reddit, and forums over 30 days will improve citation rate.

Variable: Off-page brand mention count (baseline → baseline + 10).

Method: Sequential with 30-day window.

Expected impact: Medium. Off-page signals take time but compound with on-page quality.

Hypothesis: Adding a structured “Key Takeaway” section at the end provides AI with a pre-packaged summary to cite.

Variable: Presence of a 3–5 bullet “Key Takeaway” section at page bottom.

Method: Matched-pair. Two similar pages — one with takeaway section, one without.

Expected impact: Medium. Provides a highly extractable passage.

Recommended Experiment Sequence

Don’t run all 10 at once. Sequence them for maximum learning with minimum noise.

1. Start with what you can control completely: your own content structure.

2. Add schema, evidence, and internal linking. These compound the content improvements.

3. Update content, build brand mentions. These require more time but have lasting effects.

4. Cross-platform analysis and takeaway testing. Run alongside other experiments.

Data Collection Template

Use this spreadsheet structure for every experiment. One tab per experiment.

| Column | What to Record | Example |
|---|---|---|
| Date | Date of check | 2026-03-15 |
| Phase | Baseline / Post-change | Post-change (Day 14) |
| Query | Exact query used | What is schema markup? |
| Engine | AI engine tested | Perplexity |
| Cited? | Y / N | Y |
| Source Cited | URL cited by AI | thegeolab.net/schema |
| Passage Quoted | Text AI cited | “Schema markup is code…” |
| Notes | Anything notable | Full sentence cited |
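If you prefer a file to a spreadsheet tab, the same columns can be written as CSV. This is a minimal sketch using Python's standard `csv` module; the function name is hypothetical and the sample row reuses the example values from the table.

```python
import csv
import io

COLUMNS = ["Date", "Phase", "Query", "Engine", "Cited?", "Source Cited", "Passage Quoted", "Notes"]


def write_log(rows: list[dict[str, str]]) -> str:
    """Serialise citation checks to CSV text using the template's columns."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS)
    writer.writeheader()
    writer.writerows(rows)  # raises ValueError on columns outside the template
    return buffer.getvalue()


sample_row = {
    "Date": "2026-03-15",
    "Phase": "Post-change (Day 14)",
    "Query": "What is schema markup?",
    "Engine": "Perplexity",
    "Cited?": "Y",
    "Source Cited": "thegeolab.net/schema",
    "Passage Quoted": "“Schema markup is code…”",
    "Notes": "Full sentence cited",
}
print(write_log([sample_row]))
```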

Common Pitfalls & Advanced Techniques

The 7 Mistakes That Kill GEO Experiments

1. Rewriting content, adding schema, AND updating bios in one session. You won’t know which change mattered.

2. Measuring after 2 days instead of 14. AI crawl cycles need time. Premature measurement gives false negatives.

3. “What is schema?” vs “What is schema markup?” yields different results. Use identical prompts every time.

4. Without a “before” snapshot, your “after” data is meaningless. Always baseline first.

5. A single experiment is a data point, not proof. Replicate before rolling out broadly.

6. A change might work on Perplexity but not ChatGPT. Always check all three engines.

7. Running experiments without reports means losing your learnings. Every experiment needs a written report.

Advanced: Compound Testing

Once you’ve established individual variable effects through isolated tests, you can begin compound testing — combining multiple proven changes and measuring the combined effect.

Phase 1: Test variable A alone (e.g. direct answer rewrite → +13%)

Phase 2: Test variable B alone (e.g. FAQ section → +6%)

Phase 3: Apply A + B together → Measure combined effect

If combined > A + B individually, the changes compound (synergy)

If combined ≈ A + B, the changes are additive

If combined < A + B, there may be diminishing returns
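The three outcomes above can be sketched as a comparison against the sum of the individual effects. The tolerance that defines "approximately equal" is an assumption here (2 percentage points); pick a value that reflects how noisy your own checks are.

```python
def classify_compound(effect_a: float, effect_b: float, combined: float,
                      tolerance: float = 2.0) -> str:
    """Compare a combined effect with the sum of two isolated effects.
    All values are changes in citation rate, in percentage points."""
    expected = effect_a + effect_b
    if combined > expected + tolerance:
        return "synergy"
    if combined < expected - tolerance:
        return "diminishing returns"
    return "additive"


# Isolated effects of +13 and +6 points, with a hypothetical combined result of +25.
print(classify_compound(13, 6, 25))
```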

How The GEO Lab Runs Experiments

The GEO Lab publishes controlled experiments at thegeolab.net/log. Here’s the methodology behind our published work — and what we’ve learned so far.

Our Testing Principles

- Every test uses the Experiment Loop. Hypotheses are documented before changes are made. We never retrofit explanations to results.

- All results, including failures, are published in The GEO Log. Negative results are as valuable as positive ones.

- We don’t declare findings from a single test. Every significant result is replicated on at least one additional page.

- Every experiment uses the report template from Chapter 7. Exact queries, exact dates, exact results.

What We’ve Learned So Far

After dozens of published experiments, here are the patterns that have held up consistently:

| Finding | Confidence | Impact |
|---|---|---|
| Direct answer openings dramatically increase citation probability | High | ★★★★★ |
| FAQ sections with schema generate citations for long-tail queries | High | ★★★★ |
| Question-format H2 headings improve extractability scores | High | ★★★★ |
| Statistics with sources increase AI’s willingness to cite | Medium-High | ★★★ |
| Author schema improves entity recognition over time | Medium | ★★★ |
| Different AI engines cite different sources for identical queries | High | ★★★ |
| “Last Updated” freshness signals affect time-sensitive queries | Medium | ★★ |

GEO Experiment Quick Reference

“Test everything. Assume nothing. The data is the strategy.”

📚 The GEO Lab Library

All ebooks free at thegeolab.net/ebooks · By Artur Ferreira · The GEO Lab

“The best answer wins. Not the best-optimised page.”

AI search visibility research, field experiments, and the complete GEO Lab Library — all free.

#2 SEO to GEO: Complete Framework

#3 GEO Experiments ✓

#4 The GEO Workbook

#5 GEO for WordPress

#6 The GEO Glossary

#7 GEO Field Manual

#8 GEO Authority Playbook

#9 AI SEO OS