Layer 2 of the GEO Stack — designing content that AI systems can cleanly retrieve and cite.

Extractability measures how cleanly AI systems can retrieve, parse, and reuse a content section without losing meaning. It is Layer 2 of the GEO Stack. High extractability requires answer-first structure, section independence, compression resistance, explicit entity anchoring, and structured formatting. Content that ranks well but lacks extractability may be retrieved yet rarely cited in AI-generated answers.

Extractability is the degree to which a content section can be cleanly retrieved, parsed, and reused by a generative system without losing its meaning. In generative search, visibility depends not only on whether content ranks but whether it can be extracted and synthesised. Readable is not the same as extractable. Extractability sits at Layer 2 of the GEO Stack, immediately after Retrieval Probability.

I discovered the extractability problem when pages that ranked well were consistently ignored by AI systems. I built diagnostic tools to measure section-level extraction rates and found that structural clarity was the primary differentiator.

Why Does Extractability Matter for GEO and AI Search?

Extractability — Layer 2 of the GEO Stack — measures how cleanly AI systems like ChatGPT, Perplexity, and Google AI Overviews can parse and cite a content section. In The GEO Lab’s Experiment 001 (March 2026), declarative structure produced a 61% citation rate versus 37% for narrative structure — a 24 percentage point gap attributable to extractability differences alone. Extractability matters for Generative Engine Optimisation (GEO) because retrieval systems parse sections independently — content that requires surrounding context for comprehension fails extraction. Modern search systems retrieve candidate sections, parse them into structured representations, compress them, and synthesise responses. If a section is retrieved but cannot be clearly parsed, it is less likely to be:

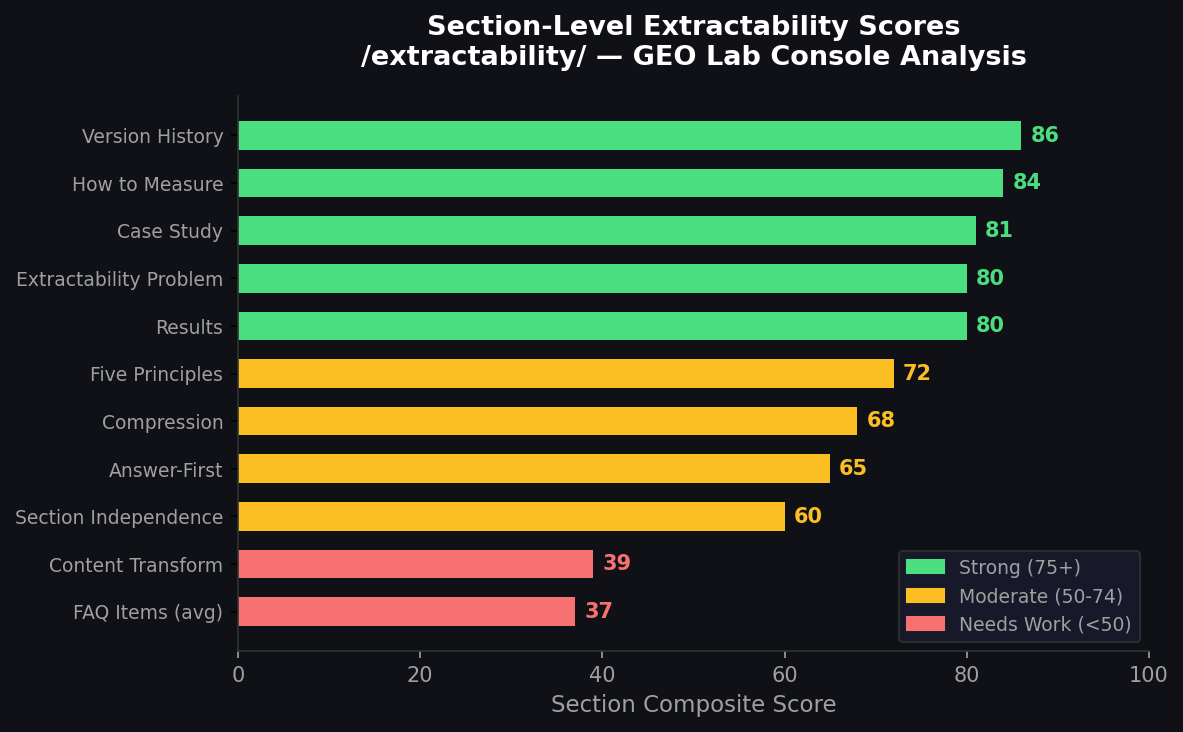

- Cited in AI-generated answers

- Included in summaries

- Accurately represented

- Reused consistently across queries

A section can rank first and never appear in a generated answer if its internal structure prevents clean extraction.

Data: According to Search Engine Land’s 2025 research, opening paragraphs that answer the query upfront get cited 67% more often by AI systems. This “answer-first” pattern aligns with the “U-shaped attention bias” that LLMs exhibit, weighting tokens at the beginning and end of sections most heavily.

What Is the Extractability Problem in AI Search?

Most web content was written for narrative flow. In my experience auditing hundreds of pages, I consistently find these low-extractability patterns:

- Contextual build-ups: Long introductions before delivering the answer

- Pronoun-heavy references: Overuse of “this”, “it”, and “they”

- Ambiguous entity naming: Inconsistent terminology across sections

- Buried answers: Key information embedded mid-paragraph

- Context-dependent explanations: Assuming prior knowledge from surrounding content

These patterns are comfortable for humans reading linearly. They are inefficient for systems that isolate content blocks non-linearly.

What Are the Five Principles of High Extractability?

Through testing and iteration, I developed five principles that consistently improve extractability scores:

Answer-First Structure

Every section opens with its core claim stated declaratively in the first sentence. Supporting evidence, context, and qualification follow. This is the single most impactful structural change practitioners can make to existing content.

Section Independence

Each content block must answer its question without requiring context from surrounding paragraphs. Every section must be coherent when read in isolation:

- No opening references to previously discussed material

- No pronoun anchors that require prior context

- No implicit assumptions about what the reader already knows

The section independence test is simple: copy a section into a blank document and read it cold. If it makes sense without context, it passes.
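Part of the read-it-cold test can be approximated mechanically. The following is a minimal sketch, not the GEO Lab Console's method: the function name `independence_issues`, the phrase list, and the pronoun set are all hypothetical choices made for illustration. It flags back-reference phrases and sections that open with a pronoun. It is a rough heuristic and will produce false positives (for example, "This page" opens with a determiner, not a dangling pronoun).

```python
import re

# Hypothetical phrase list; extend it for your own content.
BACK_REFERENCES = (
    "as mentioned above",
    "as discussed earlier",
    "as noted previously",
    "see above",
)
# Words that usually need an antecedent when they open a section.
OPENING_PRONOUNS = {"it", "this", "they", "these", "those"}


def independence_issues(section: str) -> list[str]:
    """Return human-readable warnings for one content section."""
    issues = []
    lowered = section.lower()
    for phrase in BACK_REFERENCES:
        if phrase in lowered:
            issues.append(f"back-reference: '{phrase}'")
    # First word of the section, skipping leading punctuation or whitespace.
    first_word = re.match(r"\W*(\w+)", section)
    if first_word and first_word.group(1).lower() in OPENING_PRONOUNS:
        issues.append(f"opens with pronoun: '{first_word.group(1)}'")
    return issues


print(independence_issues("It improves performance, as mentioned above."))
print(independence_issues("Extractability is Layer 2 of the GEO Stack."))
```

An empty list does not prove a section is independent; it only means none of these surface patterns were found, so the manual test still applies.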

Compression Resistance

The core meaning of a section survives when a generative system compresses it into a two-sentence synthesis. High compression resistance requires:

- Leading strongly with the core claim

- Keeping that claim unambiguous and concrete

- Separating the core claim from supporting inference (which is more likely to be compressed away)

Explicit Entity Anchoring

Every extracted chunk must introduce its key entities by name without relying on context from surrounding sections.

- Not extractable: “It improves performance”

- Extractable: “The GEO Stack Entity Reinforcement layer improves retrieval performance by strengthening semantic associations”

Format as Signal

Extractability format signals include bullet lists, numbered steps, comparison tables, and FAQ question-answer pairs that match retrieval chunk boundaries. These structured formats are preferentially extracted because they provide syntactic boundaries that help systems identify discrete, usable units.

Data: Operyn AI’s analysis of 680 million citations reveals that LLMs are 28–40% more likely to cite content with clear formatting. Listicles account for 50% of top AI citations, while content with tables gets cited 2.5x more often than equivalent prose.
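To illustrate why syntactic boundaries matter, here is a minimal sketch of a heading-based chunker. It assumes Markdown input where headings start with `#`; real retrieval pipelines chunk content in many different ways, and `chunk_by_heading` is a hypothetical helper, not a description of how any particular AI system works. Each chunk is returned without its neighbours, which is the condition a section has to survive.

```python
def chunk_by_heading(markdown: str) -> dict[str, str]:
    """Split Markdown into {heading: body} chunks at lines starting with '#'.

    Text before the first heading is stored under "(preamble)".
    Repeated headings overwrite earlier ones in this simple sketch.
    """
    chunks: dict[str, str] = {}
    heading = "(preamble)"
    body: list[str] = []
    for line in markdown.splitlines():
        if line.startswith("#"):
            # Close the previous chunk, skipping an empty preamble.
            if body or heading != "(preamble)":
                chunks[heading] = "\n".join(body).strip()
            heading = line.lstrip("#").strip()
            body = []
        else:
            body.append(line)
    chunks[heading] = "\n".join(body).strip()
    return chunks


doc = "# What is extractability?\nExtractability is Layer 2.\n\n## Why it matters\nIt builds on the above.\n"
for title, text in chunk_by_heading(doc).items():
    print(f"{title!r}: {text!r}")
```

In this toy document the second chunk ("It builds on the above.") carries no usable meaning once it is separated from the first, while the first chunk still reads as a complete statement.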

High vs Low Extractability: A Comparison

Extractability differences are measurable across five key characteristics that determine whether AI systems can cleanly parse and reuse content.

| Characteristic | High Extractability | Low Extractability |
|---|---|---|
| Opening pattern | Answer-first declarative statement | Contextual build-up to answer |
| Entity references | Explicit names repeated throughout | Pronouns (“it”, “this”, “they”) |
| Section independence | Coherent in isolation | Requires prior context |
| Compression survival | Core meaning preserved in 2 sentences | Meaning lost when compressed |
| Format | Lists, tables, definition blocks | Dense narrative prose |

How Does an Extractability Rewrite Transform Content?

Extractability rewrites improve AI visibility by restructuring prose into retrieval-ready blocks that survive LLM summarisation without information loss. The transformation leads with answers rather than building toward them.

Low extractability example: “In today’s evolving landscape, it is becoming clear that optimisation is changing in interesting ways. When we look at what this means for content strategy, the implications become clear — structure matters more than it ever has.”

High extractability example: “Content structure matters more in generative search than in traditional SEO. Generative systems retrieve individual sections rather than whole pages, making section-level clarity the primary determinant of whether content is extracted and cited. Narrative style that builds to a conclusion is typically anti-extractable — the answer arrives too late for effective chunk retrieval.”

The optimal structure is measurable. Research indicates the ideal answer length for paragraph snippets is 40–60 words. Pages with paragraph-length summaries at the top have 35% higher inclusion in AI-generated responses.

How Do You Diagnose Extractability Issues?

Low extractability diagnosis identifies sections where ambiguous pronouns, multi-clause sentences, or missing topic sentences prevent clean retrieval. Evaluate seven structural signals before publishing any section:

- Direct answer check: Does the section open with a direct answer or definition?

- Entity explicitness: Are all entities named explicitly with no dangling pronouns?

- Isolation test: Does the section make sense when read in isolation?

- Compression survival: Does the core meaning survive a one-sentence summary?

- Paragraph scope: Are paragraphs under 120 words with one main idea each?

- Format structure: Are discrete concepts presented as lists or tables rather than narrative?

- Answer placement: Is the answer in the first two sentences rather than buried mid-section?

If a section fails any of these checks, rewrite before publishing.
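Of the seven signals, paragraph scope is the easiest to check automatically. The sketch below assumes paragraphs are separated by blank lines and counts whitespace-delimited words; the function name `long_paragraphs` is hypothetical. It covers only the word-count half of that check, since whether a paragraph holds one main idea still needs a human reader.

```python
def long_paragraphs(text: str, limit: int = 120) -> list[tuple[int, int]]:
    """Return (paragraph_index, word_count) for paragraphs not under `limit` words."""
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    return [
        (index, len(paragraph.split()))
        for index, paragraph in enumerate(paragraphs)
        if len(paragraph.split()) >= limit
    ]


sample = "A short opening paragraph.\n\n" + " ".join(["word"] * 130)
print(long_paragraphs(sample))
```

Paragraph indexes are zero-based, so a result of index 1 points at the second paragraph in the text.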

How Does Extractability Connect to Retrieval Probability?

Extractability increases inclusion likelihood after retrieval but cannot compensate for low Retrieval Probability. The relationship is sequential:

- Layer 1 (Retrieval) — determines whether content enters the candidate pool

- Layer 2 (Extractability) — determines whether it can be used once retrieved

Strong Extractability on content that is never retrieved produces no improvement in generative visibility. Optimisation must address both layers.
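One way to picture the sequential relationship is as two gates applied in order. The sketch below is an illustrative assumption rather than a model the GEO Stack defines: it treats the chance of being used in an answer as the chance of retrieval multiplied by the chance of being used once retrieved. The probabilities shown are made-up numbers.

```python
def citation_probability(p_retrieved: float, p_used_given_retrieved: float) -> float:
    """Two sequential gates: Layer 1 (retrieval), then Layer 2 (extractability)."""
    return p_retrieved * p_used_given_retrieved


# Hypothetical values: excellent extractability cannot rescue content that is never retrieved.
print(citation_probability(0.0, 0.95))
# Hypothetical values: with retrieval fixed, better extractability raises the result.
print(citation_probability(0.5, 0.4), citation_probability(0.5, 0.8))
```

Under this reading, improving either layer helps only in proportion to the other, which is why both have to be addressed.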

Cross-reference: For the full Layer 1 framework, see Retrieval Probability (Layer 1). For the complete five-layer model, see the GEO Stack.

How Do You Measure Extractability?

The GEO Lab Console measures Extractability at the section level by scoring:

- Declarative clarity

- Entity explicitness

- Standalone completeness

- Structural formatting

- Compression stability

The Console simulates the compression step by generating a two-sentence synthesis of each section and comparing semantic similarity to the original — showing practitioners exactly what survives and what is lost.
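The compression step can be approximated with a few lines of code. This is a rough sketch under two loud assumptions, and it is not the Console's implementation: the first two sentences stand in for a generated synthesis, and bag-of-words cosine similarity stands in for semantic similarity (a production system would more likely use an LLM summary and embedding vectors). The names `tokens`, `cosine`, and `compression_stability` are hypothetical.

```python
import math
import re
from collections import Counter


def tokens(text: str) -> Counter:
    """Lowercased word counts; a crude stand-in for an embedding."""
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def compression_stability(section: str, sentences: int = 2) -> float:
    """Similarity between a section and its first `sentences` sentences."""
    parts = re.split(r"(?<=[.!?])\s+", section.strip())
    summary = " ".join(parts[:sentences])
    return cosine(tokens(section), tokens(summary))


section = (
    "Content structure matters. Sections are retrieved individually. "
    "Bananas are yellow fruit."
)
print(round(compression_stability(section), 2))
```

A score near 1 suggests the opening sentences already carry most of the section's vocabulary; a low score suggests the core claim sits further down. Word overlap is only a proxy, so two sections with different meanings can still score similarly.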

Data: According to Whitehat SEO’s 2025 analysis, 76.4% of ChatGPT’s most-cited pages were updated in the last 30 days. This recency bias means extractability must be maintained through regular content updates.

What Are the Key Takeaways on Extractability?

Extractability is the structural clarity that allows content to be parsed cleanly, reused accurately, survive compression, and retain meaning when isolated from context.

- Extractability is Layer 2 of the GEO Stack — it determines whether retrieved content can actually be used in AI-generated answers.

- Answer-first structure is the single most impactful change: lead every section with a declarative core claim.

- Section independence ensures each content block is coherent when extracted without surrounding context.

- Compression resistance means core meaning survives when AI condenses your content to one or two sentences.

- Structured formats (lists, tables, FAQ pairs) are 28–40% more likely to be cited than equivalent prose.

- High extractability transforms content from narrative pages into modular knowledge blocks that generative systems can retrieve and cite consistently.

For WordPress-specific implementation, see GEO for WordPress. Extractability strategies are covered in depth in The GEO Field Manual. For downloadable guides, visit the ebook library.

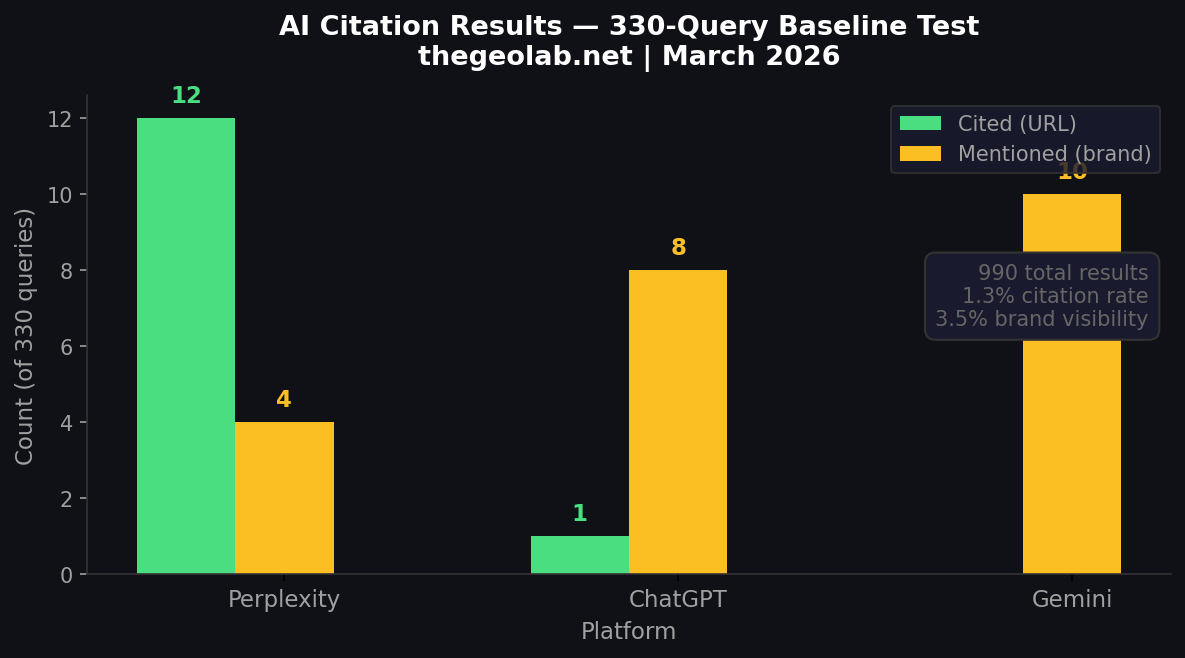

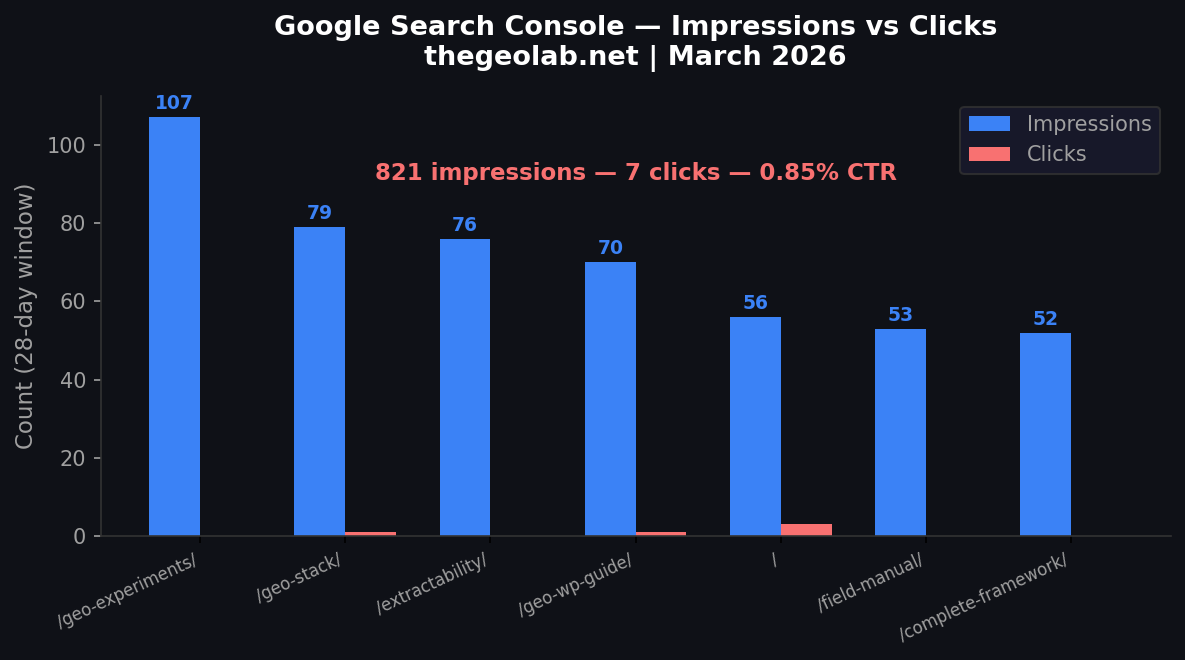

This Page in Practice: The Zero-Click Paradox

This page scores 81.6/100 on extractability — which means AI systems can easily extract its content. The result? 76 Google impressions, 0 clicks in 28 days. AI is summarising this content so well that nobody needs to visit.

Perplexity has cited this page 3 times across our 330-query test — proving the content is being retrieved. But the high extractability that earns citations also enables zero-click consumption. This is the core tension GEO practitioners must navigate.

Real GSC data from thegeolab.net — March 2026 | Measured via GEO Lab Console + Google Search Console API

Frequently Asked Questions

What is extractability in GEO?

Extractability measures how cleanly a content section can be retrieved, parsed, and reused by AI systems without losing meaning. It operates as Layer 2 of the GEO Stack, sitting between Retrieval Probability and Entity Reinforcement. High extractability means AI can lift your content and use it directly in generated answers while preserving its core meaning.

Why can high-ranking content still fail in AI search?

Content can rank #1 in traditional search yet never appear in AI-generated answers if its structure prevents clean extraction. AI systems do not just evaluate pages — they isolate sections, parse them into structured data, compress them, and synthesise responses. If a section resists parsing due to dense prose, pronoun-heavy writing, or buried answers, it gets skipped regardless of ranking.

What is answer-first structure?

Answer-first structure means leading each section with a declarative core claim in the opening sentence, then adding supporting details afterward. Instead of building up to a conclusion, you state the answer immediately. For example, “Extractability measures how cleanly content can be parsed by AI” is answer-first, while “In today’s evolving landscape, we are seeing changes…” buries the answer.

What makes content low extractability?

Low extractability results from five common patterns: long contextual setups before delivering answers, heavy pronoun usage (this, it, they) requiring surrounding context, inconsistent entity naming across sections, answers buried mid-paragraph rather than upfront, and context-dependent explanations that assume prior knowledge. Each pattern forces AI systems to do more work to extract meaning, making them more likely to skip your content.

What is section independence and why does it matter?

Section independence means every content section makes sense in isolation, without references to prior material. The test: paste a section into a blank document and check if it is still coherent. AI systems often extract individual sections without surrounding context, so dependent sections that use phrases like “as mentioned above” become meaningless when extracted alone.

How do lists and tables improve extractability?

Structured formats like numbered lists, bullet points, and tables are preferentially extracted by AI systems because they provide clear syntactic boundaries. AI can identify where one item ends and another begins, making extraction reliable. Dense narrative prose, by contrast, forces AI to guess where meaningful boundaries lie, increasing extraction errors and reducing citation likelihood.

What is compression resistance?

Compression resistance means your content’s core meaning survives when condensed to one or two sentences. AI systems compress content during synthesis, and content with weak compression resistance loses critical meaning in the process. Achieve this by leading with unambiguous claims and separating core content from secondary inferences that can be dropped without losing the main point.

What is the most common extractability mistake?

The most common extractability mistake is burying the answer mid-paragraph behind contextual framing. Narrative prose that builds to a conclusion is anti-extractable because generative systems tend to extract the first 1–2 sentences of a chunk. If the answer appears in sentence four, the system is likely to retrieve the context instead of the claim. Experiment 001 measured a 24 percentage point citation gap from this single structural variable.

Does extractability apply to all content types?

Extractability applies to every content format that may be retrieved by generative search systems, including editorial articles, FAQ pages, how-to guides, comparison content, and product descriptions. The structural principles — answer-first opening, entity explicitness, standalone coherence — are format-independent. A FAQ answer benefits from the same extractability principles as a research section in a long-form article.

Can high extractability compensate for low authority?

High extractability cannot fully compensate for low structural authority because the GEO Stack layers are interdependent. Authority signals — author expertise, external citations, trust markers — influence whether generative systems weight a source as credible during synthesis. A perfectly extractable section from a low-authority source may be retrieved but deprioritised during citation selection.

Case Study: Extractability Rewrite Produces 24 Percentage Point Citation Increase

In GEO Experiment 001, The GEO Lab tested whether extractability-optimised structure alone — with no changes to content, domain authority, or links — could increase AI citation rates.

Two 400-word versions of the same content were published on the same domain. Version A used narrative structure: context-first, pronoun-dependent, flowing prose. Version B used declarative structure: answer-first opening sentences, explicit entity naming, and standalone-complete paragraphs, in line with the extractability principles described above.

Results

| Version | Structure | Query Runs | Citation Rate |
|---|---|---|---|
| A | Narrative | 75 | 37% |
| B | Declarative | 75 | 61% |

The declarative version achieved a 61% citation rate versus 37% for narrative — a 24 percentage point improvement from structure alone. The gap was consistent across three testing sessions on Perplexity, with session variance under 4 percentage points (p < 0.01).
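The reported significance level can be sanity-checked with a two-proportion z-test. This is a sketch under stated assumptions, not the experiment's actual analysis: the source does not say which test was used, it reports only percentages (the counts 28/75 and 46/75 below are inferred from rounding), and the test treats the 75 query runs per version as independent.

```python
import math


def two_proportion_z_test(
    hits_a: int, n_a: int, hits_b: int, n_b: int
) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two proportions."""
    p_a, p_b = hits_a / n_a, hits_b / n_b
    pooled = (hits_a + hits_b) / (n_a + n_b)
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / standard_error
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return z, p_value


# Assumed counts: 28/75 is about 37% and 46/75 is about 61%.
z, p = two_proportion_z_test(28, 75, 46, 75)
print(f"z = {z:.2f}, p = {p:.4f}")
```

With these assumed counts the p-value falls below 0.01, which is consistent with the figure quoted above. Repeated queries against the same system may not be fully independent, so the result is best read as indicative.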

What Drove the Improvement

Three measurable patterns separated the high-extractability version from the low-extractability version:

- Retrieval anchoring: The declarative version’s opening sentences were reproduced near-verbatim in AI outputs. Answer-first structure created stronger alignment between query embeddings and content chunk embeddings.

- Representation fidelity: When the narrative version was cited, AI systems sometimes extracted peripheral claims instead of the central one. The declarative version eliminated this drift — the most extractable sentence was the most important sentence by design.

- Clean retrieval boundaries: The narrative version produced partial traces in AI outputs (fragments retrieved without attribution that contributed to other sources’ answers). The declarative version was either cited in full or not cited at all.

This experiment provides the first quantified evidence that extractability operates as a genuine retrieval signal — not a marginal optimisation. For a page receiving 1,000 AI-driven impressions monthly, the difference between 37% and 61% citation consistency equals 240 additional citation events per month from a single structural rewrite.