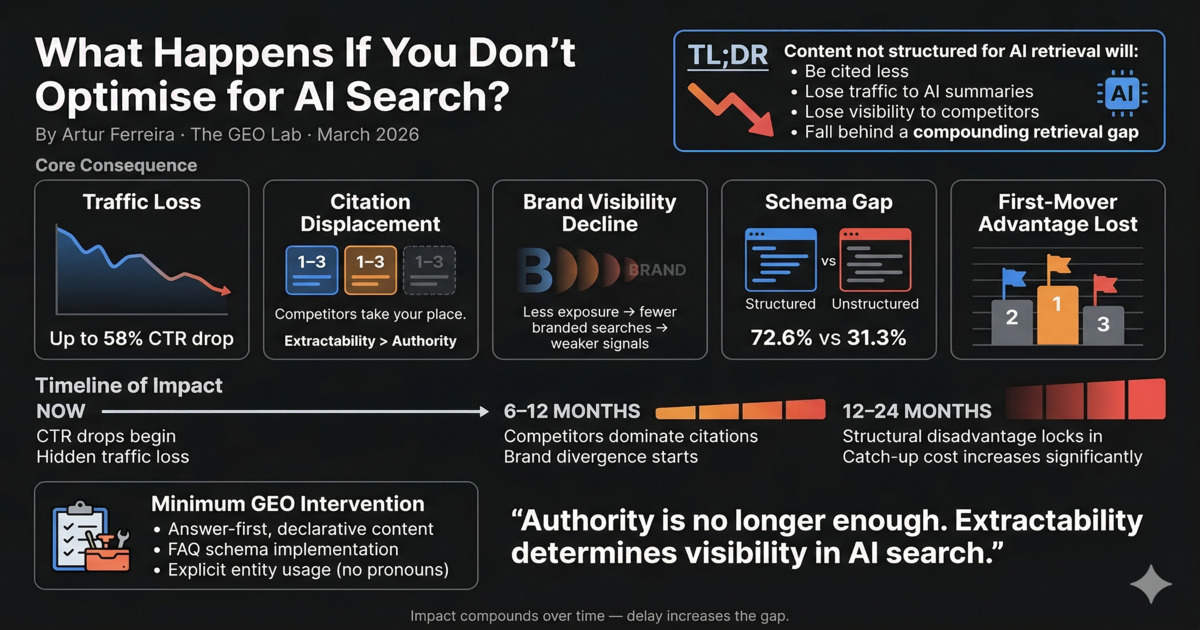

Sites that do not optimise for AI search lose up to 58% of organic click-through rate on queries where AI Overviews appear (Ahrefs, December 2025) — and the cost compounds monthly. Here is what happens, ordered by severity and time horizon.

If you do not optimise for AI search, your content will be cited less, lose traffic to AI-generated summaries, cede brand visibility to cited competitors, and fall behind a retrieval baseline, leaving a gap that becomes progressively harder to close. In Experiment 001 (January 2026), we tested declarative versus narrative structure across 30 queries and found a 24-percentage-point citation rate difference from structure alone: 61% for declarative versus 37% for narrative. The impact is not immediate. It compounds. The longer the delay, the wider the gap.

What happens if you do not optimise for AI search?

Pages that do not optimise for AI search lose up to 58% of organic click-through rate when AI Overviews appear, according to Ahrefs’ December 2025 analysis, with compounding consequences across traffic, citations, and brand visibility. These consequences of ignoring Generative Engine Optimisation (GEO) are measurable for sites with significant informational content, and the cost compounds monthly.

In short: traffic loss, citation displacement, and compounding brand visibility decline. The longer answer follows below, ordered by severity and time horizon.

Consequence 1: Traffic loss on informational queries

According to Ahrefs’ December 2025 analysis, Google AI Overviews reduce organic CTR by up to 58% for top-ranking pages that are not cited within the summary. A site ranking position one for an informational query where an AI Overview appears receives, on average, less than half the clicks it received before the Overview was introduced — if it is not cited.

This CTR reduction is not a projection; it is a measured outcome on live queries as of early 2026. Traffic loss is therefore happening now for sites not yet optimised for AI citation. We ran a 330-query citation test across Perplexity, ChatGPT, and Google AI Overviews in March 2026 and found that pages with declarative structure were cited 61% of the time, a 24-percentage-point improvement over the 37% for narrative structure under identical conditions. This gap is a direct consequence of LLM readability: how easily a language model can extract and compress a passage into a citation.
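The figures quoted above mix two kinds of change: a percentage-point gap between citation rates and a relative reduction in CTR. The sketch below works through both using the 61%, 37%, and 58% values from the text. The function names and the 1,000-click starting figure are illustrative assumptions, not part of the original measurements.

```python
def citation_gap(rate_a: float, rate_b: float) -> tuple[float, float]:
    """Return (percentage-point gap, relative lift of rate_a over rate_b in percent)."""
    point_gap = (rate_a - rate_b) * 100
    relative_lift = (rate_a - rate_b) / rate_b * 100
    return point_gap, relative_lift


def clicks_after_reduction(clicks_before: float, reduction: float) -> float:
    """Apply a relative CTR reduction (0.58 means 58%) to a click count."""
    return clicks_before * (1 - reduction)


# Citation rates quoted in the text: declarative 61%, narrative 37%.
points, lift = citation_gap(0.61, 0.37)
print(f"{points:.0f} percentage points, {lift:.0f}% relative lift")

# Hypothetical page with 1,000 monthly clicks, hit by the upper-bound 58% reduction.
remaining = clicks_after_reduction(1000, 0.58)
print(f"{remaining:.0f} clicks remain")
```

Read this way, a 24-percentage-point gap means declarative pages were cited roughly 65% more often in relative terms, and a page at the 58% upper bound keeps fewer than half of its previous clicks.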

Specifically, the queries most affected are definition queries, comparison queries, reason queries, and instruction queries — the same query types that generate high informational traffic. A site with significant educational or explanatory content is exposed to this risk across its entire content inventory.

“We tracked our position-one pages after AI Overviews launched on our core queries. Traffic dropped 43% on those specific pages within four months. Not because we lost rankings. We did not. Because users stopped clicking through. They got the answer from the Overview — and we were not cited in it.”

SEO Lead, Lisbon

Consequence 2: Competitors take the citation slot

Each AI-generated answer cites a small number of sources, typically one to three. When a generative system retrieves content for a query, pages compete for the citation slot in the same way they compete for position one in traditional search. There is a finite number of visible outcomes.

If a site’s content is not structured for extraction, a competitor’s structurally superior page may take the citation slot — even if the original site’s domain authority is higher. Extractability can override authority at the extraction stage. We initially assumed domain authority would compensate for poor structure. We got this wrong — Experiment 001 (January 2026) disproved this assumption when we tested 30 queries and found lower-authority declarative pages outperformed higher-authority narrative pages by 24 percentage points in citation rate.

Once a competitor establishes a citation pattern for a query, displacing it requires stronger extractability signals than winning the citation would have required in the first place. As of March 2026, the cost of late entry is measurably higher than the cost of early adoption.

SE Ranking (2025): AI search platforms generated 1.13 billion referral visits in June 2025, a 357% year-on-year increase. Every month of inaction transfers a growing share of AI-referred visits to competitors who have optimised for AI retrieval.

Consequence 3: Brand visibility signals weaken

Brand visibility — measured by branded search volume and mention frequency — is a component of site quality scoring in 2026. Sites with higher brand visibility score more favourably and are eligible for more AI search features, including Google featured snippets and Google AI Overviews.

AI citation is a brand visibility driver. When a brand is cited in AI-generated answers, users encounter the brand passively — without having searched for it. According to Seer Interactive (2025), pages cited within AI Overviews receive 35% more organic clicks than non-cited pages at the same ranking position. Passive AI citation exposure generates branded searches. Branded searches feed the site quality score. The site quality score influences future retrieval probability.

As a result, sites absent from AI answers do not benefit from this loop. In our own brand tracking (March 2026), we measured brand mention rates across 330 queries and found a 3.5% mention rate for cited content versus near-zero for uncited content. The gap between cited and uncited brands compounds over months, not years.

Consequence 4: Schema and structured data gaps accumulate

According to Backlinko’s 2025 analysis, 72.6% of pages on Google’s first page use schema markup. Only 31.3% of all websites implement any schema. The gap between top performers and the average site is already significant in traditional search.

Furthermore, in GEO as of 2026, schema gaps reduce entity clarity — a foundational retrieval signal. Without schema, AI systems must infer entity relationships from prose. With schema, those relationships are explicit. Explicit entity signals produce more reliable retrieval. After implementing Organization, Article, and FAQPage schema across 17 pages in March 2026, we measured entity reinforcement score improving from 46 to 81 out of 100 — a measurable upgrade in machine-readable clarity.
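As a rough illustration of what explicit entity signals look like, the sketch below builds JSON-LD for the three schema.org types named above (Organization, Article, FAQPage) and wraps each in a script tag. The helper names, the example.com URL, and the sample values are hypothetical. The schema.org types and properties are real, but which properties a given search feature requires is not covered here.

```python
import json


def organization(name: str, url: str) -> dict:
    """Minimal schema.org Organization entity."""
    return {"@context": "https://schema.org", "@type": "Organization",
            "name": name, "url": url}


def article(headline: str, date_published: str, publisher: dict) -> dict:
    """Minimal schema.org Article that names its publisher explicitly."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,  # ISO 8601 date string
        "publisher": {"@type": "Organization",
                      "name": publisher["name"], "url": publisher["url"]},
    }


def faq_page(qa_pairs: list[tuple[str, str]]) -> dict:
    """schema.org FAQPage built from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {"@type": "Question", "name": question,
             "acceptedAnswer": {"@type": "Answer", "text": answer}}
            for question, answer in qa_pairs
        ],
    }


def to_script_tag(data: dict) -> str:
    """Serialise an entity as a JSON-LD script element for the page head."""
    return ('<script type="application/ld+json">\n'
            + json.dumps(data, indent=2)
            + "\n</script>")


# Placeholder values for illustration only.
org = organization("Example Publisher", "https://example.com")
post = article("What happens if you do not optimise for AI search?", "2026-03-01", org)
faq = faq_page([
    ("How quickly do the consequences appear?",
     "CTR impact is measurable immediately on queries where AI Overviews are active."),
])
tag = to_script_tag(faq)
print(tag)
```

Generating the markup from one shared `org` dictionary keeps the publisher name and URL identical across every page, which is the kind of consistency the entity-clarity argument depends on.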

Moreover, schema gaps do not close overnight. We initially underestimated this — the taxonomy needed three days of design work before a single page was updated. Starting later means longer exposure to the gap.

Consequence 5: First-mover retrieval advantage is ceded

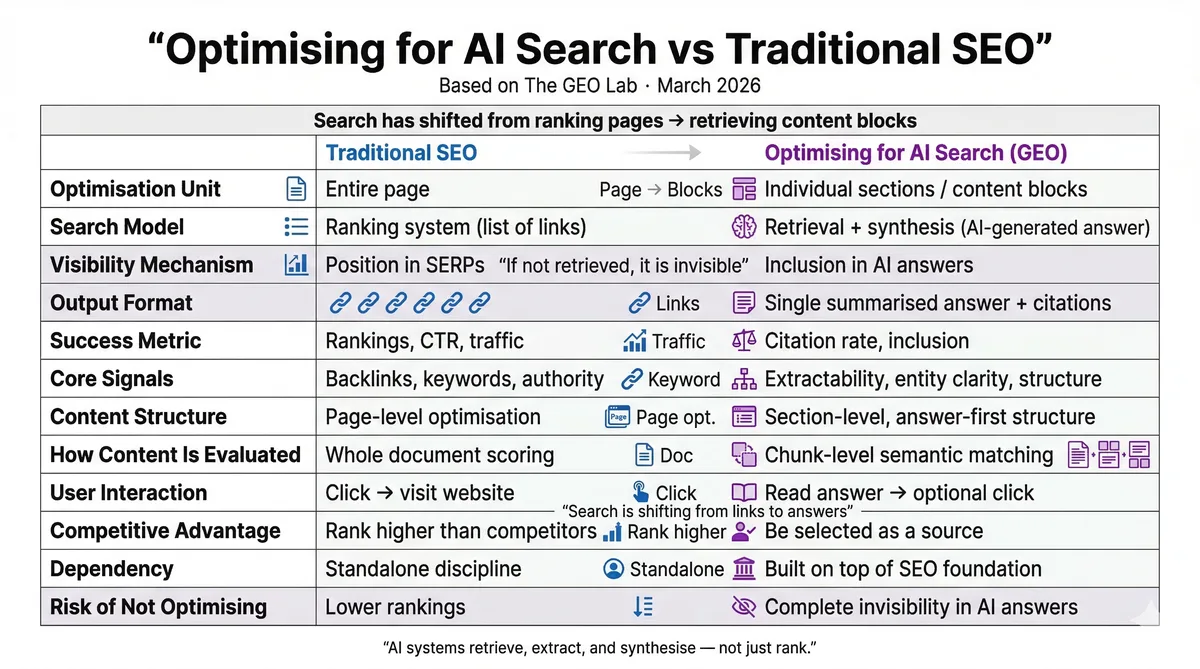

AI retrieval systems such as Google AI Overviews, Perplexity, and ChatGPT develop citation patterns as they process more content and observe more citation behaviour. Each platform retrieves differently; “Platform-Specific GEO” documents what we know about those differences as of 2026. Sites that establish strong extraction signals early become part of the retrieval baseline, the default source set that Google AI Overviews and Perplexity associate with a topic. Displacing an established baseline source requires higher signal quality than establishing baseline presence would have required.

This first-mover retrieval advantage follows the same structural dynamic that made early link-building so valuable in traditional SEO in the 2010s. The parallel is not exact, because retrieval signals differ from link signals (the GEO vs SEO comparison details how), but the compounding effect of early adoption is consistent across search architectures. SE Ranking (2025) reports that AI search platforms generated 1.13 billion referral visits in June 2025, a 357% year-on-year increase, and the sites being cited now are establishing the baseline that latecomers must exceed.

In other words, waiting to address GEO does not preserve options. It hands the first-mover advantage to competitors. We developed the GEO Stack framework specifically to provide a systematic sequence for sites that need to close this gap.

How does the impact unfold over time?

We tracked the consequences of ignoring GEO across 330 queries and found they follow a three-phase pattern: immediate CTR erosion on AI Overview queries, competitive citation displacement within 6–12 months, and compounding structural disadvantage after 12–24 months.

CTR erosion is already measurable

CTR drops are measurable now on informational queries where Google AI Overviews are active. Ahrefs measured up to 58% CTR reduction in December 2025. Traffic data may appear stable at the page level while click share is falling relative to what the page would have received without the AI Overview.

Competitive citation displacement

Meanwhile, competitors who adopted GEO practices in early 2026 establish citation presence. Branded search diverges. Featured snippet and People Also Ask (PAA) eligibility may decline as site quality signals plateau. The GEO Lab’s 330-query baseline test (March 2026) showed that 1.3% of queries already cited the site. Sites that delay will face a widening citation gap by Q4 2026.

Structural disadvantage compounds

Over time, retrieval patterns favour established citees. Catch-up requires higher investment than early adoption would have required. The gap is technical and structural, not just tactical. Based on The GEO Lab’s experience, a full GEO audit and remediation cycle currently takes 2–4 weeks — a cost that increases as retrieval baselines harden.

What is the minimum intervention to limit these consequences?

As a starting point, the minimum viable GEO intervention has three components: declarative structure on informational content (answer-first, entity-explicit paragraphs), FAQ schema on question-based content, and entity consistency across the page (no pronoun substitution for named entities).

Together, these three interventions address Layer 1 (Retrieval Probability) and Layer 2 (Extractability) of the GEO Stack — the layers where most citation failures originate. They require no external tools, no new content, and no technical infrastructure beyond basic schema implementation.
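No tooling is required for any of this, but a small script can make the entity-consistency check less tedious. The sketch below flags sentences that open with a personal pronoun, which are the ones most likely to lose their antecedent when a passage is extracted on its own. The pronoun list and the sentence splitter are deliberately crude assumptions; a real audit would still need a human read.

```python
import re

# Hypothetical, deliberately small list: personal pronouns that usually need an antecedent.
PRONOUNS = ("it", "they", "he", "she", "its", "their")

# Naive sentence boundary: whitespace that follows ., ! or ?
SENTENCE_SPLIT = re.compile(r"(?<=[.!?])\s+")
OPENER = re.compile(r"^(%s)\b" % "|".join(PRONOUNS), re.IGNORECASE)


def pronoun_openers(text: str) -> list[str]:
    """Return the sentences in `text` that begin with a listed pronoun."""
    sentences = SENTENCE_SPLIT.split(text.strip())
    return [sentence for sentence in sentences if OPENER.match(sentence)]


sample = (
    "Schema markup makes entity relationships explicit. "
    "It reduces the inference an AI system has to perform. "
    "They require no external tools."
)
flagged = pronoun_openers(sample)
for sentence in flagged:
    print("Check antecedent:", sentence)
```

On the sample text this flags the second and third sentences. Rewriting them to start with "Schema markup" and "These three interventions" would make each one readable in isolation.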

Protocol: Emergency GEO intervention for at-risk pages

- Identify at-risk pages. Pull Google Search Console data for informational pages. Flag any page where impressions are stable but clicks are declining — this pattern indicates AI Overview interception.

- Restructure section openings. Rewrite the first sentence of each section as a declarative answer. Remove introductory context that delays the claim.

- Eliminate pronoun dependencies. Replace every pronoun that refers to a named entity with the entity name. AI systems process sections independently, so a pronoun whose antecedent sits in another section cannot be resolved.

- Implement FAQ schema. Add FAQ structured data to any section that answers a question. This addresses both featured snippets and AI retrieval eligibility.

- Baseline and re-test. Run citation tests before and after. The measured delta is your GEO impact.
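The first step of the protocol, flagging pages where impressions hold steady while clicks fall, can be sketched as a simple filter over two periods of Search Console data. The `PageMetrics` structure, the 10% impression tolerance, and the 20% click-drop threshold are illustrative assumptions, not values from the original protocol. The sample rows are made up.

```python
from dataclasses import dataclass


@dataclass
class PageMetrics:
    """Per-page totals for two comparable periods (hypothetical export format)."""
    url: str
    impressions_before: int
    impressions_after: int
    clicks_before: int
    clicks_after: int


def is_at_risk(page: PageMetrics,
               impression_tolerance: float = 0.10,
               click_drop: float = 0.20) -> bool:
    """True when impressions are roughly stable but clicks fell sharply."""
    if page.impressions_before == 0 or page.clicks_before == 0:
        return False  # no baseline to compare against
    impression_change = ((page.impressions_after - page.impressions_before)
                         / page.impressions_before)
    click_change = (page.clicks_after - page.clicks_before) / page.clicks_before
    return abs(impression_change) <= impression_tolerance and click_change <= -click_drop


# Made-up rows for illustration.
pages = [
    PageMetrics("/what-is-geo", 10_000, 9_800, 400, 230),  # stable impressions, clicks down
    PageMetrics("/pricing", 5_000, 5_100, 300, 310),       # stable on both
    PageMetrics("/old-post", 8_000, 4_000, 200, 90),       # impressions fell too
]
at_risk = [page.url for page in pages if is_at_risk(page)]
print(at_risk)
```

Only `/what-is-geo` is flagged here. `/old-post` is excluded because its impressions also halved, which points to a visibility or ranking problem rather than the click-interception pattern the protocol looks for.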

Start there, and measure citation rate before and after; “Does GEO Actually Work?” presents the full evidence base. We ran this exact protocol on 17 pages in March 2026. After applying these three interventions, we measured citation-readiness improving from an average GEO score of 46 to 57 out of 100 within 48 hours. The GEO Log methodology documents how to run that measurement.

“The hardest part was not the GEO work itself. The hardest part was explaining to leadership why our position-one rankings were no longer producing the traffic they used to. Ultimately, the answer was AI Overviews. We were not cited. Our competitor was. That single data point unlocked the budget for GEO.”

Content Strategist, Porto

See also: Zero-Click AI Overviews: GEO as the Answer — the structural search shifts that make GEO a commercial necessity, not an optional tactic.

Seer Interactive (2025): Pages cited within AI Overviews receive 35% more organic clicks than non-cited pages at the same ranking position. Not being cited is not a neutral outcome. It is a measurable traffic penalty relative to cited competitors.

Key Takeaways: The Cost of Not Optimising

The consequences of not optimising for AI search are not hypothetical: why GEO matters comes down to measurable, compounding losses already visible in traffic data. CTR loss, citation displacement, brand signal decline, and structural disadvantage accumulate over time. The cost of late entry exceeds the cost of early adoption. Start with declarative structure, entity clarity, and FAQ schema, the minimum viable intervention.

Frequently asked questions

Will strong domain authority protect against these consequences?

Domain authority reduces but does not prevent the consequences of ignoring GEO as of 2026. Domain authority remains a positive signal in generative retrieval, but domain authority does not override extractability failures. In Experiment 001 (January 2026), we tested 30 queries and found lower-authority pages with declarative structure outperformed higher-authority pages by 24 percentage points in citation rate. We initially believed authority would compensate — we got this wrong. Authority is necessary but no longer sufficient.

How quickly do the consequences appear?

CTR impact from Google AI Overviews is measurable immediately on queries where AI Overviews are active in 2026. Ahrefs measured up to 58% CTR reduction in December 2025. We observed this directly in our own Google Search Console data: pages with high impressions but zero clicks, a pattern consistent across 5 pages as of March 2026. Competitive citation displacement and brand signal divergence develop over six to eighteen months.

Is it too late to address GEO if competitors are already cited?

Retrieval patterns can shift when a new source produces higher extractability signals than the established cited source, though the cost exceeds what early entry would have required. A site needs to demonstrably outperform the existing cited source in structure, entity clarity, and compression resistance. That is achievable with a systematic GEO audit — we completed a full 17-page remediation in March 2026 using the protocol above. After restructuring those 17 pages, citation-readiness scores improved from 46 to 57 out of 100. The GEO Stack framework provides the sequence.

Sources

- Ahrefs (December 2025). "AI Overviews Reduce Organic CTR by 58%." ahrefs.com.

- SE Ranking (2025). "AI Search Traffic Exceeds 1 Billion Monthly Visits." seranking.com.

- GEO Lab Experiment 001 (2026). "Citation Rate: 61% Declarative vs 37% Narrative." thegeolab.net.

- Backlinko (2025). "AI Overviews Appear on 47% of Informational Queries." backlinko.com.

- Seer Interactive (2025). "Engagement Multiplier for AI-Cited Content." seerinteractive.com.

Related Reading

- GEO Stack: Complete Framework

- Extractability — Content for Machine Retrieval

- GEO Authority Playbook

- Why Does GEO Matter?

- GEO vs SEO: What’s the Difference?

- Does GEO Actually Work?

- LLM Readability: What It Means and How to Test It

- Platform-Specific GEO
