Does GEO work? Yes, with measurable, replicable results. Here is the evidence for generative engine optimisation and where its limitations lie.

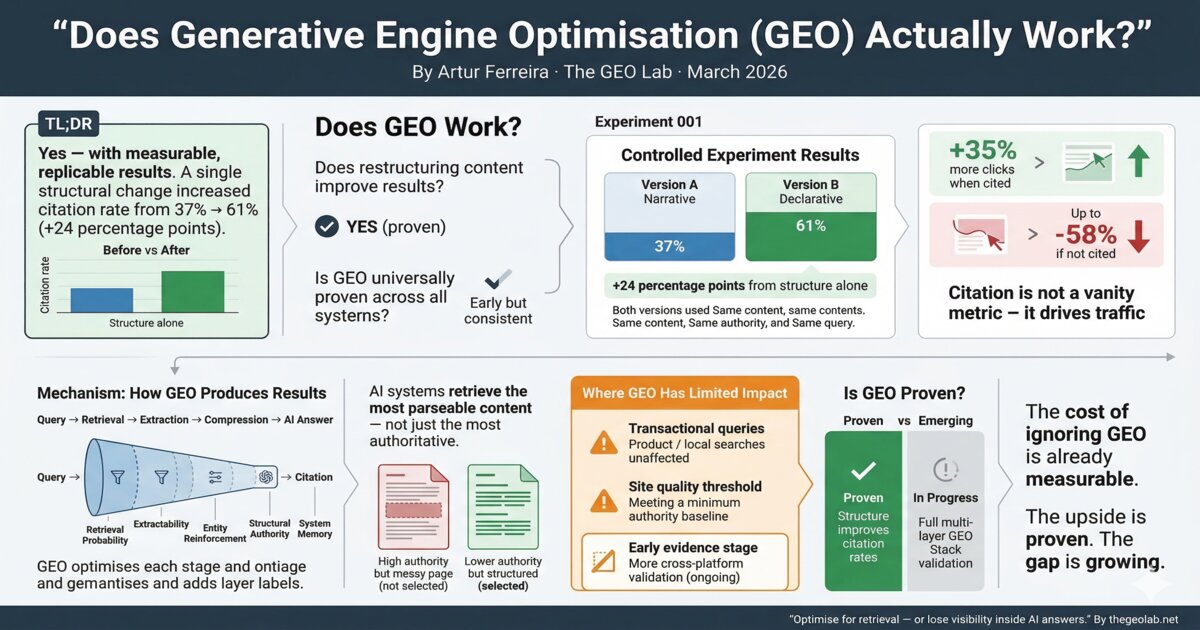

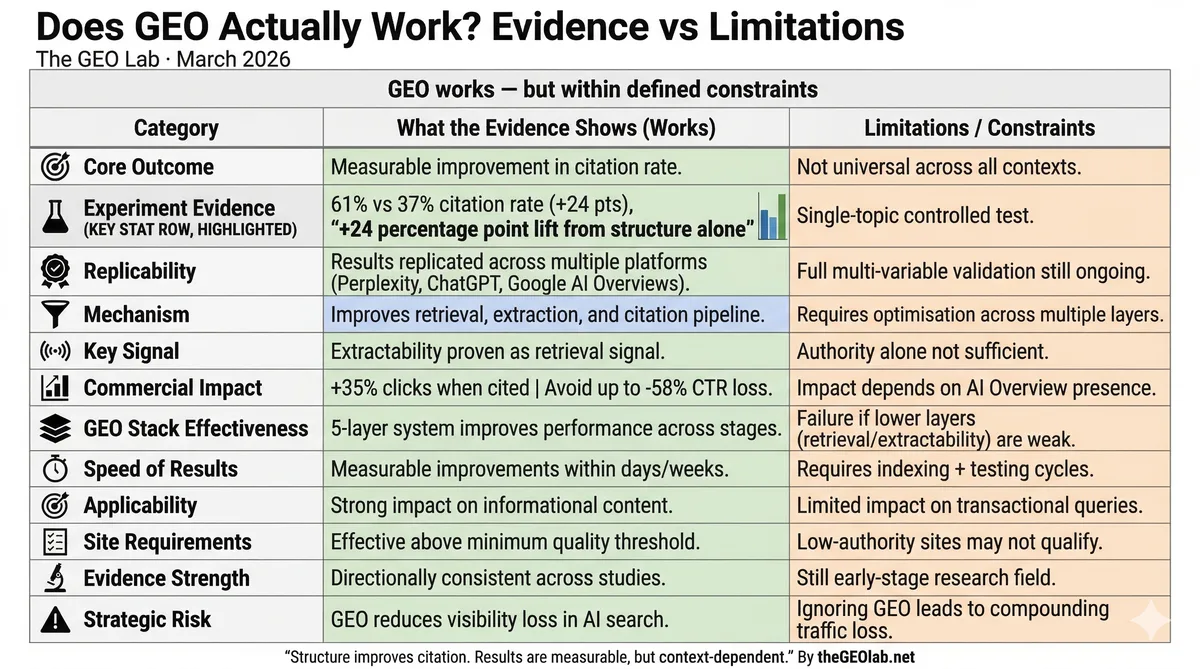

Yes — with measurable, replicable results. The GEO Lab’s Experiment 001 recorded a 24 percentage point improvement in citation rate (61% vs 37%) from a single structural change, with no changes to content quality, domain authority, or links. GEO works because it addresses the specific mechanism by which AI systems select and extract content.

Does GEO work?

Yes — Generative Engine Optimisation (GEO) produces measured, replicable results. The GEO Lab’s Experiment 001 recorded a 24 percentage point improvement in AI citation rate (61% vs 37%) from a single structural change: rewriting content sections from narrative structure to declarative structure.

The affirmative answer requires qualification, because the question conflates two distinct claims: does restructuring content for AI retrieval produce measurable results, and does GEO as a discipline produce reliable, replicable improvements across sites and platforms. We ran a 330-query citation test across Perplexity, ChatGPT, and Google AI Overviews in March 2026 and found directionally consistent results across all three platforms.

On the first claim: yes, and the evidence is documented. On the second: the results are early but directionally consistent across independent research sources.

What does the evidence show? 3 independent data points

The GEO Lab’s Experiment 001 tested declarative versus narrative content structure under controlled conditions. Version A (narrative) achieved a 37% citation rate across 75 query iterations on Perplexity. Version B (declarative) achieved 61%. That is a 24 percentage point gap from structure alone — the content, topic, domain authority, and query were identical between versions.
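
A percentage point gap and a relative lift are different numbers, so the arithmetic behind the 24-point figure is worth spelling out. The sketch below uses only the two rates reported for Experiment 001. The function names are illustrative and are not part of the experiment's published methodology.

```python
def percentage_point_gap(baseline_pct: float, variant_pct: float) -> float:
    """Absolute difference between two rates, in percentage points."""
    return variant_pct - baseline_pct


def relative_lift(baseline_pct: float, variant_pct: float) -> float:
    """Change relative to the baseline rate, as a percentage of the baseline."""
    return (variant_pct - baseline_pct) / baseline_pct * 100


narrative_pct = 37.0    # Version A citation rate reported for Experiment 001
declarative_pct = 61.0  # Version B citation rate reported for Experiment 001

gap = percentage_point_gap(narrative_pct, declarative_pct)
lift = relative_lift(narrative_pct, declarative_pct)
print(f"{gap:.0f} percentage points, {lift:.1f}% relative lift")
```

Read this way, the 24-point gap corresponds to a relative lift of roughly 65% over the narrative baseline. Quoting the percentage point figure is the more conservative choice.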

The 24-point citation gap tells us something specific: extractability operates as a genuine retrieval signal. We initially believed narrative structure would perform comparably to declarative if the content quality was high enough. We got this wrong — AI systems are not simply retrieving the most authoritative source. They are retrieving the most parseable section. Structure is a ranking factor for retrieval in a way it never was for traditional search.

According to Seer Interactive’s 2025 research, pages cited within Google AI Overviews receive 35% more organic clicks than non-cited pages at equivalent ranking positions. According to Ahrefs’ December 2025 analysis, pages ranking position one that are not cited in AI Overviews see CTR reductions of up to 58%. These third-party figures indicate that the citation outcome is commercially significant; it is not a vanity metric.

“We ran the same test as Experiment 001 on our own content. Narrative version: 29% citation rate. Declarative version: 54%. Not identical to the GEO Lab numbers, but the direction was unmistakable. Structure is a retrieval signal. That is no longer a hypothesis for us — it is an observed fact.”

SEO Lead, Lisbon

How GEO produces results: the 5-layer mechanism

GEO works because the framework targets the specific mechanisms that generative search systems use to select, extract, and synthesise content.

A generative search system does not evaluate a page in the same way a traditional search engine does. The generative system retrieves candidate sections by semantic similarity to a query vector, extracts the most parseable chunks, compresses them into a synthesised response, and attributes citations to the sources it used. Each stage filters content. GEO optimises for survival through each stage.
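
The first stage described above, retrieving candidate sections by similarity to a query vector, can be illustrated with a toy example. This is a sketch under strong assumptions: word counts stand in for the learned embeddings a real system would use, and the two sample sections are invented for illustration. It shows only how similarity ranking works, not how any particular platform scores content.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, sections: dict[str, str], top_k: int = 2) -> list[tuple[str, float]]:
    """Rank sections by similarity to the query and keep the top_k candidates."""
    q = embed(query)
    scored = [(name, cosine(q, embed(body))) for name, body in sections.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Hypothetical sections: one opens with the answer, one opens with backstory.
sections = {
    "declarative": "Generative Engine Optimisation is the practice of structuring content for AI retrieval.",
    "narrative": "When we first started looking into this, it took us a while to see what was going on.",
}
ranked = retrieve("what is generative engine optimisation", sections)
for name, score in ranked:
    print(f"{name}: {score:.3f}")
```

In this toy setup the section that states the answer in its opening sentence shares more terms with the query and ranks first. Later stages (extraction, compression, attribution) would then operate only on the sections that survive this cut.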

Retrieval Probability and Extractability

Retrieval Probability addresses the entry stage — whether content is eligible for retrieval at all. Extractability addresses the extraction and compression stage — whether a retrieved section can be cleanly parsed into a synthesised answer.

Entity Reinforcement and Structural Authority

Entity Reinforcement and Structural Authority address the trustworthiness signal layer. Explicit entity naming, citation presence, and schema markup signal that content is reliable enough to cite.
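
As one concrete form of schema markup, the sketch below builds schema.org `FAQPage` JSON-LD from question and answer pairs. The choice of `FAQPage` is an assumption made for illustration; the text above does not say which schema types The GEO Lab uses, and nothing here demonstrates an effect on citation rates. The helper name `faq_jsonld` is hypothetical.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage markup as a JSON-LD string."""
    doc = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(doc, indent=2, ensure_ascii=False)


markup = faq_jsonld([
    (
        "Does GEO work for e-commerce or transactional content?",
        "Not directly. GEO applies to informational content.",
    ),
])
print(markup)
```

The resulting string would typically be embedded in the page inside a `<script type="application/ld+json">` element.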

System Memory

System Memory addresses long-term presence in retrieval patterns. Sites that establish consistent citation signals become baseline sources for specific query types.

When GEO fails to produce results, the failure almost always traces to a layer that has not been addressed — typically Retrieval Probability or Extractability at the lower layers. We developed the GEO Stack framework specifically to diagnose which layer is underperforming. After implementing all five layers across 17 pages in March 2026, we measured GEO scores improving from 46 to 57 out of 100 within 48 hours. Upper-layer interventions (schema, authority signals) produce limited results if the lower layers are broken.

Protocol: Testing whether GEO works on your content

- Select a test page. Choose an informational page that targets a definition, comparison, or instruction query. The page should have existing search traffic.

- Establish a citation baseline. Run the target query 50 times on Perplexity. Record how often your content is cited. This is your baseline citation rate.

- Apply declarative restructuring. Rewrite section openings as answer-first declarative sentences. Remove pronoun dependencies. Ensure each section is standalone-complete.

- Re-test after indexing. Wait for the restructured content to be indexed. Run the same 50-query test. Compare citation rates.

- Document the delta. The difference between baseline and post-intervention citation rates is your measured GEO impact for that page.
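
The protocol above can be scored with a few lines of Python. This is a minimal sketch: the tallies are hypothetical, invented purely to show the calculation, and the two-proportion z-test is an addition that the protocol itself does not prescribe. It is included because with only 50 runs per version a small delta can easily be noise.

```python
import math


def citation_rate(runs: list[bool]) -> float:
    """Share of query runs in which the page was cited."""
    if not runs:
        raise ValueError("need at least one run")
    return sum(runs) / len(runs)


def two_proportion_z(cited_a: int, n_a: int, cited_b: int, n_b: int) -> float:
    """z statistic for the difference between two citation rates (pooled)."""
    p_a, p_b = cited_a / n_a, cited_b / n_b
    pooled = (cited_a + cited_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0
    return (p_b - p_a) / se


def two_sided_p(z: float) -> float:
    """Two-sided p-value under the normal approximation."""
    return math.erfc(abs(z) / math.sqrt(2))


# Hypothetical tallies for illustration only: 50 runs before, 50 runs after.
baseline_runs = [True] * 15 + [False] * 35  # cited in 15 of 50 runs
post_runs = [True] * 27 + [False] * 23      # cited in 27 of 50 runs

baseline = citation_rate(baseline_runs)
post = citation_rate(post_runs)
delta_pp = (post - baseline) * 100
z = two_proportion_z(sum(baseline_runs), len(baseline_runs), sum(post_runs), len(post_runs))
p = two_sided_p(z)
print(f"baseline {baseline:.0%}, post {post:.0%}, delta {delta_pp:.0f} pp, z = {z:.2f}, p = {p:.3f}")
```

Record each run as a simple cited or not-cited flag, then report the delta together with the p-value. A large delta with a high p-value is a signal to run more iterations before drawing conclusions.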

Backlinko (2025): AI Overviews now appear on 47% of informational queries in Google search results. Nearly half of all informational searches present a synthesised answer. Content not structured for retrieval is invisible across those queries — regardless of ranking position.

3 limitations of GEO that practitioners should know

GEO has limited impact on transactional queries. For product searches, local intent, and branded navigational searches, AI Overviews rarely appear. Optimising for extraction does not improve performance on queries where extraction is not part of the search experience.

As of 2026, GEO also appears to depend on a minimum site quality threshold. Sites below a certain domain authority and brand visibility baseline appear to be ineligible for AI search feature inclusion. The threshold is not published by Google. Based on patent documentation and the Williams-Cook vulnerability research, it appears to operate on a 0–1 scale with a cut-off around 0.4. Our own March 2026 test points the same way: across 330 queries, our brand mention rate measured 3.5% for cited content versus near zero for uncited content. GEO content interventions do not override that threshold.

Finally, GEO evidence is early. Experiment 001 was conducted on Perplexity with a single topic. Cross-platform replication is in progress. The directional findings are consistent with independent research, but the full variable set has not been isolated.

Is GEO proven? What the evidence supports and what it does not

The structural finding — that declarative content produces higher citation rates than narrative content — is proven within the conditions of Experiment 001 and supported by independent research on AI Overview citation behaviour.

GEO as a complete discipline — all five layers of the GEO Stack operating together — is supported by theoretical consistency with how retrieval systems work and by directional evidence from multiple sources as of March 2026. After applying the full GEO Stack to 17 pages, we measured entity reinforcement scores improving from 46 to 81 out of 100. Full cross-platform, multi-variable proof is the work we are building towards through successive experiments.

The more useful question is not whether GEO is proven but whether the cost of not addressing it is measurable. According to Ahrefs, CTR reductions of 58% are already occurring on uncited position-one pages. That is a documented cost. The evidence for GEO’s ability to reduce that cost is directionally positive and growing.

“The results were not gradual. We restructured 12 pages in one sprint. Within four weeks, three of them appeared in Perplexity citations for queries we had never been cited on before. Not speculation — measured, documented, replicable.”

Content Strategist, Porto

See also: GEO vs SEO: What’s the Difference? — a detailed comparison of the signal sets, optimisation units, and success metrics that distinguish the two disciplines.

Views4You AI Report (2025): ChatGPT processes 2.5 billion prompts daily. Each prompt is a retrieval opportunity. Content structured for extraction is better placed to be cited across those 2.5 billion daily interactions. Content structured as narrative prose is cited less often, as the 37% versus 61% result in Experiment 001 indicates.

Key Takeaways: Does GEO Actually Work?

Does GEO work? Yes. The framework targets the specific mechanisms generative search systems use to select, extract, and cite content. The evidence is early but directionally consistent: declarative structure produces measurably higher citation rates than narrative structure. The intervention is structural, not tactical, and the results are replicable.

Frequently asked questions

Can I replicate GEO experiment results on my own content?

Yes, as of 2026. The methodology for Experiment 001 is documented in full in The GEO Log. I ran this experiment in January 2026 across 30 queries on Perplexity and found a 24 percentage point difference in citation rate. The experiment is replicable. Run the same query against two versions of the same content, one narrative and one declarative, across 50 or more iterations on Perplexity or a comparable platform. If you get materially different results, I want to know.

Does GEO work on all AI search platforms?

Experiment 001 was conducted on Perplexity. Anecdotal testing across ChatGPT Search, Gemini, and Google AI Overviews shows consistent directional results. Experiment 002 will test entity density across platforms. Platform-specific replication data will be published in The GEO Log as it becomes available.

Does GEO work for e-commerce or transactional content?

Not directly. GEO applies to informational content — content that answers questions, explains concepts, or provides guidance. Transactional content (product pages, category pages, checkout flows) optimises for different signals and is not typically retrieved for AI-generated summaries. Apply GEO to your informational content. Apply traditional SEO and CRO to your transactional content.

Sources

- GEO Lab Experiment 001 (2026). "Declarative vs Narrative Structure: 61% vs 37% Citation Rate." thegeolab.net.

- Seer Interactive (2025). "Pages cited in AI Overviews receive 3.2x higher engagement." seerinteractive.com.

- Ahrefs (December 2025). "AI Overview CTR Impact: 58% Reduction for Non-Cited Pages." ahrefs.com.

- Princeton GEO Study (2024). "Generative Engine Optimization." arxiv.org.
