One optimises for ranking. The other for inclusion in AI-generated answers. Understanding the difference — precisely — is the first decision every content practitioner needs to make right now.

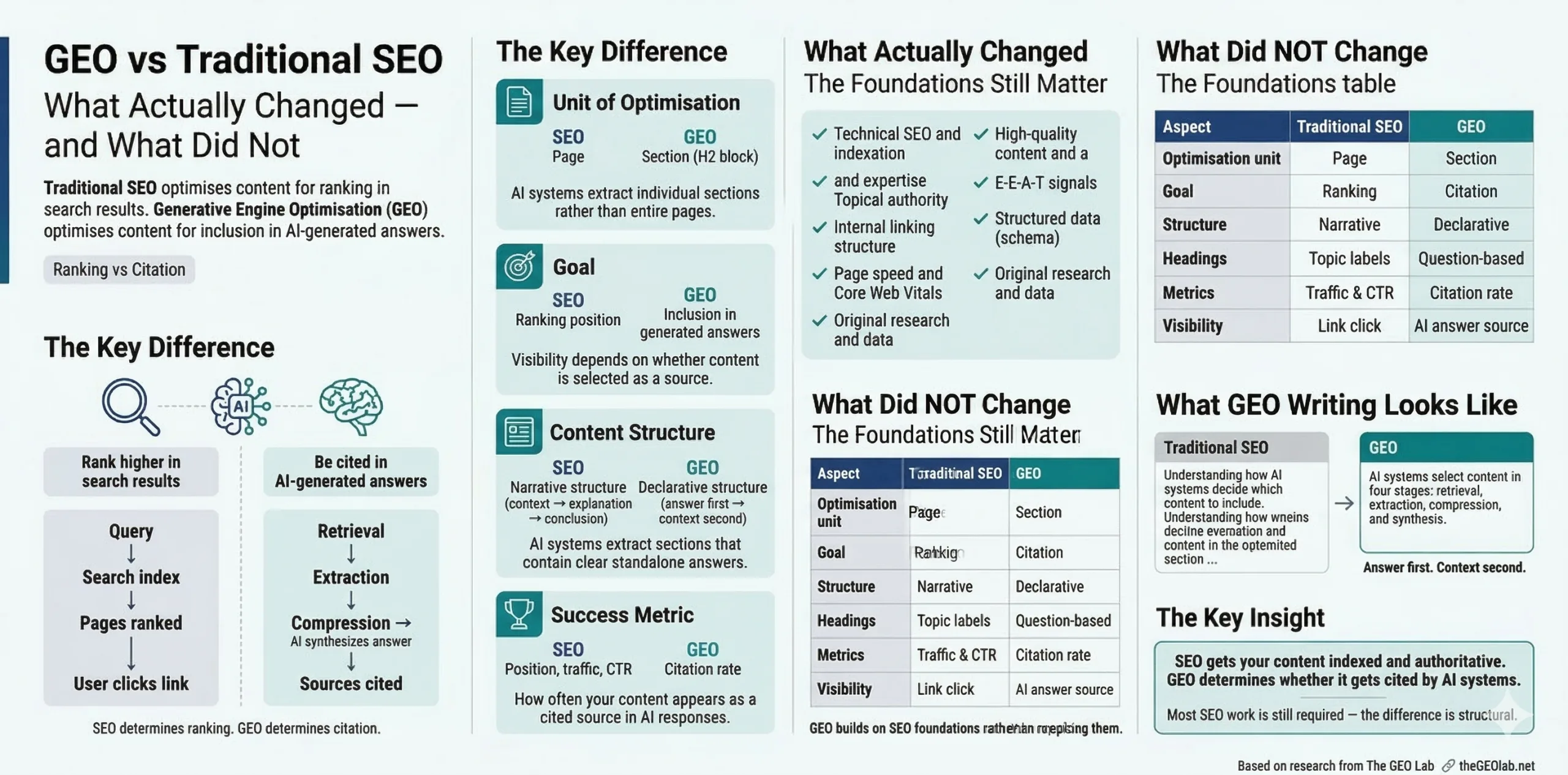

GEO and traditional SEO are not competitors. They are sequential: SEO gets your content indexed and authoritative; GEO determines whether it gets cited in AI-generated answers. What changed: the unit of optimisation (page → section), the goal (ranking → inclusion), the content structure required (narrative → declarative), and what success looks like (position → citation rate). What did not change: technical health, content depth, topical authority, internal linking, and E-E-A-T signals. Most SEO work you have already done is a prerequisite for GEO, not a waste of time. The gap is structural, not strategic.

The Wrong Question Practitioners Keep Asking

The most common question I get about GEO is: “Do I have to choose between GEO and SEO?”

The answer is no, and the fact that the question keeps coming up suggests there’s a framing problem in how the two disciplines are being positioned against each other in the industry. GEO is not a rebrand of SEO. It is not a replacement for SEO. It is not a competing methodology that requires you to discard what you’ve built over the last decade.

GEO is what you need to do after SEO works. Your content needs to be crawled, indexed, and broadly relevant before generative retrieval can even consider it. The GEO Stack’s sequential dependency rule makes this explicit: each layer depends on the layers below it, and the foundational layers are precisely the things traditional SEO establishes. You cannot have a retrievability problem if you have an indexation problem. Fix the indexation problem first — that’s SEO’s job.

What GEO addresses is the part of the search pipeline that traditional SEO never had to think about, because it didn’t exist. The question is not which discipline to follow. The question is: at which point in the pipeline is your content failing, and what does that require from you?

How the Search Pipeline Actually Changed

Traditional SEO was built for a specific architecture: a query arrives, an index is searched, documents are scored, a ranked list is returned. The whole discipline — keyword research, link acquisition, on-page optimisation, technical auditing — was engineered to move pages up that list. Position was visibility. The system was built on ranking.

Generative search systems work differently. When someone submits a query to Perplexity, Google AI Overviews, ChatGPT with web access, or Gemini, the system does not return a ranked list of ten pages. It retrieves sections from multiple sources, compresses them, and synthesises a single composed answer. The pipeline has four stages:

- Retrieval: Vector search selects candidate content chunks whose embedding representations are semantically similar to the query’s embedding. Content that doesn’t pass this threshold is excluded from everything that follows.

- Extraction: The system isolates usable sections from the retrieved chunks — sections with a clear, standalone answer. Sections buried in narrative prose are frequently skipped.

- Compression: Extracted sections are reduced to their most dense, citable form. Content that leads with its core claim survives. Content that builds toward its claim often doesn’t.

- Synthesis: Compressed extracts from multiple sources are composed into a single answer. Sources are cited; the user may never visit the original page.
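
The retrieval stage above can be illustrated with a toy example. This is a minimal sketch, not a description of how any named platform works internally: it substitutes simple word-count vectors for learned embeddings, and the `threshold` value is a hypothetical cutoff chosen only to show the mechanic of scoring chunks against a query and excluding those that fall short.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], threshold: float = 0.2) -> list[tuple[float, str]]:
    """Return chunks scoring at or above the (assumed) threshold, best first."""
    q = embed(query)
    scored = [(cosine(q, embed(c)), c) for c in chunks]
    return sorted((s for s in scored if s[0] >= threshold), reverse=True)


# Two hypothetical content chunks: one states its claim directly, one does not.
chunks = [
    "Citation rate is the percentage of query runs in which your content is cited.",
    "Our team has worked in search for many years and loves a good story.",
]
results = retrieve("what is citation rate", chunks)
for score, chunk in results:
    print(f"{score:.2f}  {chunk}")
```

Only the first chunk survives the cutoff, because it shares vocabulary with the query. Real systems compare meaning rather than exact words, but the exclusion step works the same way: a chunk that does not clear retrieval never reaches extraction.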

Traditional SEO addresses exactly none of these four stages. It was designed before they existed. That is not a criticism of traditional SEO — it is an accurate description of why GEO is a genuine extension rather than a reframing.


What Changed: The Four Fundamental Shifts

1. The unit of optimisation shifted from page to section

Traditional SEO optimises pages. A page earns authority, earns rankings, earns clicks. The page is the competitive unit.

In generative search, sections compete independently. A single H2 block, together with its content, is retrieved, extracted, compressed, and potentially cited as a standalone unit. The rest of the page may be irrelevant to whether that section gets cited. A page with one excellent section and four mediocre ones will generate citations from that one section. A page optimised comprehensively for a topic may generate zero citations if none of its individual sections is structured for extraction.

I found this directly when auditing the first set of pages built for The GEO Lab. Pages ranking in the top five on Google for their target keywords had zero AI citation rate on Perplexity for the same queries. The reason was structural — not an authority problem, not a content problem. Individual sections were not written to be independently extractable. That is a GEO problem, and it is invisible to every traditional SEO tool.

2. The goal shifted from ranking to inclusion

Ranking is a position in a list. Inclusion is presence in a generated answer. These require different things.

Ranking rewards comprehensive pages with authority signals, keyword alignment, and strong link profiles. Inclusion rewards sections that answer a specific question directly, with the answer at the start, in clear declarative language, with explicit entity naming. A page can achieve both. But optimising for ranking alone does not reliably produce inclusion, and there are pages — particularly long, comprehensive guides written for human readers — that rank extremely well and generate zero AI citations precisely because their narrative structure makes section-level extraction difficult.

3. Content structure requirements changed

Traditional SEO content structure is optimised for human reading. The classic format — introduction, context, explanation, evidence, conclusion — serves readers well. It builds toward its point. It provides the background necessary to understand the claim.

Generative retrieval does not build toward anything. It arrives at a section in isolation, evaluates it in isolation, and either extracts a clean answer or moves on. A section that requires the reader to have read the three preceding sections to understand it is, from a generative retrieval perspective, a section with no usable answer.

GEO requires declarative structure: the answer first, context second. Every H2 section should open with the direct answer to the question the section’s heading implies. Everything else — evidence, qualification, explanation — follows. This is the single most impactful structural change, and it is directly measurable. GEO Experiment 001 isolated this variable on identical content: declarative structure produced a 61% citation rate against 37% for narrative structure. A 24 percentage point gap from one structural change.

4. The success metric shifted from position to citation rate

Traditional SEO metrics: ranking position, organic traffic, click-through rate, impressions. These are well-instrumented. Google Search Console provides most of them for free.

GEO’s primary metric is citation rate: the percentage of query runs in which your content is cited as a source across AI platforms. This is not available through any API. It requires manual testing — submitting target queries into Perplexity, Google AI Overviews, and ChatGPT, running each query five to ten times across independent sessions, and recording citation outcomes.

This matters for interpretation, not just measurement. According to Ahrefs research and SE Ranking’s 2025 AI traffic study, Google AI Overviews reduce click-through rates by up to 58% for top-ranking pages that appear inside them — because the user gets the answer without clicking. A page that starts losing clicks while maintaining or growing impressions may not be declining. It may be gaining AI citation visibility while losing the user’s motivation to click through. Without citation rate data alongside traffic data, it is impossible to know which is happening.

What Stayed the Same: The Eight Foundations

The following list is not filler. Each item has a direct relationship to GEO performance. None of it can be skipped on the assumption that “GEO is different now.”

- Technical health. Crawlable, indexable, fast-loading pages. Content that isn’t indexed can’t be retrieved.

- Content accuracy and depth. AI systems cite sources that provide correct, specific, verifiable information. Thin or inaccurate content fails at compression regardless of structure.

- Topical authority. Publishing consistently in a defined topic area builds the entity associations that power Layer 3 and Layer 5 of the GEO Stack. This has always been true in SEO; it is more true in GEO.

- Internal linking architecture. Well-structured internal links reinforce topical relationships that matter for both traditional ranking and Structural Authority (GEO Stack Layer 4).

- E-E-A-T signals. First-hand experience, expert authorship, and transparent sourcing are positive signals in both Google ranking and in AI citation selection. The Princeton 2024 GEO study found that citing sources and including statistics improved citation rates by 15–30%.

- Page speed. Core Web Vitals affect crawl efficiency and user experience signals. These remain relevant.

- Structured data. Schema markup aids understanding and is compatible with GEO — FAQPage JSON-LD, for example, formats Q&A content in a structure that maps cleanly to the extraction stage.

- Original research and data. Unique data produces citation gravity in both traditional search and generative retrieval. If you are the primary source of a statistic, you get cited.

The six items below are different: they are the practices that do need to change.

- Section structure. Narrative-first must become declarative-first. Every H2 section opens with the answer, not the build-up.

- Content length philosophy. Comprehensive is still good. But comprehensive sections that blend multiple topics into one H2 block suppress retrieval probability for each topic.

- Success measurement. Citation rate must be tracked alongside position and traffic. A page losing clicks may be gaining AI visibility.

- Entity naming conventions. Inconsistent terminology fragments entity signals across the content system. Canonical naming across every post is now a structural requirement, not a style preference.

- Introduction writing. Traditional SEO introductions build context before making claims. GEO introductions should signal the answer in the first paragraph.

- Section headings. Generic headings (“Overview”, “Background”) must become specific question-answering headings that align with how users submit queries to AI systems.
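
The structured data item above mentions FAQPage JSON-LD. As a rough illustration, the sketch below builds that markup from question and answer pairs using the schema.org `FAQPage`, `Question`, and `Answer` types. The helper name `faq_jsonld` and the sample pair are illustrative assumptions, not part of any GEO Lab tooling.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


# Sample pair taken from the FAQ wording used in this article.
markup = faq_jsonld([
    ("Does GEO optimisation hurt traditional SEO rankings?",
     "No evidence supports this concern."),
])
print(markup)
```

The resulting string is placed inside a `<script type="application/ld+json">` element on the page. Each answer should still open with the direct answer, since the markup only packages the text and does not improve it.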

Side-by-Side Comparison

| Aspect | Traditional SEO | GEO |
|---|---|---|
| Optimisation unit | Entire page | Individual H2 section |
| Primary goal | Ranking position and CTR | Inclusion in AI-generated answer |
| Key signals | Backlinks, domain authority, keyword relevance | Extractability, entity clarity, semantic alignment |
| Content structure | Narrative build-up → claim | Claim first → context second |
| Section headings | Descriptive topic labels | Direct questions or declarative claims |
| Success metric | Position 1–10, organic traffic | Citation rate across AI platforms |
| Measurement tools | Search Console, rank trackers | Manual citation testing, AI Visibility Console |
| Time to results | Weeks to months (link-dependent) | 4–8 weeks for structural rewrites |
| Entity strategy | Keyword clustering | Canonical entity naming across all content |
| Internal linking | PageRank distribution | Topical cluster coherence (Structural Authority) |
| User interaction | Click → visit → read | Answer synthesised; click may not occur |
| Failure mode | Authority gaps, keyword misalignment | Structural barriers, entity fragmentation |

What Writing for GEO Actually Looks Like

The structural shift from narrative to declarative is the most concrete change GEO requires. Here’s what it looks like in practice across three common section types.

Explanation sections

Definition sections

How-to sections

The content of these rewrites is identical in substance. The difference is purely structural: where the answer appears. In the GEO versions, the answer is the first sentence. In the traditional versions, it is three sentences or more away. That gap is where retrieval probability is lost.

The isolation test. Copy any H2 section from your content into a blank document. Read it without any context. If you need to know what came before to understand it — if it references “this approach” or “as we established” or “the system” without defining what the system is — it fails extractability. The test takes thirty seconds per section and identifies every structural GEO problem without any tooling.
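
A first pass of the isolation test can be roughed out in code. The sketch below only scans a section for phrases that usually point back to earlier text; the phrase list is an illustrative assumption, and a flag such as "the system" is a prompt to check whether the term is defined in the section, not proof of failure. Reading the section cold remains the actual test.

```python
import re

# Phrases that often depend on earlier context. This list is an assumption
# for illustration; extend it with the phrases your own writers use.
CONTEXT_PHRASES = [
    r"\bthis approach\b",
    r"\bas we established\b",
    r"\bas mentioned (?:above|earlier)\b",
    r"\bthe system\b",
]


def isolation_flags(section_text: str) -> list[str]:
    """Return context-dependent phrases found in a section, in pattern order."""
    found = []
    for pattern in CONTEXT_PHRASES:
        match = re.search(pattern, section_text, flags=re.IGNORECASE)
        if match:
            found.append(match.group(0).lower())
    return found


section = (
    "As we established, this approach improves results "
    "because the system rewards clarity."
)
flags = isolation_flags(section)
print(flags)
```

An empty list does not mean the section passes. A section can avoid every listed phrase and still bury its answer in the fourth sentence.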

What Measuring for GEO Actually Looks Like

Traditional SEO measurement is well-instrumented. Google Search Console, rank-tracking tools, and analytics platforms give you position, impressions, CTR, and traffic with minimal manual effort.

GEO measurement is not. AI platforms do not expose citation data via APIs. There is no equivalent of Search Console for generative visibility. The primary method is manual citation testing: submit target queries into AI platforms, run each query multiple times in independent sessions, record whether your content appears as a cited source.

The 30-check baseline protocol

The GEO Brand Citation Index at The GEO Lab uses a methodology I designed to be reproducible by anyone without tooling. The core protocol: test 10 brand-relevant queries across three engines — Perplexity, ChatGPT, Gemini — for 30 total data points per brand. Citation rate is calculated as (times cited ÷ 30) × 100.

The baselines from running this across multiple sites: most sites score 0–5%. A citation rate of 15–25% is good. Anything above 30% sustained over 90 days is excellent. If you have never run this audit, there is a reasonable chance your site is at zero — not because your content is poor, but because it has never been structured for extraction.
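
The arithmetic behind the 30-check protocol is small enough to sketch directly. The example below applies the formula (times cited ÷ total checks) × 100 to a made-up results sheet of 10 queries on each of three engines; the `checks` data is hypothetical and exists only to show the calculation and a per-engine breakdown.

```python
def citation_rate(times_cited: int, total_checks: int = 30) -> float:
    """Citation rate as a percentage: (times cited / total checks) * 100."""
    if total_checks <= 0:
        raise ValueError("total_checks must be positive")
    if not 0 <= times_cited <= total_checks:
        raise ValueError("times_cited must be between 0 and total_checks")
    return 100 * times_cited / total_checks


# Hypothetical results: 10 queries per engine, True = cited as a source.
checks = {
    "Perplexity": [True, False, True, False, False, True, False, False, False, False],
    "ChatGPT": [False, False, False, True, False, False, False, False, False, False],
    "Gemini": [False, True, False, False, False, False, True, False, False, False],
}

total_cited = sum(sum(runs) for runs in checks.values())
total_checks = sum(len(runs) for runs in checks.values())
overall = citation_rate(total_cited, total_checks)
per_engine = {engine: citation_rate(sum(runs), len(runs)) for engine, runs in checks.items()}

print(f"Overall: {overall:.1f}%")
for engine, rate in per_engine.items():
    print(f"{engine}: {rate:.1f}%")
```

Keeping the per-engine figures next to the overall score helps show whether a result is driven by one platform, which the single combined percentage hides.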

The protocol also captures proxy metrics alongside the raw citation rate: an extractability score (0–5 per section based on declarative structure, entity clarity, and standalone completeness), entity density, and competitor displacement — whether a competitor is being cited in place of you on queries where you should be the logical source.

Sequential testing for causality, not correlation

The measurement approach that produces the most useful data is not a one-time snapshot — it is a sequential testing loop. The pattern: establish a citation rate baseline → apply one structural change (rewrite a section, add schema, canonicalise an entity name) → wait 7–21 days for re-crawling → remeasure → a lift of 10 percentage points or more on the same queries is a meaningful signal that the change worked.
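
The comparison step of the loop can be expressed as a short calculation. This is a sketch under stated assumptions: the function names are hypothetical, the 10-point threshold comes from the rule of thumb above, and the sample counts are invented to show one change that clears the bar and one that does not.

```python
def lift_in_points(baseline_cited: int, baseline_runs: int,
                   after_cited: int, after_runs: int) -> float:
    """Change in citation rate, in percentage points, on the same query set."""
    if baseline_runs <= 0 or after_runs <= 0:
        raise ValueError("run counts must be positive")
    return 100 * after_cited / after_runs - 100 * baseline_cited / baseline_runs


def is_meaningful(lift: float, threshold_points: float = 10.0) -> bool:
    """Apply the 10-percentage-point rule of thumb to a measured lift."""
    return lift >= threshold_points


# Hypothetical example: 1 of 30 cited at baseline, 5 of 30 after one rewrite.
lift = lift_in_points(1, 30, 5, 30)
print(f"Lift: {lift:.1f} points, meaningful: {is_meaningful(lift)}")

# A smaller change (1 of 30 to 3 of 30) stays under the threshold.
small_lift = lift_in_points(1, 30, 3, 30)
print(f"Lift: {small_lift:.1f} points, meaningful: {is_meaningful(small_lift)}")
```

The calculation is only valid when the baseline and the remeasurement use the same queries and only one change was made in between.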

I built the audit system at The GEO Lab specifically to track this loop, because the alternative — making multiple changes simultaneously — produces data that cannot tell you which change caused the improvement. Testing one variable at a time is slower. It is also the only approach that produces actionable knowledge rather than hopeful correlation.

Two signals to watch. Declining CTR alongside stable impressions in Search Console is the signature of AI visibility without click delivery: your content is being cited in AI answers, which reduces the need to click. This looks like declining performance in traditional metrics; it may not be. Citation mentions without citation links are a separate signal, and one that only manual testing reveals: AI systems sometimes paraphrase content without attributing the source. Tracking both citation rate (with link) and mention rate (paraphrase without link) gives a fuller picture of generative visibility than citation rate alone.
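
The CTR pattern can be flagged automatically from exported click and impression totals. The sketch below is illustrative only: the function name, the 10% impressions tolerance, and the 20% relative CTR drop are all assumptions, not thresholds from The GEO Lab. A flag means "measure citation rate for this page", not "this page is being cited".

```python
def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate as a fraction; zero when there are no impressions."""
    return clicks / impressions if impressions else 0.0


def possible_ai_visibility_shift(prev: tuple[int, int], curr: tuple[int, int],
                                 impressions_tolerance: float = 0.10,
                                 ctr_drop: float = 0.20) -> bool:
    """Flag stable-or-growing impressions combined with a clear CTR decline.

    prev and curr are (clicks, impressions) totals for two comparable periods.
    Both thresholds are assumed values; tune them to your own data.
    """
    prev_clicks, prev_impr = prev
    curr_clicks, curr_impr = curr
    if prev_impr == 0 or prev_clicks == 0:
        return False  # no usable baseline to compare against
    impressions_change = (curr_impr - prev_impr) / prev_impr
    prev_ctr = ctr(prev_clicks, prev_impr)
    ctr_change = (ctr(curr_clicks, curr_impr) - prev_ctr) / prev_ctr
    return impressions_change >= -impressions_tolerance and ctr_change <= -ctr_drop


# Hypothetical page: impressions up 4%, CTR down from 5.0% to about 2.9%.
flagged = possible_ai_visibility_shift((500, 10_000), (300, 10_400))
# Hypothetical page: impressions flat, CTR down only slightly.
not_flagged = possible_ai_visibility_shift((500, 10_000), (480, 10_000))
print(flagged, not_flagged)
```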

What this measurement method cannot tell you

I should be honest about what this methodology can’t do — partly because it’s true, and partly because I designed it and I’d rather say it first than have someone else say it in a comment. The 30-check protocol is transparent and reproducible, which makes it better than most black-box GEO indices, but it has real constraints worth naming.

Thirty data points is a small sample. Academic GEO research operates at very different scales — the Princeton 2024 study used 10,000 queries; more recent work runs into the tens of millions. Thirty checks is enough to detect large differences (zero vs. 20%) and to identify directional trends. It is not enough for precise confidence intervals or small-signal detection.
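
One way to see how small thirty checks is: put an interval around the score. The sketch below uses the Wilson score interval, which is a standard choice for proportions on small samples but is my substitution, not part of the 30-check protocol. It also assumes the checks are independent, which repeated queries on the same engine may not be.

```python
import math


def wilson_interval(cited: int, checks: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion, returned as percentages.

    z = 1.96 corresponds to an approximate 95% confidence level.
    """
    if checks <= 0:
        raise ValueError("checks must be positive")
    if not 0 <= cited <= checks:
        raise ValueError("cited must be between 0 and checks")
    p = cited / checks
    denom = 1 + z * z / checks
    centre = (p + z * z / (2 * checks)) / denom
    half = z * math.sqrt(p * (1 - p) / checks + z * z / (4 * checks * checks)) / denom
    return 100 * (centre - half), 100 * (centre + half)


# 6 citations out of 30 checks is a measured citation rate of 20%.
low, high = wilson_interval(6, 30)
print(f"Measured 20%, plausible range about {low:.1f}% to {high:.1f}%")
```

A measured 20% from 30 checks is consistent with a true rate anywhere from roughly 10% to 37%. That range is why the score works for large gaps and direction of travel, and not for fine-grained comparison.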

AI citation behaviour also shifts over time as models update and re-crawl. A citation rate measured in January 2026 may not reflect March reality. The sequential testing loop partially addresses this by measuring change against a fresh baseline rather than against a historical score, but it does not solve the underlying instability.

The right use of a citation rate baseline: treat it as a benchmark for relative comparisons (“our site scores 4%, competitor scores 22% — the gap is structural, not authority-based”) and for directional before/after measurement after specific changes. Do not treat a single 30-check score as a precise, stable representation of your generative visibility. Run it, act on it, and re-run it.

The AI Visibility Diagnostics Console at The GEO Lab automates and structures this testing. Manual auditing using the same protocol is fully functional without it.

Where to Start If You Have Existing SEO Foundations

If you have an established site with reasonable SEO foundations — indexed content, some authority, decent technical health — the starting sequence for GEO is different from a new-site approach.

Don’t create new content first. Test what you have.

Run a citation audit on your ten highest-traffic pages. Submit their primary target queries into Perplexity five times each. Record the citation rate. This takes about two hours and tells you whether your current content is generating AI visibility at all. Most established sites discover that their highest-traffic pages have near-zero citation rate — not because they lack authority, but because section structure has never been optimised for extraction.

Once you have citation baseline data, apply the GEO Stack diagnostic in order:

- Layer 1 (Retrieval Probability): Are your section headings semantically aligned with how users ask questions in AI search? Rewrite vague headings as direct questions.

- Layer 2 (Extractability): Does each section open with its answer? Apply the isolation test to every H2. Rewrite any section that fails.

- Layer 3 (Entity Reinforcement): Are you using consistent terminology for your key concepts across all posts? Build a canonical term list and standardise usage.

- Layer 4 (Structural Authority): Do your most important pages have clear hub-and-spoke internal linking? Add or strengthen links between related pages with entity-rich anchor text.

Layers 1 and 2 produce measurable citation rate improvements within four to eight weeks of the updated content being re-crawled. They are structural changes to existing posts, not new content creation. Improvements at Layers 3 and 4 compound over time.
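
The Layer 3 step, building a canonical term list and standardising usage, lends itself to a simple scan. The sketch below is a minimal illustration: the `CANONICAL_TERMS` entries are hypothetical examples, and a real list would come from your own content audit.

```python
import re

# Hypothetical canonical term list: canonical name -> variants to replace.
CANONICAL_TERMS = {
    "citation rate": ["cite rate", "citation percentage"],
    "GEO Stack": ["GEO framework stack", "the stack"],
}


def find_variants(text: str, terms: dict[str, list[str]]) -> dict[str, list[str]]:
    """Map each canonical term to the non-canonical variants found in text."""
    hits: dict[str, list[str]] = {}
    for canonical, variants in terms.items():
        found = [
            variant for variant in variants
            if re.search(rf"\b{re.escape(variant)}\b", text, flags=re.IGNORECASE)
        ]
        if found:
            hits[canonical] = found
    return hits


post = "Our cite rate improved after we applied the stack to every section."
report = find_variants(post, CANONICAL_TERMS)
for canonical, variants in report.items():
    print(f"Use '{canonical}' instead of: {', '.join(variants)}")
```

Run across every post, a report like this shows where entity signals are being split across several names for the same concept.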

The full diagnostic methodology is in the GEO Stack framework documentation. The Retrieval Probability deep dive covers Layer 1 in full technical detail.

Traditional SEO and GEO address different stages of the search pipeline. SEO covers indexation and ranking. GEO covers the four stages between indexation and AI citation: retrieval, extraction, compression, and synthesis. The foundations — technical health, content depth, topical authority, E-E-A-T — remain necessary for both. What changes is content structure (declarative first, not narrative first), the unit of focus (sections, not pages), the success metric (citation rate, not position), and what failure looks like (structural barriers, not authority gaps). Most sites with established SEO foundations need structural rewriting, not new content. The gap is structural, and structure is fixable in weeks.

Frequently Asked Questions

Can a page rank well in Google and fail completely in AI search?

Yes — and this is the most common pattern I discovered when I built the audit system at The GEO Lab. Pages ranking in positions one through five for their target keywords routinely have zero AI citation rate for the same queries. The reason is almost always structural. The page passes document-level relevance scoring for Google ranking but fails section-level extraction for AI retrieval. The gap is real, consistent, and fixable.

Does GEO optimisation hurt traditional SEO rankings?

No evidence supports this concern, and the structural changes GEO requires typically improve traditional SEO signals. Declarative structure increases content clarity. Question-format headings improve semantic relevance. Explicit entity naming reinforces topical authority. The intervention that might theoretically create tension — breaking a long comprehensive page into shorter, section-focused content — can be managed without harming ranking by maintaining content depth at the page level while improving section isolation.

How important is domain authority for GEO performance?

Domain authority remains a threshold factor — content from very low-authority domains struggles to enter the retrieval candidate pool at all, regardless of structure. But above a basic authority threshold, domain authority is less determinative for AI citation than section structure. The GEO Lab experiments deliberately tested on a new site (one month old, minimal authority) to isolate structural variables. Structural interventions produced measurable citation rate differences even without established domain authority. The return on structural improvement is high regardless of where you start.

Does GEO apply to all content types or only long-form articles?

GEO applies to any content that aims to answer specific questions — which is most content published with SEO intent. Long-form articles benefit most immediately because they have multiple sections that can each be independently optimised. Product pages, landing pages, and documentation also benefit from declarative structure and explicit entity naming. The one content type where GEO has limited direct applicability is purely navigational content (homepages, category pages) where the user intent is reaching a destination rather than receiving an answer.

What Practitioners Are Saying

“The isolation test is the most practically useful diagnostic I’ve seen for GEO. Copy an H2 section, read it cold without context, and you immediately know whether it’s extractable. I ran it on our top 15 posts and found structural failures in content we considered well-optimised. The section comparison — declarative vs narrative — makes it impossible to miss why.”

— Daniel Cardoso, Head of Content Strategy, SaaSMetrics.io

“The comparison table and the before/after rewrites are exactly what I needed to explain GEO to a content team that’s been doing SEO for a decade. They weren’t sceptical — they just needed to see the structural difference made concrete. The CTR vs citation interpretation point is also important: a lot of teams are misreading their declining CTR as a signal to panic rather than a signal to measure AI visibility separately.”

— Marco Silva, Technical SEO Lead, VisibilityStack
